Clear Sky Science

Forest Inspection Dataset: A Synthetic UAV Dataset for Semantic Segmentation of Forest Environments

Why Drones and Digital Forests Matter

Healthy forests help regulate climate, protect biodiversity, and support human livelihoods, but they are under pressure from logging, fires, pests, and storms. Inspecting vast wooded areas from the ground is slow and costly, so researchers are turning to unmanned aerial vehicles (UAVs), or drones, to watch over forests from above. This article presents the Forest Inspection dataset, a detailed, computer-generated collection of drone images designed to teach artificial intelligence (AI) systems how to recognize key elements of forest scenes—such as different kinds of trees, the forest floor, and fallen logs—quickly and accurately.

A Virtual Forest for Careful Watching

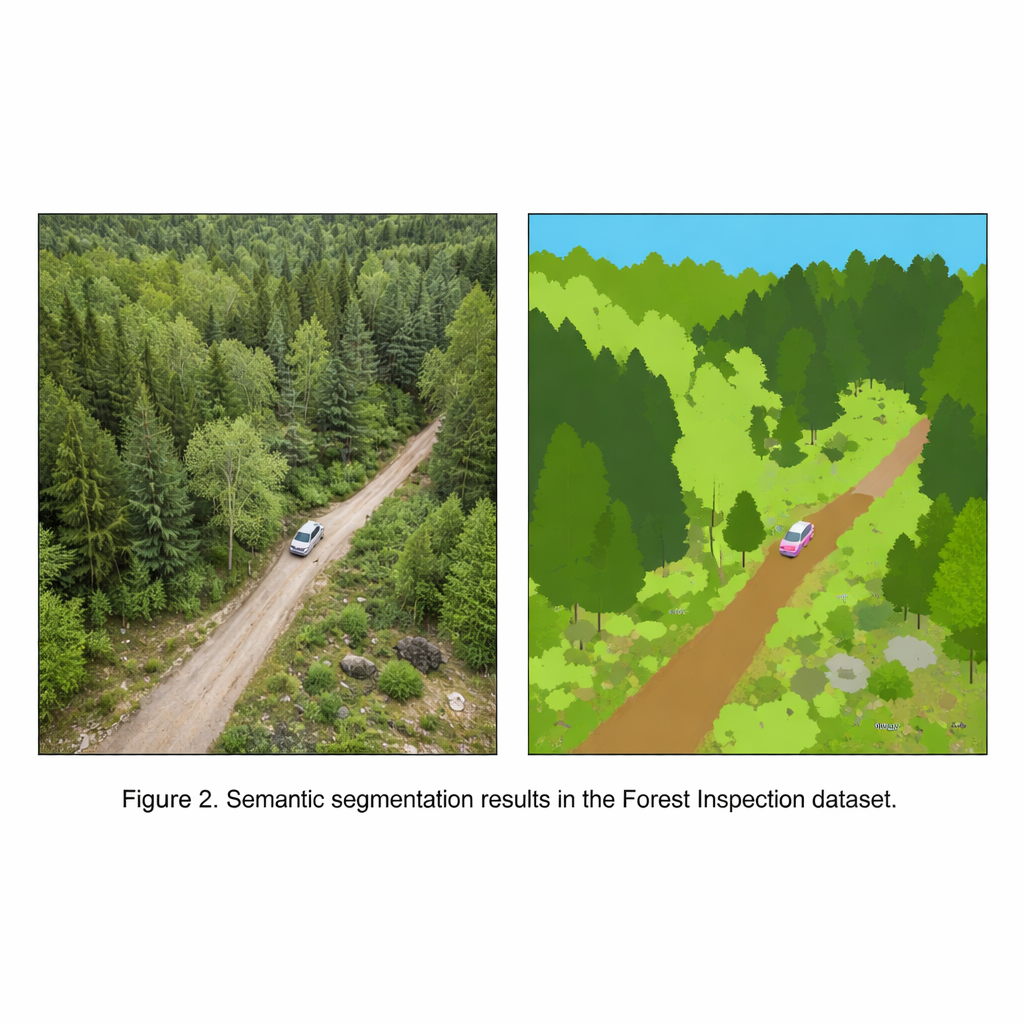

The Forest Inspection dataset is built inside a highly realistic virtual forest, created with a modern game engine. Instead of sending a physical drone into the woods, the authors fly a simulated drone through this digital landscape. Every image captured from the drone comes with a perfectly aligned “map” that assigns each pixel to one of 11 categories, including deciduous trees, coniferous trees, fallen trees, ground vegetation, dirt ground, rocks, sky, buildings, fences, and vehicles. Because everything is simulated, the label maps are produced automatically, so the team can generate thousands of images without any hand annotation, avoiding the time, cost, and inconsistencies that plague real-world labeling.

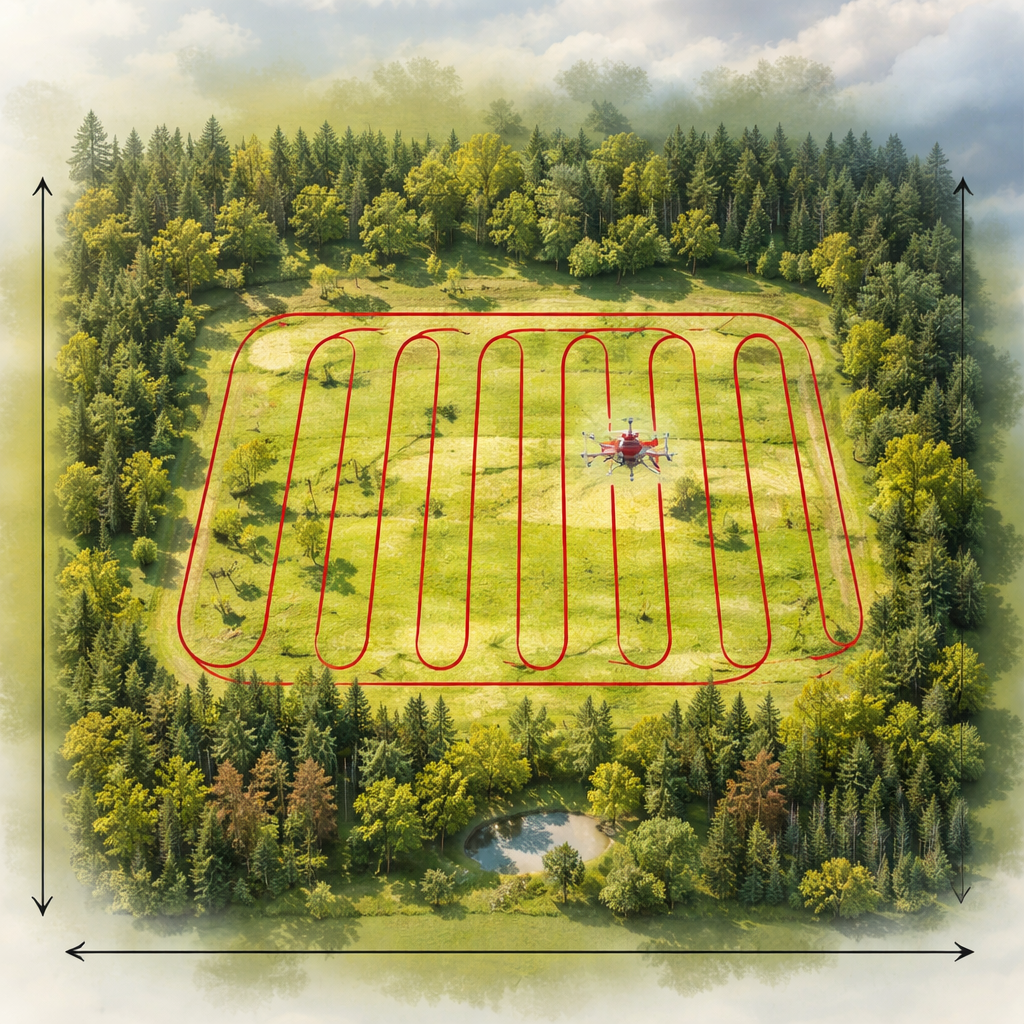

How the Synthetic Surveys Are Flown

To mimic real inspection flights, the virtual drone follows a classic back-and-forth “lawnmower” pattern over a rectangular patch of forest, similar to how a farmer might plow a field. The researchers record images at three flight heights—30, 50, and 80 meters—and three camera tilt angles: straight ahead, angled down, and straight down toward the ground. They repeat these flights under two common weather conditions, sunny and overcast, while keeping the camera settings fixed. The result is 18 sequences containing over 26,000 color images and matching label maps, all taken at a resolution suited to both scientific analysis and practical AI training.
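The survey design described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' simulation code: the altitudes and weather conditions come from the text, while the tilt labels (`forward`, `oblique`, `nadir`), the function names, and the patch size and lane spacing in the example are assumptions made for illustration.

```python
from itertools import product

# Values taken from the article text.
ALTITUDES_M = (30, 50, 80)
WEATHER = ("sunny", "overcast")
# Hypothetical labels for "straight ahead", "angled down", "straight down".
CAMERA_TILTS = ("forward", "oblique", "nadir")


def flight_configurations():
    """Enumerate every altitude / tilt / weather combination."""
    return list(product(ALTITUDES_M, CAMERA_TILTS, WEATHER))


def lawnmower_waypoints(width_m, length_m, lane_spacing_m, altitude_m):
    """Return (x, y, z) turning points of a back-and-forth sweep.

    The drone flies parallel lanes along the y axis over a rectangle of
    width_m by length_m, reversing direction on every other lane.
    """
    if width_m <= 0 or length_m <= 0 or lane_spacing_m <= 0:
        raise ValueError("dimensions and lane spacing must be positive")
    num_lanes = int(width_m // lane_spacing_m) + 1
    waypoints = []
    for lane in range(num_lanes):
        x = lane * lane_spacing_m
        # Even lanes fly "up" the patch, odd lanes fly back "down".
        y_start, y_end = (0, length_m) if lane % 2 == 0 else (length_m, 0)
        waypoints.append((x, y_start, altitude_m))
        waypoints.append((x, y_end, altitude_m))
    return waypoints


if __name__ == "__main__":
    configs = flight_configurations()
    print(len(configs))  # 3 altitudes x 3 tilts x 2 weather types = 18
    # Assumed 100 m x 200 m patch with 50 m between lanes.
    for point in lawnmower_waypoints(100, 200, 50, ALTITUDES_M[0]):
        print(point)
```

Enumerating the combinations makes the count of 18 sequences explicit: three heights times three tilt angles times two weather conditions.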

Teaching Computers to Read the Woods

The main purpose of this dataset is to train and test AI systems that perform “semantic segmentation,” a task where each pixel in an image is classified into a meaningful category. The authors run several state-of-the-art segmentation models on Forest Inspection to check that the labels are reliable and informative. Modern neural networks achieve high accuracy on common categories such as sky, ground vegetation, and the two tree types. More challenging categories—especially rare but important ones like fallen trees, thin fences, or small cars—are harder to detect, but advanced models that capture broad context in the image perform noticeably better. This shows that the dataset can separate strong algorithms from weaker ones, a key property of a good benchmark.
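To make the idea of per-pixel scoring concrete, here is a minimal sketch of intersection over union (IoU), a common way to measure segmentation quality class by class. The article does not say which metric the authors report, so the choice of IoU is an assumption, and the tiny label maps and class numbering below (0 = sky, 1 = coniferous tree, 2 = fallen tree) are invented purely for illustration.

```python
def per_class_iou(pred, truth, num_classes):
    """Compute IoU for each class from two label maps of equal shape.

    pred and truth are lists of rows, each row a list of integer class IDs.
    A class that appears in neither map gets None instead of a score.
    """
    intersection = [0] * num_classes
    union = [0] * num_classes
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p == t:
                # Pixel is correct: counts toward both intersection and union.
                intersection[p] += 1
                union[p] += 1
            else:
                # Pixel is wrong: enlarges the union of both classes involved.
                union[p] += 1
                union[t] += 1
    return [
        intersection[c] / union[c] if union[c] else None
        for c in range(num_classes)
    ]


def mean_iou(ious):
    """Average the IoU over classes that actually occur."""
    valid = [v for v in ious if v is not None]
    return sum(valid) / len(valid) if valid else None


# Hypothetical 2 x 3 label maps: 0 = sky, 1 = coniferous tree, 2 = fallen tree.
truth = [[0, 0, 1],
         [0, 2, 1]]
pred = [[0, 0, 1],
        [0, 1, 1]]  # the single fallen-tree pixel is mistaken for a conifer

if __name__ == "__main__":
    ious = per_class_iou(pred, truth, num_classes=3)
    print(ious)
    print(mean_iou(ious))
```

In this toy case the common class scores perfectly while the rare fallen-tree class scores zero from a single wrong pixel, which mirrors why rare categories are harder and why per-class scores are more revealing than overall pixel accuracy.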

How This Dataset Compares to Others

Many existing aerial datasets include forests, but most treat all trees and bushes as a single, generic “vegetation” class. The Forest Inspection dataset goes further by separating deciduous and coniferous trees and explicitly labeling fallen trees, which are crucial signs of storm damage, logging, or safety hazards. The authors compare their work with well-known drone datasets that cover cities, rural areas, or mixed natural scenes. Those collections are often larger or recorded with real cameras, but they either blur forest types together or lack disturbance-related classes. Forest Inspection aims squarely at inspection tasks: its controlled flight patterns, medium scale, balanced level of detail, and forest-focused labels make it particularly suitable for studying how drones can monitor wooded landscapes.

From Digital Woods to Real Forests

Because the images are synthetic, a natural question is whether AI trained on them can help in the real world. To test this, the authors first train a segmentation model only on the virtual forest, then fine-tune it on a real drone dataset collected over actual woodlands. The model that starts with synthetic training performs better than one trained on real data alone, especially for ground cover, trees, bare soil, and parked cars. This suggests that carefully designed digital forests can provide a powerful “starter lesson” for AI, which can then be refined using smaller amounts of real imagery.

What It Means for Forest Care

For non-specialists, the key message is that this work delivers a high-quality, freely available training ground where computers can learn to read forests from the air, pixel by pixel. By distinguishing not just where trees are, but what kind they are and whether they are standing or fallen, the Forest Inspection dataset supports smarter tools for tracking forest health, spotting damage, and planning conservation efforts. Though born entirely in a virtual world, it is designed to help real drones and real people keep better watch over the world’s forests.

Citation: Blaga, BCZ., Nedevschi, S. Forest Inspection Dataset: A Synthetic UAV Dataset for Semantic Segmentation of Forest Environments. Sci Data 13, 298 (2026). https://doi.org/10.1038/s41597-026-06665-x

Keywords: forest monitoring, drone imagery, synthetic dataset, semantic segmentation, remote sensing