Clear Sky Science

A Chinese Elementary Science Question Dataset in Problem-Solving Process Generation

Helping Kids Learn Science with Smarter AI

Parents and teachers increasingly see artificial intelligence as a potential study partner, but current chatbots often give explanations that are either too shallow or far too advanced for children. This paper introduces a new Chinese Elementary Science Question (CSQ) dataset designed to teach large language models how to explain science the way a good primary school teacher would: step by step, at the right difficulty, and closely aligned with what children actually learn in class.

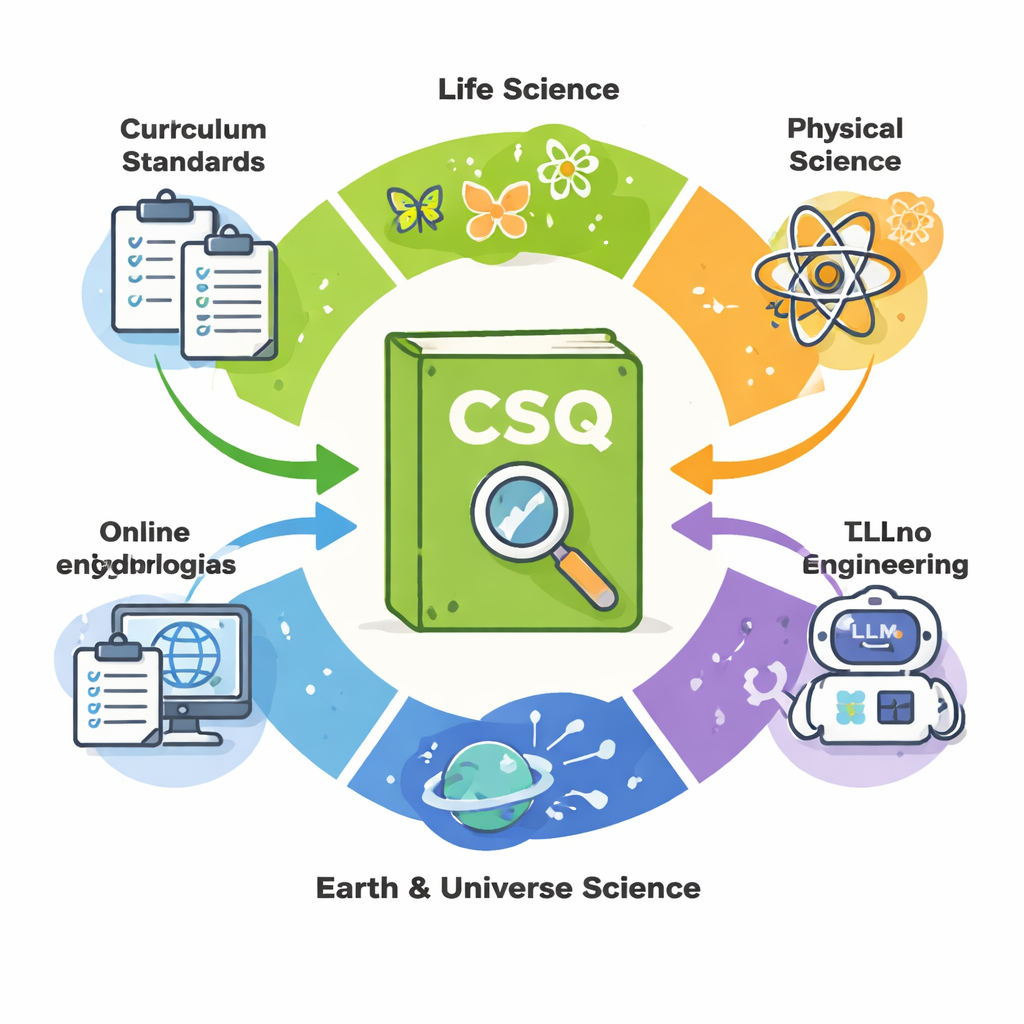

A New Question Bank for Young Science Learners

The CSQ dataset is a collection of 12,000 carefully crafted science questions drawn from China’s primary school curriculum, school exams, and trusted online resources. The questions span grades 1 through 6 and cover four broad areas: life science, physical science, earth and space, and technology and engineering. Unlike many existing question banks, which list only a question and its correct answer, each CSQ item also records its grade level, topic, and the scientific skills it tests, along with a complete, age-appropriate explanation of the solution.

Capturing How Children Actually Think

A key innovation of CSQ is its focus on the “problem-solving thought” behind each answer. For every question, trained annotators spell out the reasoning process in language and detail suited to the target grade. For younger children, explanations stay concrete and observational, for example describing what can be seen or felt. For older students, the explanations gradually introduce more abstract ideas, such as systems, cause and effect, or simple models. Each item also tags the core skills involved, such as observing a phenomenon, comparing two objects, or identifying the function of a tool. This structure lets AI models not just state the right answer but practice walking through the kind of thinking students are expected to learn.
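To make this item structure concrete, here is a minimal Python sketch of what one CSQ-style record might look like. The field names (`question`, `grade`, `discipline`, `skills`, `solution_steps`, and so on), the validation rules, and the sample question are hypothetical illustrations based on the description above, not the dataset’s published schema or actual content.

```python
from dataclasses import dataclass, field

# The four subject areas named in the article.
DISCIPLINES = {
    "life science",
    "physical science",
    "earth and space",
    "technology and engineering",
}


@dataclass
class CSQItem:
    """One CSQ-style record. Field names are hypothetical, not the official schema."""

    question: str
    answer: str
    grade: int                   # primary school grade, 1 to 6
    discipline: str              # one of DISCIPLINES
    topic: str
    skills: list[str]            # core skills tested, e.g. "observing"
    solution_steps: list[str]    # grade-appropriate reasoning, in order
    options: list[str] = field(default_factory=list)  # empty for true/false items

    def __post_init__(self) -> None:
        # Reject records that fall outside the curriculum scope described above.
        if not 1 <= self.grade <= 6:
            raise ValueError(f"grade must be between 1 and 6, got {self.grade}")
        if self.discipline not in DISCIPLINES:
            raise ValueError(f"unknown discipline: {self.discipline!r}")
        if not self.solution_steps:
            raise ValueError("an item needs at least one reasoning step")

    @property
    def question_format(self) -> str:
        # Multiple-choice items carry answer options; true/false items do not.
        return "multiple-choice" if self.options else "true/false"

    def explanation(self) -> str:
        # Number the reasoning steps to form a step-by-step explanation.
        return "\n".join(
            f"{i}. {step}" for i, step in enumerate(self.solution_steps, start=1)
        )


# A made-up grade 1 item, for illustration only (hypothetical content).
item = CSQItem(
    question="Which part of the body do we use to hear a bell ring?",
    options=["A. Eyes", "B. Ears", "C. Nose"],
    answer="B",
    grade=1,
    discipline="life science",
    topic="senses",
    skills=["observing"],
    solution_steps=[
        "When the bell rings, it makes a sound.",
        "We hear sounds with our ears.",
        "So the answer is B, ears.",
    ],
)
print(item.question_format)  # multiple-choice
print(item.explanation())
```

Keeping the reasoning as an ordered list of short steps, rather than one block of text, is one simple way to preserve the step-by-step structure the dataset emphasizes.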

Building the Dataset with Classroom Realism in Mind

Creating CSQ required a structured, human-centered process. A team of 19 researchers with backgrounds in science education and AI divided the work into stages. Senior team members collected questions from official curriculum standards, exam papers, and encyclopedias, making sure the material could legally be reused. Graduate students then adapted and annotated the questions so that they fit multiple-choice or true/false formats and matched the official Science Curriculum Standards for Compulsory Education (2022). Their training emphasized grade-appropriate vocabulary and cognitive depth. Every data item (question, discipline properties, and solution) was cross-checked by a second annotator, and disagreements about which skills were tested or how deep an explanation should go were resolved using the national standards as a guide.

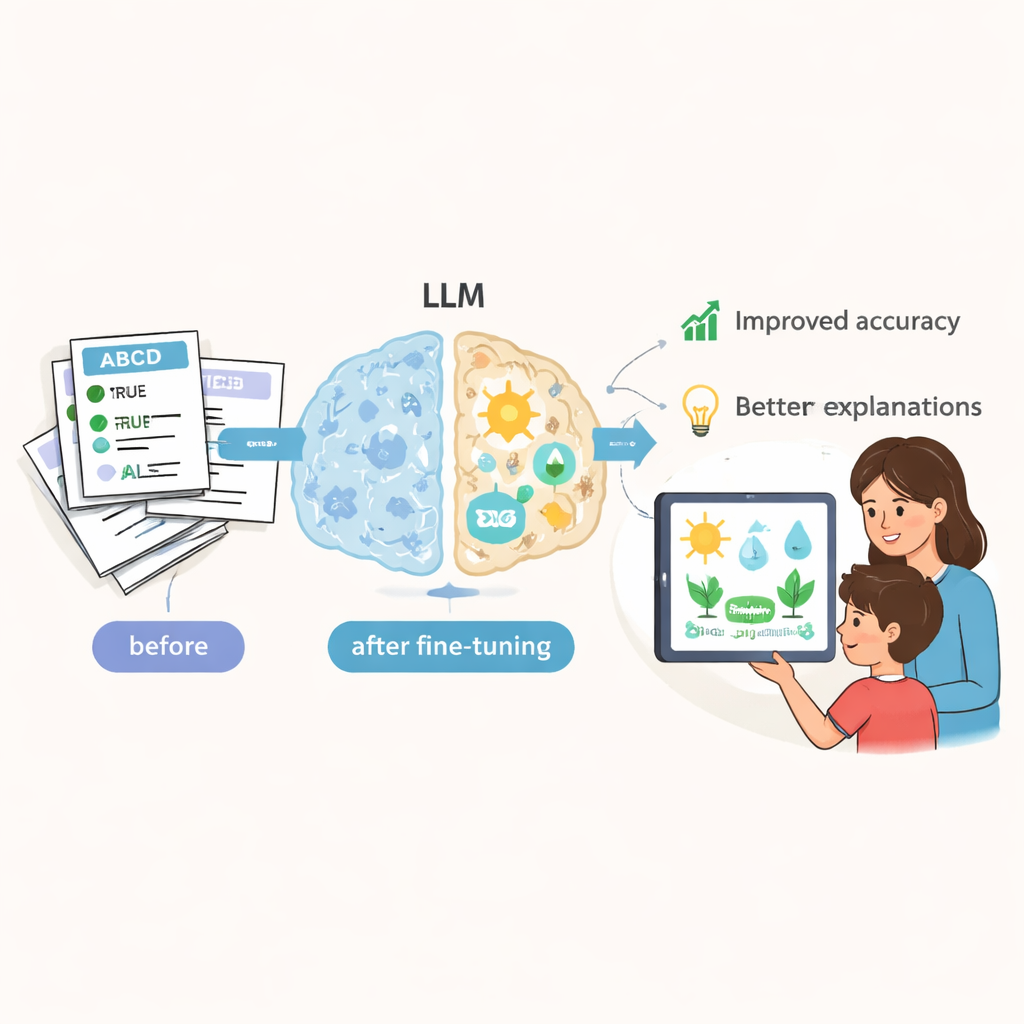

Teaching AI to Show Its Work

To test CSQ’s value, the researchers fine-tuned several open-source language models on the dataset and also evaluated a leading commercial model. They did not just measure whether models picked the correct answer; they also assessed the quality of the generated reasoning using both automatic text metrics and expert human ratings. After training on CSQ, the open-source models showed clear gains in accuracy and in the clarity and completeness of their explanations. For instance, a model that had previously answered an elementary question about sound using advanced wave theory shifted toward a simpler, more age-appropriate description after fine-tuning. Human judges found that the fine-tuned models were much better at staying within the child’s grade level, avoiding “knowledge overshoot,” in which overly technical ideas confuse rather than help.
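The two kinds of automatic checks can be illustrated with a small Python sketch: answer accuracy for the multiple-choice and true/false items, plus a score for the generated explanations. The article does not name the text metrics the authors used, so the character-level overlap F1 below is a simple stand-in chosen for illustration (character units suit Chinese, which has no spaces between words). The function names are hypothetical, and this is not the paper’s evaluation code.

```python
from collections import Counter


def accuracy(predicted: list[str], gold: list[str]) -> float:
    """Share of questions where the model's chosen answer matches the key."""
    if len(predicted) != len(gold) or not gold:
        raise ValueError("need two equal-length, non-empty answer lists")
    return sum(p == g for p, g in zip(predicted, gold)) / len(gold)


def char_overlap_f1(generated: str, reference: str) -> float:
    """Character-level overlap F1 between a generated and a reference explanation.

    A stand-in for unspecified automatic text metrics; whitespace is ignored.
    """
    gen = Counter(ch for ch in generated if not ch.isspace())
    ref = Counter(ch for ch in reference if not ch.isspace())
    common = sum((gen & ref).values())  # multiset intersection: shared characters
    if common == 0:
        return 0.0
    precision = common / sum(gen.values())
    recall = common / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


print(accuracy(["B", "A", "C", "B"], ["B", "A", "A", "B"]))  # 0.75
```

Overlap scores like this reward explanations that reuse the reference wording but cannot tell whether an explanation suits a child’s grade level, which is why human ratings remain a necessary complement.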

Limits Today, A Template for Tomorrow

The authors acknowledge that CSQ reflects the structure of China’s science curriculum and covers only multiple-choice and true/false questions, not hands-on experiments or open-ended projects. The explanations were written by trained graduate students rather than classroom teachers or children themselves, so more work is needed to fully match real classroom language. Even so, the framework behind CSQ, which links each question to its subject, topic, grade, specific skills, and step-by-step reasoning, is general enough to inspire similar resources for other languages and school systems. In simple terms, this work shows how carefully designed question sets can help AI become a more reliable, age-sensitive science tutor for young learners.

Citation: Li, D., Liu, Z., Wen, C. et al. A Chinese Elementary Science Question Dataset in Problem-Solving Process Generation. Sci Data 13, 291 (2026). https://doi.org/10.1038/s41597-026-06618-4

Keywords: elementary science education, large language models, question answering dataset, personalized tutoring, Chinese curriculum