A multimodal dataset of causal mechanisms in materials science literature

Why this matters beyond the lab

Modern life depends on new materials, from phone batteries to medical implants. Yet the know-how that tells scientists which processing steps lead to which structures, properties, and real-world performance is scattered across millions of research papers. This article describes a large, organized "map" of that hidden know-how, built by combining artificial intelligence with human expertise, so that researchers and future AI tools can more quickly discover better materials.

Four pillars of materials, one big challenge

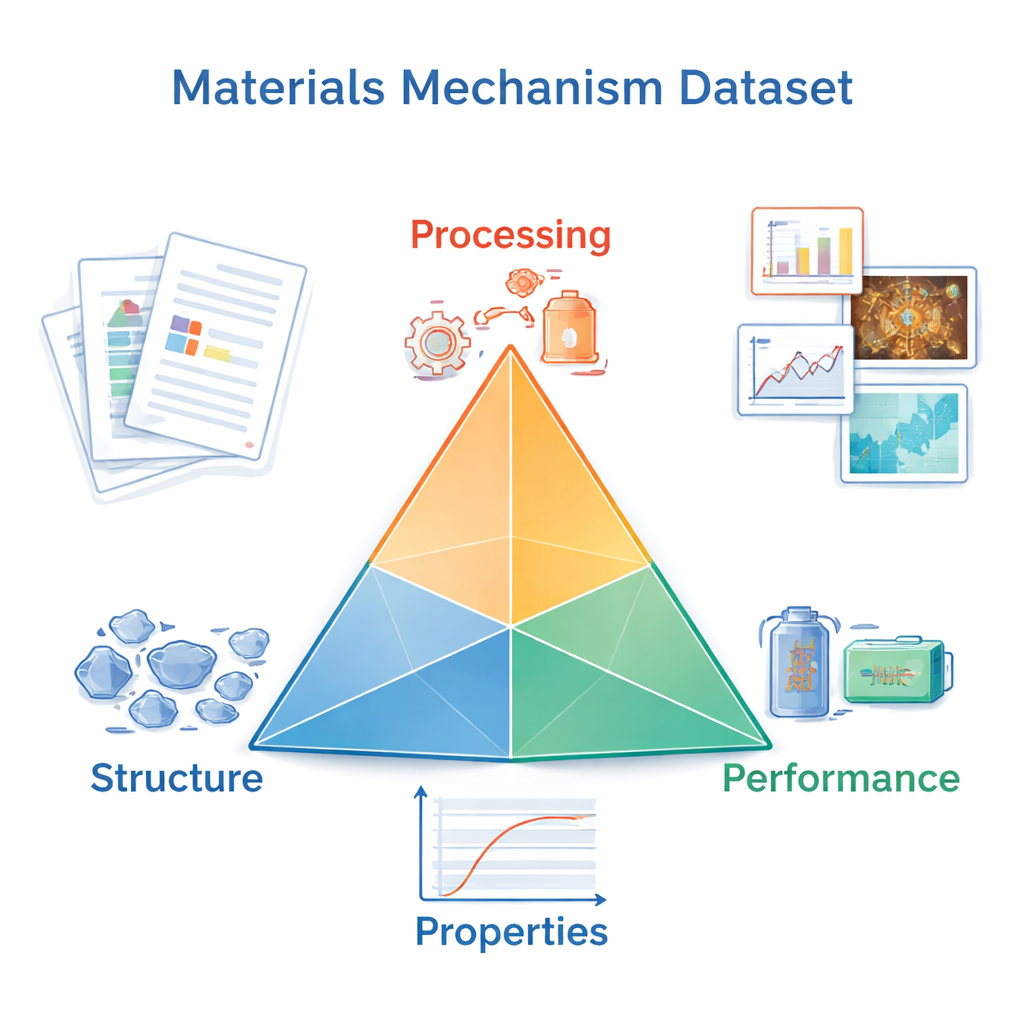

Materials scientists often think in terms of a "tetrahedron" with four corners: processing (how a material is made or treated), structure (how its atoms and grains are arranged), properties (such as strength or electrical conductivity), and performance (how it behaves in use). Researchers do not just want to know that one corner influences another; they want to understand the step‑by‑step mechanisms that explain why a certain heat treatment produces a tougher alloy or a more efficient solar cell. Those explanations are buried in text, figures, and references across decades of literature, making them hard to search, compare, or reuse systematically.

Turning scattered papers into structured knowledge

The authors assembled a corpus of more than 61,000 research articles from 15 major materials journals, covering metals, ceramics, polymers, composites, thin films, nanomaterials, and biomaterials. Using advanced language models, they identified the main material in each paper and extracted the relevant processing steps, structural features, measured properties, and performance outcomes. At the same time, they pulled out the causal chains that link these elements, such as "processing → structure → property," focusing on the core scientific claims of each study.

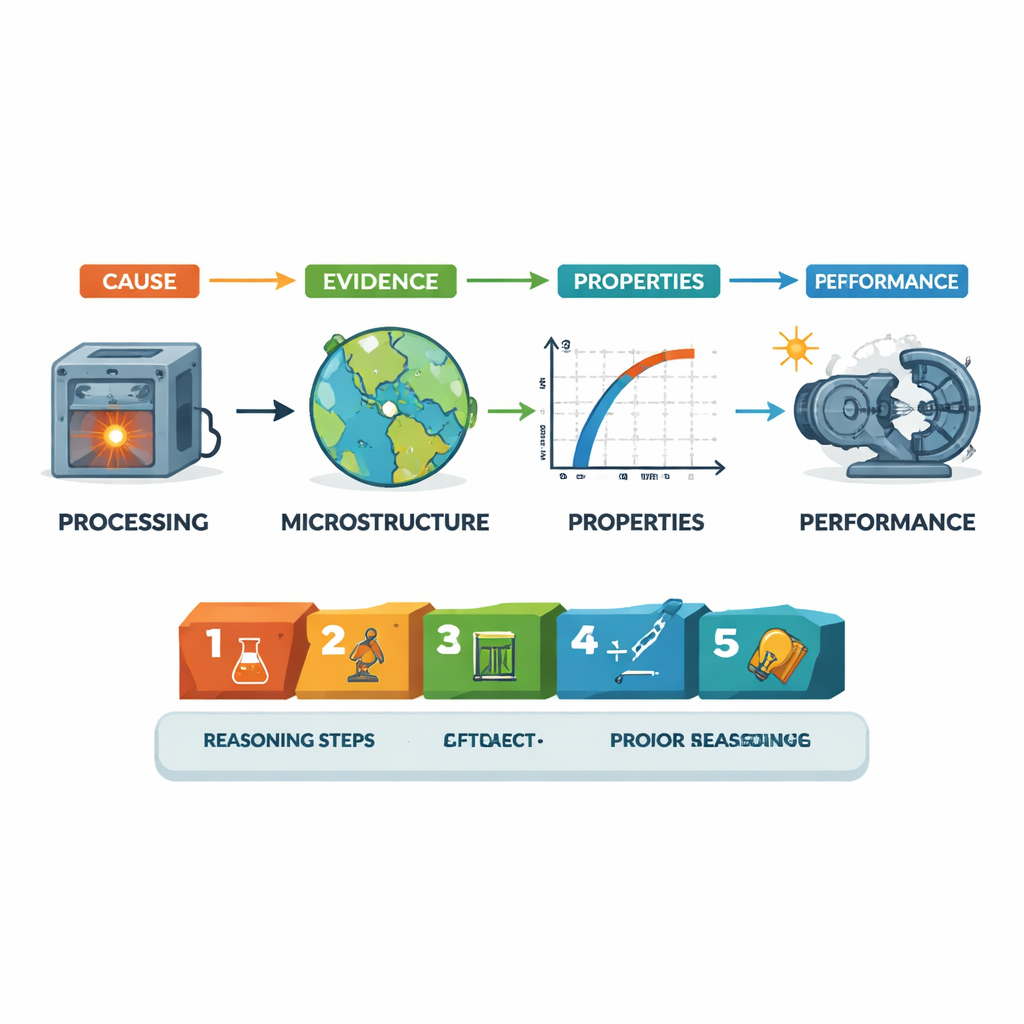

Seeing what images and experiments really show

Much of the evidence for these causal chains comes from images and experiments. The team trained an image classifier to recognize microscopic pictures—like electron microscope views of grain boundaries—that directly reveal a material’s inner structure. They also wrote routines to find and summarize experimental procedures and results, and to separate new findings from background knowledge cited from earlier work. All of this information is stored in a unified JSON format: each causal link is backed by specific experiments, images, and external knowledge, along with a stepwise reasoning chain that spells out how the authors argue from cause to effect.
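To make the idea of a unified record concrete, here is a minimal sketch of what one causal link might look like once serialized. The field names (`cause`, `effect`, `experiments`, `images`, `external_knowledge`, `reasoning_chain`) and the placeholder values are assumptions chosen for illustration; the dataset's actual schema may differ.

```python
import json

# Hypothetical layout for one causal link. Every field name here is an
# illustrative assumption, not the dataset's published schema.
REQUIRED_FIELDS = (
    "cause",
    "effect",
    "experiments",
    "images",
    "external_knowledge",
    "reasoning_chain",
)

example_link = {
    "cause": {"category": "processing", "description": "<processing step>"},
    "effect": {"category": "structure", "description": "<structural feature>"},
    "experiments": ["<summary of a supporting experiment>"],
    "images": ["<figure identifier>"],
    "external_knowledge": ["<background claim cited from earlier work>"],
    "reasoning_chain": ["<step 1>", "<step 2>", "<step 3>"],
}


def missing_fields(link):
    """Return the required fields that are absent from a causal-link record."""
    return [name for name in REQUIRED_FIELDS if name not in link]


# Round-trip through JSON text to confirm the record is serializable.
restored = json.loads(json.dumps(example_link))
print(missing_fields(restored))
```

Keeping the evidence lists next to the link they support means a reader can check a claim without leaving the record.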

Checking for errors and disagreements

Because AI can misread or overinterpret scientific text, the authors built safeguards into their pipeline. They used a special model to flag possible "hallucinations"—statements that are not clearly supported by the original paper—and to assign a confidence score to each extracted piece of evidence. They also looked for contradictions by comparing similar sentences across different articles, asking whether two papers report conflicting claims about the same kind of mechanism. Human experts in materials science then validated a carefully chosen sample. Overall, the system reached accuracies around or above 95% for identifying materials, images, and mechanisms, and found that outright contradictions and hallucinations remain relatively rare in the final dataset.
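A confidence score like the one described above is typically used as a cutoff. The sketch below separates evidence into items to keep and items to send for review; the `confidence` field name, the 0 to 1 scale, and the 0.8 threshold are all assumptions for illustration, not values taken from the paper.

```python
def split_by_confidence(evidence_items, threshold=0.8):
    """Separate evidence into kept and flagged lists using a confidence cutoff.

    Both the `confidence` key and the default threshold are hypothetical.
    """
    kept, flagged = [], []
    for item in evidence_items:
        if item["confidence"] >= threshold:
            kept.append(item)
        else:
            flagged.append(item)
    return kept, flagged


# Toy values made up for this example; they are not dataset entries.
toy_evidence = [
    {"id": "e1", "confidence": 0.95},
    {"id": "e2", "confidence": 0.40},
    {"id": "e3", "confidence": 0.80},
]

kept, flagged = split_by_confidence(toy_evidence)
print([e["id"] for e in kept], [e["id"] for e in flagged])
```

Flagged items are set aside rather than deleted, which mirrors the article's point that human experts review a sample instead of trusting the automated score alone.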

What the dataset reveals about materials research

With hundreds of thousands of mechanisms and over a million pieces of supporting evidence, the dataset offers a panoramic view of how modern materials science is practiced. It shows, for example, that studies most often follow the classic path from processing to structure, then to properties and performance, and that explanations typically use compact reasoning chains of about five steps. The collection spans diverse material types and chemical elements, with nanomaterials and coatings especially prominent, and tracks how interests have shifted over decades—from purely mechanical strength in metals toward electrical and optical behavior in nanomaterials and composites.

How this helps future discoveries

For non-specialists, the key outcome is a searchable, structured map of how scientists think about and justify cause‑and‑effect in materials. Instead of reading hundreds of papers, a researcher—or an AI assistant—can query the dataset to find all processing routes reported to improve, say, the ductility of a titanium alloy, along with the images and experiments that support those claims. By organizing mechanism-level knowledge across many studies, this work lays a foundation for more transparent, explainable AI tools that can not only predict promising new materials, but also clearly explain why they should work.
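The kind of query described above could be sketched as a simple filter over records. Everything in this example is hypothetical: the record layout, the field names (`material`, `causal_links`, `cause`, `effect`, `direction`, `evidence`), and the toy records, which are placeholders rather than entries from the dataset.

```python
def find_processing_routes(records, material_keyword, target_property):
    """Collect processing steps reported to improve a property of a material.

    Assumes a hypothetical record layout; the real dataset may differ.
    """
    routes = []
    for record in records:
        if material_keyword.lower() not in record["material"].lower():
            continue
        for link in record["causal_links"]:
            if (
                link["effect"].lower() == target_property.lower()
                and link["direction"] == "improves"
            ):
                routes.append(
                    {"processing": link["cause"], "evidence": link["evidence"]}
                )
    return routes


# Toy records with placeholder content, for illustration only.
toy_records = [
    {
        "material": "Titanium alloy (placeholder)",
        "causal_links": [
            {
                "cause": "<processing route A>",
                "effect": "ductility",
                "direction": "improves",
                "evidence": ["<image id>", "<experiment summary>"],
            },
            {
                "cause": "<processing route B>",
                "effect": "hardness",
                "direction": "improves",
                "evidence": [],
            },
        ],
    },
    {
        "material": "Polymer composite (placeholder)",
        "causal_links": [
            {
                "cause": "<processing route C>",
                "effect": "ductility",
                "direction": "improves",
                "evidence": [],
            }
        ],
    },
]

results = find_processing_routes(toy_records, "titanium", "ductility")
print(results)
```

Returning the evidence alongside each route keeps the result traceable to the images and experiments behind the claim.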

Citation: Liu, Y., Wang, C., Liu, J. et al. A multimodal dataset of causal mechanisms in materials science literature. Sci Data 13, 269 (2026). https://doi.org/10.1038/s41597-026-06598-5

Keywords: materials science, causal mechanisms, multimodal dataset, large language models, structure–property relationships