Clear Sky Science · en

RAID-Dataset: human responses to affine image distortions and Gaussian noise

Why tiny image changes matter to your eyes

Every day, your eyes effortlessly cope with photos that are tilted, zoomed, shifted, or a bit grainy: think of snapping a moving subject with your phone or scrolling through slightly blurry social media images. But how, exactly, do people sense these changes, and can computers be taught to judge image quality the way we do? This article introduces a new dataset, called RAID, that carefully measures how human observers react to simple but common image distortions, providing a bridge between everyday visual experience and the algorithms that power cameras, streaming services, and artificial intelligence.

Common picture tweaks put to the test

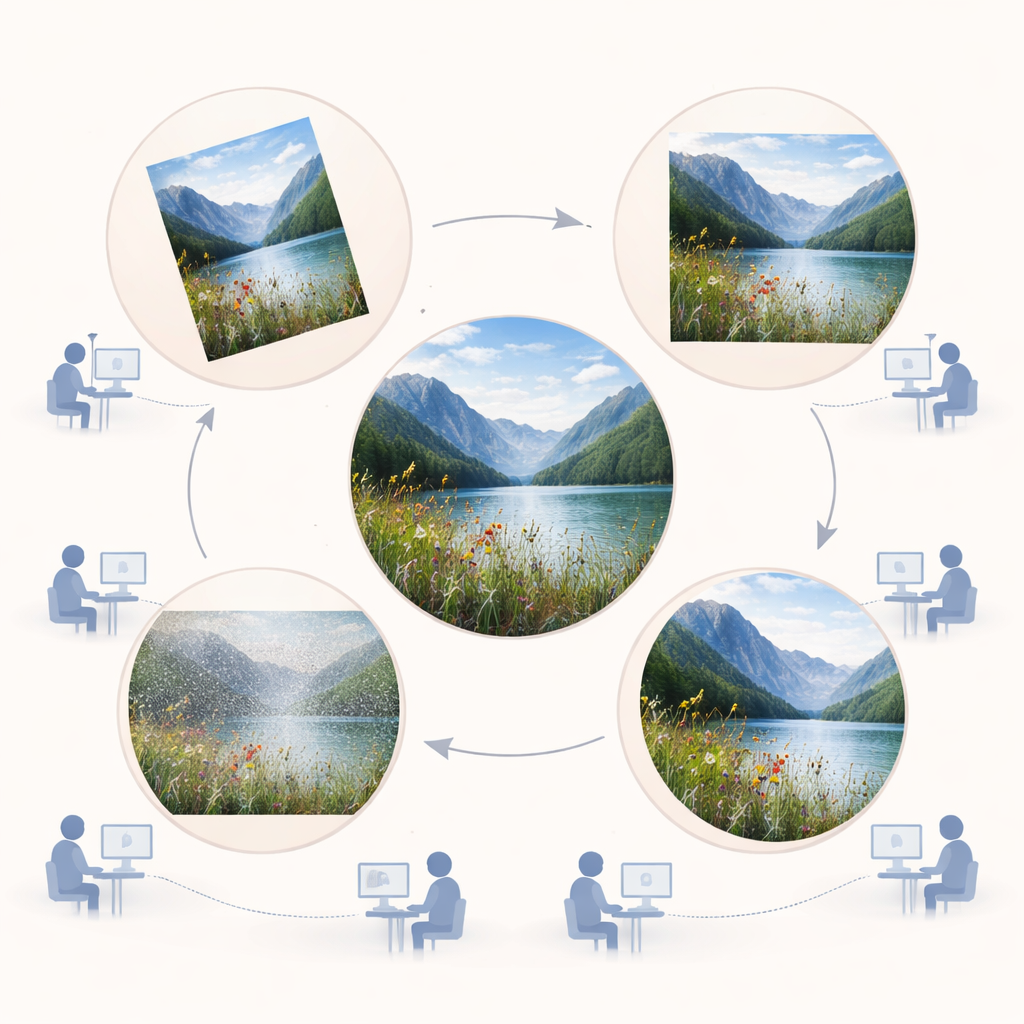

The researchers focused on four very basic changes that happen constantly in both the real world and digital images: rotation (tilting an image), translation (sliding it sideways), scaling (zooming in or out), and adding grainy speckle known as Gaussian noise. Unlike many existing image-quality databases that emphasize compression artifacts or digital glitches, these transformations mimic what happens when you move your head or shift your gaze, or when objects move and lighting changes. Using 24 natural color photographs from a well-known Kodak image collection, the team created nine increasing levels of each distortion. That gives 24 × 4 × 9 = 864 distorted images which, together with the 24 originals, make a total of 888 images.
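To see where the figure of 888 comes from, here is a minimal Python sketch that enumerates the stimulus set described above. The distortion labels, the image numbering, and the tuple layout are illustrative assumptions, not the naming used in the RAID files.

```python
from itertools import product

N_SOURCE_IMAGES = 24          # Kodak photographs used as references
N_LEVELS = 9                  # increasing strengths per distortion
# Hypothetical labels for the four distortion families.
DISTORTIONS = ("rotation", "translation", "scaling", "gaussian_noise")


def build_stimulus_list():
    """Return one (image_id, distortion, level) tuple per stimulus."""
    image_ids = range(1, N_SOURCE_IMAGES + 1)
    # Level 0 stands for the undistorted original of each photograph.
    stimuli = [(img, "original", 0) for img in image_ids]
    # Every photograph is paired with every distortion at every level.
    stimuli += list(product(image_ids, DISTORTIONS, range(1, N_LEVELS + 1)))
    return stimuli


stimuli = build_stimulus_list()
print(len(stimuli))  # 24 originals + 24 * 4 * 9 distorted = 888
```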

How people compared picture differences

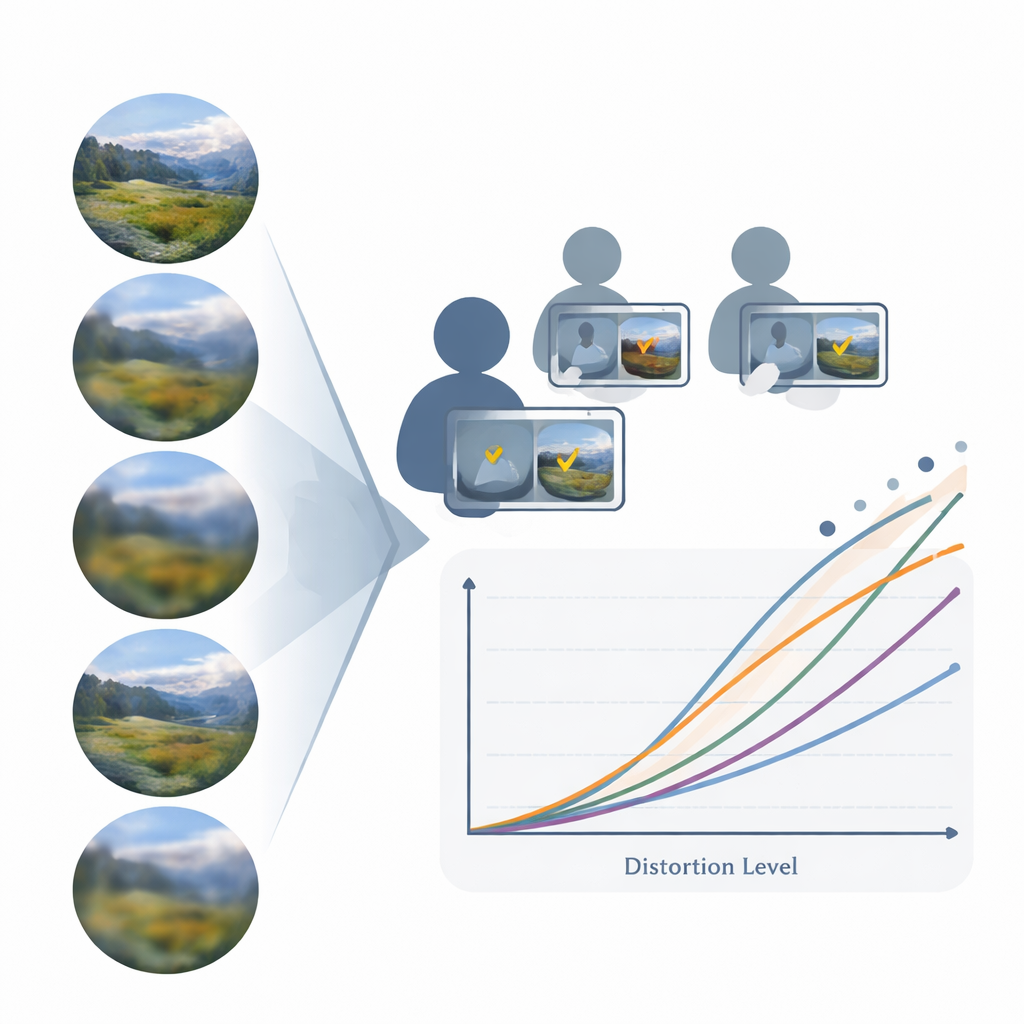

To find out how noticeable these changes really are, 210 volunteers came into a controlled laboratory, sat in front of calibrated monitors, and took part in more than 40,000 trials. In each trial, they saw two pairs of images on the screen and had to answer a simple question: which pair looks more different from each other, the left pair or the right pair? This method, known in vision science as Maximum Likelihood Difference Scaling, allowed the researchers to turn many such choices into a smooth “perceptual scale” for each distortion. Each point on a scale shows how strong a given distortion level feels to the average observer, from barely visible to clearly obvious.
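The idea behind Maximum Likelihood Difference Scaling can be sketched in a few lines of Python. This is only an illustration of the standard decision model, under the assumption that the observer compares the two perceived differences with some Gaussian noise added. The scale values, the noise level `sigma`, and the ten toy trials are made up for the example and are not taken from RAID.

```python
import math


def normal_cdf(z):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def p_choose_right(psi, quad, sigma):
    """Probability that the right pair is judged 'more different'.

    psi  : candidate perceptual scale, one value per distortion level
    quad : (a, b, c, d) level indices; left pair is (a, b), right is (c, d)
    """
    a, b, c, d = quad
    delta = abs(psi[d] - psi[c]) - abs(psi[b] - psi[a])
    return normal_cdf(delta / sigma)


def log_likelihood(psi, trials, sigma=0.2):
    """Log-likelihood of the observed choices given a candidate scale."""
    total = 0.0
    for quad, chose_right in trials:
        p = p_choose_right(psi, quad, sigma)
        p = min(max(p, 1e-9), 1.0 - 1e-9)  # guard against log(0)
        total += math.log(p if chose_right else 1.0 - p)
    return total


# Toy data (hypothetical): levels 0-1 on the left, levels 2-3 on the right.
# The left pair was picked in 8 trials out of 10.
trials = [((0, 1, 2, 3), False)] * 8 + [((0, 1, 2, 3), True)] * 2

linear_scale = [0.0, 1 / 3, 2 / 3, 1.0]   # equal perceptual steps
compressive_scale = [0.0, 0.5, 0.8, 1.0]  # early steps feel larger

ll_linear = log_likelihood(linear_scale, trials)
ll_compressive = log_likelihood(compressive_scale, trials)
print(ll_compressive > ll_linear)  # the compressive scale explains the choices better
```

A full fit would search over the scale values (and the noise level) for the combination that maximizes this log-likelihood, typically with a numerical optimizer. The sketch only compares two hand-picked candidates.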

Timing how fast the brain reacts

While people were making their choices, the experiment also recorded how long they took to respond. These reaction times revealed a classic pattern seen in other areas of perception: when the difference between the images was very small or extremely large, people responded relatively quickly, but at intermediate difficulty they slowed down. As distortions grew stronger, the visual system needed less time to decide which pair differed more. This behavior matches a well-known rule in psychology, Piéron’s law, which links stronger sensory signals to faster responses and supports the idea that the dataset is capturing genuine properties of human vision rather than random noise in people’s decisions.
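Piéron's law is usually written as RT = t0 + k · I^(−β): reaction time falls toward a floor t0 as the signal strength I grows. The short Python sketch below shows that shape. The parameter values are arbitrary placeholders chosen for illustration, not values fitted to the RAID reaction times.

```python
def pieron_reaction_time(intensity, t0=0.3, k=0.5, beta=0.6):
    """Predicted reaction time (seconds) under Piéron's law.

    intensity : strength of the sensory signal (must be positive)
    t0        : irreducible floor, e.g. motor delay (hypothetical value)
    k, beta   : scale and exponent of the power law (hypothetical values)
    """
    if intensity <= 0:
        raise ValueError("intensity must be positive")
    return t0 + k * intensity ** (-beta)


# Stronger signals give faster predicted responses.
levels = [1, 2, 4, 8]
predicted = [pieron_reaction_time(i) for i in levels]
for level, rt in zip(levels, predicted):
    print(f"intensity {level}: {rt:.3f} s")
```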

Checking against existing quality scores

To make the new data useful for engineers and scientists who already rely on established image-quality benchmarks, the authors compared their measurements for noisy images with scores from a popular database called TID2013, where people rated image quality on a typical “opinion score” scale. They found a strong, nearly straight-line relationship: distortions that RAID observers judged as more noticeable tended to receive lower quality scores in TID2013. This link allowed the team to derive a simple formula to convert their perceptual scale values into standard opinion scores, making it easy to combine RAID with older datasets and to plug it into existing evaluation pipelines.
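A conversion of this kind amounts to fitting a straight line between the two sets of measurements. The Python sketch below does that with ordinary least squares. The numbers are invented so that the line is easy to check by hand; they are not RAID or TID2013 values, and the authors' actual formula may differ.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical paired measurements for the same noisy images:
perceptual_scale = [0.0, 1.0, 2.0, 3.0]   # larger = more noticeable
opinion_score = [6.0, 5.0, 4.0, 3.0]      # larger = better quality

slope, intercept = fit_line(perceptual_scale, opinion_score)


def to_opinion_score(scale_value):
    """Map a perceptual scale value onto the opinion-score axis."""
    return slope * scale_value + intercept


print(slope, intercept)        # negative slope: more noticeable, lower score
print(to_opinion_score(1.5))
```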

Why this matters for vision and AI

Beyond matching previous work, the new dataset highlights cases where its careful measurements outperform traditional opinion scores. By deliberately searching for image pairs where one method says the distortions are similar but the other says they are very different, and then asking people which is right, the authors show that their approach tends to align better with what viewers actually see. The dataset also reveals intuitive patterns: a slight tilt is far more obvious in a seascape with a strong horizon than in a busy scene full of angled shapes, and noise stands out more on smooth skies than on detailed textures. Put together, these results mean that RAID offers a richer, more human-centered description of how we notice everyday changes in pictures, providing a solid testing ground for improving both models of human vision and the AI systems that aim to see the world as we do.
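The search for revealing image pairs can be pictured as a ranking problem: look for comparisons where one method predicts nearly equal differences and the other predicts very unequal ones. The Python sketch below illustrates that idea with a made-up scoring rule and made-up numbers. The field names and the score are assumptions for illustration and are not the authors' selection procedure.

```python
def disagreement(candidate):
    """How unequal method B finds the two pairs, minus how unequal method A does.

    Large positive values mean: A says "about the same", B says "very different".
    """
    gap_a = abs(candidate["a_left"] - candidate["a_right"])
    gap_b = abs(candidate["b_left"] - candidate["b_right"])
    return gap_b - gap_a


def rank_by_disagreement(candidates):
    """Sort candidate comparisons from most to least revealing."""
    return sorted(candidates, key=disagreement, reverse=True)


# Hypothetical predictions of two methods for three left/right comparisons.
candidates = [
    {"id": "c1", "a_left": 0.50, "a_right": 0.52, "b_left": 0.20, "b_right": 0.90},
    {"id": "c2", "a_left": 0.10, "a_right": 0.80, "b_left": 0.15, "b_right": 0.85},
    {"id": "c3", "a_left": 0.40, "a_right": 0.45, "b_left": 0.40, "b_right": 0.50},
]

ranked = rank_by_disagreement(candidates)
print([c["id"] for c in ranked])  # c1 comes first: the methods disagree most there
```

The top-ranked comparisons are the ones worth showing to human observers, since their answers can tell the two methods apart.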

Citation: Daudén-Oliver, P., Agost-Beltran, D., Sansano-Sansano, E. et al. RAID-Dataset: human responses to affine image distortions and Gaussian noise. Sci Data 13, 256 (2026). https://doi.org/10.1038/s41597-026-06581-0

Keywords: image quality, human vision, visual perception, image distortions, psychophysics