Clear Sky Science · en

A Mouse Cortex Video Segmentation Dataset for Intrinsic Optical Signal Tracking and Neural Activity Analysis

Watching Brain Waves Without Opening the Skull

Understanding how waves of activity ripple across the brain is essential for tackling disorders like epilepsy, stroke, and dementia. But directly watching these waves in living brains is technically demanding. This study introduces MouseCortex-IOS, a carefully built open dataset that lets researchers around the world explore how mouse brain activity spreads over the surface of the cortex, and test new artificial intelligence (AI) tools to analyze it more reliably and automatically.

A Camera on the Living Brain

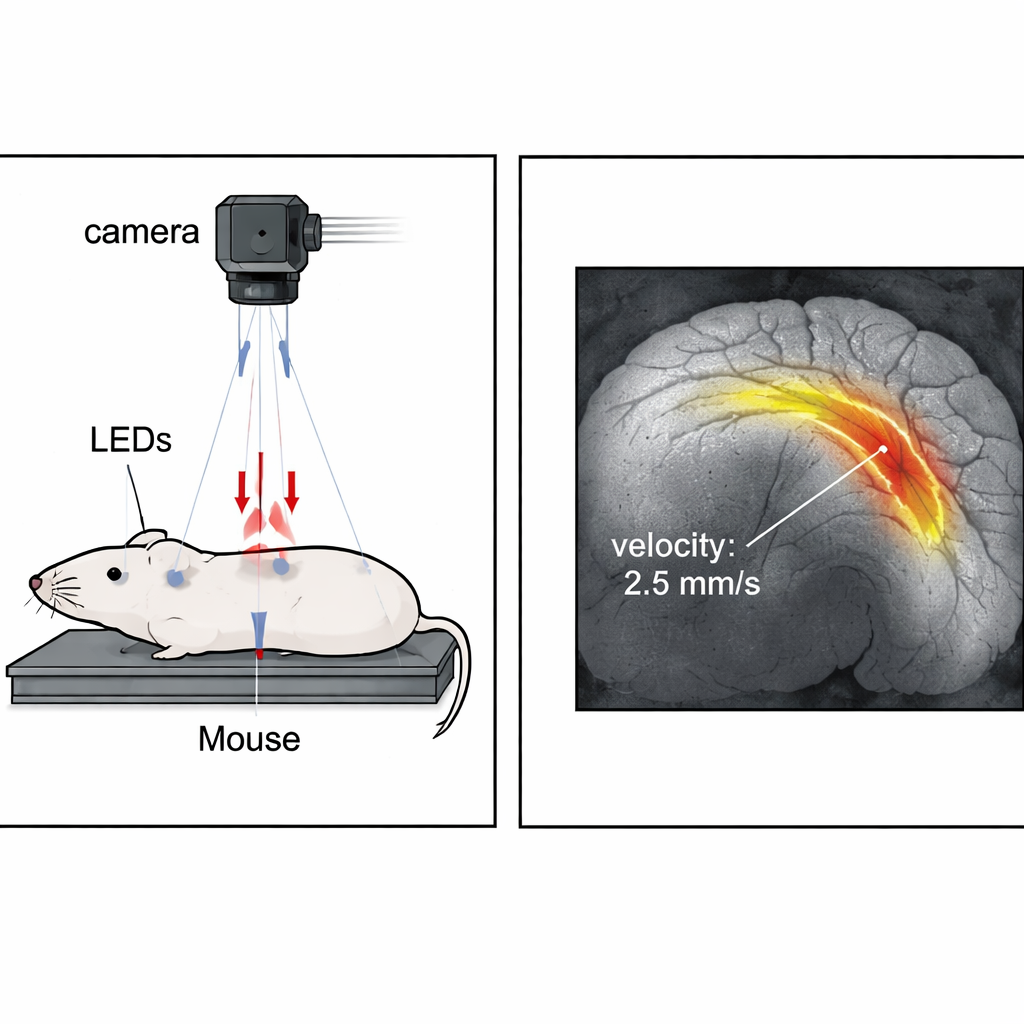

Instead of inserting electrodes into the brain, the researchers used a method called intrinsic optical signal imaging, in which a sensitive camera looks through a tiny window in the mouse skull. Subtle changes in how the brain surface reflects light reveal local changes in blood volume and oxygenation linked to nerve activity. These changes are extremely faint, often less than a few percent of the background, and are easily drowned out by noise or small movements, which has made the data hard to interpret and compare across labs.
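A common way to express "a few percent of the background" in intrinsic optical imaging is the fractional change in reflected light relative to a pre-stimulus baseline. Here $R(t)$ is the reflected intensity at time $t$ and $R_0$ is the baseline; the worked numbers are illustrative only and are not taken from the dataset.

$$
\frac{\Delta R}{R_0} = \frac{R(t) - R_0}{R_0}, \qquad \text{e.g.}\quad \frac{990 - 1000}{1000} = -0.01 = -1\%
$$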

Turning Noisy Movies into Meaningful Maps

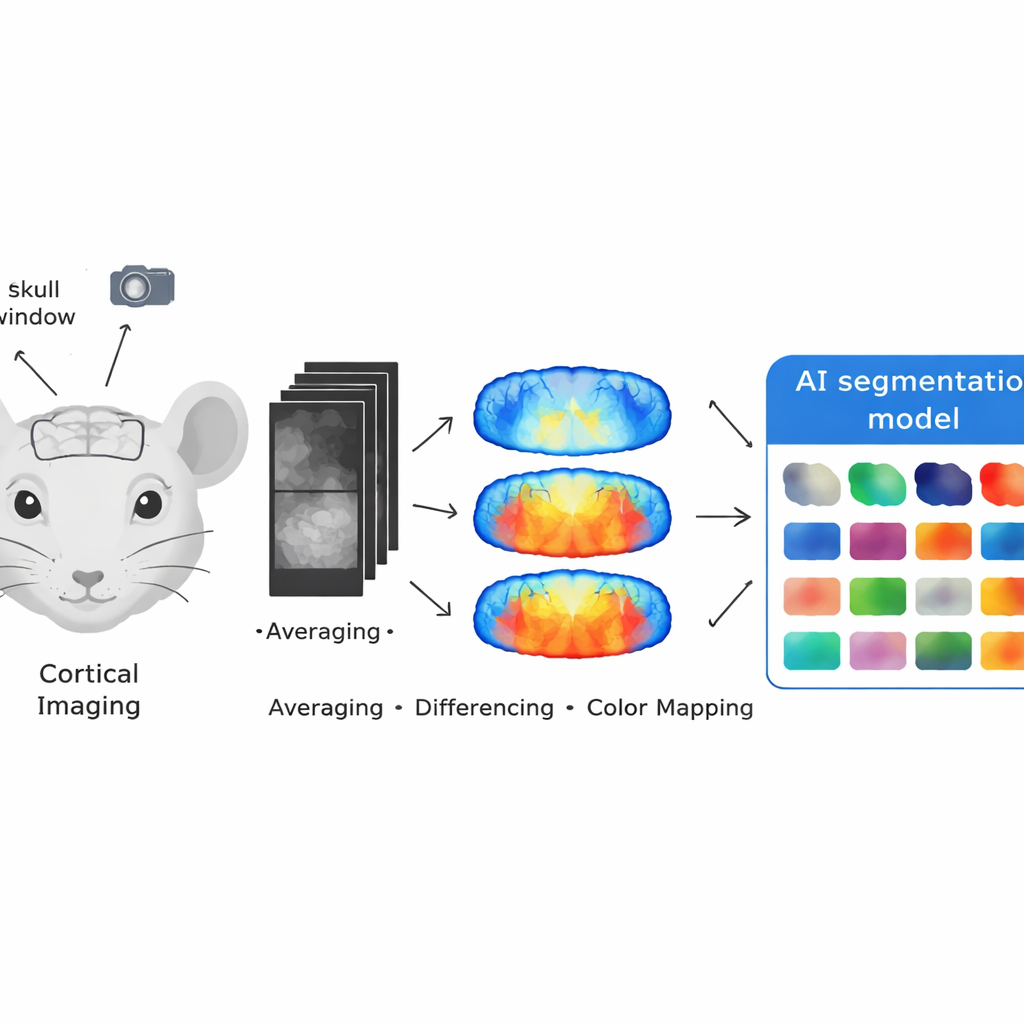

To tackle this, the team built a dataset from 14 mice recorded under different experimental conditions, including nerve stimulation and chemical triggers of spreading waves of brain activity. From long recording sessions they extracted 5,732 key images grouped into 194 short video clips. Before any AI touched the data, the raw grayscale movies were processed in three steps: first, frames were averaged over time to reduce random noise and motion artifacts; second, differences between frames were computed to highlight real changes in the signal; and third, the cleaned signals were converted into color maps so that activity patterns stood out clearly against the background.
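The three preprocessing steps can be sketched in plain Python as below. This is a minimal illustration, not the authors' released code: the window length, the use of consecutive-frame differences (rather than, say, subtracting a fixed baseline frame), and the blue-white-red color scale are all assumptions chosen for clarity. Frames are nested lists of grayscale values so the sketch needs no third-party libraries; the function names are hypothetical.

```python
from typing import List, Tuple

Frame = List[List[float]]      # one grayscale frame: rows x columns
RGB = Tuple[int, int, int]


def temporal_average(frames: List[Frame], window: int) -> List[Frame]:
    """Step 1: sliding-window mean over `window` consecutive frames to suppress noise."""
    rows, cols = len(frames[0]), len(frames[0][0])
    averaged = []
    for t in range(len(frames) - window + 1):
        chunk = frames[t:t + window]
        averaged.append([[sum(f[r][c] for f in chunk) / window for c in range(cols)]
                         for r in range(rows)])
    return averaged


def frame_difference(frames: List[Frame]) -> List[Frame]:
    """Step 2: subtract each frame from the next one so only changes remain."""
    return [[[b - a for a, b in zip(row_a, row_b)] for row_a, row_b in zip(fa, fb)]
            for fa, fb in zip(frames, frames[1:])]


def to_color(diff: Frame, vmax: float) -> List[List[RGB]]:
    """Step 3: map signed changes to RGB (blue = decrease, white = none, red = increase)."""
    def pixel(v: float) -> RGB:
        x = max(-1.0, min(1.0, v / vmax))   # normalise and clip to [-1, 1]
        if x >= 0:
            fade = int(255 * (1 - x))
            return (255, fade, fade)
        fade = int(255 * (1 + x))
        return (fade, fade, 255)
    return [[pixel(v) for v in row] for row in diff]


# Usage on a toy movie of three 1x2 frames
movie = [[[0, 0]], [[2, 4]], [[4, 8]]]
smooth = temporal_average(movie, window=2)   # [[[1.0, 2.0]], [[3.0, 6.0]]]
changes = frame_difference(smooth)           # [[[2.0, 4.0]]]
colors = to_color(changes[0], vmax=4.0)      # [[(255, 127, 127), (255, 0, 0)]]
```

Averaging before differencing matters here: differencing raw frames would amplify frame-to-frame noise, whereas differencing smoothed frames keeps mainly the slower, genuine signal changes.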

Letting an AI Assistant Draw the Boundaries

Once these clearer maps were created, the authors turned to a new family of AI tools originally designed to "segment anything" in images and videos. In their pipeline, a human expert marks the area of interest only in the first frame of a clip, which can take as little as a single click. The video-tuned AI model then follows that region through the remaining frames, drawing the outlines of active brain areas automatically. For most clips, this semi-automatic approach replaces the painstaking process of tracing each frame by hand, cutting labeling time by roughly an order of magnitude while keeping human oversight where it matters most.
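The prompt-then-propagate workflow can be illustrated with a deliberately simple stand-in. The sketch below does not use the segment-anything model. It swaps in threshold-based flood filling, chosen only because it runs without model weights, to show the control flow: one click seeds the region in the first frame, and each later frame is seeded from the previous frame's mask. The function names and the fixed threshold are hypothetical.

```python
from collections import deque
from typing import Iterable, List, Set, Tuple

Frame = List[List[float]]   # one preprocessed frame (e.g. a difference map)
Pixel = Tuple[int, int]     # (row, column)


def flood_region(frame: Frame, seeds: Iterable[Pixel], threshold: float) -> Set[Pixel]:
    """Grow a 4-connected region from every seed pixel whose value is >= threshold."""
    rows, cols = len(frame), len(frame[0])
    region = {(r, c) for r, c in seeds if frame[r][c] >= threshold}
    queue = deque(region)
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and (nr, nc) not in region and frame[nr][nc] >= threshold):
                region.add((nr, nc))
                queue.append((nr, nc))
    return region


def propagate(frames: List[Frame], click: Pixel, threshold: float) -> List[Set[Pixel]]:
    """Segment frame 0 from one click, then prompt each later frame with the previous mask."""
    masks: List[Set[Pixel]] = []
    seeds: Set[Pixel] = {click}
    for frame in frames:
        mask = flood_region(frame, seeds, threshold)
        masks.append(mask)
        seeds = mask  # an empty mask stays empty: this stand-in cannot re-find a lost region
    return masks


# Usage: a small active spot that spreads right, then drifts down
clip = [
    [[0, 0, 0], [0, 5, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 5, 5], [0, 0, 0]],
    [[0, 0, 0], [0, 0, 5], [0, 0, 5]],
]
masks = propagate(clip, click=(1, 1), threshold=1.0)
# masks == [{(1, 1)}, {(1, 1), (1, 2)}, {(1, 2), (2, 2)}]
```

This stand-in only follows a region that overlaps its previous outline; a region that vanishes for a frame or jumps elsewhere is lost. Handling such cases robustly is what a learned video segmentation model is for.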

Checking That the Maps Match Reality

To ensure these AI-generated outlines were trustworthy, the team compared them with detailed manual outlines drawn by experienced annotators. They benchmarked their pipeline against a classic deep learning model (U-Net) and against the unmodified output of the segmentation AI itself, across easy, moderate, and very noisy videos. Their tailored pipeline consistently matched the human labels more closely than either alternative, even in the hardest cases, with strong agreement scores indicating that the outlines reliably capture real brain signals. Additional checks showed that two independent human experts were highly consistent with each other, reinforcing confidence in the "ground truth" used for evaluation.
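The summary does not name the agreement scores. The Dice coefficient and intersection-over-union (Jaccard index) are assumed here because they are the standard overlap measures for comparing two outlines; the sketch treats each outline as a set of pixel coordinates. The same functions serve both comparisons described above: AI outlines against manual ones, and one annotator against another. Function names are illustrative.

```python
from typing import Callable, List, Set, Tuple

Pixel = Tuple[int, int]
Mask = Set[Pixel]


def dice(a: Mask, b: Mask) -> float:
    """Dice coefficient 2|A∩B| / (|A| + |B|); 1.0 means identical outlines."""
    if not a and not b:
        return 1.0  # both empty: treat as perfect agreement
    return 2 * len(a & b) / (len(a) + len(b))


def iou(a: Mask, b: Mask) -> float:
    """Intersection over union (Jaccard index) |A∩B| / |A∪B|."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def clip_score(pred: List[Mask], truth: List[Mask],
               metric: Callable[[Mask, Mask], float]) -> float:
    """Average a per-frame metric over a clip of paired masks."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("need equal-length, non-empty mask sequences")
    return sum(metric(p, t) for p, t in zip(pred, truth)) / len(pred)


# Usage: an automatic outline vs. a manual one that overlaps it by one pixel
auto = {(0, 0), (0, 1)}
manual = {(0, 1), (1, 1)}
# dice(auto, manual) == 0.5; iou(auto, manual) == 1/3
# clip_score([auto, auto], [manual, auto], dice) == 0.75
```

Dice weights the overlap more generously than IoU for the same pair of masks, so reported values from the two metrics are not directly interchangeable.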

From Colored Blobs to Brain Insights

Because every frame in MouseCortex-IOS is precisely labeled, researchers can now compute practical measures such as where a signal starts, how far and how fast it travels, how long it lasts, and how much of the cortex it covers. The authors demonstrate this by tracking waves triggered by stimulating the vagus nerve, showing how activity sweeps across the brain surface in a way that agrees with expert expectations. By making both the dataset and the processing code publicly available, this work offers a shared foundation for building and testing new analysis tools, ultimately helping scientists better understand how brain activity spreads in health and disease.
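Given per-frame masks, the measures listed above can be derived with a few lines of code. The sketch below is one possible set of definitions, not necessarily the authors': onset is the first frame with a non-empty mask, distance is the farthest centroid displacement from the onset centroid, speed is that distance divided by the time taken to reach it, duration spans the first to last active frame, and coverage is the largest mask area as a fraction of the imaged field. The frame interval, pixel size, and field size are hypothetical inputs you would take from the recording setup.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple

Pixel = Tuple[int, int]   # (row, column)


@dataclass
class WaveMetrics:
    onset_frame: int                      # first frame with a non-empty mask
    onset_centroid: Tuple[float, float]   # (row, col) where the signal starts
    distance_mm: float                    # farthest centroid displacement from onset
    speed_mm_per_s: float                 # that displacement / time taken to reach it
    duration_s: float                     # span from first to last active frame
    peak_coverage: float                  # largest mask area / imaged field area


def centroid(mask: Set[Pixel]) -> Tuple[float, float]:
    n = len(mask)
    return (sum(r for r, _ in mask) / n, sum(c for _, c in mask) / n)


def wave_metrics(masks: List[Set[Pixel]], frame_interval_s: float,
                 pixel_size_mm: float, field_pixels: int) -> Optional[WaveMetrics]:
    active = [i for i, m in enumerate(masks) if m]
    if not active:
        return None  # no activity detected in this clip
    onset = active[0]
    start = centroid(masks[onset])
    # Frame whose centroid lies farthest from the onset centroid
    far = max(active, key=lambda i: math.dist(start, centroid(masks[i])))
    distance_mm = math.dist(start, centroid(masks[far])) * pixel_size_mm
    elapsed_s = (far - onset) * frame_interval_s
    speed = distance_mm / elapsed_s if elapsed_s > 0 else 0.0
    return WaveMetrics(
        onset_frame=onset,
        onset_centroid=start,
        distance_mm=distance_mm,
        speed_mm_per_s=speed,
        duration_s=(active[-1] - onset + 1) * frame_interval_s,
        peak_coverage=max(len(masks[i]) for i in active) / field_pixels,
    )


# Usage: a toy wave on a 3x3 field, 0.5 s per frame, 0.1 mm per pixel
toy = [set(), {(0, 0)}, {(0, 0), (0, 1)}, {(0, 2)}, set()]
m = wave_metrics(toy, frame_interval_s=0.5, pixel_size_mm=0.1, field_pixels=9)
# onset at frame 1, travels 0.2 mm in 1.0 s, lasts 1.5 s, peak coverage 2/9
```

Centroid tracking is a simple choice that works for compact waves; a wave that spreads outward symmetrically keeps its centroid in place, so front-based distance measures may suit such cases better.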

Citation: Zhang, W., Zeng, G., Zheng, Z. et al. A Mouse Cortex Video Segmentation Dataset for Intrinsic Optical Signal Tracking and Neural Activity Analysis. Sci Data 13, 255 (2026). https://doi.org/10.1038/s41597-026-06580-1

Keywords: mouse cortex imaging, intrinsic optical signals, video segmentation, neural activity mapping, brain imaging dataset