Clear Sky Science

A generalizable foundation model for analysis of human brain MRI

Teaching Computers to Read Brain Scans

Magnetic resonance imaging (MRI) lets doctors look inside the living brain without surgery, but making sense of those images still relies heavily on human experts and large labeled datasets. This study introduces BrainIAC, a kind of “general-purpose brain engine” that learns from tens of thousands of unlabeled brain scans and can then be quickly adapted to many medical questions—from estimating brain age to outlining tumors—often with only a handful of examples. For patients, such technology could eventually mean faster diagnoses, better treatment planning, and access to advanced imaging tools even in hospitals with limited specialized expertise.

Why Brain Scans Are Hard for Computers

Brain MRI is rich but messy. A single person can be scanned with several different settings, each highlighting different tissues or disease features. Hospitals use a variety of scanners and protocols, so images can look quite different from place to place. On top of that, detailed expert labels (for example, exact tumor borders or long-term survival records) are expensive and rare. Traditional artificial intelligence systems are usually trained for one narrow task on one curated dataset. They tend to struggle when applied to scans from new hospitals, to rare diseases, or to questions they were not specifically built for.

A Single Core Model for Many Brain Tasks

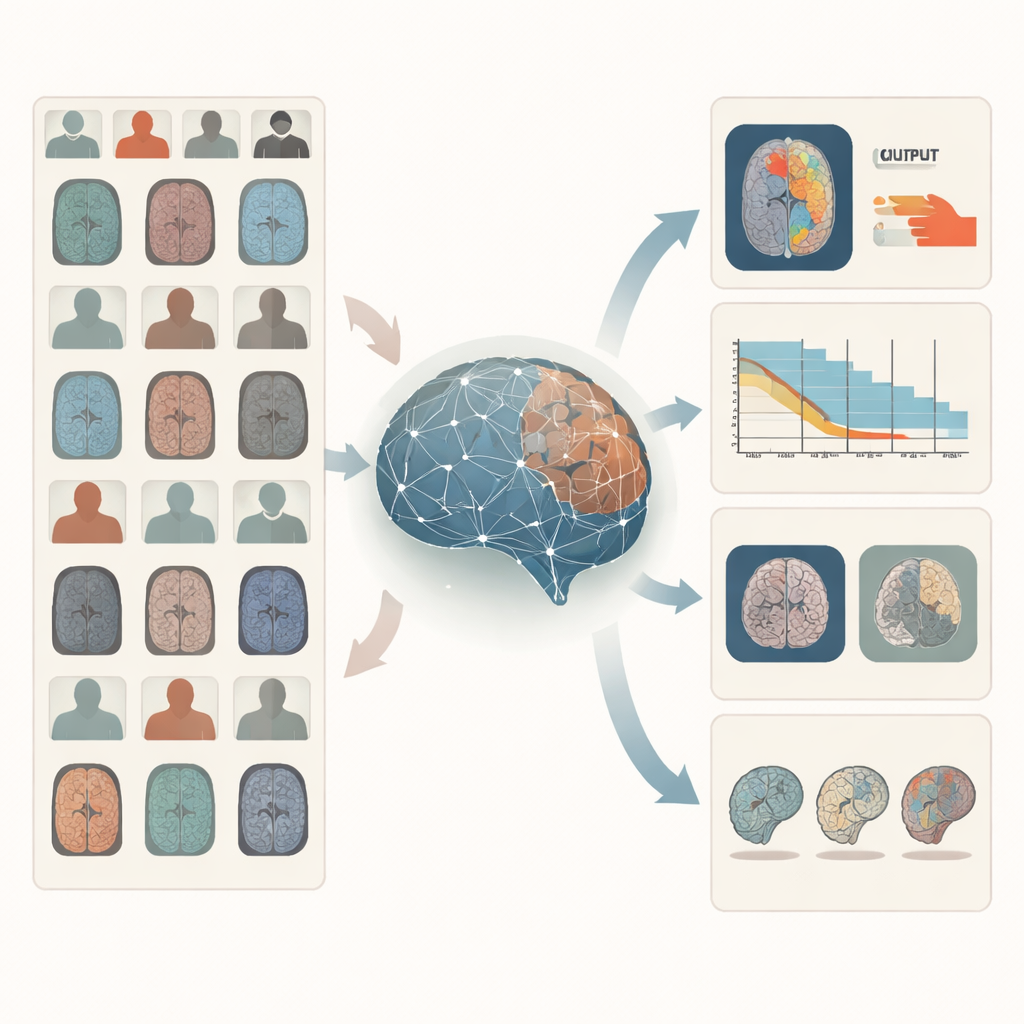

BrainIAC takes a different route: instead of learning one task at a time, it first learns the general “language” of brain structure and disease from 32,015 MRI scans, part of a full pool of nearly 49,000 scans; the data span 34 datasets and ten neurological conditions. The model is trained in a self-supervised way, meaning it does not need human labels. It looks at many small three-dimensional patches cut from whole-brain scans and learns to tell when two differently augmented versions come from the same location versus from different brains. By pulling matching patches closer together and pushing unrelated ones apart in its internal space, BrainIAC builds a flexible representation of how healthy and diseased brains commonly look across ages, scanners, and hospitals.
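The "pull together, push apart" idea can be illustrated with a small numerical sketch. The article does not name the exact loss function BrainIAC uses, so the InfoNCE-style loss below is an assumption chosen for illustration, and the function name `info_nce_loss`, the temperature value, and the random stand-in embeddings are all hypothetical.

```python
import numpy as np

def info_nce_loss(view_a, view_b, temperature=0.1):
    """Illustrative one-directional contrastive loss (an assumption, not the paper's code).

    view_a[i] and view_b[i] are embeddings of two augmented versions of the
    same patch (a positive pair). Every other row of view_b serves as a
    negative example for view_a[i].
    """
    # Normalize rows so the dot product below is a cosine similarity.
    a = view_a / np.linalg.norm(view_a, axis=1, keepdims=True)
    b = view_b / np.linalg.norm(view_b, axis=1, keepdims=True)

    # Pairwise similarities, sharpened by the temperature.
    logits = a @ b.T / temperature

    # Row-wise log-softmax, shifted by the row maximum for numerical stability.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))

    # The matching pair for row i sits on the diagonal.
    return float(-np.mean(np.diag(log_probs)))

# Random vectors stand in for patch embeddings (hypothetical data).
rng = np.random.default_rng(0)
patches = rng.normal(size=(8, 16))
aligned = patches + 0.01 * rng.normal(size=patches.shape)  # near-identical views
unrelated = rng.normal(size=patches.shape)                 # views with no relation

loss_aligned = info_nce_loss(patches, aligned)
loss_unrelated = info_nce_loss(patches, unrelated)
print(loss_aligned, loss_unrelated)
```

The loss is low when each embedding is closest to its own partner and high when partners are no more similar than strangers, which is the signal a self-supervised encoder would be trained to minimize.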

Putting the Brain Engine to Work

Once this core representation is learned, the researchers test BrainIAC on seven concrete tasks that mirror real clinical problems. These include sorting scans by MRI sequence type, estimating how old a person’s brain appears, predicting whether a brain tumor carries a key genetic mutation, forecasting survival for patients with aggressive tumors, distinguishing early memory problems from normal aging, estimating how long ago a stroke occurred, and outlining tumors on images. For each task, they compare three strategies: training a model from scratch on that task alone, starting from earlier medical imaging models built for other purposes, or fine-tuning BrainIAC’s already learned brain features. Across the board, BrainIAC either matches or outperforms the alternatives, especially when only limited labeled data are available.

Working Well When Data Are Scarce

A major test is how the system behaves when labeled data are extremely scarce, as often happens with rare diseases or expensive imaging studies. The team explores scenarios where only 10% of the usual training scans are used, and even tougher “few-shot” settings with only one or five labeled examples per class. In these tight conditions, BrainIAC consistently gives more accurate predictions than models trained from scratch or other available foundation models. For example, it better distinguishes subtle MRI sequence types, more accurately predicts tumor genetics and survival, and draws cleaner tumor outlines using far fewer annotated images. The model also proves more stable when common MRI artifacts, such as contrast shifts or scanner-related distortions, are artificially added, suggesting that it has learned robust features rather than brittle shortcuts.
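To make the data-scarce settings concrete, the sketch below shows one plausible way to build such reduced training sets from a list of class labels. It is a minimal illustration, not the study's procedure: the helper names `sample_few_shot_indices` and `sample_fraction_indices` are hypothetical, the toy labels are made up, and drawing the 10% subset by simple random sampling is an assumption, since the article does not say how the subset was chosen.

```python
import numpy as np

def sample_few_shot_indices(labels, shots_per_class, seed=0):
    """Pick exactly `shots_per_class` example indices for every class (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    chosen = []
    for cls in np.unique(labels):
        candidates = np.flatnonzero(labels == cls)
        if len(candidates) < shots_per_class:
            raise ValueError(f"class {cls} has fewer than {shots_per_class} examples")
        # Sample without replacement so no scan is picked twice.
        chosen.extend(rng.choice(candidates, size=shots_per_class, replace=False))
    return np.sort(np.array(chosen))

def sample_fraction_indices(n_items, fraction, seed=0):
    """Pick a random subset containing `fraction` of all items (assumed: not stratified)."""
    rng = np.random.default_rng(seed)
    n_keep = max(1, int(round(n_items * fraction)))
    return np.sort(rng.choice(n_items, size=n_keep, replace=False))

# Toy labels for 100 scans across three classes (made-up data).
labels = np.array([0] * 20 + [1] * 30 + [2] * 50)

one_shot = sample_few_shot_indices(labels, shots_per_class=1)
five_shot = sample_few_shot_indices(labels, shots_per_class=5)
ten_percent = sample_fraction_indices(len(labels), fraction=0.10)
print(len(one_shot), len(five_shot), len(ten_percent))
```

Fixing the seed keeps the subsets reproducible, so competing models can be compared on the same handful of labeled scans.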

What This Could Mean for Patients and Clinicians

To understand whether BrainIAC is focusing on clinically meaningful regions, the authors generate visual “attention maps” showing where the model looks when making decisions. These maps highlight structures such as the hippocampus for early memory problems, white matter regions for age estimation, and the tumor core for genetic and survival predictions—areas that align with human expert intuition. Because BrainIAC can plug into different analysis pipelines and can be adapted with minimal extra training, it offers a flexible backbone for future imaging tools, including potential combinations with clinical records or genetic data.

A Step Toward Smarter, More Accessible Brain Imaging

Overall, the study shows that a single, carefully trained foundation model can serve as a strong starting point for many different brain MRI tasks, often outperforming specialized systems that must be rebuilt from scratch each time. For non-specialists, the key takeaway is that BrainIAC acts like a broadly educated “brain reader” that can quickly pick up new skills with only a few examples. While it does not replace tailored models or medical judgment, it lays important groundwork for making advanced image-based predictions more accurate, more robust, and more widely available, including in situations where collecting large labeled datasets would otherwise be impossible.

Citation: Tak, D., Garomsa, B.A., Zapaishchykova, A. et al. A generalizable foundation model for analysis of human brain MRI. Nat Neurosci 29, 945–956 (2026). https://doi.org/10.1038/s41593-026-02202-6

Keywords: brain MRI, medical AI, foundation models, self-supervised learning, neuroimaging