Clear Sky Science

An LLM chatbot to facilitate primary-to-specialist care transitions: a randomized controlled trial

Why a digital helper in the waiting room matters

Anyone who has waited hours to see a busy hospital specialist knows how rushed the final conversation can feel. This study asks a simple question with big implications: could an artificial-intelligence chatbot talk with patients before the visit, gather their story, and hand specialists a clear summary—saving time while actually improving the human side of care? In two large Chinese hospitals, researchers tested a patient-facing large language model (LLM) called PreA to see whether such a digital helper could make crowded clinics run more smoothly and feel more personal, especially in resource‑strained settings.

The problem of crowded clinics

Health systems around the world are struggling with aging populations, people living with several chronic conditions at once, and uneven access to primary care. In China, many patients bypass local clinics and head straight to big hospitals, flooding specialist clinics with first‑time visits. Specialists often meet patients with no prior referral notes, must reconstruct the full medical story on the spot, and have only a few minutes to do so. The result is long waiting lines, short face‑to‑face visits, and high stress for both doctors and patients. Simple stopgaps like nurse‑led triage help, but nurses rarely have time or training to gather detailed histories for every case.

How the chatbot was built with the community

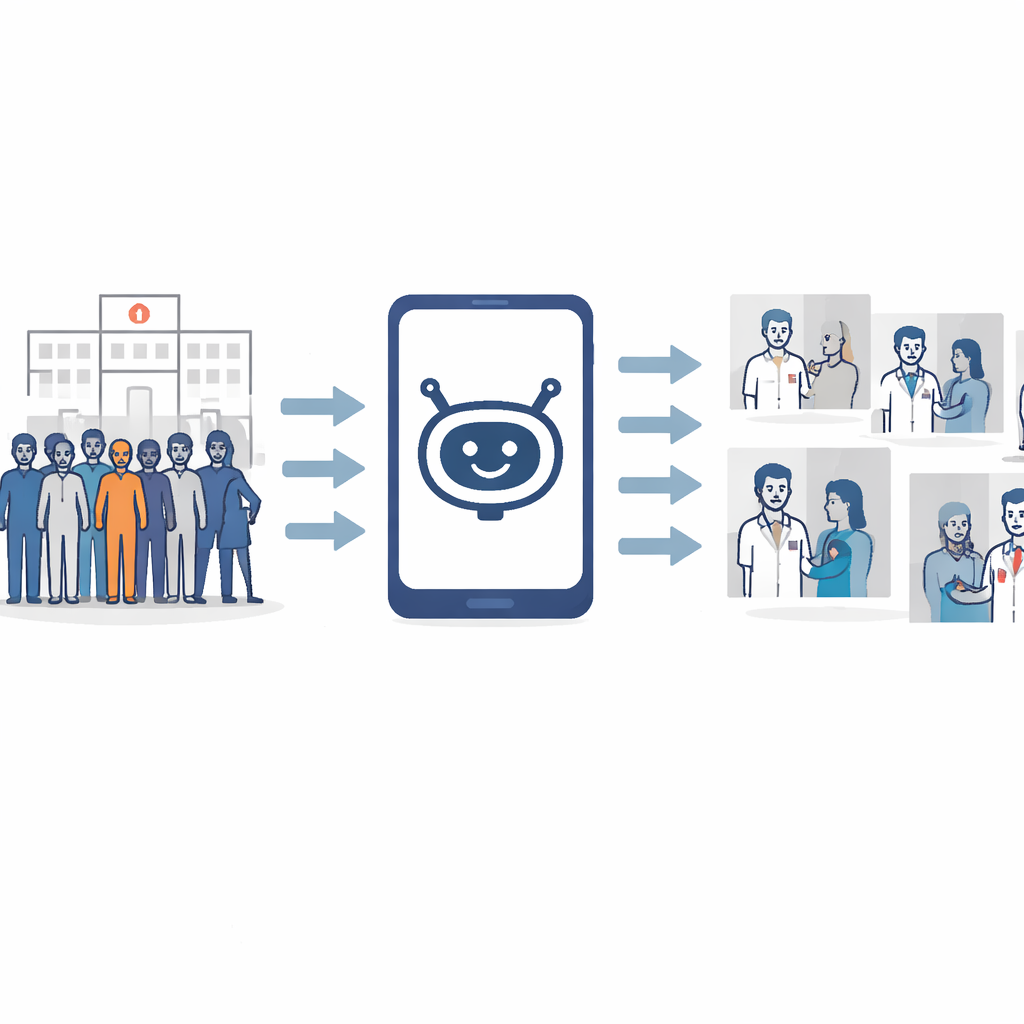

The team developed PreA as a conversational assistant designed specifically for the gap between a patient arriving at the hospital and sitting down with a specialist. Instead of training the system mainly on messy local transcripts, which can encode rushed habits and bias, the researchers used a co‑design process. Patients, caregivers, community health workers, nurses, primary‑care doctors, specialists and hospital leaders all helped shape how the chatbot should ask questions, what information it should collect, and how its summaries should look. The chatbot works on a mobile phone, supports text or voice, uses simple language for people with limited health literacy, and lets family members share access when they help older or sicker relatives navigate care.

Putting the digital assistant to the test

To see whether PreA worked in the real world, the team ran a randomized controlled trial in 24 specialties across two large hospitals in western China. More than 2,000 adults seeking specialist care were assigned to one of three groups: using PreA on their own before the visit; using PreA with help from staff; or receiving usual care with no chatbot. In the PreA groups, patients spent about three and a half minutes chatting with the system, which then produced a structured referral report on their main concerns, medical history, likely diagnoses and suggested tests. Specialists quickly reviewed this report, then met patients as usual. Consultations in the PreA‑only group were 28.7% shorter than in the usual‑care group, and doctors saw more patients per shift without longer waiting times. Remarkably, the results were just as strong when patients used the chatbot without staff support, hinting at scalability in busy clinics.
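To make the "28.7% shorter" figure concrete, here is a minimal arithmetic sketch. Only the 28.7% reduction comes from the article. The 10-minute usual-care visit is a made-up baseline chosen for illustration, not a number from the trial, and the function names are hypothetical.

```python
def relative_reduction(control_minutes: float, treated_minutes: float) -> float:
    """Fraction by which the treated duration is shorter than the control duration."""
    if control_minutes <= 0:
        raise ValueError("control_minutes must be positive")
    return (control_minutes - treated_minutes) / control_minutes


def shortened_duration(control_minutes: float, reduction: float) -> float:
    """Duration that results from applying a relative reduction to a baseline."""
    return control_minutes * (1.0 - reduction)


# Reported in the article: consultations were 28.7% shorter in the PreA-only group.
REPORTED_REDUCTION = 0.287

# ASSUMPTION: a hypothetical 10-minute usual-care consultation, for illustration only.
HYPOTHETICAL_USUAL_CARE_MINUTES = 10.0

prea_minutes = shortened_duration(HYPOTHETICAL_USUAL_CARE_MINUTES, REPORTED_REDUCTION)
print(f"Hypothetical PreA-only visit: {prea_minutes:.2f} minutes")
```

Under that assumed baseline, a 28.7% reduction would bring the visit to a little over seven minutes. The actual consultation lengths in the trial may differ.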

Did faster visits still feel human?

Shorter visits often raise fears of colder, more mechanical care. Here, the opposite happened. Patients and caregivers who used PreA reported that conversations with their doctors felt easier, that physicians seemed more attentive and respectful, and that they were more satisfied with the visit and more willing to use such tools again. Specialists rated the chatbot’s referral reports as far more helpful for coordinating care than the minimal notes they usually receive. Independent experts judged PreA’s summaries to be more complete and clinically relevant than many physician notes, in part because routine documentation in strained clinics often leaves gaps. Yet an analysis of doctors’ own notes showed no sign that they simply copied or blindly followed the AI’s suggestions, easing concerns that automation bias might quietly steer decisions.

Why the way the AI was trained matters

The researchers also probed a deeper issue: should medical AI simply mirror local practice, or help improve it? They compared the co‑designed PreA with a version further fine‑tuned on hundreds of real‑world primary‑care conversations from the same regions. That data‑tuned version performed worse. It echoed local shortcuts, skipped important questions, missed needed tests and sometimes adopted an unfriendly tone—essentially scaling up existing weaknesses. By contrast, the co‑designed model, shaped around best‑practice guidelines and community priorities, produced higher‑quality histories, diagnoses and test suggestions in simulated cases. This contrast suggests that involving local stakeholders in steering model behavior may be safer and more equitable than simply feeding an algorithm raw local dialogue.

What this means for patients and health systems

For patients, the bottom line is that a short conversation with an AI assistant before seeing a doctor can make the actual visit feel clearer, calmer and more focused on what matters most to them. For overstretched health systems, PreA hints at a way to reclaim scarce specialist time without sacrificing the human connection at the heart of medicine. Instead of replacing clinicians, the chatbot shoulders the routine work of information gathering and documentation, allowing doctors to concentrate on listening, explaining and making nuanced decisions. While larger and more diverse studies are still needed, this trial points to a future where carefully co‑designed chatbots serve as front‑door guides—helping patients navigate complex hospitals and helping clinicians deliver more patient‑centered care, even when every minute counts.

Citation: Tao, X., Zhou, S., Ding, K. et al. An LLM chatbot to facilitate primary-to-specialist care transitions: a randomized controlled trial. Nat Med 32, 934–942 (2026). https://doi.org/10.1038/s41591-025-04176-7

Keywords: AI in healthcare, patient chatbots, hospital workflow, primary care referrals, medical co-design