Clear Sky Science · en

Towards end-to-end automation of AI research

Why a Robot Scientist Matters

Imagine a tireless digital researcher that can dream up ideas, write computer code, run experiments, draw graphs, and even draft and review scientific papers with almost no human help. This paper describes such a system, called “The AI Scientist.” It shows that modern artificial intelligence can now handle nearly every step of a research project in machine learning. That hints at a future where discoveries come faster, but it also raises serious questions about trust, jobs, and the health of science itself.

From Idea to Finished Paper

The AI Scientist is designed to walk through the full life cycle of a study, much like a graduate student might. First, it proposes research directions within a chosen area of machine learning, explaining why each idea might be interesting and outlining a plan to test it. It then checks these ideas against online research databases to avoid simply copying existing work. Only ideas that appear genuinely new move forward. Next, the system writes and edits the computer code needed to run experiments, fixes many of its own bugs, and keeps a running “lab notebook” of what it tried and what happened.

Two Ways to Let the System Explore

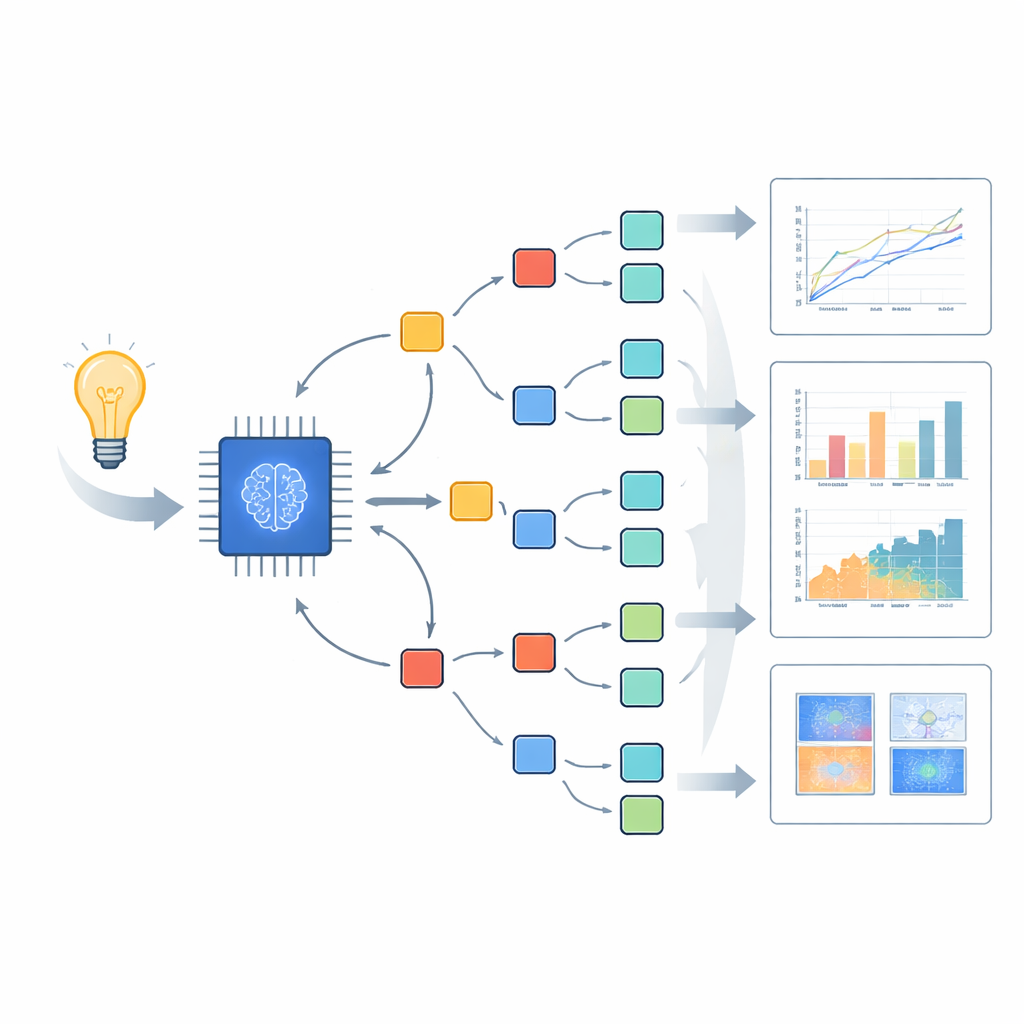

The researchers built two versions of this digital scientist. In the “template-based” mode, humans supply a simple starter program, and the system gradually modifies it to explore related questions. In the “template-free” mode, the AI starts almost from scratch: it invents ideas, designs experiments, and writes code on its own, guided only by broad instructions such as the theme of a conference workshop. This open-ended version uses a branching search through many parallel experiment “paths,” promoting the most promising ones and pruning those that crash or produce poor results. More computing power lets it explore more branches and tends to produce stronger final studies.
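For readers who think in code, the branching search described above can be sketched as a small best-first search. This is only an illustration under stated assumptions, not the authors' implementation: `run_experiment`, the crash rate, the scoring scale, and the parameters `branch_factor` and `min_score` are all hypothetical stand-ins, and a real system would run actual experiment code instead of drawing random numbers.

```python
import heapq
import random
from dataclasses import dataclass, field


@dataclass(order=True)
class Node:
    # heapq is a min-heap, so the priority is the negated score.
    priority: float
    depth: int = field(compare=False)
    score: float = field(compare=False)


def run_experiment(parent_score, rng):
    """Hypothetical stand-in for running one experiment variant.

    Returns None when the run "crashes", otherwise a score that is a
    small random change from the parent's score.
    """
    if rng.random() < 0.2:  # assumed crash rate, for illustration only
        return None
    return parent_score + rng.uniform(-0.1, 0.2)


def branching_search(budget, branch_factor=3, min_score=0.0, seed=0):
    """Expand the most promising path first until the run budget is spent."""
    rng = random.Random(seed)
    root = Node(priority=-0.5, depth=0, score=0.5)
    frontier = [root]
    best = root
    runs = 0
    while frontier and runs < budget:
        node = heapq.heappop(frontier)  # promote the best path so far
        for _ in range(branch_factor):
            if runs >= budget:
                break
            runs += 1
            score = run_experiment(node.score, rng)
            if score is None or score < min_score:
                continue  # prune paths that crash or score poorly
            child = Node(priority=-score, depth=node.depth + 1, score=score)
            heapq.heappush(frontier, child)
            if score > best.score:
                best = child
    return best, runs


if __name__ == "__main__":
    for budget in (10, 40, 160):
        best, runs = branching_search(budget)
        print(f"budget={budget} runs={runs} best_score={best.score:.3f}")
```

In this toy version a larger `budget` plays the role of more computing power: with the same seed, a bigger budget repeats the same early runs and then keeps exploring, so the best score it finds can only stay the same or improve.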

Teaching an AI to Act Like a Peer Reviewer

Judging the quality of an endless stream of AI-written papers is a challenge, so the team also built an Automated Reviewer. This tool reads research papers, scores them on soundness and contribution, lists strengths and weaknesses, and makes an accept-or-reject recommendation using the same guidelines as a top machine learning conference. When tested on thousands of real papers with known decisions, the Automated Reviewer’s recommendations agreed with the human decisions about as often as human reviewers agree with each other. It performed similarly on recent papers that were not in its training data, which suggests it was assessing the papers themselves rather than recalling memorized outcomes.
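One way to put a number on how well an automated reviewer matches human decisions is balanced accuracy, which averages the hit rate on accepted papers and the hit rate on rejected papers so that a reviewer cannot score well simply by rejecting everything. The sketch below is an assumption about how such a comparison could be set up; the summary above does not say which metric the authors used, and the six decisions in the example are made up purely to show the arithmetic.

```python
def balanced_accuracy(predicted, actual):
    """Average of the accept hit rate and the reject hit rate.

    `predicted` and `actual` are equal-length lists of booleans,
    where True means "accept" and False means "reject".
    """
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    accepts = sum(1 for a in actual if a)
    rejects = len(actual) - accepts
    if accepts == 0 or rejects == 0:
        raise ValueError("need at least one accepted and one rejected paper")
    correct_accepts = sum(1 for p, a in zip(predicted, actual) if p and a)
    correct_rejects = sum(1 for p, a in zip(predicted, actual) if not p and not a)
    return 0.5 * (correct_accepts / accepts + correct_rejects / rejects)


if __name__ == "__main__":
    # Made-up decisions for six papers, for illustration only.
    human_decisions = [True, True, False, False, False, False]
    reviewer_recommendations = [True, False, False, False, False, True]
    print(balanced_accuracy(reviewer_recommendations, human_decisions))
```

Running the same function on pairs of human reviewers would give the human-versus-human baseline that the automated score is compared against.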

Putting the AI Scientist to the Test

To see how well their system actually performs in the wild, the authors asked it to generate full papers for a workshop at a leading machine learning conference. With ethics approval and the organizers’ cooperation, three AI-generated manuscripts were submitted alongside human-written ones. Reviewers were told that some submissions might be AI-written but not which ones. One of the three AI-created papers earned review scores that would have cleared the bar for acceptance at the workshop; the authors withdrew it afterward under a pre-agreed protocol. The other two papers fell short of the standard. Overall, the system produced work that is not yet on par with the best human research, but is already good enough to occasionally pass real peer review.

Promises, Pitfalls, and the Road Ahead

Although The AI Scientist still makes mistakes, such as shallow ideas, coding errors, and misleading citations, the study suggests that such systems will likely improve substantially as the underlying AI models and computing resources improve. That could dramatically speed up discovery in fields where experiments can be run on computers or in automated labs. At the same time, easy paper generation could flood journals with low-quality work, blur lines around authorship and credit, and enable risky or unethical experiments. The authors argue that the scientific community needs clear rules and safeguards now, while the technology is still emerging, so that automated researchers end up strengthening science rather than weakening it.

Citation: Lu, C., Lu, C., Lange, R.T. et al. Towards end-to-end automation of AI research. Nature 651, 914–919 (2026). https://doi.org/10.1038/s41586-026-10265-5

Keywords: automated scientific research, AI scientist, machine learning experiments, peer review automation, scientific integrity