Clear Sky Science · en

A large-scale coherent 4D imaging sensor

Seeing the World in Four Dimensions

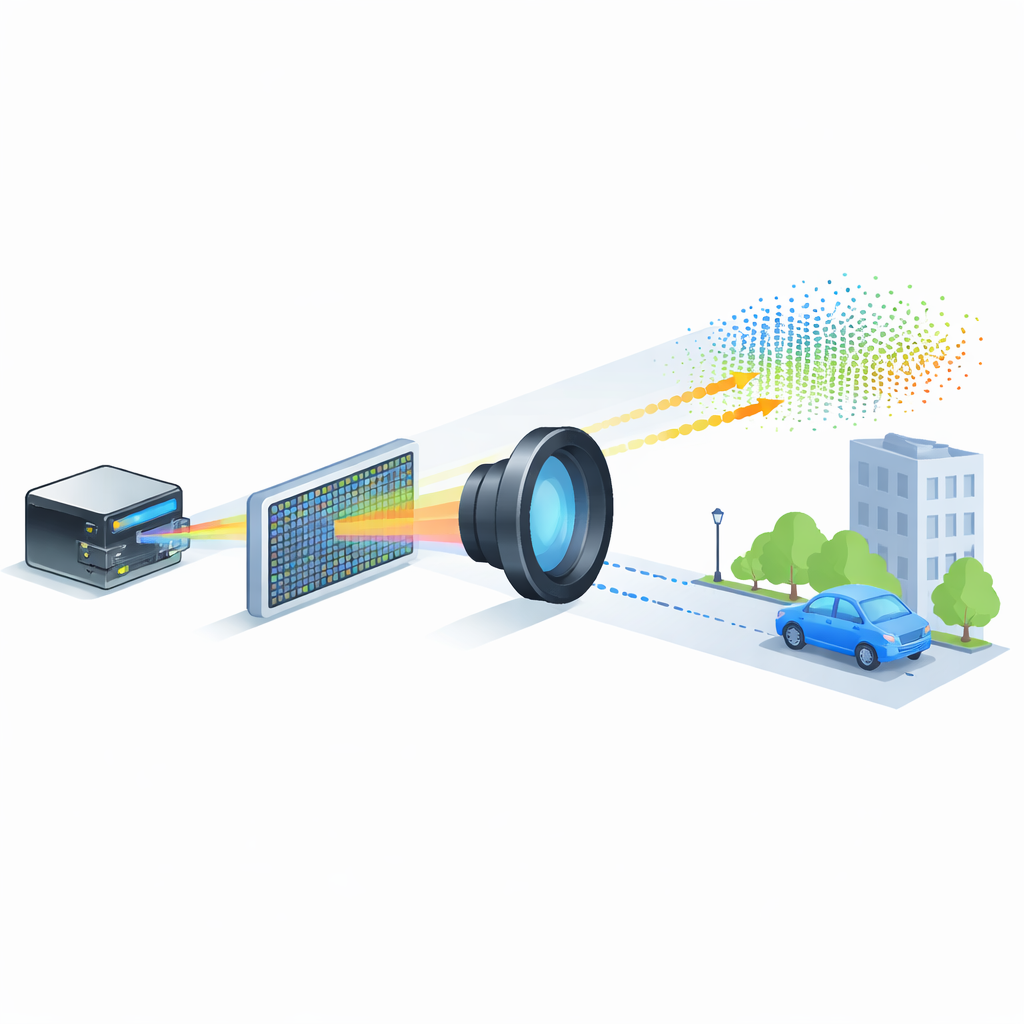

Self-driving cars, delivery drones and augmented-reality headsets all rely on machines that can understand the 3D world around them in real time. Today, the hardware that provides that kind of vision is often bulky, expensive or power-hungry. This paper reports a major step toward a “4D camera”: a chip-sized sensor that not only maps the shape of a scene in 3D but also measures how things are moving, potentially bringing compact machine vision to everything from robots to smartphones.

From Flat Photos to Living Maps

Conventional cameras capture light intensity on a flat surface, producing beautiful 2D pictures but no direct information about distance. By contrast, light detection and ranging (LiDAR) systems send out laser pulses and time how long they take to return, building a 3D map of their surroundings. Existing approaches can see far and with high detail, but they tend to require moving parts, large optics or high energy per measured point. That makes it hard to build something as small, cheap and robust as a smartphone camera, yet capable of safely scanning streets, industrial sites or crowded rooms in fine detail.
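The pulse-timing idea reduces to one line of arithmetic: the light travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The following minimal sketch illustrates this; the function name and the 200-nanosecond example are illustrative assumptions, not figures from the paper.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to a target from the round-trip time of a laser pulse.

    The pulse covers the sensor-to-target path twice, hence the factor 1/2.
    """
    return C * round_trip_time_s / 2.0


# Hypothetical example: a pulse that returns after 200 nanoseconds
# corresponds to a target roughly 30 meters away.
print(f"{tof_distance_m(200e-9):.2f} m")
```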

A Chip That Measures Distance and Motion

The researchers present a new kind of LiDAR focal plane array — essentially a LiDAR version of the imaging chip inside a digital camera. Their device contains 352 by 176 pixels, for a total of more than 60,000 sensing sites, all built on a single silicon photonics chip together with its control electronics. Instead of using short laser pulses, the system relies on frequency-modulated continuous-wave (FMCW) light, in which the laser’s color is swept in a controlled “chirp.” When light bounces off objects and returns to the chip, it is combined coherently with a reference beam. Tiny differences in frequency reveal both how far away each point is and how fast it is moving toward or away from the sensor, adding velocity as a fourth measured dimension.
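As a rough illustration of how beat frequencies can encode both distance and speed, the sketch below assumes a triangular chirp (an up-sweep followed by a down-sweep). This is a common FMCW scheme, but this summary does not confirm that the chip uses it. The chirp bandwidth, chirp duration, wavelength and function names are all illustrative assumptions, and the formulas hold only when the range-induced beat frequency exceeds the Doppler shift.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def beat_frequencies(range_m, velocity_mps, bandwidth_hz, chirp_time_s, wavelength_m):
    """Simulate the up-chirp and down-chirp beat frequencies for one target.

    velocity_mps > 0 means the target approaches the sensor.
    Assumes a linear triangular chirp (an assumption, not from the source).
    """
    slope = bandwidth_hz / chirp_time_s           # chirp rate in Hz/s
    f_range = 2.0 * range_m * slope / C           # shift from round-trip delay
    f_doppler = 2.0 * velocity_mps / wavelength_m # shift from radial motion
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_time_s, wavelength_m):
    """Invert the two beat frequencies back into range and radial velocity."""
    slope = bandwidth_hz / chirp_time_s
    f_range = 0.5 * (f_up_hz + f_down_hz)    # the average isolates the range term
    f_doppler = 0.5 * (f_down_hz - f_up_hz)  # half the difference isolates Doppler
    return C * f_range / (2.0 * slope), wavelength_m * f_doppler / 2.0


# Hypothetical chirp: 1 GHz sweep in 10 microseconds at a 1550 nm wavelength.
chirp = dict(bandwidth_hz=1e9, chirp_time_s=10e-6, wavelength_m=1.55e-6)
f_up, f_down = beat_frequencies(30.0, 1.5, **chirp)
distance, speed = range_and_velocity(f_up, f_down, **chirp)
print(f"range = {distance:.3f} m, radial velocity = {speed:.3f} m/s")
```

The sum of the two beat frequencies depends only on distance and their difference only on radial velocity, which is why a single coherent measurement can supply both quantities.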

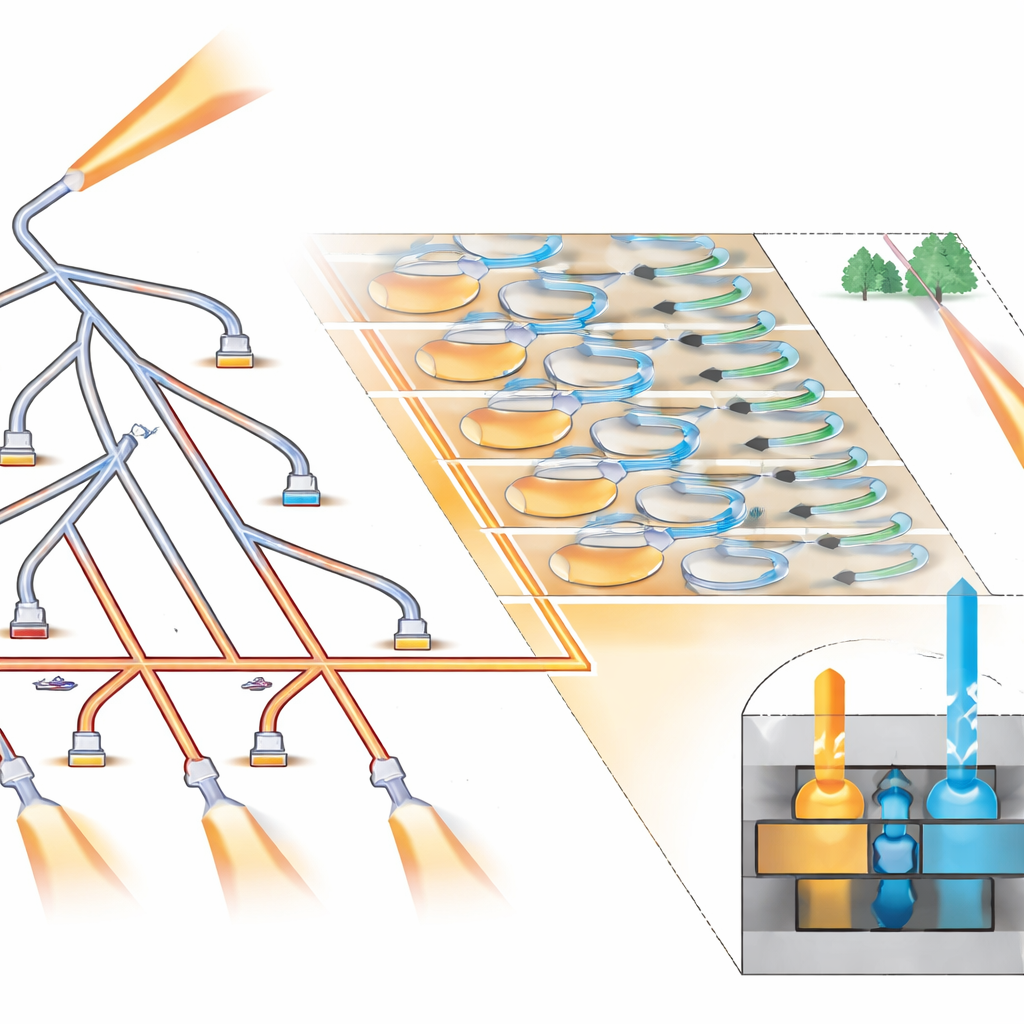

How the Tiny Light Grid Works

To cover many pixels without wasting power, the chip routes the chirped laser light through a tree of miniature optical switches, guiding it sequentially to groups of eight neighboring pixels. Within each group, light is split evenly so that all eight act as transmitters and receivers at once. Each pixel uses a pair of grating couplers to send and collect light, plus a pair of balanced photodetectors and an on-pixel amplifier to extract the beat signal that encodes distance and speed. Specially designed microlenses deposited directly on the chip help funnel more light in and out, improving efficiency. Because the same aperture sends and receives light (a “monostatic” design), the system avoids stray coupling between pixels and needs only a single external imaging lens, much like a regular camera.
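The addressing scheme can be sketched with simple bookkeeping. The example below assumes a binary tree of 1-by-2 switches and assumes that each group of eight pixels lies along one row. The summary states neither detail, so both are hypothetical, as are the function names. Only the array size and the group size come from the text.

```python
COLS, ROWS = 352, 176   # pixel array size, from the text
GROUP_SIZE = 8          # pixels illuminated together, from the text


def group_count() -> int:
    """Number of eight-pixel groups the switch tree must address."""
    return (COLS * ROWS) // GROUP_SIZE


def tree_depth(n_leaves: int) -> int:
    """Levels of 1x2 switches needed to reach n_leaves outputs (binary tree assumed)."""
    return (n_leaves - 1).bit_length()


def switch_settings(group_index: int, depth: int) -> list[int]:
    """Switch state (0 or 1) at each tree level, root first, that routes light to one group."""
    return [(group_index >> (depth - 1 - level)) & 1 for level in range(depth)]


def pixels_in_group(group_index: int) -> list[tuple[int, int]]:
    """(row, column) of the eight pixels in a group, assuming groups run along rows."""
    groups_per_row = COLS // GROUP_SIZE
    row = group_index // groups_per_row
    first_col = (group_index % groups_per_row) * GROUP_SIZE
    return [(row, first_col + k) for k in range(GROUP_SIZE)]


depth = tree_depth(group_count())
print(group_count(), "groups,", depth, "switch levels")  # 7744 groups, 13 switch levels
print(switch_settings(5, depth))
print(pixels_in_group(45))
```

Under these assumptions, a full frame is built by stepping through all 7,744 groups in turn, with only the switches along one path through the tree set for each step.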

Putting the 4D Camera to the Test

Using off-the-shelf short-wave infrared lenses, the team built a camera-like module around the chip and captured detailed 3D point clouds of indoor and outdoor scenes. With one lens, the sensor achieved a field of view of about 33 by 19 degrees and an angular resolution as fine as 0.06 degrees, enough to resolve furniture in an office and architectural features on buildings tens of meters away. The system measured distances to objects between 4 and 65 meters away using only tens of nanojoules of optical energy per point and an average on-target power of about 178 microwatts per pixel, staying within strict eye-safety limits. It also tracked motion: in one experiment, it measured the changing radial velocity of a spinning disc with millimeter-per-second precision.
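To put the angular figures in spatial terms, the sketch below divides the quoted field of view by the pixel counts and converts an angle into a lateral footprint at a given distance. The helper names are hypothetical. The average pitch implied by a 33 by 19 degree field of view comes out coarser than the quoted best figure of 0.06 degrees, which this summary does not tie to a particular axis or lens setting.

```python
import math


def angular_pitch_deg(fov_deg: float, n_pixels: int) -> float:
    """Average angle covered per pixel along one axis."""
    return fov_deg / n_pixels


def footprint_m(distance_m: float, angle_deg: float) -> float:
    """Lateral width subtended by a small angle at a given distance."""
    return 2.0 * distance_m * math.tan(math.radians(angle_deg) / 2.0)


# Field of view and pixel counts as quoted in the text.
print(f"horizontal pitch: {angular_pitch_deg(33.0, 352):.4f} deg")
print(f"vertical pitch:   {angular_pitch_deg(19.0, 176):.4f} deg")

# Width covered by the quoted 0.06 degree resolution at the 65 m maximum range.
print(f"footprint at 65 m: {footprint_m(65.0, 0.06) * 100:.1f} cm")
```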

Performance, Limits and Future Growth

Careful measurements show that the sensor’s performance is close to fundamental physical limits set by the quantum nature of light, but not quite there yet. Today, the main limitation is electronic noise from the amplifiers in each pixel, which slightly reduces the signal-to-noise ratio compared with an ideal, purely photon-limited detector. The authors outline straightforward design tweaks — primarily increasing the internal reference light level and refining the optical layout, potentially using silicon–silicon nitride blends — that could push the system into a truly shot-noise-limited regime and increase usable range beyond 200 meters. Moving some on-chip switches out of the pixel array would also remove small gaps in the far-field coverage, producing cleaner point clouds.
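The reason a stronger reference beam helps can be seen in a textbook noise model for coherent detection. The sketch below is not the authors' analysis. It assumes an ideal balanced receiver in which the signal and the shot noise both grow with reference power while the amplifier noise stays fixed. Every numerical value (signal power, responsivity, amplifier noise density, bandwidth) is a hypothetical placeholder.

```python
Q_E = 1.602176634e-19  # elementary charge, coulombs


def coherent_snr(p_signal_w, p_ref_w, responsivity_a_per_w,
                 amp_noise_a_per_rthz, bandwidth_hz):
    """SNR of a beat signal with shot noise plus fixed amplifier noise.

    Textbook model: mean-square beat current 2*R^2*P_ref*P_sig, shot-noise
    variance 2*q*R*P_ref*B, amplifier-noise variance i_n^2*B.
    """
    signal = 2.0 * responsivity_a_per_w**2 * p_ref_w * p_signal_w
    shot = 2.0 * Q_E * responsivity_a_per_w * p_ref_w * bandwidth_hz
    electronic = amp_noise_a_per_rthz**2 * bandwidth_hz
    return signal / (shot + electronic)


def shot_noise_limited_snr(p_signal_w, responsivity_a_per_w, bandwidth_hz):
    """Upper bound reached when the reference beam is strong enough to swamp amplifier noise."""
    return responsivity_a_per_w * p_signal_w / (Q_E * bandwidth_hz)


# Hypothetical receiver: 1 pW return, 1 A/W, 1 pA/sqrt(Hz) amplifier noise, 10 kHz bandwidth.
rx = dict(responsivity_a_per_w=1.0, bandwidth_hz=1e4)
limit = shot_noise_limited_snr(1e-12, **rx)
for p_ref in (1e-6, 10e-6, 100e-6):
    snr = coherent_snr(1e-12, p_ref, amp_noise_a_per_rthz=1e-12, **rx)
    print(f"reference {p_ref * 1e6:5.0f} uW: SNR is {snr / limit:.0%} of the shot-noise limit")
```

In this model, raising the reference power increases the signal and the shot noise together, so the fixed amplifier noise becomes a progressively smaller share of the total and the SNR approaches the shot-noise bound.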

Toward Everyday 4D Vision

This work demonstrates a compact, fully integrated 4D imaging sensor that rivals the pixel counts and ranges demanded by many real-world applications, while keeping power and size in check. By bringing light emitters, receivers, beam steering and control electronics together on a single silicon chip, the device plays a role for 3D and motion sensing similar to the one the CMOS sensor played for digital photography. With further refinements, such sensors could become inexpensive and robust enough to embed in cars, robots, phones and headsets, giving machines a precise, real-time understanding of the 3D world and how it is changing from moment to moment.

Citation: Settembrini, F.F., Gungor, A.C., Forrer, A. et al. A large-scale coherent 4D imaging sensor. Nature 651, 364–370 (2026). https://doi.org/10.1038/s41586-026-10183-6

Keywords: LiDAR, 4D imaging, silicon photonics, autonomous systems, depth sensing