Clear Sky Science

Distinct neuronal populations in the human brain combine content and context

How Your Brain Knows Which Memory Matters

We rarely remember things in isolation. A friend’s face comes bundled with where we met, what we talked about, and why it mattered. This study listens in on single neurons inside the human brain to ask a deceptively simple question: how does the brain keep track of both “what” happened and “in what situation” it occurred, so that the right memory pops up when we need it?

A Thoughtful Guessing Game for the Brain

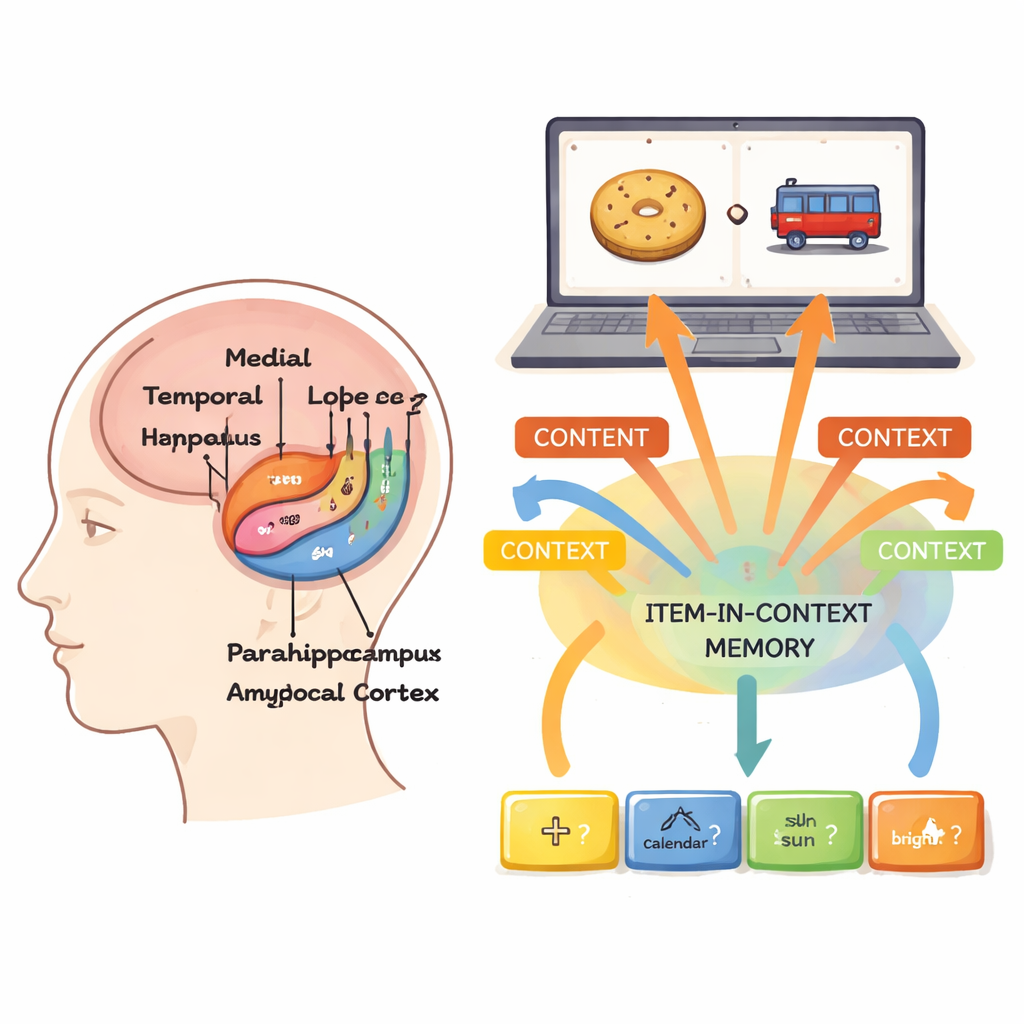

To probe this, neurosurgical patients with tiny electrodes implanted in deep brain areas played a picture-comparison game on a laptop. Each trial began with a short question that set the context: which of two pictures was bigger, older, more expensive, or brighter, or which one the patient had last seen in real life. Two images then appeared one after the other, drawn from a set of just four that had been chosen because they strongly activated the patient’s neurons. The volunteers had to decide which picture best answered the question and indicate whether it was the first or the second. This design forced them to remember both the pictures themselves (the content) and the question that framed the comparison (the context).

Separate Neuron Teams for “What” and “In What Situation”

Among 3,109 neurons recorded in the medial temporal lobe, a memory-critical region that includes the hippocampus and nearby structures, the researchers found two main “teams.” One group fired selectively for particular pictures no matter which question was asked; these were pure content cells. A second group cared about the question but not the image, responding whenever, for example, the task was to judge which picture was older, regardless of whether the screen showed a train, a biscuit, or anything else. Only a small minority of neurons fired for a particular picture only when it appeared with a particular question. This indicates that, unlike many rodent neurons, most human cells did not rigidly bind content and context into single, highly specific codes.
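To make the three categories concrete, here is a toy sketch, not the study’s statistical procedure, that sorts a neuron by splitting the variance of its mean firing rates in a picture × question table into a picture effect (content), a question effect (context), and an interaction (conjunctive). The function names, the example firing rates, and the 60% threshold are hypothetical choices made only for illustration.

```python
# Toy sketch: label a neuron as content, context, or conjunctive from a
# table of mean firing rates (rows = 4 pictures, columns = 5 questions).
# The 0.6 share threshold is a hypothetical choice, not the study's criterion.

def partition_variance(table):
    """Two-way sums of squares (one value per cell, no replication):
    picture main effect, question main effect, interaction."""
    n_rows, n_cols = len(table), len(table[0])
    grand = sum(map(sum, table)) / (n_rows * n_cols)
    row_means = [sum(row) / n_cols for row in table]
    col_means = [sum(row[j] for row in table) / n_rows for j in range(n_cols)]
    ss_picture = n_cols * sum((m - grand) ** 2 for m in row_means)
    ss_question = n_rows * sum((m - grand) ** 2 for m in col_means)
    ss_interaction = sum(
        (table[i][j] - row_means[i] - col_means[j] + grand) ** 2
        for i in range(n_rows)
        for j in range(n_cols)
    )
    return ss_picture, ss_question, ss_interaction


def label_neuron(table, share=0.6):
    """Return the component explaining at least `share` of the variance."""
    ss = partition_variance(table)
    total = sum(ss)
    if total == 0:
        return "unselective"
    names = ("content", "context", "conjunctive")
    best = max(range(3), key=lambda k: ss[k])
    return names[best] if ss[best] / total >= share else "mixed"


# Hypothetical mean rates (Hz); baseline 2 Hz everywhere unless noted.
content_cell = [[10] * 5] + [[2] * 5 for _ in range(3)]  # likes picture 0
context_cell = [[2, 9, 2, 2, 2] for _ in range(4)]        # likes question 1
conjunctive_cell = [[2] * 5 for _ in range(4)]
conjunctive_cell[2][3] = 10                               # picture 2 in question 3 only

# prints: content_cell -> content, context_cell -> context,
#         conjunctive_cell -> conjunctive
for name, table in [("content_cell", content_cell),
                    ("context_cell", context_cell),
                    ("conjunctive_cell", conjunctive_cell)]:
    print(name, "->", label_neuron(table))
```

A real analysis would work on single-trial spike counts and test each effect for significance rather than apply a fixed share, but the decomposition shows why a cell that ignores the question ends up with no question or interaction variance at all.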

Abstract Codes That Generalize Across Situations

Using machine-learning decoders, the authors showed that context cells carried enough information to reliably tell the five questions apart. Importantly, this “context code” did not depend on which pictures were shown or the order in which they appeared. Likewise, content cells signaled which picture was on the screen, largely independent of the question. Within each trial, context activity rose when the question was presented, dipped slightly, then re-emerged late in the viewing of each picture and persisted right up to the decision. Picture signals were strongest while a given image was on the screen, but traces of the first picture reappeared while the second was shown, evidence that the brain was reactivating earlier content as it compared the two.
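The claim that the context code “did not depend on which pictures were shown” is usually tested by cross-condition generalization: train a decoder on trials with some pictures and test it on trials with pictures it never saw. The sketch below illustrates that idea on simulated data only. It swaps in the simplest possible decoder, a nearest-centroid classifier, and the tuning curves, population sizes, and noise level are invented; the study’s own decoders and recordings are not reproduced here.

```python
import math
import random

N_CONTEXTS = 5        # the five questions
N_PICTURES = 4        # four stimuli per session
N_CONTEXT_CELLS = 15  # hypothetical population sizes
N_CONTENT_CELLS = 15
NOISE_SD = 1.0        # hypothetical trial-to-trial noise

rng = random.Random(0)

# Hypothetical tuning: context cells depend only on the question,
# content cells depend only on the picture.
context_tuning = [[rng.uniform(0, 5) for _ in range(N_CONTEXTS)]
                  for _ in range(N_CONTEXT_CELLS)]
content_tuning = [[rng.uniform(0, 5) for _ in range(N_PICTURES)]
                  for _ in range(N_CONTENT_CELLS)]


def simulate_trial(context, picture):
    """One simulated population response vector for a single trial."""
    ctx = [t[context] + rng.gauss(0, NOISE_SD) for t in context_tuning]
    cnt = [t[picture] + rng.gauss(0, NOISE_SD) for t in content_tuning]
    return ctx + cnt


def make_trials(pictures, reps):
    """(response vector, context label) pairs for every question/picture combo."""
    return [(simulate_trial(c, p), c)
            for c in range(N_CONTEXTS) for p in pictures for _ in range(reps)]


def fit_centroids(trials):
    """Nearest-centroid decoder: mean response vector per context label."""
    sums, counts = {}, {}
    for x, label in trials:
        if label not in sums:
            sums[label] = [0.0] * len(x)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], x)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def predict(centroids, x):
    """Pick the context whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(x, centroids[label]))


def accuracy(centroids, trials):
    hits = sum(predict(centroids, x) == label for x, label in trials)
    return hits / len(trials)


# Train on pictures 0 and 1; test on pictures 2 and 3, never seen in training.
train = make_trials(pictures=[0, 1], reps=20)
test = make_trials(pictures=[2, 3], reps=20)
centroids = fit_centroids(train)
gen_acc = accuracy(centroids, test)
print(f"cross-picture context decoding: {gen_acc:.2f} (chance = {1 / N_CONTEXTS:.2f})")
```

Because the simulated content cells respond the same way to every question, the unseen pictures shift each test vector by roughly the same distance from every context centroid, so they barely change which centroid is nearest. A context code that was instead entangled with specific pictures would show a drop in accuracy on the held-out pictures.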

How Content and Context Team Up Over Time

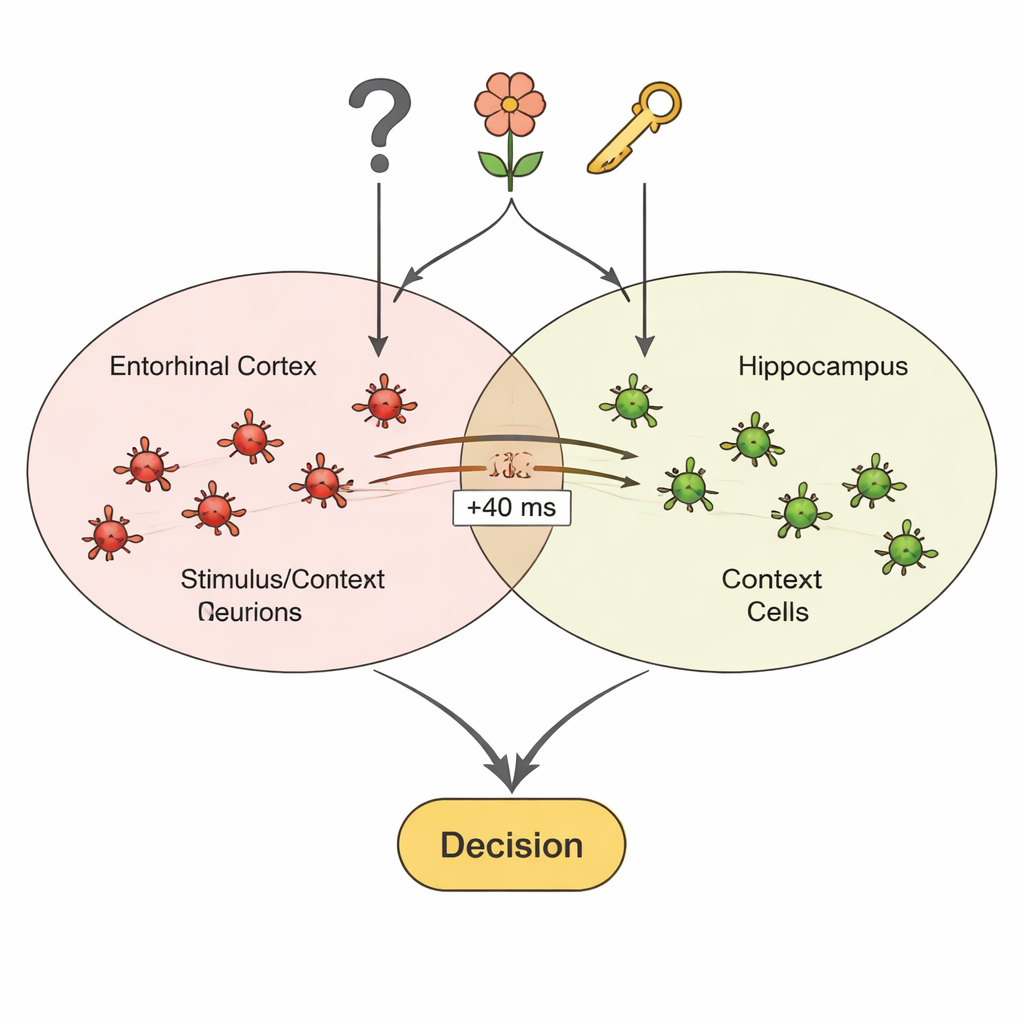

The most intriguing finding came from pairs of neurons recorded in different but connected brain areas. In the entorhinal cortex, many cells responded to specific pictures; in the hippocampus, others signaled the question context. As patients played the game, the firing of picture cells in entorhinal cortex came to systematically precede the firing of context cells in hippocampus by about 40 milliseconds, and this pattern grew stronger over the course of the experiment and persisted afterward. This timing suggests that repeatedly pairing pictures with questions strengthened the connections between the two neuron teams, so that seeing a picture could help rekindle the relevant question context. Context cells were also more excitable right after being strongly activated by their preferred question, leaving them especially ready to respond when matching pictures appeared.
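A lead of about 40 milliseconds is the kind of result a spike-train cross-correlogram reveals: collect the time differences between each spike of one neuron and nearby spikes of another, histogram them, and look for a peak away from zero. The sketch below simulates a hypothetical entorhinal picture cell and a hypothetical hippocampal context cell in which 30% of entorhinal spikes are followed by a hippocampal spike roughly 40 ms later. All rates, the coupling probability, and the bin width are invented, the simulation does not model the learning-related strengthening, and the study’s own timing analysis may differ.

```python
import random

rng = random.Random(1)
DURATION_MS = 60_000  # one simulated minute
BIN_MS = 5            # histogram bin width
MAX_LAG_MS = 100      # correlogram spans -100 to +100 ms
TRUE_LAG_MS = 40      # delay built into this toy simulation


def poisson_train(rate_hz, duration_ms):
    """Homogeneous Poisson spike train via exponential inter-spike intervals."""
    t, spikes = 0.0, []
    while True:
        t += rng.expovariate(rate_hz / 1000.0)  # rate per millisecond
        if t >= duration_ms:
            return spikes
        spikes.append(t)


# Hypothetical entorhinal "picture cell".
ec_spikes = poisson_train(5.0, DURATION_MS)

# Hypothetical hippocampal "context cell": background firing plus spikes that
# follow 30% of entorhinal spikes after ~40 ms (with 5 ms jitter).
hpc_spikes = poisson_train(5.0, DURATION_MS)
for t in ec_spikes:
    if rng.random() < 0.3:
        hpc_spikes.append(t + TRUE_LAG_MS + rng.gauss(0, 5))
hpc_spikes.sort()


def cross_correlogram(ref, target, max_lag, bin_width):
    """Count target spikes at each lag (target - ref) in [-max_lag, max_lag)."""
    counts = [0] * (2 * max_lag // bin_width)
    start = 0
    for r in ref:  # ref and target are both sorted ascending
        while start < len(target) and target[start] < r - max_lag:
            start += 1
        j = start
        while j < len(target) and target[j] < r + max_lag:
            counts[int((target[j] - r + max_lag) // bin_width)] += 1
            j += 1
    return counts


counts = cross_correlogram(ec_spikes, hpc_spikes, MAX_LAG_MS, BIN_MS)
peak = max(range(len(counts)), key=counts.__getitem__)
peak_lag_ms = -MAX_LAG_MS + (peak + 0.5) * BIN_MS  # bin center
print(f"peak lag: {peak_lag_ms:.1f} ms (positive = entorhinal cell leads)")
```

A peak on the positive side means the hippocampal cell tends to fire after the entorhinal cell; tracking how tall that peak is in successive blocks of trials would be one way to see a coupling grow over an experiment.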

Why This Matters for Everyday Memory

Taken together, the results support a view in which the human brain keeps relatively clean, separate codes for “what” and “in what situation,” then flexibly combines them when needed. Rather than storing a separate, hard-wired trace for every possible picture–question pairing, the medial temporal lobe seems to favor reusable, general representations of items and contexts that can be linked on the fly. This arrangement may help explain how we can recall the same friend across many different dinners, or reconstruct a particular evening from just a hint of place or purpose. In this picture, distinct neuron populations for content and context cooperate through rapid, learned interactions to spotlight the memory that best fits the moment.

Citation: Bausch, M., Niediek, J., Reber, T.P. et al. Distinct neuronal populations in the human brain combine content and context. Nature 650, 690–700 (2026). https://doi.org/10.1038/s41586-025-09910-2

Keywords: episodic memory, hippocampus, context processing, single-neuron recording, medial temporal lobe