Clear Sky Science

Deep learning models identify brain changes during the progression of Alzheimer’s disease

Why tracking brain change over time matters

Alzheimer’s disease slowly robs people of memory and thinking, but the damage in the brain builds up years before everyday symptoms become obvious. Doctors usually rely on a single brain scan or test result to judge whether someone has Alzheimer’s, even though the disease unfolds over time. This study asks a simple question with big consequences: if we follow people’s brain scans across several years and let an advanced computer model learn from these changes, can we not only detect Alzheimer’s more accurately, but also see which brain areas are hit first and hardest?

Following the brain’s story, not just a snapshot

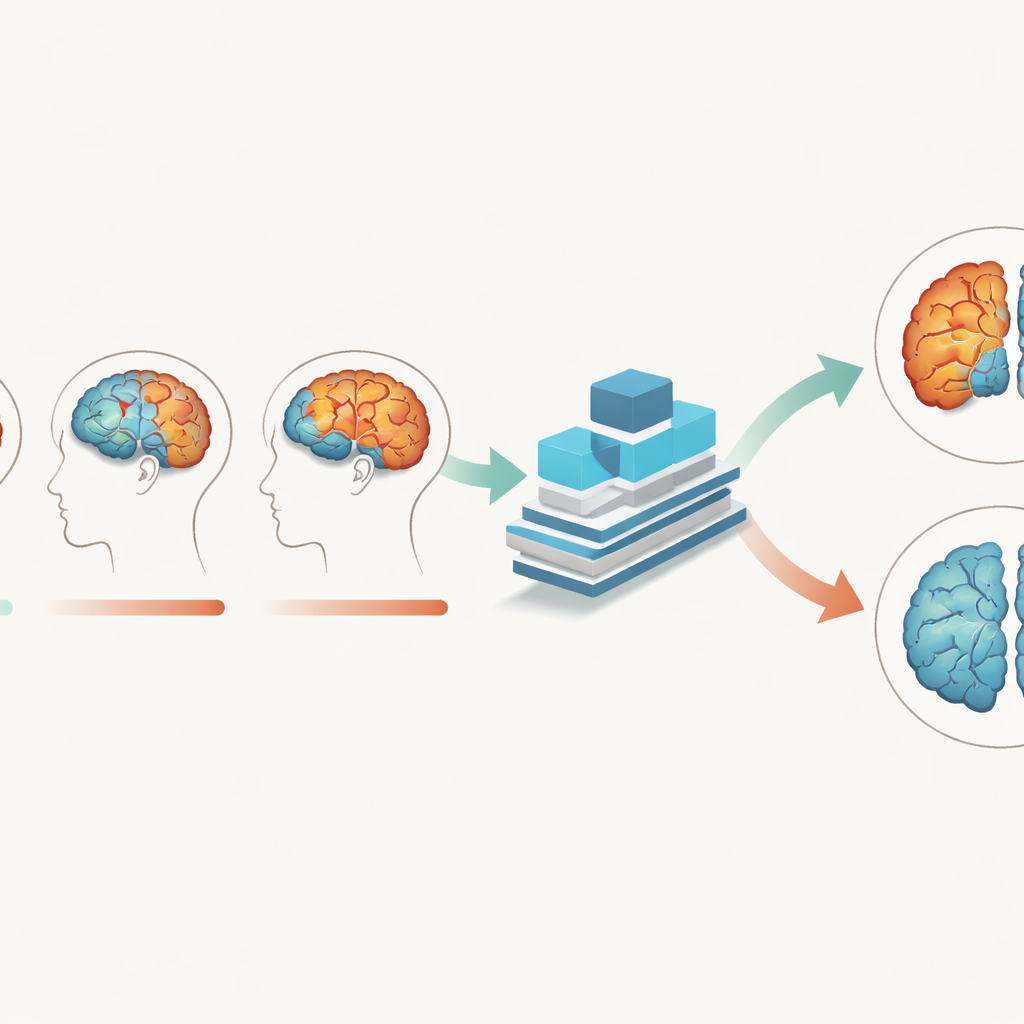

The researchers used structural MRI scans, which show detailed brain anatomy, from over 280 older adults, including people with Alzheimer’s and cognitively normal peers. Crucially, each person had three scans taken about a year apart, allowing the team to track how brain tissue changed over two years. Instead of treating each scan as a separate picture, they built a deep learning model that takes all time points together. The model was designed to pay attention to gray matter—the brain tissue packed with nerve cell bodies—as well as white matter and cerebrospinal fluid, and to learn how patterns in these tissues evolve as disease progresses.

A deep-learning model tuned to gradual brain change

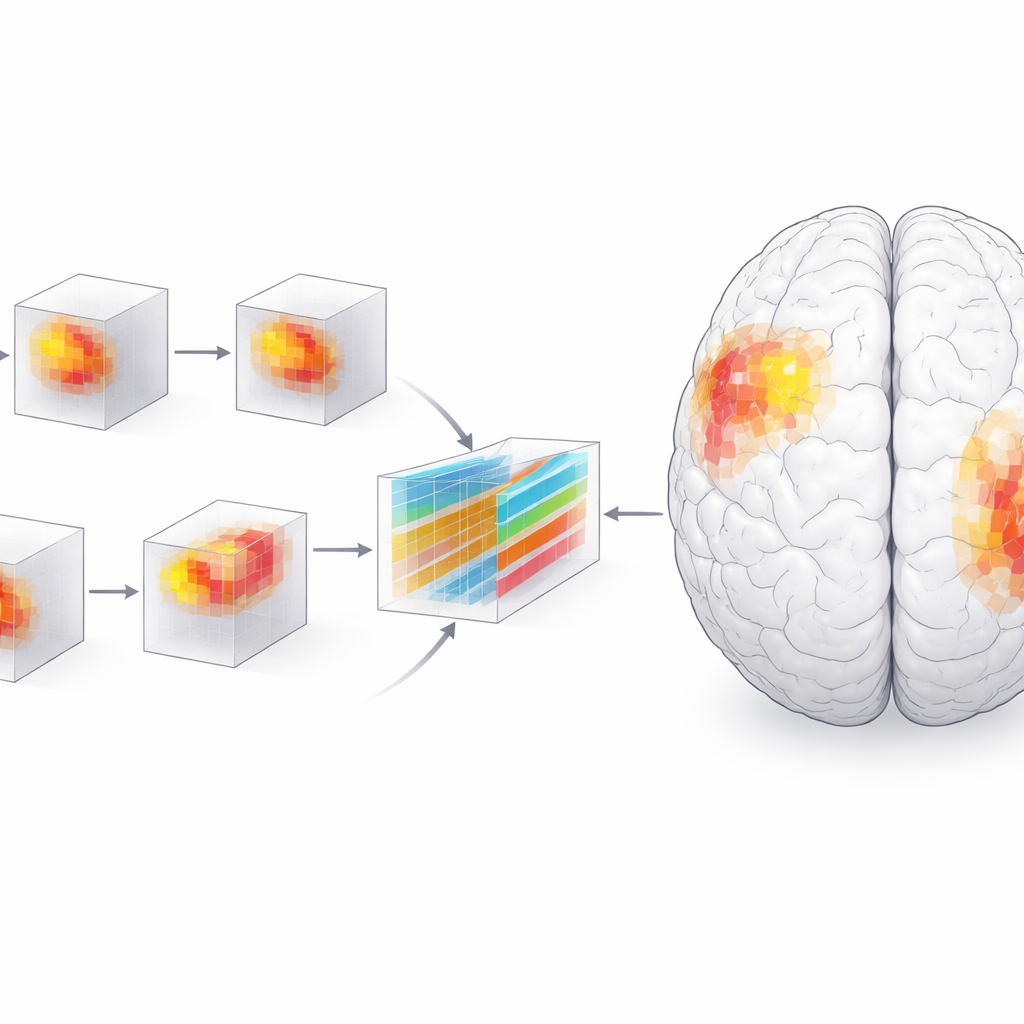

To capture these subtle shifts, the team created a Multi-Branch Fusion Channel Attention Network, a 3D convolutional neural network that processes MRI volumes rather than flat images. Separate branches handle different tissues or time points and then fuse their information, while an “attention” mechanism helps the model focus on the most informative regions in three-dimensional space. Trained mainly on gray matter data, the network learned to distinguish Alzheimer’s brains from normally aging brains with about 93% accuracy and perfect specificity on one dataset, meaning that none of the cognitively normal participants there was wrongly flagged as having Alzheimer’s. It outperformed several existing AI methods and also generalized well to an independent Australian dataset, suggesting that it is not simply memorizing quirks of one study but capturing broader disease signals.
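For readers curious about the mechanics, here is a plain-Python sketch of one common form of “channel attention”, the squeeze-and-excitation style block, applied after fusing the outputs of separate branches. It is an illustration under stated assumptions, not the authors’ network: the function names `fuse_branches` and `channel_attention`, the flattened-volume layout, and the hand-set weights are all hypothetical. In a real model the channels would come from 3D convolutional layers and the weights would be learned during training.

```python
import math
from typing import List

Volume = List[float]  # one 3D feature map, flattened into a list of voxel values


def fuse_branches(*branches: List[Volume]) -> List[Volume]:
    """Hypothetical fusion step: stack the channels from each branch (e.g. one per tissue)."""
    fused: List[Volume] = []
    for branch in branches:
        fused.extend(branch)
    return fused


def channel_attention(
    features: List[Volume],
    w1: List[List[float]],  # reduction weights: one row per hidden unit, one column per channel
    w2: List[List[float]],  # expansion weights: one row per channel, one column per hidden unit
) -> List[Volume]:
    """Squeeze-and-excitation style channel attention over flattened 3D feature maps."""
    # Squeeze: average each channel over all of its voxels.
    pooled = [sum(ch) / len(ch) for ch in features]
    # Excitation, part 1: linear reduction followed by ReLU.
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled))) for row in w1]
    # Excitation, part 2: linear expansion followed by a sigmoid, one weight in (0, 1) per channel.
    weights = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    ]
    # Recalibrate: multiply every voxel in a channel by that channel's weight.
    return [[v * a for v in ch] for ch, a in zip(features, weights)]


if __name__ == "__main__":
    # Toy example: two branches, each contributing one 2x2x2 volume (8 voxels).
    gray_matter_branch = [[1.0] * 8]
    white_matter_branch = [[2.0] * 8]
    fused = fuse_branches(gray_matter_branch, white_matter_branch)
    out = channel_attention(fused, w1=[[1.0, 0.0]], w2=[[10.0], [-10.0]])
    print(round(out[0][0], 4), round(out[1][0], 6))
```

With these toy weights the first channel passes through almost unchanged while the second is nearly switched off, which is the sense in which attention lets a network emphasize the features it has found most useful.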

Seeing which brain regions tip the scales

High accuracy alone is not enough for medicine; clinicians need to understand what drives a model’s decisions. The researchers therefore used an interpretability technique called SHAP (SHapley Additive exPlanations), which assigns an importance score to each tiny three-dimensional pixel, or voxel, in the MRI. Grouping these voxels into anatomical regions revealed a dynamic picture of disease. Early on, the amygdala, a region involved in emotion and memory, stood out as especially important for distinguishing patients from healthy peers. Over time, the hippocampus, the parahippocampal gyrus, and especially the posterior parts of the temporal lobe gained influence, while the amygdala’s relative role waned. By the two-year mark, differences between patients and controls were much sharper and more clustered, particularly in the left hemisphere.
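The step of grouping voxel scores into regions can be sketched as follows. This is a hypothetical illustration: it assumes voxel-wise SHAP values and an anatomical atlas label for each voxel are already available as flat lists in the same order, and it ranks regions by mean absolute SHAP value, which is one reasonable aggregation choice rather than the study’s documented rule. The function name `region_importance` and the `"background"` label are made up for this example.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def region_importance(
    shap_values: List[float],
    atlas_labels: List[str],
    ignore: str = "background",
) -> List[Tuple[str, float]]:
    """Rank atlas regions by the mean absolute SHAP value of their voxels."""
    if len(shap_values) != len(atlas_labels):
        raise ValueError("need exactly one atlas label per voxel")
    totals: Dict[str, float] = defaultdict(float)
    counts: Dict[str, int] = defaultdict(int)
    for value, label in zip(shap_values, atlas_labels):
        if label == ignore:
            continue  # skip voxels outside the regions of interest
        # Absolute value: a voxel matters whether it pushes toward "Alzheimer's" or "normal".
        totals[label] += abs(value)
        counts[label] += 1
    means = {label: totals[label] / counts[label] for label in totals}
    # Most influential regions first.
    return sorted(means.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Toy values for five voxels; not data from the study.
    shap = [0.5, -0.3, 0.1, 0.0, 0.2]
    labels = ["amygdala", "amygdala", "hippocampus", "background", "hippocampus"]
    for region, score in region_importance(shap, labels):
        print(f"{region}: {score:.2f}")
```

Repeating such a ranking separately for each scan time point is one way a shift in importance, such as the move from the amygdala toward hippocampal and posterior temporal regions, could be made visible.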

Patterns that match symptoms and clinical scores

To check that the model’s focus aligned with biology, the team performed traditional brain-volume analyses and statistical tests. They found that gray matter in the highlighted regions shrank faster in people with Alzheimer’s than in normally aging adults, and that lower volume in these areas tracked closely with worse scores on standard cognitive tests such as the Mini-Mental State Examination and the Clinical Dementia Rating. The path of damage, spreading from inner temporal structures outward to posterior language and association areas, mirrored classic pathological staging schemes for Alzheimer’s. A left-sided bias emerged as well, consistent with the left hemisphere’s dominant role in language and in certain memory functions. Voxel-based morphometry, which compares tissue volume voxel by voxel between groups, showed that early changes were scattered and small, then became larger and more concentrated in the posterior temporal and frontal regions as disease advanced.
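The two kinds of check described here, measuring how fast a region shrinks and testing whether its volume tracks cognitive scores, can be sketched in a few lines. The function names are hypothetical; the shrinkage rate is computed as a least-squares slope over the scans, expressed as percent of the first scan’s volume per year, and the link to test scores as an ordinary Pearson correlation. The study may have used different or more elaborate statistical models, and the numbers in the example are illustrative only, not measurements from the study.

```python
import math
from typing import Sequence


def annualized_change(volumes: Sequence[float], years: Sequence[float]) -> float:
    """Least-squares slope of volume over time, as percent of the first scan's volume per year."""
    n = len(volumes)
    if n != len(years) or n < 2:
        raise ValueError("need matching volumes and years for at least two scans")
    mean_t = sum(years) / n
    mean_v = sum(volumes) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(years, volumes))
    var = sum((t - mean_t) ** 2 for t in years)
    if var == 0:
        raise ValueError("scan times must not all be identical")
    slope = cov / var  # volume units per year
    return 100.0 * slope / volumes[0]


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between, e.g., regional volumes and cognitive test scores."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length samples of size >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Illustrative numbers only: three yearly scans of one region.
    print(annualized_change([100.0, 98.0, 96.0], years=[0.0, 1.0, 2.0]))  # -2.0
    regional_volume = [3.1, 2.8, 3.4, 2.5]
    mmse_scores = [27, 24, 29, 20]
    print(pearson_r(regional_volume, mmse_scores))  # positive: smaller volume, lower score
```

Because higher Clinical Dementia Rating scores mean more impairment, the same relationship with that scale would appear as a negative correlation rather than a positive one.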

What this means for patients and doctors

For non-specialists, the key takeaway is that Alzheimer’s does not act like a simple on–off switch in the brain; it follows an orderly but accelerating path, leaving distinct footprints over time. By teaching a deep-learning model to read not just where the brain looks different, but how those differences grow over several years, this study offers a way to flag Alzheimer’s more accurately and earlier in its course. It also pinpoints a small set of brain regions—including the amygdala, hippocampus, parahippocampal gyrus, and posterior temporal cortex—whose changing size and structure are closely tied to cognitive decline. While more work is needed, especially with additional imaging methods and larger datasets, this approach brings us closer to using time-resolved brain scans and interpretable AI as practical tools for early diagnosis, monitoring, and ultimately guiding interventions against Alzheimer’s disease.

Citation: Sun, J., Han, JD.J. & Chen, W. Deep learning models identify brain changes during the progression of Alzheimer’s disease. npj Syst Biol Appl 12, 42 (2026). https://doi.org/10.1038/s41540-026-00666-7

Keywords: Alzheimer’s disease, brain MRI, deep learning, longitudinal imaging, neurodegeneration