Clear Sky Science

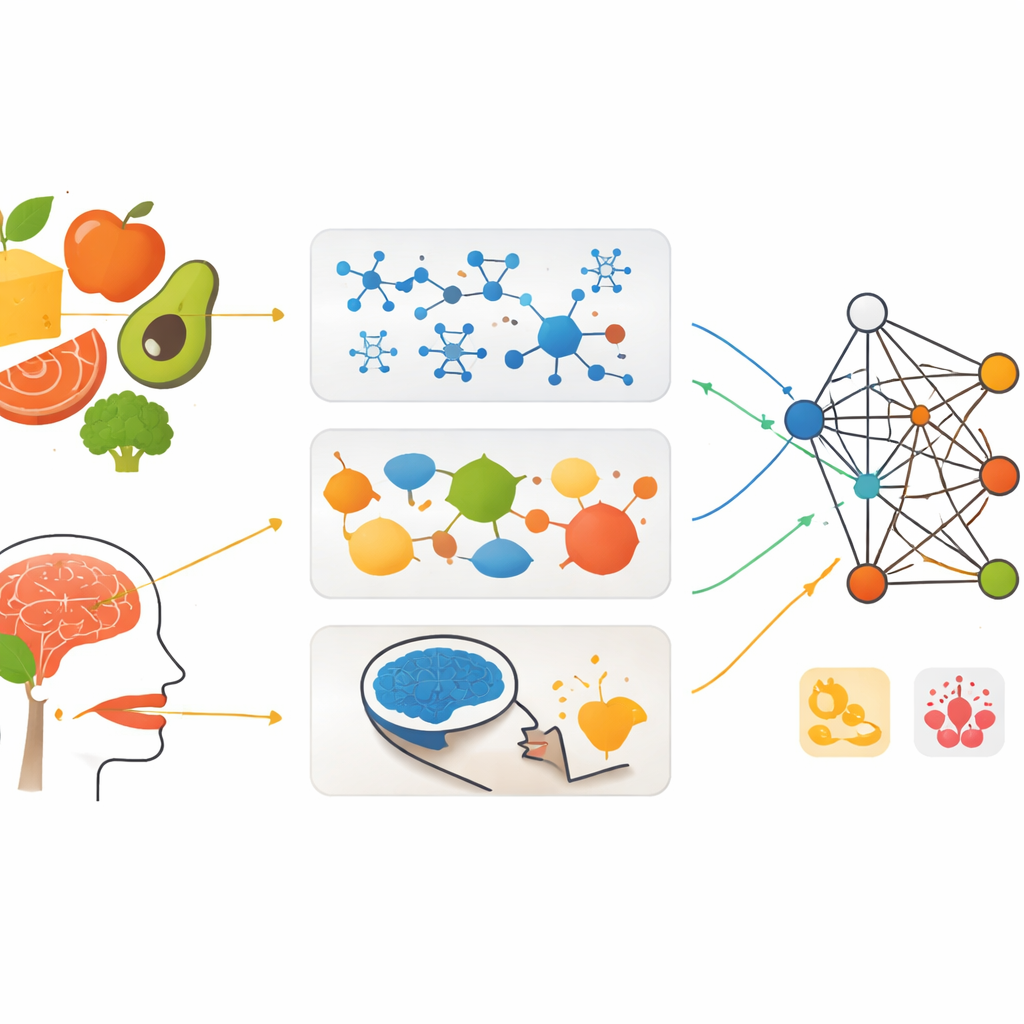

Machine learning unveils three layers of food complexity

Why Smarter Food Matters

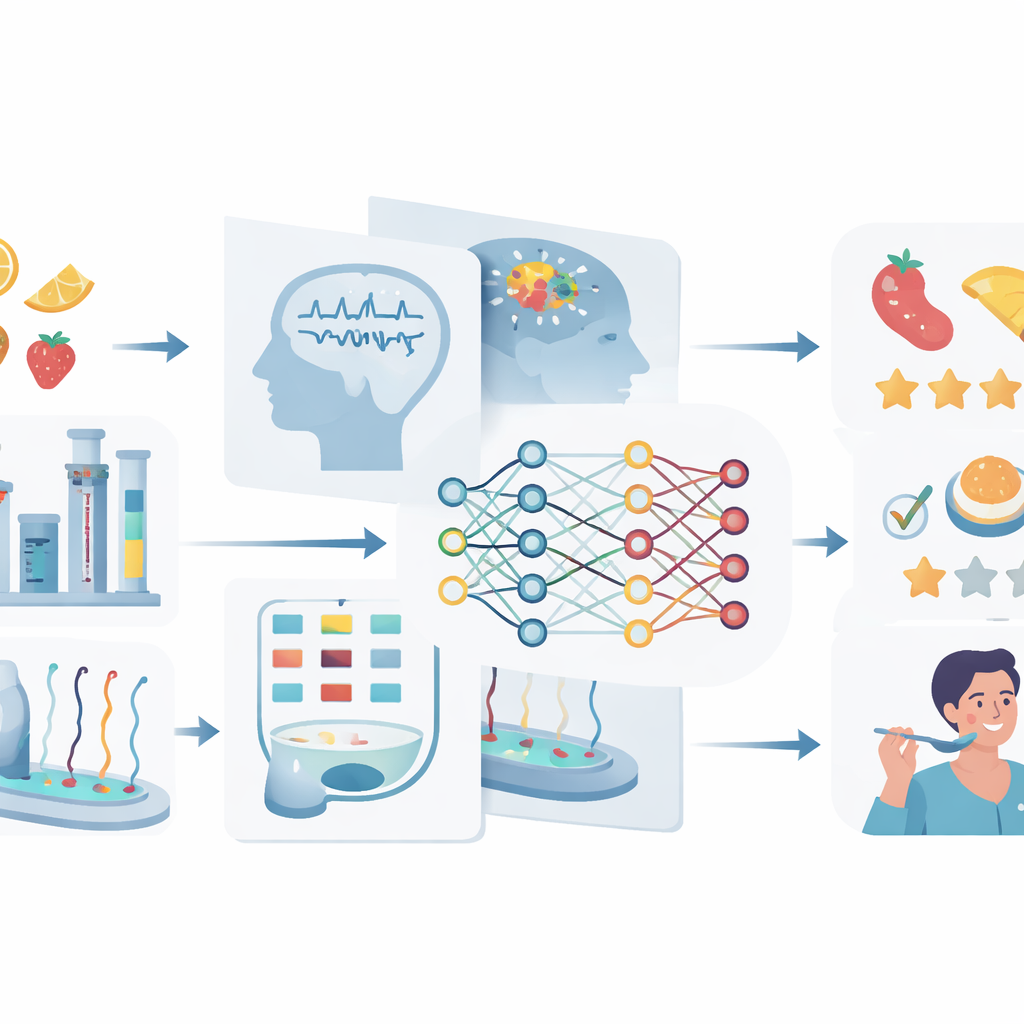

Every bite of food hides a world of complexity: thousands of invisible molecules, tangled interactions among ingredients, and the unique ways each person’s brain responds to taste and smell. This article explains how modern machine learning helps scientists make sense of that complexity. By connecting chemical analyses, factory sensors, and even brain scans, researchers hope to design tastier, healthier, more reliable foods—and to better match what different people actually enjoy.

Looking Inside Food’s Hidden Building Blocks

At the most basic level, foods are made of tens of thousands of distinct chemicals. Many are tiny aroma and taste molecules; others affect nutrition, safety, or how long food keeps. Only a fraction of these chemicals have been carefully studied, so scientists often do not know which ones create a particular flavor or health effect. Machine learning helps fill these gaps by spotting patterns between a molecule’s structure and its behavior. Algorithms can be trained on known data to predict whether new molecules are likely to taste sweet or bitter, smell fruity or smoky, or interact with human receptors in helpful or harmful ways. Deep-learning models that treat molecules as networks of atoms are especially powerful, revealing structure–flavor links that would be hard to capture by hand.
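To make "molecules as networks of atoms" concrete, here is a minimal sketch of the idea behind graph-based models, not the method used in the paper. It encodes ethanol (heavy atoms only) as a graph and runs one round of unweighted neighbor aggregation. The one-hot features, the function names, and the plain sum are illustrative assumptions; real models use learned weights and nonlinear updates, and a trained predictor would then map the resulting vector to a property such as sweetness.

```python
# Ethanol (CH3-CH2-OH), heavy atoms only: index 0 = C, 1 = C, 2 = O.
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]  # C-C and C-O

# Illustrative starting features per atom: [is_carbon, is_oxygen].
ONE_HOT = {"C": [1.0, 0.0], "O": [0.0, 1.0]}


def neighbors(n_atoms, bonds):
    """Build an adjacency list from the bond list (bonds are undirected)."""
    adj = {i: [] for i in range(n_atoms)}
    for a, b in bonds:
        adj[a].append(b)
        adj[b].append(a)
    return adj


def message_pass(features, adj):
    """One round: each atom sums its own features with its neighbors' features."""
    updated = []
    for i, own in enumerate(features):
        total = list(own)
        for j in adj[i]:
            total = [t + f for t, f in zip(total, features[j])]
        updated.append(total)
    return updated


def readout(features):
    """Sum all atom features into one fixed-length vector for the molecule."""
    return [sum(column) for column in zip(*features)]


adj = neighbors(len(atoms), bonds)
h0 = [ONE_HOT[a] for a in atoms]   # features before message passing
h1 = message_pass(h0, adj)         # each atom now "knows" its bonded neighbors
molecule_vector = readout(h1)      # input for a downstream property predictor
```

After one round, each atom's features reflect what it is bonded to, which is how such models pick up on local chemical structure without hand-written rules.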

How Ingredients Work Together

Food rarely behaves like the simple sum of its parts. Sugar, acids, fats, and aromas can amplify or mute one another, changing texture, aroma release, and flavor balance. To study these interactions, scientists collect detailed “fingerprints” of foods using instruments such as gas and liquid chromatography or ion mobility spectrometry, which separate and detect complex mixtures of chemicals. Electronic noses and tongues go a step further by using sensor arrays to capture the overall smell or taste pattern of a sample. Feeding these rich signals into machine-learning models allows researchers to classify product quality, detect spoilage or fraud, and estimate flavor profiles more quickly and objectively than traditional tasting panels. Data-fusion methods then combine multiple sources—chemical fingerprints, sensor signals, color images, and basic composition—into unified models that better capture how ingredients work together.

How Our Brains Experience Flavor

A food’s journey does not end at the tongue; it continues into the brain. People differ widely in how they experience the same food because of genetics, culture, and past experience. Brain-recording and imaging tools, such as electroencephalography (EEG), functional near-infrared spectroscopy (fNIRS), and functional magnetic resonance imaging (fMRI), can track how different regions of the brain respond when people taste or smell something. Machine-learning models trained on these signals can distinguish between basic tastes like sweet, sour, or umami, recognize specific odors, and even estimate how pleasant someone finds a smell. By combining fast methods like EEG, which show when activity happens, with imaging that shows where in the brain it occurs, researchers are starting to build richer, individualized maps of flavor perception.

Bringing Many Data Streams Together

Because no single method can capture every aspect of food, the authors highlight the importance of blending many kinds of data. At one end are molecular databases that list nutrients, additives, and aroma compounds. In the middle are measurements of whole foods from laboratory instruments and smart sensors. At the other end are human-centered data such as tasting notes, consumer reviews, and brain signals. Data-fusion strategies join these pieces at different stages: raw signals may be merged early, extracted features may be combined at an intermediate stage, or the outputs of separate models may be blended at the decision stage. When carefully cleaned, standardized, and shared under common rules, such multimodal datasets allow machine-learning systems to link what is in the food, how it is processed, and how it ultimately feels to eat.
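The difference between merging early and blending at the decision stage can be sketched in a few lines. The example below is a toy illustration under stated assumptions: the data values, the two modality names (`chem`, `nose`), the labels, and the nearest-centroid classifier are all made up for demonstration and are not taken from the paper. Intermediate (feature-level) fusion is omitted for brevity.

```python
from math import dist

# Made-up training samples with two modalities each (hypothetical values):
# "chem" = chemical fingerprint features, "nose" = electronic-nose readings.
train = [
    {"chem": [0.9, 0.1], "nose": [0.8, 0.2, 0.1], "label": "fresh"},
    {"chem": [0.8, 0.2], "nose": [0.7, 0.3, 0.2], "label": "fresh"},
    {"chem": [0.2, 0.9], "nose": [0.1, 0.8, 0.9], "label": "spoiled"},
    {"chem": [0.1, 0.8], "nose": [0.2, 0.9, 0.8], "label": "spoiled"},
]


def group(samples, featurize):
    """Collect feature vectors per label."""
    grouped = {}
    for s in samples:
        grouped.setdefault(s["label"], []).append(featurize(s))
    return grouped


def centroids(vectors_by_label):
    """Mean feature vector for each label."""
    return {label: [sum(col) / len(col) for col in zip(*vecs)]
            for label, vecs in vectors_by_label.items()}


def scores(vec, cents):
    """Closeness to each centroid, normalized so the scores sum to 1."""
    closeness = {label: 1.0 / (1e-9 + dist(vec, c)) for label, c in cents.items()}
    total = sum(closeness.values())
    return {label: v / total for label, v in closeness.items()}


def early_fusion_predict(sample):
    """Early fusion: concatenate both modalities, then fit one model."""
    featurize = lambda s: s["chem"] + s["nose"]
    sc = scores(featurize(sample), centroids(group(train, featurize)))
    return max(sc, key=sc.get)


def late_fusion_predict(sample):
    """Decision-level fusion: one model per modality, then average their scores."""
    modalities = ("chem", "nose")
    combined = {}
    for m in modalities:
        featurize = lambda s, m=m: s[m]
        sc = scores(featurize(sample), centroids(group(train, featurize)))
        for label, v in sc.items():
            combined[label] = combined.get(label, 0.0) + v / len(modalities)
    return max(combined, key=combined.get)


query = {"chem": [0.85, 0.15], "nose": [0.75, 0.25, 0.15]}
early_label = early_fusion_predict(query)
late_label = late_fusion_predict(query)
```

Early fusion lets one model see cross-modality patterns but requires every sample to have every measurement on a comparable scale. Decision-level fusion keeps each modality's model independent, which tends to be easier to maintain when one data source is missing or noisy.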

What This Means for Future Meals

The authors conclude that machine learning provides a new toolkit for understanding food from molecule to mind. In plain terms, it can help scientists predict which combinations of ingredients will be tasty, safe, and stable before spending months in the kitchen or pilot plant. It can also connect objective measurements from instruments and sensors to the subjective experiences of diverse eaters, guiding more inclusive and personalized food design. To fully realize this vision, the field needs larger and better-organized databases, models that are easier to interpret, and closer collaboration among food scientists, chemists, data scientists, and neuroscientists. If these goals are met, tomorrow’s foods could be developed faster, tailored more closely to individual preferences and health, and judged more reliably than ever before.

Citation: Ke, Q., Zhang, J., Huang, X. et al. Machine learning unveils three layers of food complexity. npj Sci Food 10, 87 (2026). https://doi.org/10.1038/s41538-026-00730-w

Keywords: machine learning in food science, food flavor prediction, electronic nose and tongue, brain responses to taste, multimodal food data