Clear Sky Science

Statistics-informed parameterized quantum circuit: towards practical quantum state preparation and learning via maximum entropy principle

Turning Real-World Data into Quantum States

Modern quantum computers promise big gains in finance, science, and machine learning—but only if we can first translate messy real-world data into the fragile language of quantum states. This paper introduces a new way to do that translation, called the statistics-informed parameterized quantum circuit (SI-PQC). By baking basic patterns of the data directly into the structure of a quantum circuit, SI-PQC aims to load probability distributions onto qubits far more efficiently, making many proposed quantum speedups more realistic in practice.

Why Getting Data into Quantum Form Is Hard

Before a quantum algorithm can run, it needs its input encoded as a quantum state whose measurement probabilities (the squared amplitudes) match a target probability distribution, such as a bell curve or a mix of several peaks. Building such a state in full generality is notoriously expensive: in the worst case, the number of gates or helper qubits grows exponentially with the number of qubits. Existing methods try to exploit models of the data, for example by using known formulas for standard distributions or by training flexible quantum circuits to imitate observed samples. But these approaches often hide a heavy price. They require substantial pre-computation or long training runs to translate model parameters into gate settings, and this overhead can erase the theoretical advantages of the quantum algorithm itself, especially when the data or model parameters change over time.
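To make the encoding target concrete, the sketch below computes the amplitudes a state-preparation routine would have to reproduce for a discretized bell curve. It is a classical illustration only, not the paper's method: the function name `gaussian_amplitudes`, the grid range, and the default parameters are assumptions chosen for the example.

```python
import math

def gaussian_amplitudes(n_qubits, mean=0.0, std=1.0, lo=-4.0, hi=4.0):
    """Amplitudes sqrt(p_i) for a Gaussian discretized on 2**n_qubits grid points.

    Hypothetical helper for illustration; grid range and defaults are assumptions.
    """
    size = 2 ** n_qubits                      # one amplitude per basis state
    step = (hi - lo) / (size - 1)
    xs = [lo + i * step for i in range(size)]
    weights = [math.exp(-0.5 * ((x - mean) / std) ** 2) for x in xs]
    total = sum(weights)
    probs = [w / total for w in weights]      # normalize to a probability vector
    return [math.sqrt(p) for p in probs]      # amplitude = square root of probability

amps = gaussian_amplitudes(3)
print(len(amps))                    # 3 qubits carry 2**3 = 8 amplitudes
print(sum(a * a for a in amps))     # squared amplitudes sum to 1 (up to rounding)
```

Each added qubit doubles the length of this list, which is why a loader that specifies every amplitude individually becomes impractical so quickly.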

Using Symmetry and Uncertainty as Design Guides

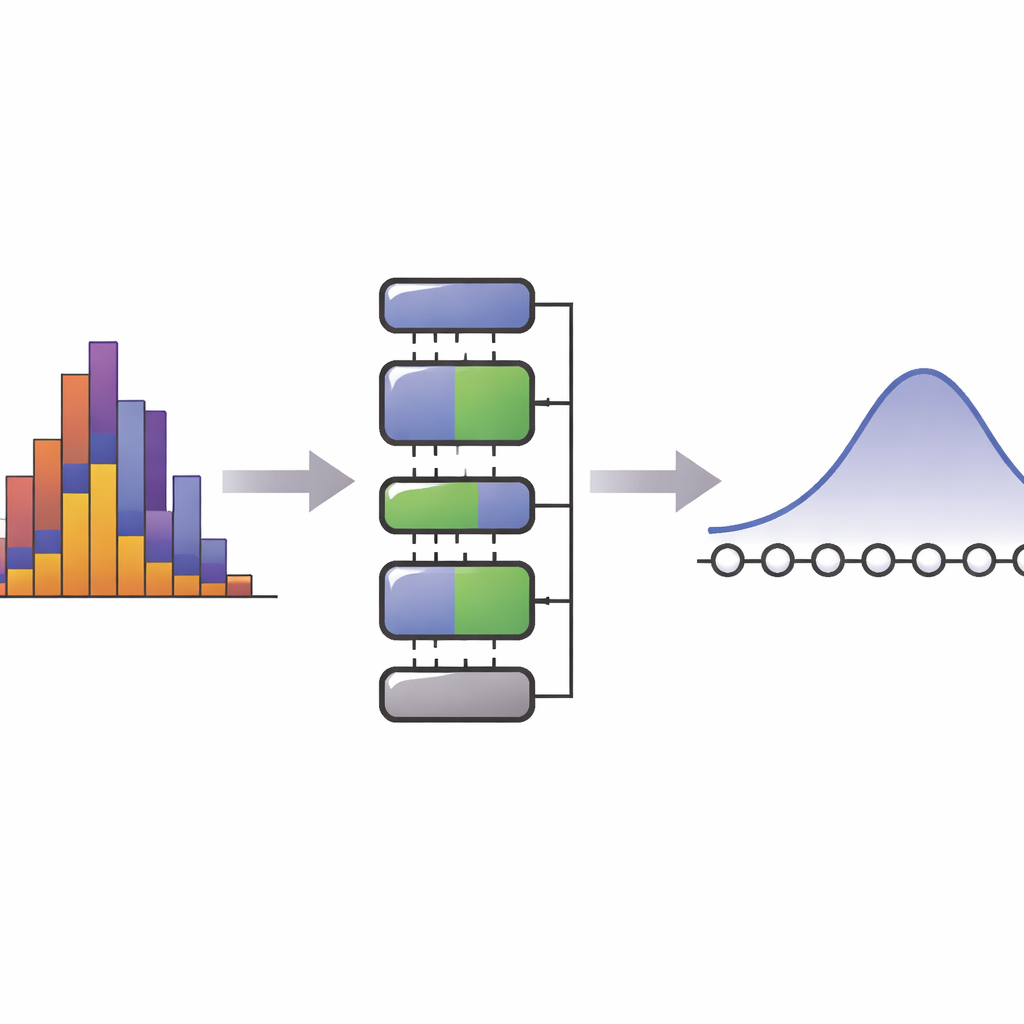

The key idea of SI-PQC is to treat data not as an arbitrary collection of numbers, but as something structured by simple “symmetries,” such as a fixed average value or spread. The authors draw on the maximum entropy principle, a concept from statistics and physics that says: among all distributions consistent with a small set of known averages, the most honest, least biased guess is the one with the largest entropy. Many familiar distributions—like the Gaussian—can be seen this way. SI-PQC separates information into two parts. One part is fixed knowledge about the form of the model and the conserved features it should respect. The other part is a handful of tunable parameters that capture what is still unknown or changing in the data. In the circuit, this translates into fixed layers that never change across problems, and a compact set of adjustable rotation gates that directly encode the model parameters.
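The maximum entropy principle can be written compactly. The notation below ($p$, $f_k$, $\mu_k$, $\lambda_k$, $Z$) is standard textbook notation introduced here for illustration and is not taken from the paper. Among all distributions $p$ that reproduce a set of known averages $\mu_k$ of chosen functions $f_k$, pick the one with the largest entropy:

$$
\max_{p}\; -\sum_x p(x)\,\ln p(x)
\quad\text{subject to}\quad
\sum_x p(x) = 1,
\qquad
\sum_x p(x)\, f_k(x) = \mu_k \quad (k = 1,\dots,K).
$$

Solving this with Lagrange multipliers $\lambda_k$ gives an exponential-family form:

$$
p(x) = \frac{1}{Z(\lambda)} \exp\!\Big(-\sum_{k=1}^{K} \lambda_k f_k(x)\Big),
\qquad
Z(\lambda) = \sum_x \exp\!\Big(-\sum_{k=1}^{K} \lambda_k f_k(x)\Big).
$$

For example, fixing the mean and the variance on the real line corresponds to $f_1(x) = x$ and $f_2(x) = x^2$, so the exponent is quadratic in $x$ and $p$ is a Gaussian. One plausible reading of the split described above is that the functions $f_k$ play the role of the fixed knowledge, while the few numbers $\lambda_k$ are the tunable parameters.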

Building and Mixing Quantum Distributions

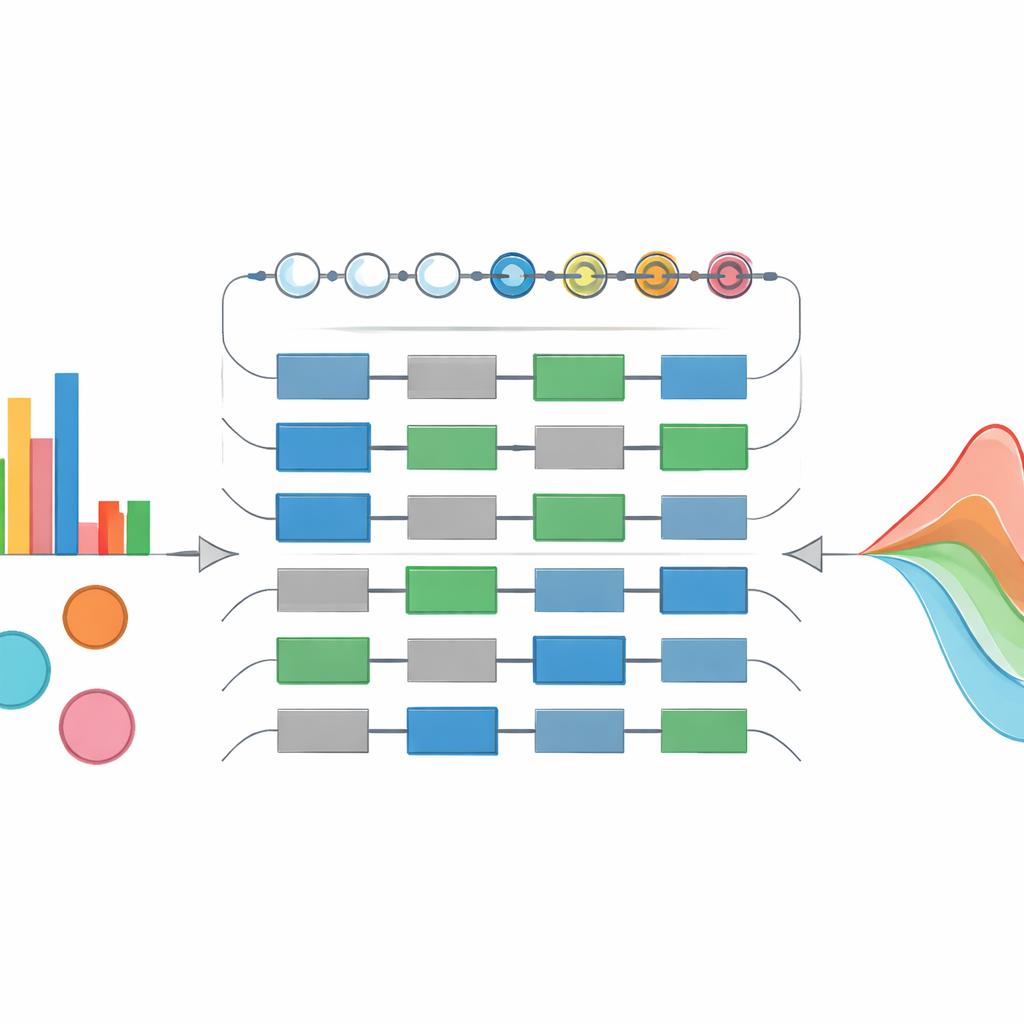

Using this design, the authors construct a "maximum entropy distribution loader" that can prepare a wide range of standard probability shapes on a modest number of qubits. They test their circuits on exponential, chi-squared, Gaussian, and Rayleigh distributions and show that, by adjusting the degree of a polynomial approximation, they can make the quantum state closely match the target curve while keeping the circuit depth under control. A standout feature is that the circuit structure stays the same even when the parameters change, enabling re-use and aggressive optimization. The authors then extend the idea to mixtures of distributions—situations where uncertainty in parameters is described by another probability law, as in Gaussian mixture models used in machine learning and finance. Their "weighted distribution mixer" can encode both the visible data and a latent space of possible parameter settings in a single quantum state, avoiding the exponential blow-up that plagues more naive quantum constructions.
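As a rough picture of what a joint state over data and latent parameters encodes, the sketch below builds the amplitude table for a two-component Gaussian mixture classically. It does not reproduce the authors' circuit: the function names, the grid, and the assumption that the amplitude for latent index k and data index i is sqrt(w_k * p_k(x_i)) are illustrative choices.

```python
import math

def discretized_gaussian(xs, mean, std):
    """Normalized Gaussian probabilities on the grid xs."""
    weights = [math.exp(-0.5 * ((x - mean) / std) ** 2) for x in xs]
    total = sum(weights)
    return [w / total for w in weights]

def mixture_amplitudes(xs, components, mix_weights):
    """amps[k][i] = sqrt(w_k * p_k(x_i)); k is the latent index, i the data index.

    Assumed encoding for illustration, not taken from the paper.
    """
    amps = []
    for (mean, std), w in zip(components, mix_weights):
        p = discretized_gaussian(xs, mean, std)
        amps.append([math.sqrt(w * p_i) for p_i in p])
    return amps

def data_marginal(amps):
    """Probability of each data outcome when the latent register is ignored."""
    n = len(amps[0])
    return [sum(row[i] ** 2 for row in amps) for i in range(n)]

xs = [-4.0 + 8.0 * i / 15 for i in range(16)]   # 16 grid points = 4 data qubits
components = [(-1.5, 0.6), (1.5, 0.9)]          # hypothetical (mean, std) pairs
mix_weights = [0.3, 0.7]                        # hypothetical mixing weights

amps = mixture_amplitudes(xs, components, mix_weights)
marginal = data_marginal(amps)                  # equals 0.3 * p_0 + 0.7 * p_1
```

Measuring only the data register of such a state yields the mixture distribution, while the latent register records which component (which parameter setting) produced each outcome.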

Learning from Data with Quantum Help

Beyond state preparation, SI-PQC also serves as a trainable model for learning from data. Because the number of free parameters in the circuit is tightly matched to the degrees of freedom of the underlying statistical model, the training landscape is smaller and more interpretable than in generic variational quantum circuits. The authors demonstrate this by fitting a Gaussian mixture model using a hybrid quantum–classical loop that adjusts circuit angles to minimize the distance between the prepared quantum state and sample data. As training proceeds, both the quantum state and the classical parameters it represents (such as means and variances) converge toward their true values. Theory suggests that such compact, symmetry-guided circuits should generalize better, require fewer training samples, and be less prone to flat, "barren" regions where gradients vanish.
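The training loop can be mimicked classically to show why a small, interpretable parameter set is convenient. The sketch below is a simplification under stated assumptions, not the authors' procedure: it fits a single Gaussian rather than a mixture, it uses an ordinary function (`model_probs`) as a stand-in for the distribution a circuit would prepare, it uses a squared Euclidean distance as the loss, and it swaps in a derivative-free pattern search for whatever optimizer the paper uses.

```python
import math

def model_probs(xs, mean, std):
    """Classical stand-in for the distribution a parameterized circuit would prepare."""
    weights = [math.exp(-0.5 * ((x - mean) / std) ** 2) for x in xs]
    total = sum(weights)
    return [w / total for w in weights]

def loss(params, xs, target):
    """Squared Euclidean distance between model and target probabilities."""
    mean, std = params
    return sum((p - t) ** 2 for p, t in zip(model_probs(xs, mean, std), target))

def fit(xs, target, start=(0.0, 1.0), step=0.25, tol=1e-4, max_iters=1000):
    """Pattern search: try +/- step on each parameter, halve the step if nothing helps."""
    params = list(start)
    best = loss(params, xs, target)
    for _ in range(max_iters):
        if step < tol:
            break
        improved = False
        for i in range(len(params)):
            for delta in (step, -step):
                trial = list(params)
                trial[i] += delta
                if trial[1] <= 0.05:          # keep the standard deviation positive
                    continue
                value = loss(trial, xs, target)
                if value < best:              # accept only strict improvements
                    params, best, improved = trial, value, True
        if not improved:
            step /= 2
    return params, best

xs = [-6.0 + 12.0 * i / 63 for i in range(64)]   # 64 grid points = 6 qubits
target = model_probs(xs, 0.8, 1.2)               # synthetic "data" with known parameters
(fitted_mean, fitted_std), final_loss = fit(xs, target)
print(round(fitted_mean, 2), round(fitted_std, 2))
```

Because the two fitted numbers are the mean and standard deviation themselves, the result can be read off directly, which is the interpretability the passage above refers to.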

Practical Payoffs in Finance and Risk

To show real-world impact, the paper examines two financial tasks: pricing derivatives and evaluating risk. Many quantum proposals in this area rely on Monte Carlo–like quantum routines that can accelerate the estimation of expected payoffs or loss probabilities, provided that the underlying price distribution can be prepared quickly on a quantum device. SI-PQC sharply reduces the classical pre-processing time and the depth of the state-preparation part of these algorithms, and can update its parameters in constant time when market conditions shift, which is crucial for online pricing and for calculating the Greeks (the sensitivities of a derivative's price to market inputs). The authors also design a quantum-assisted procedure for estimating Value at Risk directly from streaming empirical data. Here, simple running averages from classical monitors are used as constraints in a maximum-entropy model, which SI-PQC turns into an approximate quantum version of the real-time loss distribution. Quantum amplitude estimation then yields risk measures that track closely with those computed from the raw data.
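The Value at Risk pipeline can be sketched end to end in classical code. Everything here is an assumption made for illustration: the constraints are taken to be a running mean and variance (for which the maximum-entropy model on the real line is a Gaussian), the loss stream is made up, and a plain cumulative sum stands in for quantum amplitude estimation.

```python
import math
from statistics import NormalDist

class RunningMoments:
    """Welford's online update for the mean and (population) variance of a stream."""
    def __init__(self):
        self.n, self.mean, self.m2 = 0, 0.0, 0.0

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def variance(self):
        return self.m2 / self.n if self.n else 0.0

def max_entropy_grid(mean, std, n_points=256, width=6.0):
    """Discretized Gaussian: the max-entropy model for a fixed mean and variance."""
    lo, hi = mean - width * std, mean + width * std
    xs = [lo + (hi - lo) * i / (n_points - 1) for i in range(n_points)]
    weights = [math.exp(-0.5 * ((x - mean) / std) ** 2) for x in xs]
    total = sum(weights)
    return xs, [w / total for w in weights]

def value_at_risk(xs, probs, level=0.95):
    """Smallest grid loss whose cumulative probability reaches the level."""
    cumulative = 0.0
    for x, p in zip(xs, probs):
        cumulative += p
        if cumulative >= level:
            return x
    return xs[-1]

losses = [1.2, -0.4, 0.3, 2.1, -1.0, 0.8, 0.1, -0.6, 1.5, 0.0]  # hypothetical stream
moments = RunningMoments()
for loss_value in losses:
    moments.update(loss_value)            # constant work per new observation

std = math.sqrt(moments.variance)
xs, probs = max_entropy_grid(moments.mean, std)
var_95 = value_at_risk(xs, probs, level=0.95)
reference = NormalDist(moments.mean, std).inv_cdf(0.95)   # closed-form check
```

Only the two running averages change as new data arrives, so the model is refreshed without revisiting the full history; `var_95` should agree with `reference` up to the grid spacing.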

What This Means Going Forward

For non-specialists, the central message is that efficient "data loading" is just as vital to quantum advantage as the speed of the quantum algorithm itself. SI-PQC offers a principled way to bridge this gap by encoding simple, interpretable statistical structure directly into the layout of quantum circuits, while keeping the adjustable part small and flexible. The authors show that this strategy can prepare and learn complex distributions, handle mixtures naturally, and substantially cut end-to-end resource costs in finance-focused applications. If these ideas scale on future hardware, they could help move quantum computing from abstract promise toward practical tools in areas like real-time trading, adaptive machine learning, and even medical diagnostics, wherever rapidly changing statistical patterns must be captured and processed at quantum speed.

Citation: Zhuang, XN., Chen, ZY., Xue, C. et al. Statistics-informed parameterized quantum circuit: towards practical quantum state preparation and learning via maximum entropy principle. npj Quantum Inf 12, 45 (2026). https://doi.org/10.1038/s41534-026-01191-5

Keywords: quantum state preparation, maximum entropy, quantum machine learning, Gaussian mixture models, quantum finance