Clear Sky Science

Overcoming Dimensional Factorization Limits in Discrete Diffusion Models through Quantum Joint Distribution Learning

Why this new twist on AI and quantum matters

Modern AI systems are remarkably good at generating text, images and other data, but they still struggle when many parts of the data are strongly linked together. This paper shows that a major class of generative AI models, called discrete diffusion models, has a built‑in limitation: as data become higher‑dimensional and more correlated, their errors can grow rapidly. The authors propose a new approach that uses quantum computers to learn these complex relationships more faithfully, potentially yielding faster and more flexible generative models than today’s classical techniques.

When breaking things apart breaks what matters

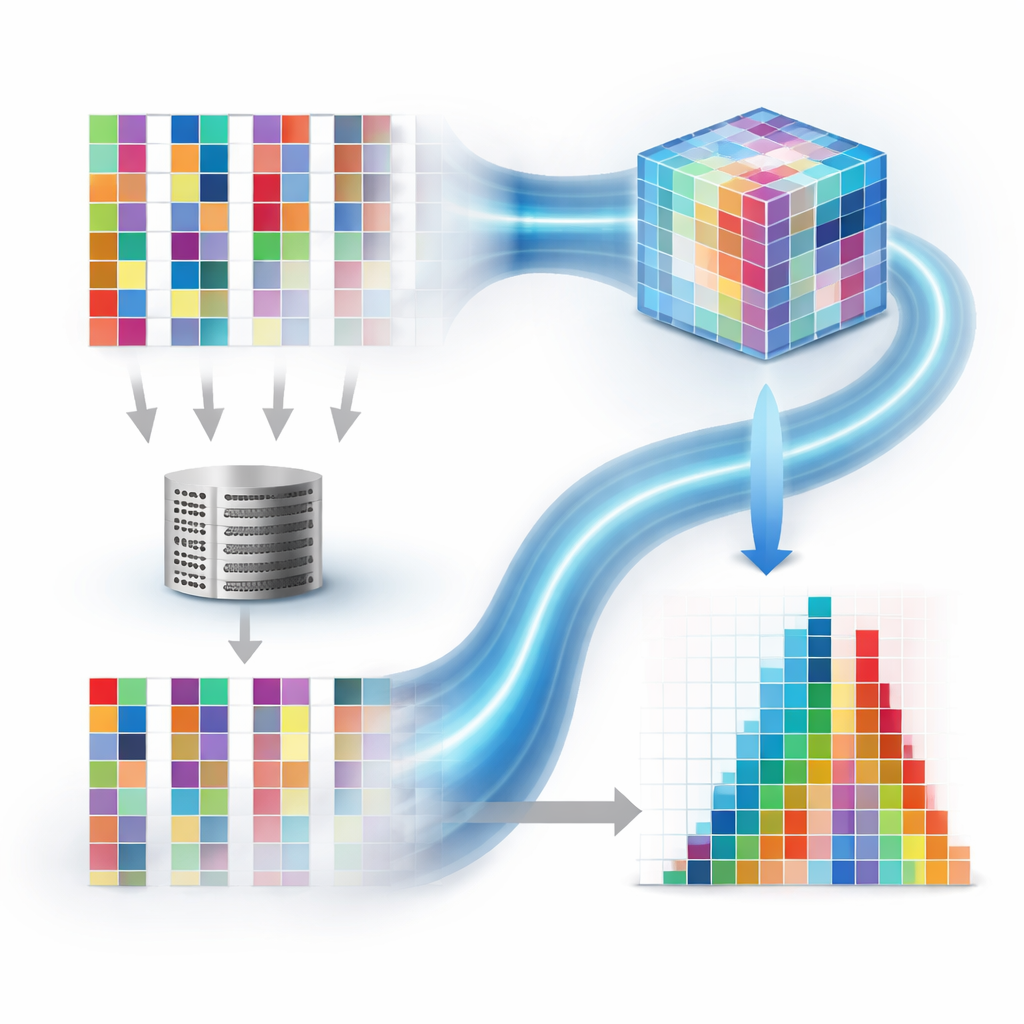

Classical discrete diffusion models work by gradually corrupting data with noise and then learning to reverse this process, step by step, to generate new samples. To keep computations manageable, they treat each dimension—such as each pixel in an image or each symbol in a sequence—as if it changes independently. This "factorization" avoids an exponential explosion in complexity, but it also ignores correlations among dimensions. The authors analyze a worst‑case scenario in which every part of the data is strongly tied to every other part. They prove that, for such data, the mismatch between the true distribution and what a factorized model can learn can grow roughly in proportion to the number of dimensions. In other words, as data get larger and more structured, classical discrete diffusion models can fundamentally fail to capture how pieces of information depend on each other.
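A toy calculation makes the linear growth concrete. The sketch below is an illustration chosen for this summary, not the paper's proof or its exact worst-case construction. It assumes n bits that are always all 0 or all 1, and compares that joint distribution with the product of its single-bit marginals, which is the closest fully factorized distribution in this Kullback-Leibler sense. The function name `factorization_gap` is hypothetical.

```python
import numpy as np

def factorization_gap(n):
    """KL(p || q) in nats for n perfectly correlated bits.

    p puts probability 1/2 on 00...0 and 1/2 on 11...1 (a toy assumption).
    q is the product of p's single-bit marginals.
    """
    states = np.arange(2 ** n)          # each integer encodes one bit string
    p = np.zeros(2 ** n)
    p[0] = 0.5                          # all zeros
    p[-1] = 0.5                         # all ones

    q = np.ones(2 ** n)
    for bit in range(n):
        is_one = ((states >> bit) & 1) == 1
        p_one = p[is_one].sum()         # marginal probability that this bit is 1
        q *= np.where(is_one, p_one, 1.0 - p_one)

    support = p > 0                     # terms with p(x) = 0 contribute nothing
    return float(np.sum(p[support] * np.log(p[support] / q[support])))

# Gap for a few sizes; analytically it equals (n - 1) * ln 2.
gaps = {n: factorization_gap(n) for n in (2, 4, 8)}
```

In this toy case every marginal is a fair coin, so the factorized model spreads its probability evenly over all 2^n strings, and the gap grows by ln 2 with each added bit.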

Using quantum states to keep correlations intact

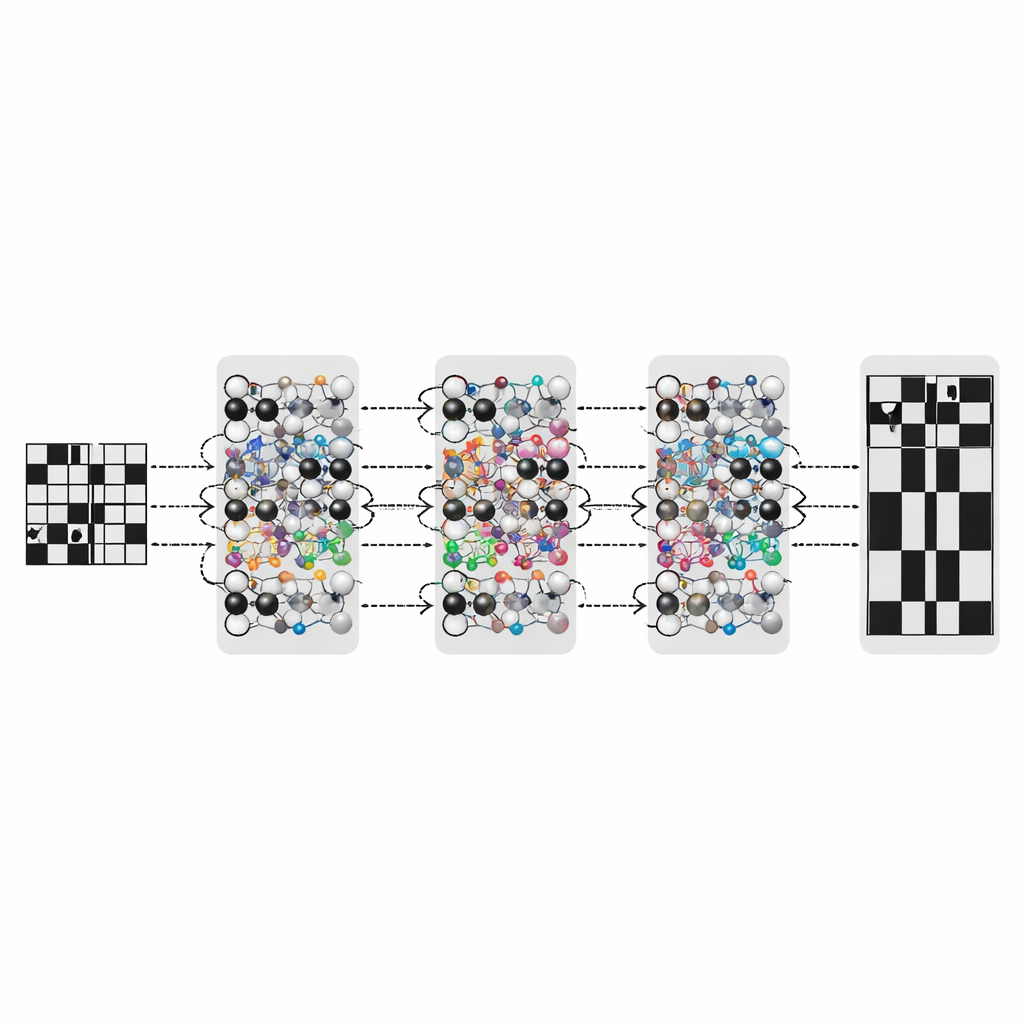

The proposed quantum discrete denoising diffusion probabilistic model (QD3PM) tackles this problem by representing data as quantum states rather than as separate classical variables. In a quantum system, a collection of qubits naturally lives in a very large combined space where joint configurations and correlations are stored together. QD3PM encodes discrete data into this space, applies a controlled "diffusion" process that adds noise through quantum channels, and then learns to reverse that process with a trainable quantum circuit. Crucially, the model operates on the full joint state, so the interdependence among dimensions is preserved throughout diffusion and denoising. Using a version of Bayes’ rule adapted to quantum theory, the authors derive how to compute the exact "posterior" quantum state that should guide training, and they design circuits that physically implement this update.
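The idea of adding noise to a joint state can be sketched with ordinary matrices. The example below is a minimal classical simulation under stated assumptions: it encodes a distribution as a diagonal density matrix and uses a global depolarizing channel as the noise step. The summary does not say which channels QD3PM uses, so the channel choice and the names `encode_distribution` and `depolarize` are illustrative only.

```python
import numpy as np

def encode_distribution(p):
    """Diagonal density matrix rho = sum_x p(x) |x><x| for a distribution p."""
    return np.diag(np.asarray(p, dtype=float))

def depolarize(rho, t):
    """Assumed noise step: mix rho with the maximally mixed state, 0 <= t <= 1."""
    d = rho.shape[0]
    return (1.0 - t) * rho + t * np.eye(d) / d

# Two qubits holding perfectly correlated data: 00 or 11, each with probability 1/2.
rho0 = encode_distribution([0.5, 0.0, 0.0, 0.5])
rho_t = depolarize(rho0, 0.4)

# Probabilities of reading out 00, 01, 10, 11 after the noise step.
probs = np.real(np.diag(rho_t))
```

Because the noise acts on the whole 4-by-4 state rather than on each qubit's marginal, the noisy state still favors the matching outcomes 00 and 11 over 01 and 10.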

From many slow steps to a single quantum leap

Standard diffusion models usually need many rounds of gradual denoising to turn pure noise into a realistic sample, which makes them computationally expensive. QD3PM is first described in this familiar iterative way, but the authors then show how to train the same quantum circuitry to jump directly from noise to clean data in one step. They do this by having the quantum circuit learn the distribution of original data conditioned on a noisy input, and then carefully composing this learned mapping with the quantum diffusion and update rules. Thanks to properties of quantum operations and measurements, the final sampling only depends on certain diagonal elements of the quantum state, which allows the procedure to be simplified without changing the observable outcomes. This yields a one‑shot generator that, in principle, can be much faster than classical multi‑step diffusion while still modeling the full joint distribution.
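The claim that sampling depends only on diagonal elements follows from the standard measurement rule: in the computational basis, outcome probabilities are the diagonal of the density matrix. The short check below illustrates that general fact on a two-qubit entangled state. It is not the paper's derivation, and the helper names are hypothetical.

```python
import numpy as np

def measurement_probs(rho):
    """Computational-basis outcome probabilities: the diagonal of rho."""
    return np.real(np.diag(rho))

def drop_coherences(rho):
    """Keep only the diagonal of rho and zero every off-diagonal element."""
    return np.diag(np.diag(rho))

# Bell state (|00> + |11>) / sqrt(2), which has nonzero off-diagonal elements.
bell = np.array([1, 0, 0, 1]) / np.sqrt(2)
rho = np.outer(bell, bell.conj())
rho_diag = drop_coherences(rho)

same_statistics = np.allclose(measurement_probs(rho), measurement_probs(rho_diag))
same_state = np.allclose(rho, rho_diag)
```

Here `same_statistics` is true while `same_state` is false: the two states differ, but a computational-basis measurement cannot tell them apart, which is why off-diagonal terms can be dropped when only the sampled outcomes matter.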

Filling in the blanks without starting over

A practical advantage of QD3PM is how naturally it handles conditional tasks such as inpainting—filling in missing parts of an image given the visible region. Because the model describes the full joint distribution over all dimensions, the authors can condition on known values simply by repeatedly resetting those parts of the data during the denoising steps while allowing the unknown parts to vary. This gently steers the sampling process toward the correct conditional distribution, without changing the circuit or retraining it. In simulations on synthetic datasets that include highly structured "bars and stripes" patterns, QD3PM not only fits the overall distribution more accurately than both classical diffusion models and quantum models that rely on factorization, but also performs robustly under realistic levels of quantum hardware noise and handles conditional generation well.
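The resetting trick can be sketched classically. The code below is a simplified illustration, not the authors' quantum procedure: it resets the known entries to their clean values before every step, and `toy_denoise_step` is a hypothetical stand-in for a trained denoiser on data whose bits are all equal. The loop structure is the point; the denoiser itself would be replaced by the learned model.

```python
import numpy as np

def inpaint(denoise_step, known_mask, known_values, num_steps, rng):
    """Run an unconditional denoiser while repeatedly resetting known entries."""
    known_mask = np.asarray(known_mask, dtype=bool)
    known_values = np.asarray(known_values)
    x = rng.integers(0, 2, size=known_mask.shape)   # start from random bits
    for step in reversed(range(num_steps)):
        x = np.where(known_mask, known_values, x)   # reset the known entries
        x = denoise_step(x, step, rng)              # unchanged, unconditional step
    return np.where(known_mask, known_values, x)

def toy_denoise_step(x, step, rng):
    """Hypothetical denoiser for all-equal bit strings:
    copy the value of one randomly chosen position into another."""
    n = x.size
    target = rng.integers(n)
    source = (target + rng.integers(1, n)) % n      # any position except target
    out = x.copy()
    out[target] = x[source]
    return out

rng = np.random.default_rng(0)
# Bit 0 is known to be 1; the values given for the unknown bits are ignored.
sample = inpaint(toy_denoise_step, [True, False, False], [1, 0, 0],
                 num_steps=1000, rng=rng)
```

The denoiser never sees the mask. Conditioning enters only through the reset, so in this toy the unknown bits drift toward the value of the known bit without any retraining.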

What the results mean going forward

Taken together, the analysis and experiments show that treating dimensions independently is a serious bottleneck for discrete diffusion models when data are strongly correlated. By instead using quantum states to learn joint distributions directly, QD3PM avoids this limitation and can, in theory, match complex target distributions perfectly in cases where classical factorized approaches cannot. The work also demonstrates how quantum generative models can offer not just raw expressive power, but also practical benefits like faster one‑step sampling and flexible conditional inference without retraining. While current demonstrations are limited to relatively small systems that can be simulated on classical computers, the framework provides a concrete roadmap for how emerging quantum hardware could one day enhance the core machinery of generative AI.

Citation: Chen, C., Zhao, Q., Zhou, M. et al. Overcoming Dimensional Factorization Limits in Discrete Diffusion Models through Quantum Joint Distribution Learning. npj Quantum Inf 12, 49 (2026). https://doi.org/10.1038/s41534-026-01188-0

Keywords: quantum generative models, diffusion models, joint distribution learning, high-dimensional correlations, conditional generation