Clear Sky Science

A multimodal framework for fatigue driving detection via feature fusion of vision and tactile information

Why Staying Awake at the Wheel Matters

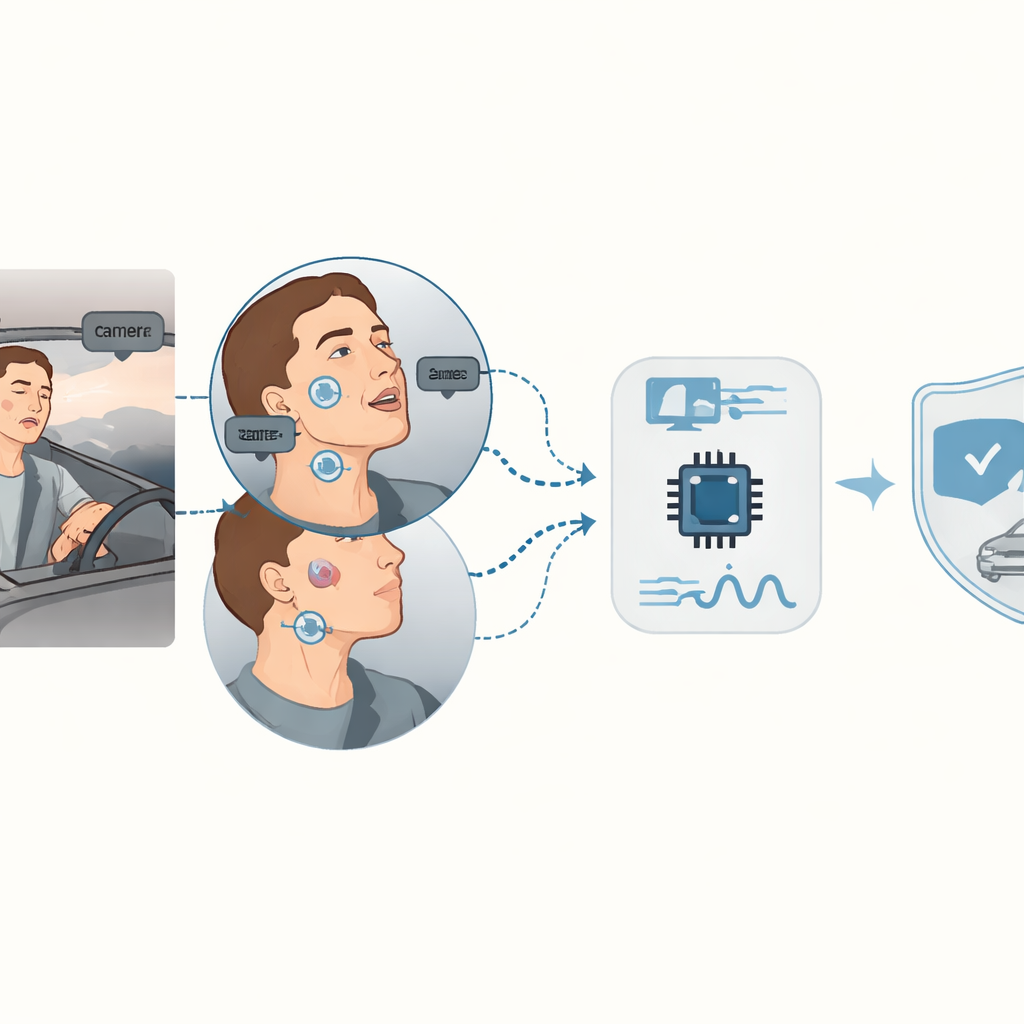

Long drives, late nights, and busy schedules mean many people get behind the wheel when they are too tired. Fatigue quietly slows reaction time and blurs attention, contributing to a large share of serious traffic accidents every year. This study introduces a new in-car monitoring system that watches both a driver’s face and subtle pressure changes on the skin to detect drowsiness earlier and more reliably than today’s camera-only or sensor-only solutions.

Two Senses Are Better Than One

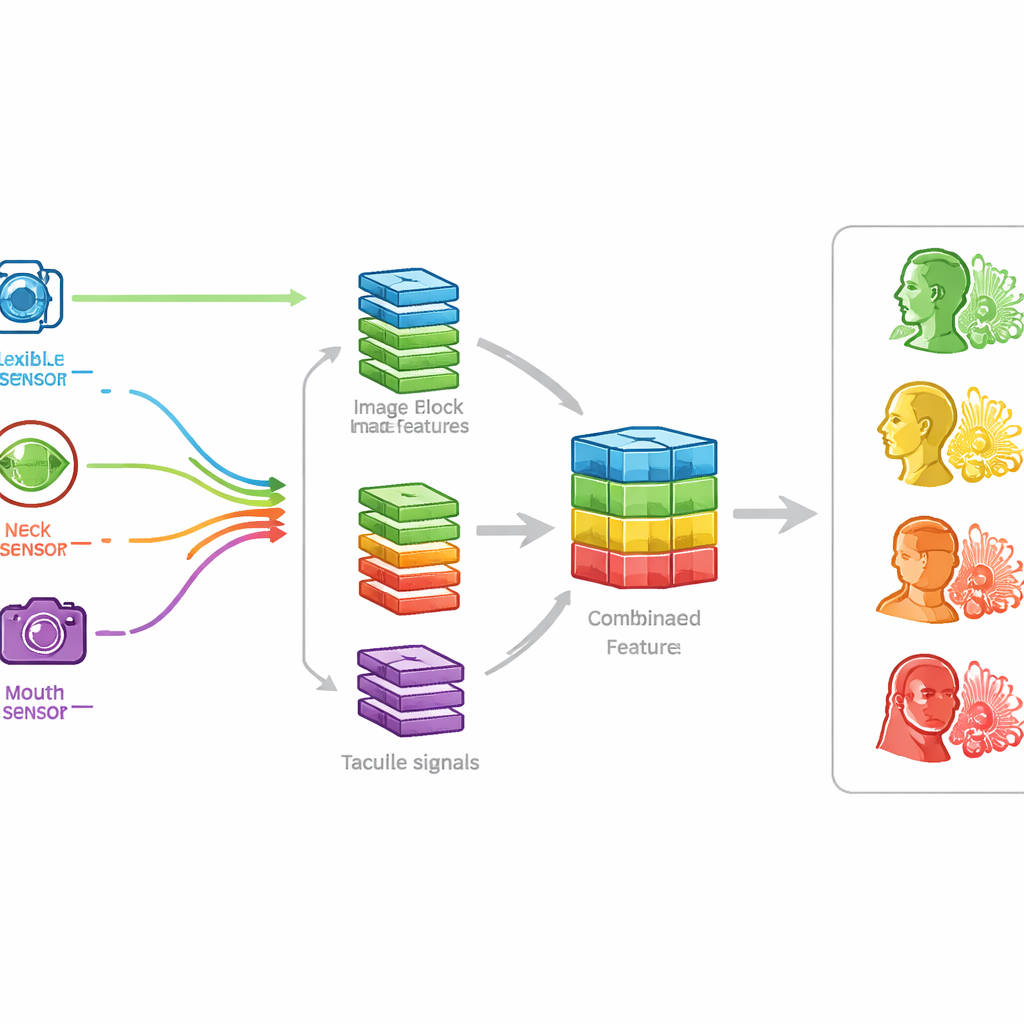

Most current systems try to spot fatigue from a single source of information. Camera-based tools look for clues such as drooping eyelids, long blinks, and yawns, but they struggle at night, in glare, or when faces are partly hidden by glasses or masks. Other approaches rely on electrical signals from the body or bulky wearables, which can be uncomfortable and noisy. The research team instead mimics how the human brain mixes touch and sight: their system combines video of the driver’s face with gentle “tactile” readings from soft, skin-friendly patches placed near the eyes, mouth, and neck, then lets an artificial intelligence model judge whether the driver is alert or drifting toward sleep.

Soft Sensors That Feel What Cameras Miss

At the heart of the system are flexible pressure sensors made from a lightweight, porous plastic blended with a conductive polymer shaped into tiny wormlike structures. This sponge-like material compresses easily and changes its electrical behavior in response to very small presses and bends. When glued lightly to the skin, one patch near the eyes responds when eyelids close, another at the neck feels the head nodding, and a third near the mouth senses the wide opening and stretching that comes with yawning. Tests showed that these sensors react in a few thousandths of a second, can detect extremely gentle pressures, and keep working reliably even after tens of thousands of bending and pressing cycles, which matters for something that might be worn every day in a moving car.

Teaching the System to Read Tiredness

To teach the system what fatigue looks like, the researchers built a dataset that paired short video clips of five volunteers with matching signals from the three skin patches. They recorded four typical states: normal driving, eyes closing, head nodding, and yawning, under both bright daylight and dim parking-garage lighting. A modern image-recognition network learned to extract key patterns from the face images, while a second network converted the sensor readings into compact signatures. These two streams of information were then fused into a single combined representation, allowing the model to see when touch and sight agreed on signs of tiredness and to rely more heavily on the sensors when the video was dark or degraded.
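The idea of fusing the two streams can be illustrated with a small sketch. This is not the study's network: it uses plain weighted concatenation as a stand-in for the learned fusion, and the `visual_quality` score and the weighting rule are assumptions made up for illustration. It only shows how a combined vector might lean on the tactile features when the video is dark or degraded.

```python
from typing import List


def fuse_features(visual: List[float],
                  tactile: List[float],
                  visual_quality: float) -> List[float]:
    """Concatenate two feature vectors, scaling each by a reliability weight.

    visual_quality is a hypothetical score in [0, 1] (for example, derived
    from frame brightness). It is an illustrative assumption, not a quantity
    defined in the study.
    """
    if not 0.0 <= visual_quality <= 1.0:
        raise ValueError("visual_quality must lie in [0, 1]")

    # Assumed weighting rule: the camera features fade out as video quality
    # drops, while the tactile features gain weight (from 0.5 up to 1.0).
    w_visual = visual_quality
    w_tactile = 1.0 - 0.5 * visual_quality

    scaled_visual = [w_visual * v for v in visual]
    scaled_tactile = [w_tactile * t for t in tactile]
    return scaled_visual + scaled_tactile


# Example: the same features under good and very poor lighting.
good_light = fuse_features([1.0, 2.0], [4.0], visual_quality=1.0)
dark_scene = fuse_features([1.0, 2.0], [4.0], visual_quality=0.0)
```

In a real system the weights would be learned from data rather than set by a fixed rule, and the fused vector would be passed to a classifier that outputs one of the four states.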

From Momentary Signals to Actionable Warnings

Once the system could recognize the four basic states with about 98 percent accuracy in controlled tests, the team went a step further: turning frame-by-frame judgments into practical advice for drivers. They defined simple rules based on how often someone blinked for too long, nodded, or yawned per minute and converted those counts into a three-level fatigue score: normal, mildly tired, or severely fatigued. The system runs in real time on a compact in-car computer, continuously updating the driver’s score and triggering a gentle prompt for a break at mild levels or a strong stop-now alert when severe fatigue is detected. It maintained high performance across different ages, skin tones, facial hair, masks, and even under poor lighting or motion shake, showing that the combined camera-and-touch approach is robust in realistic conditions.
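The step from per-minute event counts to a three-level score can be sketched as below. The summary does not give the actual rules, so the thresholds, the simple sum of events, and the names `MinuteCounts`, `fatigue_level`, and `alert_for` are all hypothetical; the sketch only shows the general shape of such a rule.

```python
from dataclasses import dataclass


@dataclass
class MinuteCounts:
    """Events detected during the last minute."""
    long_blinks: int
    nods: int
    yawns: int


def fatigue_level(counts: MinuteCounts,
                  mild_threshold: int = 3,
                  severe_threshold: int = 6) -> str:
    """Map event counts to 'normal', 'mild', or 'severe'.

    The thresholds and the unweighted sum are illustrative assumptions,
    not values reported in the study.
    """
    total = counts.long_blinks + counts.nods + counts.yawns
    if total >= severe_threshold:
        return "severe"
    if total >= mild_threshold:
        return "mild"
    return "normal"


def alert_for(level: str) -> str:
    """Choose the driver prompt that goes with each fatigue level."""
    prompts = {
        "normal": "none",
        "mild": "suggest a break",
        "severe": "stop now",
    }
    return prompts[level]


# Example: two long blinks and one nod in the last minute.
level = fatigue_level(MinuteCounts(long_blinks=2, nods=1, yawns=0))
prompt = alert_for(level)
```

A deployed version would likely weight the three event types differently and smooth the score over several minutes so that a single yawn does not trigger an alert.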

What This Means for Everyday Driving

For non-specialists, the takeaway is straightforward: by blending what a camera sees with what soft skin sensors feel, this study delivers a smarter “co-pilot” that notices subtle signs of drowsiness before they turn into disasters. The technology avoids many weaknesses of camera-only systems at night and of uncomfortable medical wearables, while remaining fast and efficient enough to run inside a car. Although larger, real-world road tests are still needed, this multimodal framework points toward future vehicles that quietly monitor driver alertness in the background and step in with timely warnings, helping reduce fatigue-related crashes and making long trips safer for everyone on the road.

Citation: Li, K., Yue, W., Shin, DB. et al. A multimodal framework for fatigue driving detection via feature fusion of vision and tactile information. npj Flex Electron 10, 40 (2026). https://doi.org/10.1038/s41528-026-00543-7

Keywords: driver fatigue detection, in-car safety monitoring, flexible skin sensors, multimodal AI, drowsy driving prevention