Clear Sky Science · en

Physics-informed Hamiltonian learning for large-scale optoelectronic property prediction

Why this matters for better solar cells and LEDs

Designing next-generation solar cells, LEDs, and other light-based technologies increasingly depends on simulating how electrons move through complex materials. But the most accurate quantum-mechanical calculations are so computationally expensive that they become impractical for realistic, disordered crystals with tens of thousands of atoms. This paper introduces a new approach, called HAMSTER, that blends tried-and-true physics with machine learning to make those large, realistic simulations both feasible and reliable.

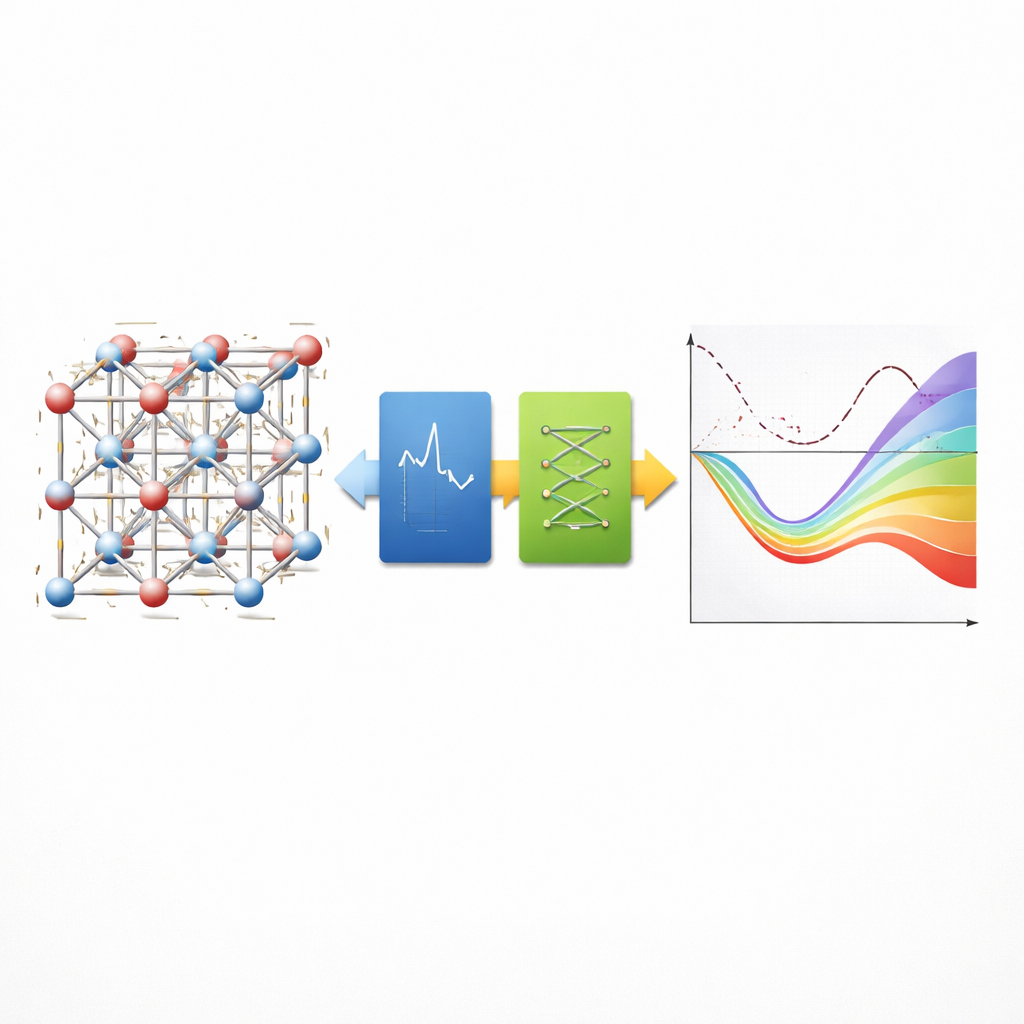

A shortcut that still respects the physics

At the heart of the work is the challenge of predicting the Hamiltonian, the central mathematical object that encodes how electrons behave in a material. If you know the Hamiltonian, you can compute key quantities like band gaps, which determine how a material absorbs and emits light. Purely data-driven neural networks can learn this mapping from atomic positions to Hamiltonians, but they usually require huge training sets and offer little insight into what the model is doing. The authors instead start from a well-understood approximate physics model called tight binding, which already captures the main interactions between atoms. The machine learning component is then asked to learn only the remaining differences between this approximation and high-accuracy quantum calculations, drastically reducing the learning burden.
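To make the idea concrete, here is a minimal sketch of how a band gap follows from a tight-binding Hamiltonian, and how a small correction on top of it shifts the result. It is a toy two-atom chain, not the authors' model: the on-site energies, the hopping value, and the `delta` corrections are invented for illustration, and in HAMSTER the corrections would come from a trained model rather than being typed in by hand.

```python
import cmath
import math

def bands(k, e_a, e_b, t):
    """Two band energies of a toy diatomic chain at wavevector k (lattice constant 1)."""
    # Off-diagonal Bloch element: hopping inside the cell plus hopping to the next cell.
    off = t * (1 + cmath.exp(-1j * k))
    # Closed-form eigenvalues of the 2x2 Hermitian matrix [[e_a, off], [conj(off), e_b]].
    mean = 0.5 * (e_a + e_b)
    half = math.sqrt((0.5 * (e_a - e_b)) ** 2 + abs(off) ** 2)
    return mean - half, mean + half

def band_gap(e_a, e_b, t, n_k=200):
    """Lowest upper-band energy minus highest lower-band energy over a k grid."""
    ks = [-math.pi + 2 * math.pi * i / n_k for i in range(n_k)]
    lower, upper = zip(*(bands(k, e_a, e_b, t) for k in ks))
    return min(upper) - max(lower)

# Baseline tight-binding parameters (illustrative values, in eV).
tb = {"e_a": 1.0, "e_b": -1.0, "t": -0.5}

# Hypothetical learned corrections; in practice these would be model predictions.
delta = {"e_a": 0.05, "e_b": -0.05, "t": 0.02}

corrected = {name: tb[name] + delta[name] for name in tb}

gap_tb = band_gap(**tb)
gap_corrected = band_gap(**corrected)
print(f"tight-binding gap: {gap_tb:.3f} eV, corrected gap: {gap_corrected:.3f} eV")
```

The point of the split is that the baseline parameters already give a sensible band structure, so the learned part only has to supply small adjustments.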

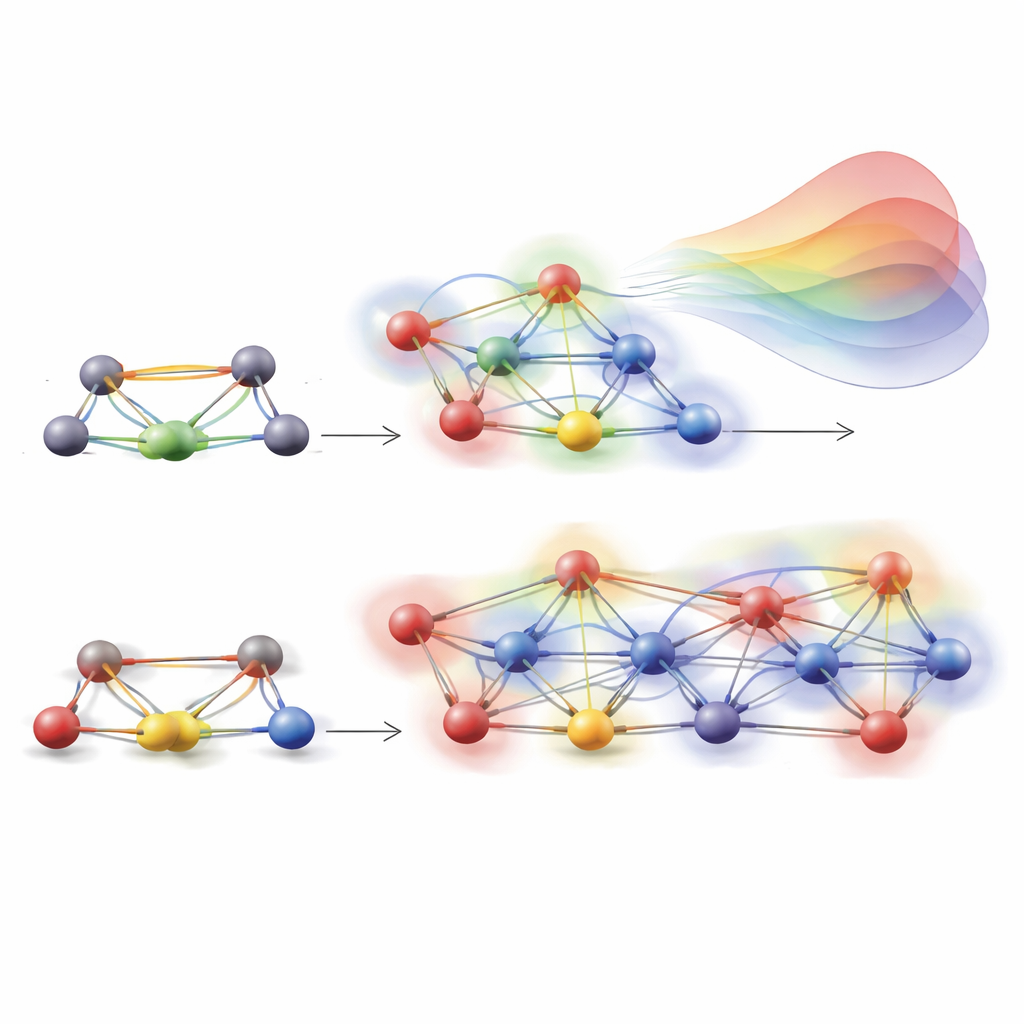

Teaching the model to feel its surroundings

A key innovation is how HAMSTER encodes the “environment” around each pair of atoms. In real materials, atoms vibrate and shift as temperature rises, and nearby atoms subtly change how electrons move between a given pair of sites. Traditional tight-binding models largely ignore these multi-atom influences. HAMSTER represents the local surroundings of two interacting atoms using a compact descriptor that reflects which neighbors lie within a chosen distance, how far away they are, and how their orbitals are oriented. A smooth cutoff ensures that distant atoms contribute less. A simple radial-basis machine learning model then uses these descriptors to add small corrections to the tight-binding Hamiltonian elements, focusing squarely on the missing environmental effects instead of relearning basic physics from scratch.
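The ingredients described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not HAMSTER's actual descriptor: it uses a cosine cutoff, measures neighbor distances from the midpoint of the atom pair, and leaves out orbital orientation entirely. The function names, the Gaussian `centers`, and the weight values are all hypothetical.

```python
import math

def smooth_cutoff(r, r_cut):
    """Cosine cutoff: 1 at r = 0, falling smoothly to 0 at r = r_cut."""
    if r >= r_cut:
        return 0.0
    return 0.5 * (math.cos(math.pi * r / r_cut) + 1.0)

def pair_descriptor(atom_i, atom_j, neighbors, r_cut, centers, width):
    """Histogram-like summary of neighbor distances around the i-j pair (assumed form)."""
    midpoint = [(a + b) / 2 for a, b in zip(atom_i, atom_j)]
    desc = [0.0] * len(centers)
    for pos in neighbors:
        r = math.dist(pos, midpoint)
        weight = smooth_cutoff(r, r_cut)
        if weight == 0.0:
            continue  # neighbor lies outside the chosen distance
        for m, mu in enumerate(centers):
            desc[m] += weight * math.exp(-((r - mu) ** 2) / (2 * width ** 2))
    return desc

def rbf_correction(desc, ref_descs, weights, length):
    """Radial-basis model: weighted sum of Gaussians centered on reference descriptors."""
    total = 0.0
    for ref, w in zip(ref_descs, weights):
        d2 = sum((a - b) ** 2 for a, b in zip(desc, ref))
        total += w * math.exp(-d2 / (2 * length ** 2))
    return total

# Toy geometry: one neighbor close to the pair, one beyond the cutoff.
atom_i, atom_j = (0.0, 0.0, 0.0), (2.0, 0.0, 0.0)
neighbors = [(1.0, 1.5, 0.0), (1.0, 0.0, 9.0)]
desc = pair_descriptor(atom_i, atom_j, neighbors, r_cut=5.0,
                       centers=[1.0, 2.0, 3.0], width=0.5)

h_tb = -0.5  # illustrative tight-binding hopping element (eV)
# A single reference environment with a made-up weight stands in for a trained model.
h_corrected = h_tb + rbf_correction(desc, ref_descs=[desc], weights=[0.03], length=1.0)
print(desc, h_corrected)
```

Because the cutoff goes to zero smoothly, an atom drifting across the cutoff radius changes the descriptor gradually instead of causing a jump in the predicted Hamiltonian element.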

From simple semiconductors to complex perovskites

To validate the idea, the team first applies HAMSTER to gallium arsenide, a well-studied semiconductor, and shows that it can reach near–first-principles accuracy in predicting energy levels using only a handful of training structures. They then tackle a far tougher target: halide perovskites such as CsPbBr3 and MAPbBr3, promising materials for solar cells and light emitters that are notoriously difficult to model because of their soft lattices and strong thermal fluctuations. For CsPbBr3, HAMSTER trained on molecular dynamics snapshots at a single temperature reproduces detailed quantum calculations across a wide temperature range, keeping errors in the band gap and energy levels within a few hundredths of an electron volt. It also captures how the band gap fluctuates in time as atoms move, a critical ingredient for realistic device predictions.

Reaching truly large systems

Because HAMSTER is far cheaper than full quantum calculations, the authors can scale up to simulation boxes containing tens of thousands of atoms, sizes that are impractical for standard density functional theory. For CsPbBr3, they combine a machine-learned force field for atomic motion with HAMSTER for the electronic structure, and analyze a 16 × 16 × 16 supercell holding more than 20,000 atoms. In these huge systems, short-term band-gap fluctuations average out, revealing a clean temperature trend that agrees well with experimental measurements. A similar strategy for MAPbBr3 allows them to study cells nearing 50,000 atoms and to map how both system size and temperature influence the band gap, again in good qualitative accord with experiments.
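A quick back-of-the-envelope check helps put these sizes in perspective. The sketch below assumes the supercell is built from the five-atom CsPbBr3 unit (one Cs, one Pb, three Br), which is consistent with the "more than 20,000 atoms" quoted above. The second function is only a statistical toy, assuming independent local fluctuations with an invented spread, to illustrate why averaging over a larger box smooths the signal; it is not a model of the actual simulations.

```python
import random
import statistics

ATOMS_PER_CELL = 5  # assumption: one Cs, one Pb, three Br per repeating unit

def atoms_in_supercell(nx, ny, nz, atoms_per_cell=ATOMS_PER_CELL):
    """Total atom count for an nx x ny x nz repetition of the unit cell."""
    return nx * ny * nz * atoms_per_cell

def spread_of_mean(n_regions, n_snapshots=200, sigma=0.1, seed=0):
    """Toy model: spread of a box-averaged quantity built from independent local values."""
    rng = random.Random(seed)
    means = [
        statistics.fmean(rng.gauss(0.0, sigma) for _ in range(n_regions))
        for _ in range(n_snapshots)
    ]
    return statistics.pstdev(means)

print(atoms_in_supercell(16, 16, 16))           # 16^3 cells times 5 atoms each
print(spread_of_mean(8), spread_of_mean(512))   # larger boxes fluctuate less
```

In this toy picture the spread shrinks roughly with the square root of the number of independent regions, which is the intuition behind the cleaner temperature trend seen in the largest boxes.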

What this means for future materials design

Overall, the study shows that weaving physical knowledge into machine learning is a powerful way to bridge the gap between simple models and fully first-principles simulations. HAMSTER keeps the interpretability of a Hamiltonian-based description while achieving the accuracy and versatility needed to handle thermal effects, chemical substitutions, and realistic length scales. For non-specialists, the takeaway is that this kind of physics-informed learning could become a practical workhorse for exploring new light-harvesting and light-emitting materials on the computer, guiding experiments toward the most promising candidates without the prohibitive cost of traditional quantum calculations.

Citation: Schwade, M., Zhang, S., Vonhoff, F. et al. Physics-informed Hamiltonian learning for large-scale optoelectronic property prediction. Nat Commun 17, 2652 (2026). https://doi.org/10.1038/s41467-026-70865-7

Keywords: halide perovskites, machine learning in materials science, electronic structure, optoelectronic properties, tight-binding Hamiltonian