Clear Sky Science

Aligned representation of visual and tactile motion directions in hMT+/V5 and fronto-parietal regions

Why this matters for everyday life

Swatting a mosquito on your arm feels effortless, but your brain is quietly solving a hard problem: it must combine what you see with what you feel to figure out which way something is moving across your skin and how to react. This study asks where in the human brain sight and touch are brought into alignment, and how the brain turns very different kinds of signals—light on the eyes and pressure on the skin—into a single, shared sense of motion in the world around us.

Two ways of knowing where things move

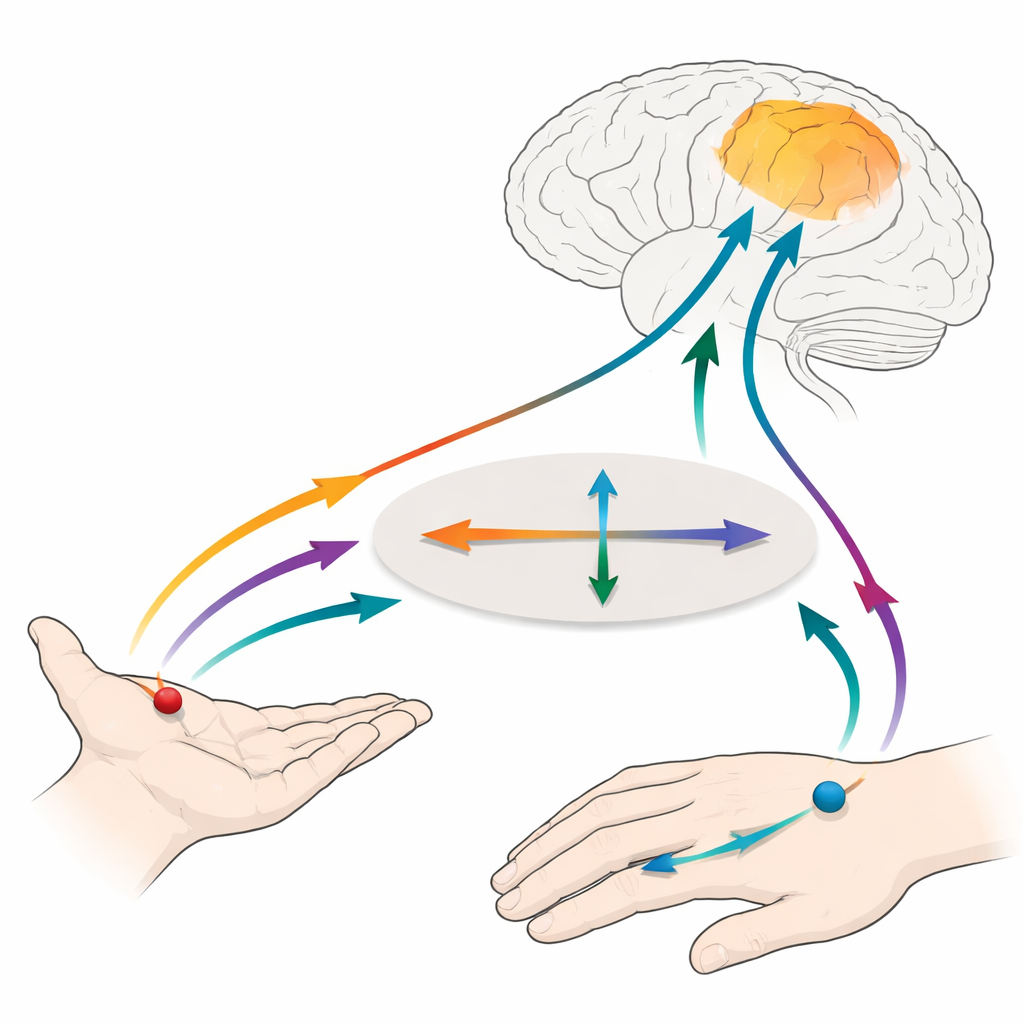

Vision and touch start out speaking different “spatial languages.” Visual motion is first mapped relative to the eyes: which part of the retina is stimulated. Tactile motion is mapped relative to the skin: which part of the hand is brushed. Yet our actions are guided by where things are in the external world, not just on our eyes or skin. The brain must therefore translate these body-based maps into a common, world-based frame so that a moving object seen near the hand and a matching sensation on the skin are treated as the same event.

A clever test using moving dots and moving brushes

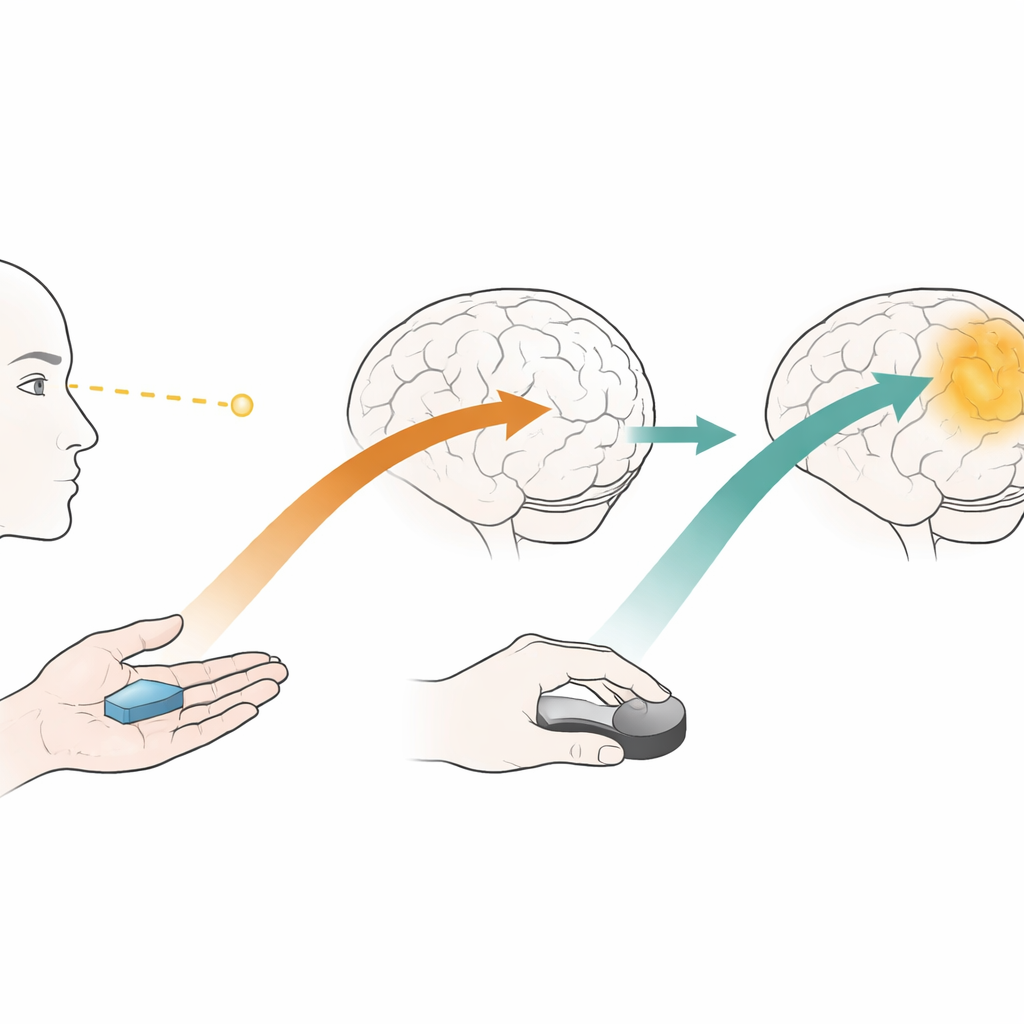

The researchers used functional MRI to monitor brain activity while people watched moving dot patterns and felt a brush sweep across their right hand. They changed the posture of the hand (sometimes palm-up, sometimes rotated) so that the same physical motion on the skin could point in different directions in external space. By comparing patterns of brain activity across many small measurement volumes (voxels), they asked whether a region could tell motion directions apart, and whether its “code” for direction stayed consistent when motion was defined on the skin versus out in space.
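The logic of the posture manipulation can be illustrated with a small sketch. The angle convention, the function names, and the 90-degree rotation are assumptions made for this illustration and are not taken from the study: a skin direction of 0 degrees stands for the pinky-to-thumb axis, 90 degrees for the finger-to-wrist axis, and the hand's rotation is simply added to get the direction in external space.

```python
def skin_to_external(skin_direction_deg, hand_rotation_deg):
    """Convert a direction on the skin into a direction in external space.

    Assumed convention (hypothetical): the hand's in-plane rotation is
    added to the skin-based direction, and angles wrap at 360 degrees.
    """
    return (skin_direction_deg + hand_rotation_deg) % 360


def external_axis(direction_deg):
    """Collapse a direction onto the axis it lies along in external space."""
    d = direction_deg % 180
    if d == 0:
        return "horizontal"
    if d == 90:
        return "vertical"
    return "oblique"


# The same brush stroke along the pinky-to-thumb axis (0 degrees on the skin)
# lies along different external axes depending on hand posture.
print(external_axis(skin_to_external(0, 0)))   # horizontal
print(external_axis(skin_to_external(0, 90)))  # vertical
```

Because one skin direction maps onto two external directions across postures, the two descriptions of the same stroke can be pulled apart and tested separately.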

A visual motion hub that also feels motion

One central target was hMT+/V5, a patch of visual cortex long known for processing motion. The team first confirmed that this area lit up not only for moving visual dots but also when the hand was brushed, and that it could distinguish motion directions in both senses. This was true for subregions known as MT and MST as well. In contrast, primary touch areas on the left side of the brain responded strongly to tactile motion but not to visual motion, consistent with their classic role as a detailed map of the body surface.

From skin-based to world-based motion

The key question was how these areas handled different “reference frames.” In primary touch cortex, motion along the pinky-to-thumb axis and motion along the finger-to-wrist axis were most clearly separated when defined purely on the skin, showing a dominant body-based code. In right hMT+/V5, however, tactile directions were more cleanly distinguished when defined relative to the outside world (horizontal versus vertical in space), regardless of hand posture. Crucially, only in right hMT+/V5 could a pattern classifier trained on responses to visual motion correctly predict tactile motion directions, and this cross-sense decoding worked only when touch was described in external coordinates. Whole-brain analyses showed that matching visual and tactile motion in this way also engaged a network of right parietal and frontal regions linked to spatial attention and movement planning.
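The idea behind cross-sense decoding can be sketched on simulated data. This is not the authors' analysis pipeline: the nearest-centroid classifier, the voxel templates, the noise level, and the constant "tactile offset" are all assumptions chosen for illustration. The simulated region is built to encode external direction, so the outcome holds by construction and only shows how the two labeling schemes are compared.

```python
import random

rng = random.Random(0)  # fixed seed so the simulation is repeatable
N_VOXELS = 20

# Hypothetical voxel templates for the two external motion axes.
TEMPLATES = {
    "horizontal": [1.0 if i % 2 == 0 else 0.0 for i in range(N_VOXELS)],
    "vertical": [0.0 if i % 2 == 0 else 1.0 for i in range(N_VOXELS)],
}
FLIP = {"horizontal": "vertical", "vertical": "horizontal"}


def simulate(label, offset=0.0, noise=0.3):
    """One noisy activity pattern; `offset` mimics a sense-specific shift."""
    return [v + offset + rng.gauss(0.0, noise) for v in TEMPLATES[label]]


def train(patterns, labels):
    """Nearest-centroid 'classifier': the mean pattern for each label."""
    model = {}
    for lab in sorted(set(labels)):
        rows = [p for p, l in zip(patterns, labels) if l == lab]
        model[lab] = [sum(col) / len(rows) for col in zip(*rows)]
    return model


def predict(model, pattern):
    """Return the label whose centroid is closest to the pattern."""
    def sq_dist(c):
        return sum((a - b) ** 2 for a, b in zip(c, pattern))
    return min(model, key=lambda lab: sq_dist(model[lab]))


def accuracy(model, patterns, labels):
    hits = sum(predict(model, p) == l for p, l in zip(patterns, labels))
    return hits / len(labels)


# Train on simulated visual trials only.
visual_labels = ["horizontal", "vertical"] * 20
model = train([simulate(l) for l in visual_labels], visual_labels)

# Simulated tactile trials in two postures. In the rotated posture the
# skin-based label and the external label disagree.
tactile, external_labels, skin_labels = [], [], []
for posture in ("reference", "rotated"):
    for skin_label in ["horizontal", "vertical"] * 10:
        ext = skin_label if posture == "reference" else FLIP[skin_label]
        tactile.append(simulate(ext, offset=0.5))
        external_labels.append(ext)
        skin_labels.append(skin_label)

external_acc = accuracy(model, tactile, external_labels)
skin_acc = accuracy(model, tactile, skin_labels)
print(f"external-frame labels: {external_acc:.2f}")
print(f"skin-frame labels:     {skin_acc:.2f}")
```

In this toy setup the visually trained classifier transfers to touch when tactile trials are labeled in external coordinates and falls to roughly chance when they are labeled in skin coordinates, which is the pattern of results described for right hMT+/V5. The constant offset added to tactile trials keeps the two senses distinguishable without preventing the transfer.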

A shared map that still remembers the sense

Even though right hMT+/V5 carried enough shared information to align motion directions across sight and touch, it did not completely erase the difference between the senses: patterns for visual and tactile motion could still be told apart. The authors argue that this region, together with fronto-parietal partners, acts as a multisensory motion hub. It converts skin-based and eye-based inputs into a partly common, external map of motion directions while preserving which sense provided the information. This flexible coding may help the brain keep track of moving events as our eyes and limbs shift, allowing us to coordinate perception and action smoothly in a busy, dynamic world.

Citation: Shahzad, I., Battal, C., Cerpelloni, F. et al. Aligned representation of visual and tactile motion directions in hMT+/V5 and fronto-parietal regions. Nat Commun 17, 2625 (2026). https://doi.org/10.1038/s41467-026-70537-6

Keywords: multisensory motion, hMT+/V5, visual touch integration, spatial reference frames, brain imaging