Clear Sky Science

Bridging the latency gap with a continuous stream evaluation framework in event-driven perception

Why Faster Robot Vision Matters

Imagine a self-driving car spotting a sudden obstacle or a robot trying to return a speeding ping-pong ball. In these split-second situations, seeing quickly is just as important as seeing clearly. This article explores a new way to judge how fast and reliable cutting-edge "event cameras" really are when they track objects in motion, and shows that the usual lab tests can dramatically overestimate how well these systems will perform in the real world.

From Snapshots to Streams

Most of today’s computer vision systems treat the world like a slideshow. Regular cameras capture images at fixed intervals, and algorithms process one frame at a time. Even when engineers use neuromorphic, or event-based, cameras that sense changes in brightness at microsecond resolution, they often convert that rich, continuous stream back into coarse frames. This frame-based mindset hides a crucial problem: delay. Each time the system waits for the next frame and then processes it, precious milliseconds slip by. In high-speed tasks like autonomous driving or human–robot interaction, that delay means the system is always reacting to the recent past rather than the present.

A New Way to Score Real-Time Vision

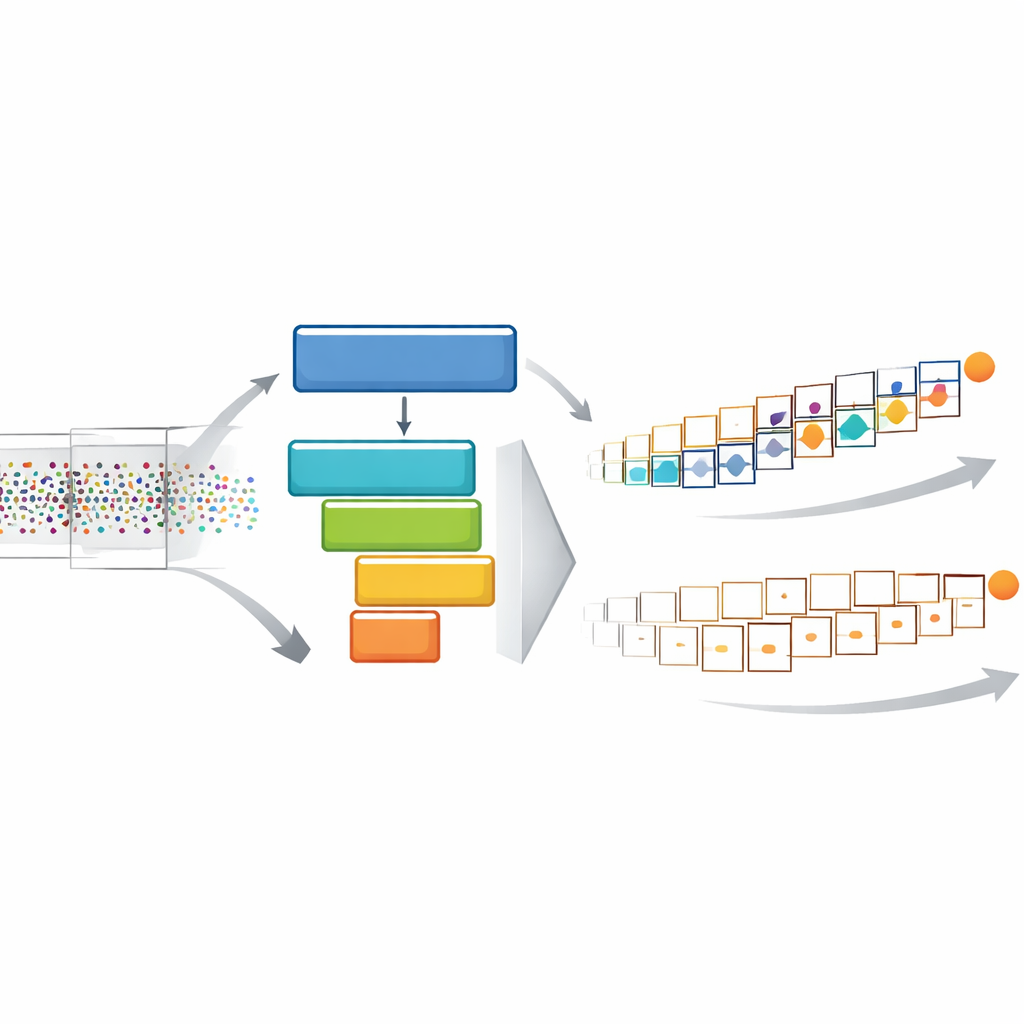

To close this gap between lab scores and real-world needs, the authors introduce a framework called STream-based lAtency-awaRe Evaluation, or STARE. Instead of forcing event data into fixed frames, STARE feeds the model the freshest events as soon as it finishes its previous prediction. This "Continuous Sampling" keeps the model busy and pushes its output rate as high as the hardware allows. At the same time, STARE judges accuracy in a new way: every ground-truth position of a moving object is paired with the most recent prediction available at that instant. If the model is slow, the same stale prediction is reused across many time points, and its measured accuracy drops. This builds the cost of delay directly into the final score.
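The pairing rule described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names, the fixed per-inference latency, the object moving at constant speed along one axis, and the idea that each output describes the object as it was when inference started are all hypothetical choices made for illustration.

```python
from bisect import bisect_right


def match_latest_predictions(gt_times, pred_times, preds, initial=None):
    """Pair each ground-truth time with the newest prediction already available.

    pred_times must be sorted; `initial` is used before any prediction exists.
    """
    matched = []
    for t in gt_times:
        i = bisect_right(pred_times, t)  # predictions finished at or before t
        matched.append(preds[i - 1] if i > 0 else initial)
    return matched


def simulate_continuous_sampling(duration_ms, latency_ms):
    """Output times of a model that restarts the moment it finishes.

    Assumes (hypothetically) a constant latency per inference.
    """
    times = []
    t = latency_ms
    while t <= duration_ms:
        times.append(t)
        t += latency_ms
    return times


def mean_latency_aware_error(latency_ms, duration_ms=100, gt_step_ms=2, speed=1.0):
    """Mean position error when stale predictions are reused between outputs.

    Toy setup: the object moves at `speed` units per ms, ground truth is
    sampled every `gt_step_ms` (2 ms corresponds to 500 annotations per second).
    """
    gt_times = list(range(0, duration_ms + 1, gt_step_ms))
    out_times = simulate_continuous_sampling(duration_ms, latency_ms)
    # Each output is exact for the moment inference started, so it is
    # already `latency_ms` old when it becomes available.
    preds = [speed * (t - latency_ms) for t in out_times]
    matched = match_latest_predictions(gt_times, out_times, preds, initial=0.0)
    errors = [abs(speed * t - p) for t, p in zip(gt_times, matched)]
    return sum(errors) / len(errors)


if __name__ == "__main__":
    for latency in (4, 20):
        print(latency, "ms latency ->", mean_latency_aware_error(latency))
```

In this toy setup both models are perfectly accurate on the data they see, yet the slower one scores worse, because each of its outputs is older on arrival and is reused across more ground-truth instants.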

Building a High-Speed Testbed

Measuring such fine-grained timing requires equally fine-grained data, which existing event-camera datasets lack. They usually record where an object is only a few dozen times per second. The authors therefore created ESOT500, a new dataset where objects are annotated 500 times per second, on both low- and high-resolution event cameras and across varied scenes such as spinning fans, flying birds, and moving vehicles. At this density, the ground truth tracks fast, complex motion closely enough to avoid "temporal aliasing," where slow sampling makes a twisting, speeding path look deceptively simple. ESOT500 thus acts as a stress test for any method that claims to handle rapid, unpredictable dynamics.
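The effect of temporal aliasing can be seen in a small numerical example. This is an illustrative sketch only: the point on a circle rotating 12 times per second, the 20 Hz "sparse" annotation rate, and the function name are assumptions chosen for the demonstration, not values taken from ESOT500.

```python
import math


def sampled_path_length(rotation_hz, sample_hz, duration_s=1.0, radius=1.0):
    """Length of the straight-line path through samples of circular motion."""
    n = int(round(duration_s * sample_hz))
    points = []
    for k in range(n + 1):
        angle = 2.0 * math.pi * rotation_hz * k / sample_hz
        points.append((radius * math.cos(angle), radius * math.sin(angle)))
    # Sum the distances between consecutive annotated positions.
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


if __name__ == "__main__":
    true_length = 2.0 * math.pi * 12  # 12 full turns of a unit circle
    print("true path length:", true_length)
    print("annotated at 20 Hz:", sampled_path_length(12, 20))
    print("annotated at 500 Hz:", sampled_path_length(12, 500))
```

With sparse annotation, the reconstructed path cuts across the circle and comes out far shorter than the real trajectory; at 500 annotations per second it follows the true path closely.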

What Really Happens When Latency Counts

Armed with STARE and ESOT500, the authors re-evaluated a range of state-of-the-art object trackers. When judged under traditional frame-based tests, heavier, more complex models often look best. Under STARE, however, many of these high-accuracy but slow systems lose more than half of their effective accuracy once delay is accounted for. Lighter, faster models suddenly rise to the top, because they provide more frequent, up-to-date predictions. The team confirmed this in a robot ping-pong experiment: a robot used an event camera and a tracker to hit back incoming balls. Moderately faster perception nearly doubled the hit rate, while a slower but offline-strong model performed poorly. In other words, in real time, speed and freshness of information can outweigh raw precision.

Smarter Use of Continuous Streams

Beyond evaluation, the authors explore how to design better systems for continuous vision. One strategy, "Asynchronous Tracking," pairs a slow but careful base model with a smaller, nimble companion that keeps updating the object’s position between the base model’s full passes. This dual setup reuses shared features and exploits the constant flow of events, boosting output rate by nearly 80% and improving latency-aware accuracy by about 60%. A second strategy, "Context-Aware Sampling," watches how many events occur around the tracked object. When the scene is quiet and little changes, the tracker temporarily reuses its last good estimate instead of recomputing, cutting wasted effort. It then reactivates when motion picks up, especially helping in low-activity or sparse-event conditions.
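The idea behind "Context-Aware Sampling" can be sketched in a few lines. This is a hedged illustration, not the authors' method: the event layout `(x, y, t)`, the box format, the `min_events` threshold, the `margin`, and the `run_tracker` callback are all hypothetical names and values introduced here.

```python
def count_events_near(events, box, margin=0):
    """Count events inside the box, optionally enlarged by `margin` pixels.

    events: iterable of (x, y, t) tuples; box: (x_min, y_min, x_max, y_max).
    """
    x0, y0, x1, y1 = box
    return sum(
        1
        for x, y, _t in events
        if x0 - margin <= x <= x1 + margin and y0 - margin <= y <= y1 + margin
    )


def context_aware_step(events, last_box, run_tracker, min_events=50, margin=10):
    """Run the tracker only when enough events occur around the target.

    Returns (box, ran_tracker). When activity is below `min_events`, the
    previous estimate is reused and the tracker call is skipped.
    """
    if count_events_near(events, last_box, margin) < min_events:
        return last_box, False
    return run_tracker(events, last_box), True


if __name__ == "__main__":
    # Stand-in tracker for the demo: shifts the box one pixel to the right.
    def shift_right(_events, box):
        return (box[0] + 1, box[1], box[2] + 1, box[3])

    box = (0, 0, 10, 10)
    quiet = [(5, 5, 0)] * 3
    busy = [(5, 5, 0)] * 80
    print(context_aware_step(quiet, box, shift_right))
    print(context_aware_step(busy, box, shift_right))
```

The threshold trades compute for responsiveness: set too high, the tracker may sleep through slow motion; set too low, it recomputes on noise.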

Closing the Gap Between Lab and Life

For non-specialists, the key message is simple: in fast-moving situations, how quickly a vision system can update its understanding of the world matters as much as how accurate each individual prediction is. By treating the camera’s output as a true stream and by baking delay directly into the score, STARE reveals weaknesses that conventional tests miss and highlights designs that really work under pressure. Together with the ESOT500 dataset and the proposed tracking strategies, this work points toward future robots, vehicles, and interactive machines that do not just see well, but see in time.

Citation: Chu, J., Zhang, R., Yang, C. et al. Bridging the latency gap with a continuous stream evaluation framework in event-driven perception. Nat Commun 17, 2441 (2026). https://doi.org/10.1038/s41467-026-70240-6

Keywords: event cameras, real-time tracking, robotic vision, latency-aware evaluation, neuromorphic perception