Clear Sky Science

Reliable uncertainty estimates in deep learning with efficient Metropolis-Hastings algorithms

Why smarter uncertainty matters

From medical scans to self-driving cars, modern artificial intelligence often makes decisions where being confidently wrong can be dangerous. Standard deep learning systems are excellent at recognizing patterns, but they are notoriously bad at telling us how unsure they are. This paper tackles that gap: it presents new ways to equip deep neural networks with reliable measures of uncertainty, while keeping the heavy computation of traditional Bayesian methods under control.

From guesswork to measured confidence

In everyday deep learning, a model is trained once and then used as-is. It outputs a single best guess, but offers little insight into how trustworthy that guess is. Bayesian neural networks take a different route: instead of fixing one set of model parameters, they treat parameters as random and try to capture a whole distribution of plausible models. Averaging predictions over this collection can reveal both the most likely answer and how much the model should trust itself. The challenge is that accurately sampling from this distribution with gold-standard methods like Hamiltonian Monte Carlo is extremely costly for today’s large networks and data sets.
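To make the averaging idea concrete, here is a minimal sketch rather than the paper's code. It assumes we already have class probabilities from three sampled networks for a single input; the numbers and the helper names `average_predictions` and `predictive_entropy` are hypothetical. The sketch averages the predictions and uses the entropy of that average as one common, though not the only, measure of uncertainty. When the sampled models agree, entropy is low. When they disagree, it rises toward its maximum.

```python
import math
from typing import List, Sequence


def average_predictions(member_probs: Sequence[Sequence[float]]) -> List[float]:
    """Average class probabilities over sampled models (Bayesian model averaging)."""
    n_models = len(member_probs)
    n_classes = len(member_probs[0])
    return [sum(p[c] for p in member_probs) / n_models for c in range(n_classes)]


def predictive_entropy(probs: Sequence[float]) -> float:
    """Entropy (in nats) of the averaged prediction: higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


# Hypothetical outputs of three sampled networks for one 3-class input.
agreeing = [[0.9, 0.05, 0.05], [0.85, 0.1, 0.05], [0.95, 0.03, 0.02]]
disagreeing = [[0.9, 0.05, 0.05], [0.05, 0.9, 0.05], [0.05, 0.05, 0.9]]

for name, members in [("agreeing", agreeing), ("disagreeing", disagreeing)]:
    mean = average_predictions(members)
    best = max(range(len(mean)), key=mean.__getitem__)
    print(name, "prediction:", best, "entropy:", round(predictive_entropy(mean), 3))
```

In the disagreeing case, each individual network looks confident on its own. Only the averaged view exposes that the collection of plausible models cannot settle on an answer.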

Faster sampling without losing the big picture

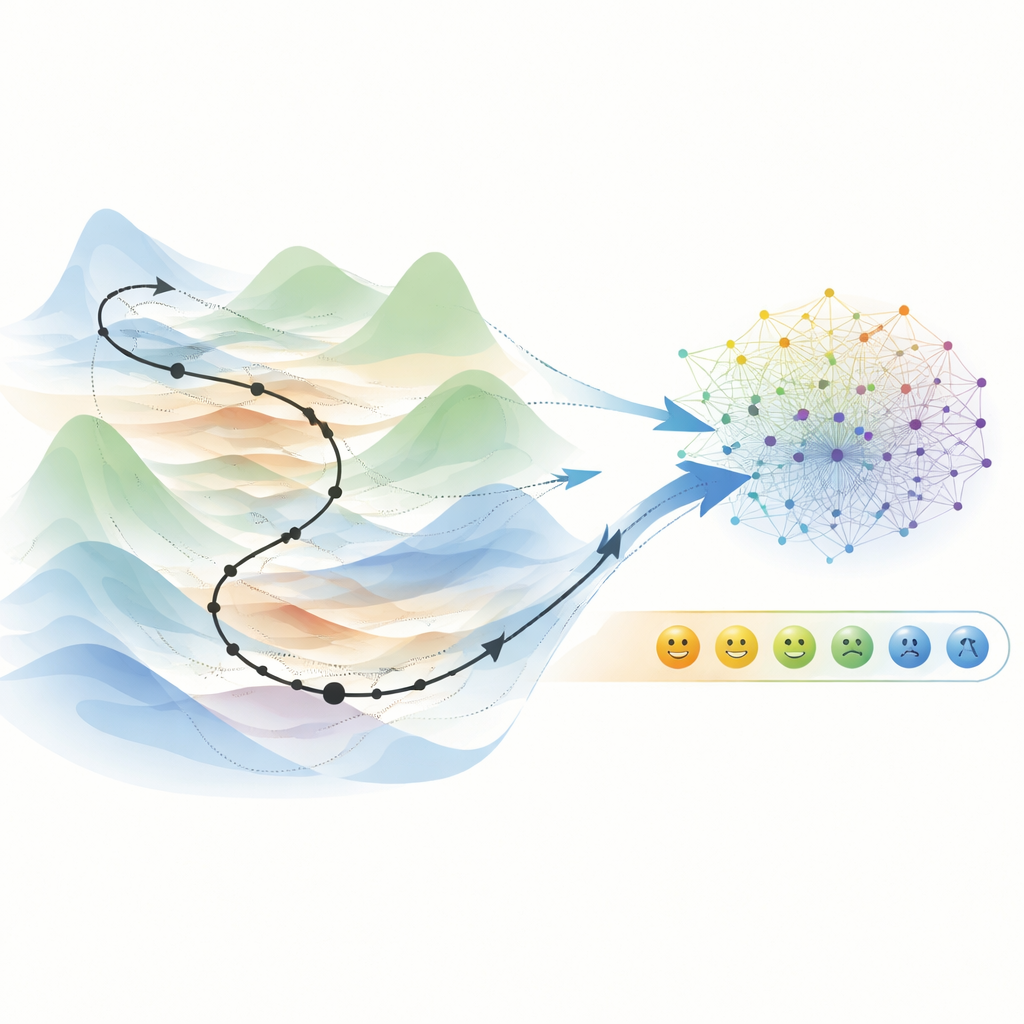

The authors focus on a family of methods called stochastic gradient Hamiltonian Monte Carlo, which already borrows two ideas from standard training, mini-batches and noisy gradients, to speed up sampling. The missing ingredient is a reliable “filter” that decides which tentative parameter updates to keep. In classical Hamiltonian Monte Carlo, this role is played by a Metropolis-Hastings acceptance step, which corrects the errors introduced by simulating the sampler's motion in discrete steps and prevents it from drifting toward the wrong regions of parameter space. Bringing this acceptance step into the noisy, mini-batch world of deep learning is difficult, because it usually demands evaluations on the full data set and can stall progress if acceptance rates are too low.
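For readers who want to see the classical acceptance step in action, the sketch below runs textbook full-batch Hamiltonian Monte Carlo on a toy one-dimensional Gaussian target. It is not the paper's mini-batch GSGHMC test. The function names (`log_prob`, `grad_log_prob`, `hmc_step`), the step size and the number of integration steps are illustrative assumptions. In a real Bayesian neural network, `log_prob` would sum over the entire data set, and that full-data evaluation inside every acceptance test is exactly what makes the step hard to scale.

```python
import math
import random
from typing import Tuple


def log_prob(theta: float) -> float:
    """Toy target (assumption): log-density of a standard normal, up to a constant."""
    return -0.5 * theta * theta


def grad_log_prob(theta: float) -> float:
    return -theta


def hmc_step(theta: float, step_size: float, n_steps: int,
             rng: random.Random) -> Tuple[float, bool]:
    """One Hamiltonian Monte Carlo transition with a Metropolis-Hastings correction."""
    momentum = rng.gauss(0.0, 1.0)
    # Total energy = potential (-log p) + kinetic (momentum^2 / 2).
    current_h = -log_prob(theta) + 0.5 * momentum ** 2

    # Leapfrog integrator: half momentum step, alternating full steps, final half step.
    new_theta, new_mom = theta, momentum
    new_mom += 0.5 * step_size * grad_log_prob(new_theta)
    for i in range(n_steps):
        new_theta += step_size * new_mom
        if i < n_steps - 1:
            new_mom += step_size * grad_log_prob(new_theta)
    new_mom += 0.5 * step_size * grad_log_prob(new_theta)

    proposed_h = -log_prob(new_theta) + 0.5 * new_mom ** 2
    # Accept with probability min(1, exp(current_h - proposed_h)); this cancels
    # the energy error introduced by the discrete integration steps.
    if rng.random() < math.exp(min(0.0, current_h - proposed_h)):
        return new_theta, True
    return theta, False


rng = random.Random(0)
theta, samples, accepted = 3.0, [], 0
for _ in range(5000):
    theta, ok = hmc_step(theta, step_size=0.2, n_steps=10, rng=rng)
    accepted += ok
    samples.append(theta)

mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"acceptance rate {accepted / 5000:.2f}, mean {mean:.2f}, variance {var:.2f}")
```

A rejected proposal leaves the chain where it was. Making the step size much larger lowers the acceptance rate, which is the stalling problem the paragraph above describes.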

Two new ways to walk the landscape

The paper introduces two complementary strategies. The first, called generalized stochastic gradient Hamiltonian Monte Carlo (GSGHMC), designs an acceptance test that runs on mini-batches while preserving the important property that genuine optima of the full problem are still correctly recognized. It uses a carefully chosen numerical integrator so that step-by-step acceptance remains stable, even though it only sees a slice of the data at a time. This gives an efficient sampler that stays close to the true Bayesian picture and produces ensembles of models with particularly well-calibrated confidence scores.

Riding long trajectories for better predictions

The second method, the Hamiltonian Trajectory Ensemble (HTE), makes a deliberate trade-off: instead of insisting on exact Bayesian behavior, it favors long, momentum-driven walks through parameter space that resemble aggressive training runs. At the end of each such trajectory, a Metropolis-style test decides whether to keep the resulting model snapshot. Because these paths tend to settle in broad, generalizable valleys of the loss landscape, the collected models form a diverse but focused ensemble. In image classification benchmarks such as EMNIST and CIFAR-10, HTE improves accuracy by up to about six percentage points over strong Bayesian baselines, and by several points over ordinary deterministic training, while still offering useful uncertainty information and reliably flagging out-of-distribution inputs, that is, data unlike anything seen during training.

Smaller ensembles, smarter use

Running hundreds or thousands of sampled models at prediction time sounds expensive, so the authors also study how many ensemble members are truly needed. By greedily discarding models that have little effect on performance, they find that keeping only about a third of the ensemble often leaves accuracy intact, though maintaining very sharp calibration typically requires more members. Across image tasks and a chaotic time-series forecasting problem, their methods consistently outperform or match popular alternatives such as variational inference, Monte Carlo dropout, and simpler deep ensembles, albeit at a higher training cost than plain deterministic learning.
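One way to picture greedy pruning is backward elimination: repeatedly drop the member whose removal hurts a validation score the least, and stop once any further removal would cost too much. The sketch below assumes validation accuracy as the score and a `tolerance` stopping rule. Both are assumptions, since the summary does not say which criterion the authors use. The helpers `ensemble_accuracy` and `greedy_prune` and the tiny data set are hypothetical.

```python
from typing import List, Sequence

Probs = Sequence[Sequence[float]]  # [example][class] probabilities from one member


def ensemble_accuracy(members: List[Probs], labels: Sequence[int]) -> float:
    """Accuracy of the averaged prediction of the given ensemble members."""
    correct = 0
    for i, label in enumerate(labels):
        n_classes = len(members[0][i])
        avg = [sum(m[i][c] for m in members) / len(members) for c in range(n_classes)]
        correct += max(range(n_classes), key=avg.__getitem__) == label
    return correct / len(labels)


def greedy_prune(members: List[Probs], labels: Sequence[int],
                 tolerance: float = 0.0) -> List[int]:
    """Drop, one at a time, the member whose removal hurts accuracy least.

    Stops once any further removal would fall more than `tolerance` below the
    full ensemble's accuracy. Returns the indices of the kept members.
    """
    kept = list(range(len(members)))
    target = ensemble_accuracy(members, labels) - tolerance
    while len(kept) > 1:
        best_idx, best_acc = None, -1.0
        for idx in kept:
            trial = [members[j] for j in kept if j != idx]
            acc = ensemble_accuracy(trial, labels)
            if acc > best_acc:
                best_idx, best_acc = idx, acc
        if best_acc < target:
            break
        kept.remove(best_idx)
    return kept


# Hypothetical validation set: 4 examples, 2 classes, 4 ensemble members.
labels = [0, 1, 0, 1]
good = [[0.9, 0.1], [0.2, 0.8], [0.8, 0.2], [0.3, 0.7]]
noisy = [[0.4, 0.6], [0.6, 0.4], [0.4, 0.6], [0.6, 0.4]]
members = [good, good, good, noisy]

kept = greedy_prune(members, labels)
print("kept members:", kept)
```

In this toy case, three members are redundant copies and the fourth only adds noise, so pruning keeps a single member. With real sampled networks the trade-off is far less clear-cut. Each pass re-evaluates every remaining member, so the cost grows roughly quadratically with ensemble size. That is usually acceptable as a one-off step after training. A variant aimed at preserving calibration could replace accuracy with a calibration metric, which would likely stop pruning earlier.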

What this means for real-world AI

For a non-specialist, the core message is that we can make deep learning not just accurate but also honest about what it does not know, without needing supercomputer-scale resources. By carefully weaving classical sampling ideas into modern, mini-batch-based training, the two proposed approaches deliver better predictions and more trustworthy confidence estimates than many existing techniques. This combination of efficiency, robustness, and calibrated uncertainty is a key step toward deploying deep learning safely in sensitive domains where the cost of overconfidence can be measured in lives, not just percentage points.

Citation: Schmal, M., Mäder, P. Reliable uncertainty estimates in deep learning with efficient Metropolis-Hastings algorithms. Nat Commun 17, 2531 (2026). https://doi.org/10.1038/s41467-026-70015-z

Keywords: Bayesian neural networks, uncertainty estimation, Hamiltonian Monte Carlo, deep learning ensembles, stochastic gradient MCMC