Clear Sky Science

Text embedding models yield detailed conceptual knowledge maps derived from short multiple-choice quizzes

Seeing What a Student Really Knows

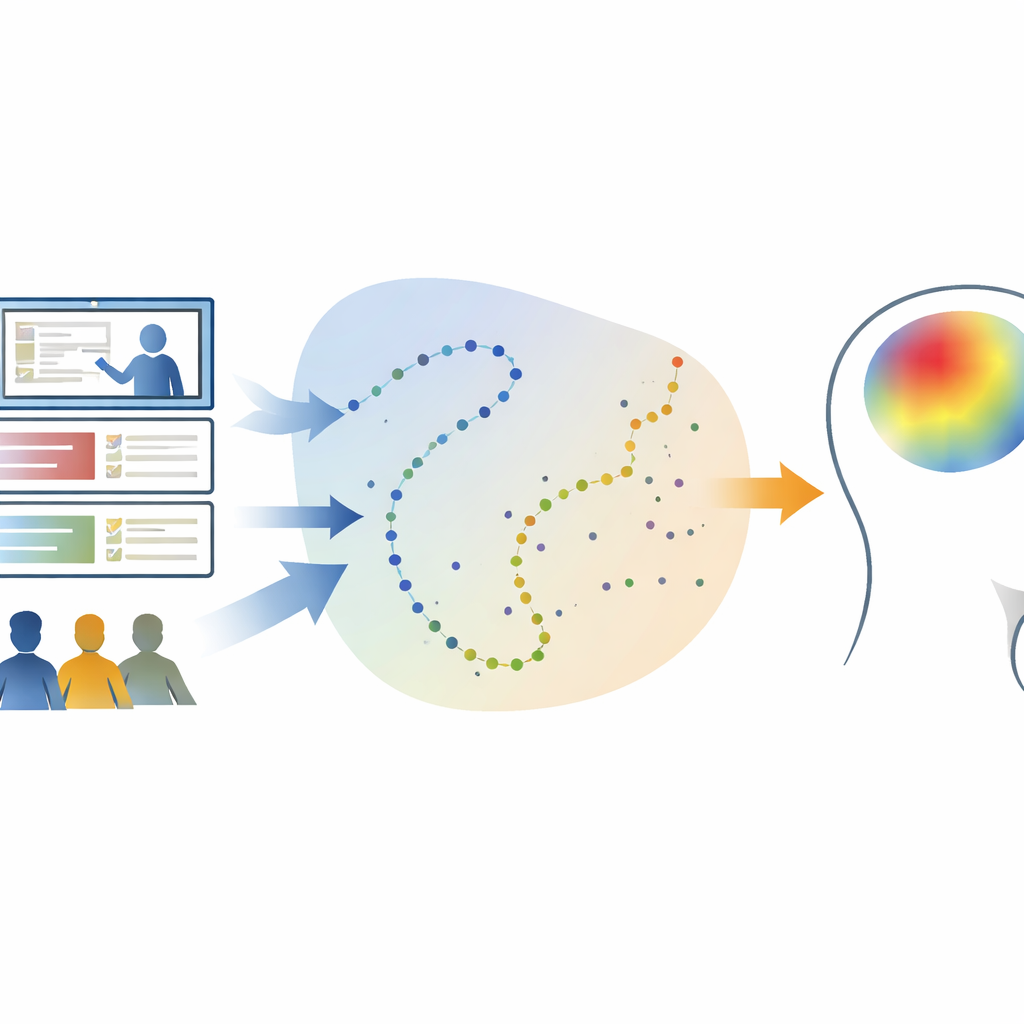

Imagine if a teacher could open a detailed map of everything a student understands: not just a single test score, but a living picture of strengths, gaps, and how new ideas take root. This study suggests that such maps may be closer than we think. By combining short multiple-choice quizzes with the kind of language technology that powers search engines and chatbots, the authors turn a handful of answers into rich, evolving portraits of a learner’s knowledge.

From Simple Quizzes to Rich Learning Maps

Most tests boil a student’s work down to a single number or letter grade. That number hides a lot: two students with the same score may know very different things. The researchers set out to recover that hidden detail without adding more testing. Their key idea is that each quiz question points toward certain ideas and away from others, and that the pattern of right and wrong answers across questions can be used to reconstruct what a learner likely understands about many related ideas.
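To make the idea concrete, here is a toy sketch in Python. The concept names, the question-to-concept weights, and the helper `concept_profile` are all hypothetical and are not taken from the study; the weights simply stand in for how strongly each question "points toward" each idea. Two students with the same total score end up with very different per-concept estimates.

```python
# Hypothetical weights: how strongly each of four quiz questions relates to
# three made-up concepts (each row sums to 1). Not data from the study.
QUESTION_CONCEPTS = [
    {"gravity": 0.8, "nuclear_forces": 0.1, "star_formation": 0.1},
    {"gravity": 0.7, "nuclear_forces": 0.2, "star_formation": 0.1},
    {"gravity": 0.1, "nuclear_forces": 0.8, "star_formation": 0.1},
    {"gravity": 0.1, "nuclear_forces": 0.2, "star_formation": 0.7},
]


def concept_profile(answers, question_concepts):
    """Estimate knowledge of each concept from a list of 0/1 answers.

    Each concept's estimate is a weighted fraction of correct answers, where a
    question's weight is how strongly it relates to that concept.
    """
    profile = {}
    for concept in question_concepts[0]:
        weights = [q[concept] for q in question_concepts]
        hits = sum(w * a for w, a in zip(weights, answers))
        profile[concept] = hits / sum(weights)
    return profile


student_a = [1, 1, 0, 0]  # right on the two gravity-heavy questions
student_b = [0, 0, 1, 1]  # right on the other two
print(sum(student_a), sum(student_b))  # same raw score: 2 2
print(concept_profile(student_a, QUESTION_CONCEPTS))
print(concept_profile(student_b, QUESTION_CONCEPTS))
```

Both students score 2 out of 4, yet student A's gravity estimate is about 0.88 while student B's is about 0.12, which is exactly the kind of detail a single score hides.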

Turning Words into a Landscape of Ideas

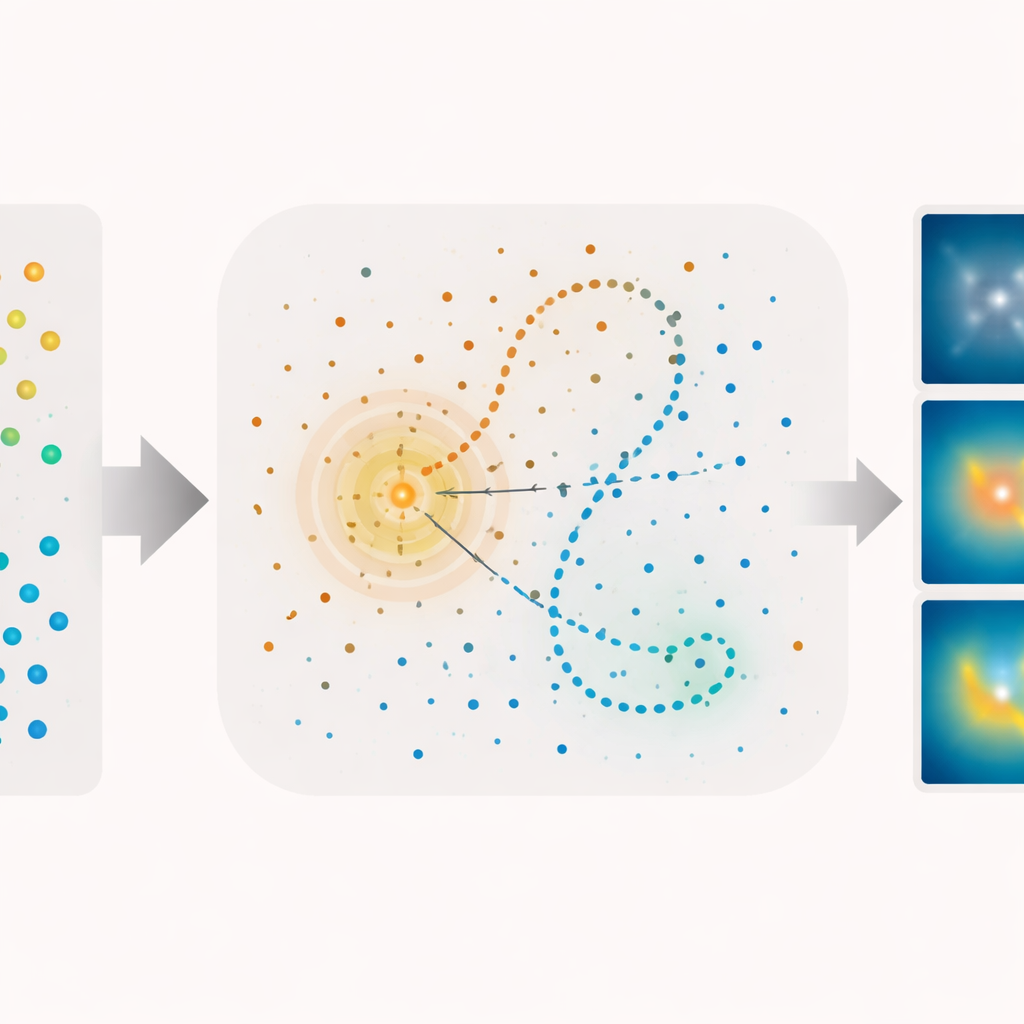

To do this, the team used a technique from natural language processing that represents text as points in a high-dimensional space, where nearby points have related meanings. They fed transcripts from two Khan Academy physics lectures—one on the four fundamental forces of nature and another on how stars are born—into a topic model that discovers recurring themes in the wording. Each brief slice of lecture, and each quiz question, was turned into a coordinate in this abstract space. The result is a kind of conceptual landscape in which the lectures trace winding paths and the questions appear as scattered landmarks.
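The study fits a topic model to windows of lecture text. A full topic model is too long for a short example, so the Python sketch below swaps in a much simpler bag-of-words embedding to show the overall shape of the pipeline: slice a transcript into overlapping windows, build a vocabulary from the lecture alone, and map both windows and quiz questions into the same vector space. The window size, step, tokenizer, sample sentence, and function names are illustrative assumptions.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and keep only alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def sliding_windows(words, size, step=1):
    """Split a word list into overlapping windows of `size` words."""
    starts = list(range(0, max(len(words) - size, 0) + 1, step))
    # Add one final window so trailing words are not dropped when step > 1.
    if starts[-1] + size < len(words):
        starts.append(len(words) - size)
    return [words[s:s + size] for s in starts]


def build_vocab(windows):
    """Vocabulary drawn from the lecture windows only (questions are not used)."""
    return sorted({w for window in windows for w in window})


def embed(words, vocab):
    """Map words to a normalized word-count vector over `vocab`.

    A crude stand-in for a topic model's per-document topic proportions;
    words outside the lecture vocabulary are ignored.
    """
    counts = Counter(words)
    total = sum(counts[w] for w in vocab) or 1  # avoid dividing by zero
    return [counts[w] / total for w in vocab]


lecture = ("gravity pulls masses together while the strong force "
           "binds nuclei gravity is weak")
windows = sliding_windows(tokenize(lecture), size=4, step=2)
vocab = build_vocab(windows)
window_vecs = [embed(w, vocab) for w in windows]
question_vec = embed(tokenize("Which force binds nuclei?"), vocab)
```

In the actual approach the vectors are topic proportions rather than raw word frequencies, which is what lets a question match a lecture moment even when the two use different wording; plain word counts cannot do that.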

Linking Questions to Moments of Learning

With this landscape in hand, the authors could ask which parts of a lecture each question was really about. They found that most questions lined up strongly with narrow stretches of a lecture’s path, even though the questions had not been used to train the model and often used different wording than the videos. This allowed them to estimate how much each student knew about the content at every second of each video. By comparing three short quizzes taken before, between, and after the videos, they could watch knowledge about each lecture’s content rise sharply after the corresponding video and remain high later on.
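One way to turn quiz answers into a moment-by-moment estimate is to weight each answer by how closely its question matches a given lecture window. The Python sketch below assumes cosine similarity as the match score and clips negative similarities to zero; these choices, the toy two-dimensional vectors, and the function names are assumptions for illustration, not necessarily the study's exact formulation. The vectors could come from any embedding, such as topic proportions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def best_window(question_vec, window_vecs):
    """Index of the lecture window most similar to a question."""
    sims = [cosine(question_vec, w) for w in window_vecs]
    return max(range(len(sims)), key=sims.__getitem__)


def knowledge_timecourse(window_vecs, question_vecs, correct):
    """Estimated knowledge at each lecture window.

    Each window's estimate is a similarity-weighted average of the student's
    correct (1) / incorrect (0) answers, so questions about that moment count most.
    """
    estimates = []
    for w in window_vecs:
        weights = [max(cosine(w, q), 0.0) for q in question_vecs]
        total = sum(weights)
        score = sum(wt * c for wt, c in zip(weights, correct))
        estimates.append(score / total if total else float("nan"))
    return estimates


# Toy example: three lecture windows, two questions, one answered correctly.
windows = [[1.0, 0.0], [0.7, 0.7], [0.0, 1.0]]
questions = [[1.0, 0.0], [0.0, 1.0]]
answers = [1, 0]
print(knowledge_timecourse(windows, questions, answers))
```

Running `knowledge_timecourse` separately on the answers from a quiz taken before a video and a quiz taken after it, then subtracting the two, would give a per-moment estimate of what was learned.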

Predicting Success and Tracing the Spread of Knowledge

The model did more than replay the past; it could also forecast performance. When the researchers used their knowledge estimates to predict whether a student would answer a particular question correctly, the predictions were far better than chance across all three quizzes. They also examined how knowledge “spills over” to nearby concepts in the landscape. If a student knew the answer to one question, they were more likely to know the answers to other questions whose coordinates lay close by, and this advantage faded smoothly with distance. Finally, the team drew two-dimensional “knowledge maps” and “learning maps” showing where in the space students knew the most before any instruction, where knowledge grew after each lecture, and how those gains were tightly clustered around the concepts actually taught.

Implications for Smarter Teaching Tools

In everyday terms, this work shows that a short, well-designed quiz can reveal far more than a raw score suggests. By embedding course materials and questions in a shared conceptual space, teachers—or future educational software—could build fine-grained maps of what each learner understands, how that understanding is organized, and how it changes over time. Such maps could guide personalized lessons that target specific gaps, highlight useful connections between ideas, and perhaps even help forecast how easily a student will grasp new material. While the current framework focuses on text and does not yet capture all the subtleties of human understanding, it offers a promising path toward evaluation methods that are both more informative for educators and less burdensome for students.

Citation: Fitzpatrick, P.C., Heusser, A.C. & Manning, J.R. Text embedding models yield detailed conceptual knowledge maps derived from short multiple-choice quizzes. Nat Commun 17, 2055 (2026). https://doi.org/10.1038/s41467-026-69746-w

Keywords: conceptual learning, education technology, text embeddings, adaptive testing, learning analytics