Clear Sky Science

A data-efficient foundation model for porous materials based on expert-guided supervised learning

Teaching Computers to Read Sponges for Gases

Porous materials are like microscopic sponges that can soak up, sort, and store gases such as carbon dioxide, methane, and hydrogen. They are vital for cleaner fuels, carbon capture, and chemical manufacturing. But discovering which new material will work best usually demands huge amounts of painstaking simulation and experiment. This paper introduces SpbNet, a new kind of artificial intelligence model that learns the language of these sponge-like materials far more efficiently, using built-in physical knowledge instead of brute-force data alone.

Why Smart Sponges Matter

Metal–organic frameworks, covalent organic frameworks, porous polymers, and zeolites all belong to a family of materials riddled with tiny, regularly arranged holes. Their performance depends on how those holes are shaped and how gas molecules “feel” as they move through them. In principle, computers can predict this behavior, but traditional machine-learning models need massive training sets that are expensive or impossible to gather in materials science, where measured structures and high-quality simulations are limited. SpbNet tackles this bottleneck by weaving well-established physical rules directly into its training, allowing it to do more with far less data.

Building on the Physics of Attraction and Repulsion

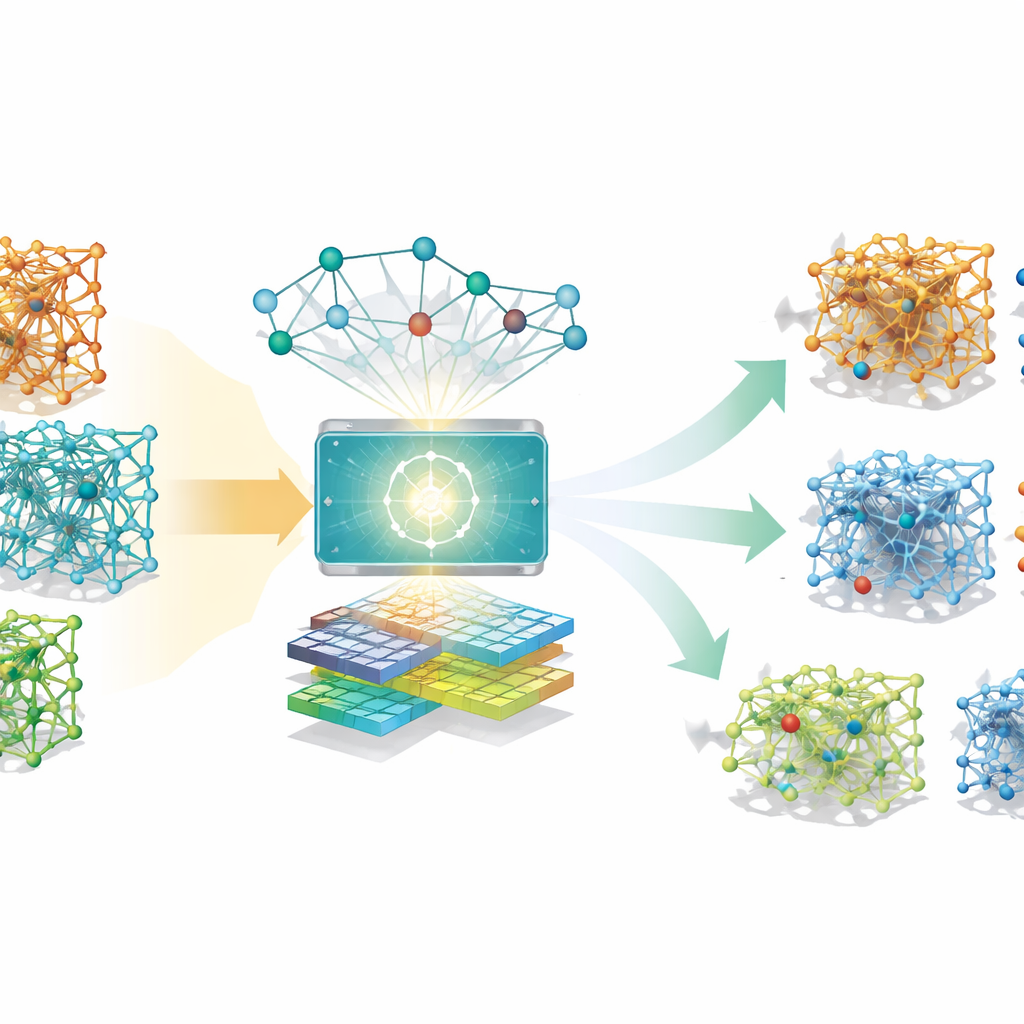

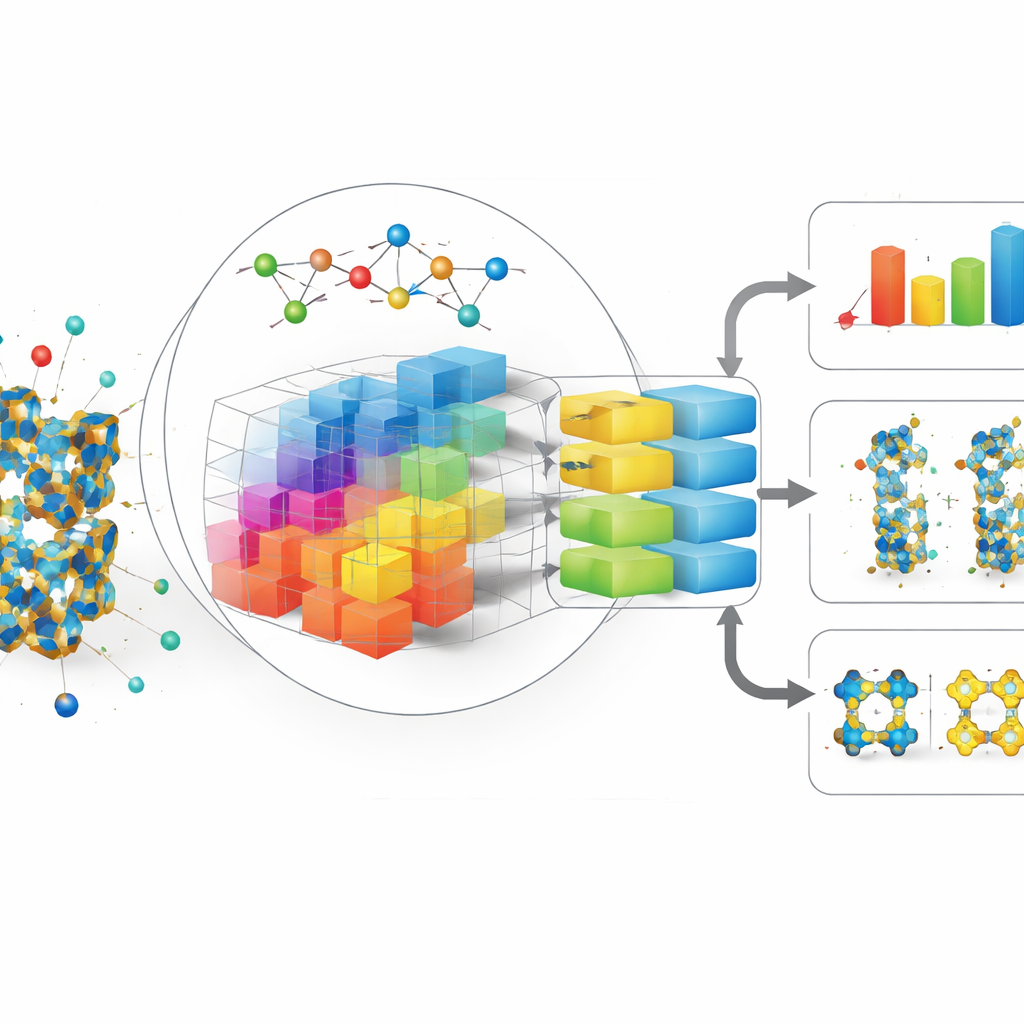

Instead of feeding the model only raw atomic positions, the authors encode how a generic gas molecule would interact with a material at many points in space. They construct 20 “basis” patterns that describe familiar forces: short-range repulsion when atoms get too close and longer-range attraction between them. These patterns are combined into a three-dimensional grid that spans the pores of the material, capturing an energy landscape that is not tied to any single gas species. One part of SpbNet, a graph-based network, studies the material’s atoms and bonds, while another, an image-like network, examines this energy grid. A cross-attention module lets these two streams talk to each other, so the model can connect local force patterns with global pore shapes.
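To make the idea of an energy grid concrete, here is a minimal Python sketch. It is not the authors' code. It assumes only two basis patterns of the familiar Lennard-Jones type (an r^-12 repulsion term and an r^-6 attraction term) rather than the 20 used in the paper, a cubic periodic box, and illustrative names (`basis_grid`, `r_floor`) that do not come from the source.

```python
import math

def min_image_distance(p, q, box):
    """Distance between points p and q in a cubic periodic box of edge `box`."""
    total = 0.0
    for a, b in zip(p, q):
        d = abs(a - b) % box
        d = min(d, box - d)          # use the nearest periodic image
        total += d * d
    return math.sqrt(total)

def basis_grid(atoms, box, n, powers=(12, 6), r_floor=0.8):
    """Map each grid index (i, j, k) to one value per basis pattern.

    Assumption: each pattern is a sum over atoms of r**-p, with p = 12
    standing in for repulsion and p = 6 for attraction. Distances are
    clipped at `r_floor` so points sitting on an atom do not blow up.
    """
    step = box / n
    grid = {}
    for i in range(n):
        for j in range(n):
            for k in range(n):
                point = (i * step, j * step, k * step)
                channels = []
                for p in powers:
                    s = 0.0
                    for atom in atoms:
                        r = max(min_image_distance(point, atom, box), r_floor)
                        s += r ** -p
                    channels.append(s)
                grid[(i, j, k)] = tuple(channels)
    return grid

# One atom at the origin of a 4 x 4 x 4 box, sampled on a 4^3 grid.
grid = basis_grid([(0.0, 0.0, 0.0)], box=4.0, n=4)
print(len(grid), grid[(2, 0, 0)])
```

Because the stored values depend only on geometry, gas-specific parameters would enter later as weights on these channels. That is one plausible reading of how the landscape stays independent of any single gas species.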

Learning Geometry Across Scales

To prepare SpbNet for many different tasks, the team does not start by asking it to predict gas uptake directly. Instead, they first train it to master geometric questions that materials scientists already know how to calculate: how wide the narrowest channels are, how large the biggest cavities are, and how much volume and surface area are actually accessible to different probe sizes. At a finer scale, the model learns how many atoms sit in each small region and how far that region is from the solid surface. These supervised exercises force the network to develop a detailed internal map of pore shape and connectivity, which later proves useful for a wide range of properties related to gas storage, separation, and even mechanical strength.
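The fine-scale training targets described above can be illustrated with a rough Python sketch. This is a simplified stand-in, not the paper's procedure: it assumes a cubic periodic box, treats every atom as a sphere of one fixed radius, and uses hypothetical names (`local_targets`, `accessible_fraction`). Dedicated geometry codes compute these quantities far more carefully.

```python
import math

def min_image_distance(p, q, box):
    """Distance between points p and q in a cubic periodic box of edge `box`."""
    total = 0.0
    for a, b in zip(p, q):
        d = abs(a - b) % box
        d = min(d, box - d)          # use the nearest periodic image
        total += d * d
    return math.sqrt(total)

def local_targets(atoms, box, n, radius=1.5):
    """Per-voxel labels: atom count, and distance from voxel center to the solid.

    Assumption: the "solid surface" is the union of spheres of one fixed
    `radius` around each atom, so negative distances lie inside the solid.
    """
    step = box / n
    counts = {(i, j, k): 0
              for i in range(n) for j in range(n) for k in range(n)}
    for atom in atoms:
        # Wrap each coordinate into the box, then find its voxel index.
        idx = tuple(int((c % box) // step) % n for c in atom)
        counts[idx] += 1
    dist = {}
    for idx in counts:
        center = tuple((c + 0.5) * step for c in idx)
        nearest = min(min_image_distance(center, a, box) for a in atoms)
        dist[idx] = nearest - radius
    return counts, dist

def accessible_fraction(dist, probe):
    """Crude estimate: share of voxel centers where a probe of this radius fits."""
    return sum(1 for d in dist.values() if d >= probe) / len(dist)

counts, dist = local_targets([(0.5, 0.5, 0.5)], box=4.0, n=4)
print(counts[(0, 0, 0)], accessible_fraction(dist, probe=0.0))
```

Sweeping `probe` over several radii gives a coarse version of the global target, accessible volume as a function of probe size, while `counts` and `dist` correspond to the local, per-region targets.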

Outperforming Bigger Models with Less Data

After this pre-training stage, SpbNet is fine-tuned on practical tasks such as predicting how much carbon dioxide or methane a material will adsorb, how well it can separate gas mixtures, and how gases diffuse through it. Across more than 50 benchmarks, SpbNet consistently makes more accurate predictions than previous state-of-the-art models, including those trained on nearly twenty times more materials. It also generalizes surprisingly well: although it is pre-trained only on one class of porous crystals (metal–organic frameworks), it transfers effectively to related but distinct materials like covalent organic frameworks, porous polymer networks, and zeolites, with large error reductions in many cases.

Peeking Inside the Model’s Reasoning

To understand why this strategy works, the authors probe the inner workings of SpbNet. They find that the combination of global geometric targets and local surface-related tasks encourages the model to keep rich, localized information as signals flow through its many layers, instead of smoothing everything into a bland average. Removing parts of this physics-guided training or discarding the energy-based descriptors makes the predictions noticeably worse, especially for tasks that rely on subtle size and shape effects, such as distinguishing gases that differ only slightly in size.

What This Means for Future Material Discovery

In simple terms, SpbNet shows that you can train a powerful, flexible model for porous materials without drowning it in data, as long as you carefully encode what physics already tells us. By teaching the network to first understand pore geometry and generic interaction patterns, the authors build a foundation that supports accurate and data-efficient predictions for many specific goals. This approach could speed up the discovery of better materials for capturing greenhouse gases, purifying chemicals, and storing clean fuels, while offering a blueprint for how to design similarly efficient models in other data-poor areas of science.

Citation: Zou, J., Lv, Z., Tan, W. et al. A data-efficient foundation model for porous materials based on expert-guided supervised learning. Nat Commun 17, 2618 (2026). https://doi.org/10.1038/s41467-026-69245-y

Keywords: porous materials, metal-organic frameworks, machine learning, gas adsorption, foundation models