Clear Sky Science

Closed-form feedback-free learning with forward projection

Teaching Machines Without Backward Messages

Modern artificial intelligence mostly learns using a method called backpropagation, where errors are sent backward through a network to adjust its internal connections. This process differs from how real brains are thought to work, and it can be slow and resource-hungry. This paper introduces a new way for neural networks to learn, called Forward Projection, that skips the backward step entirely while still achieving strong performance, especially on challenging biomedical tasks with limited data.

A New Way to Guide Learning

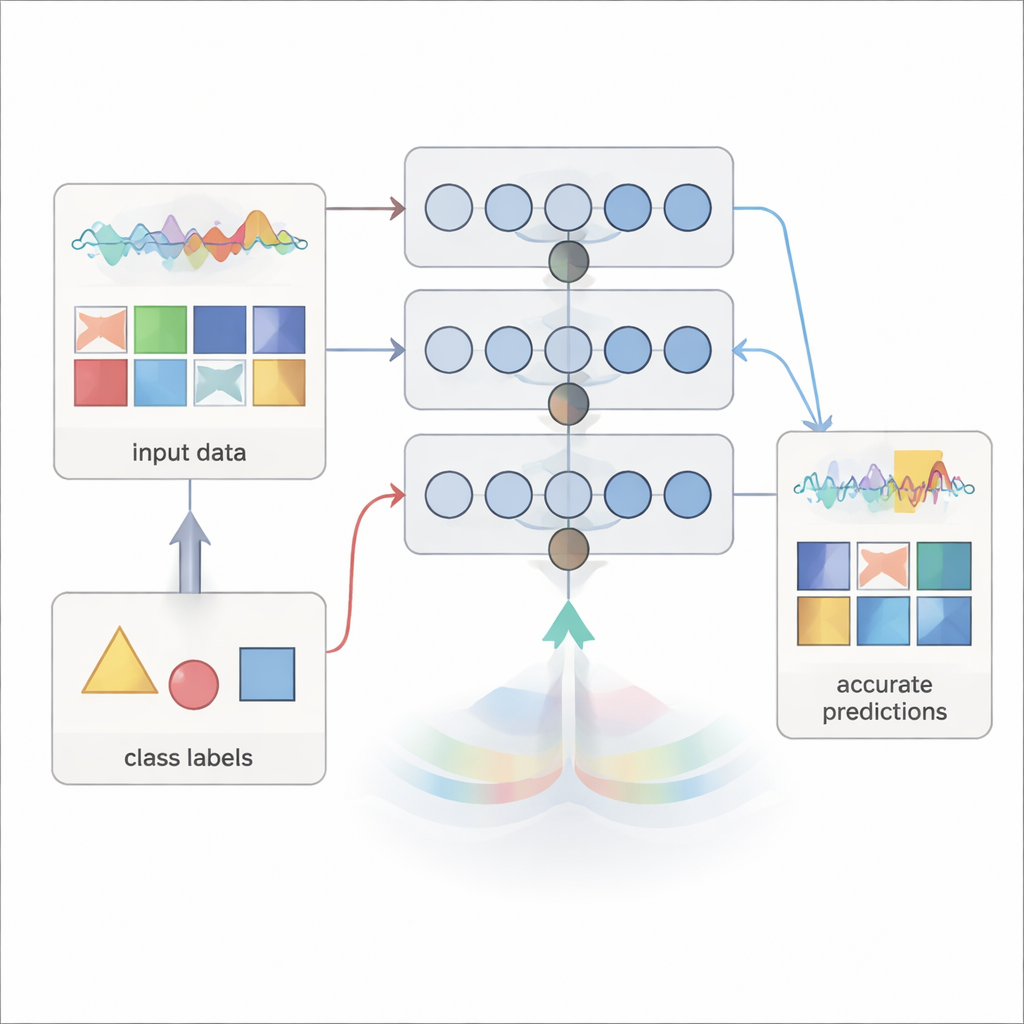

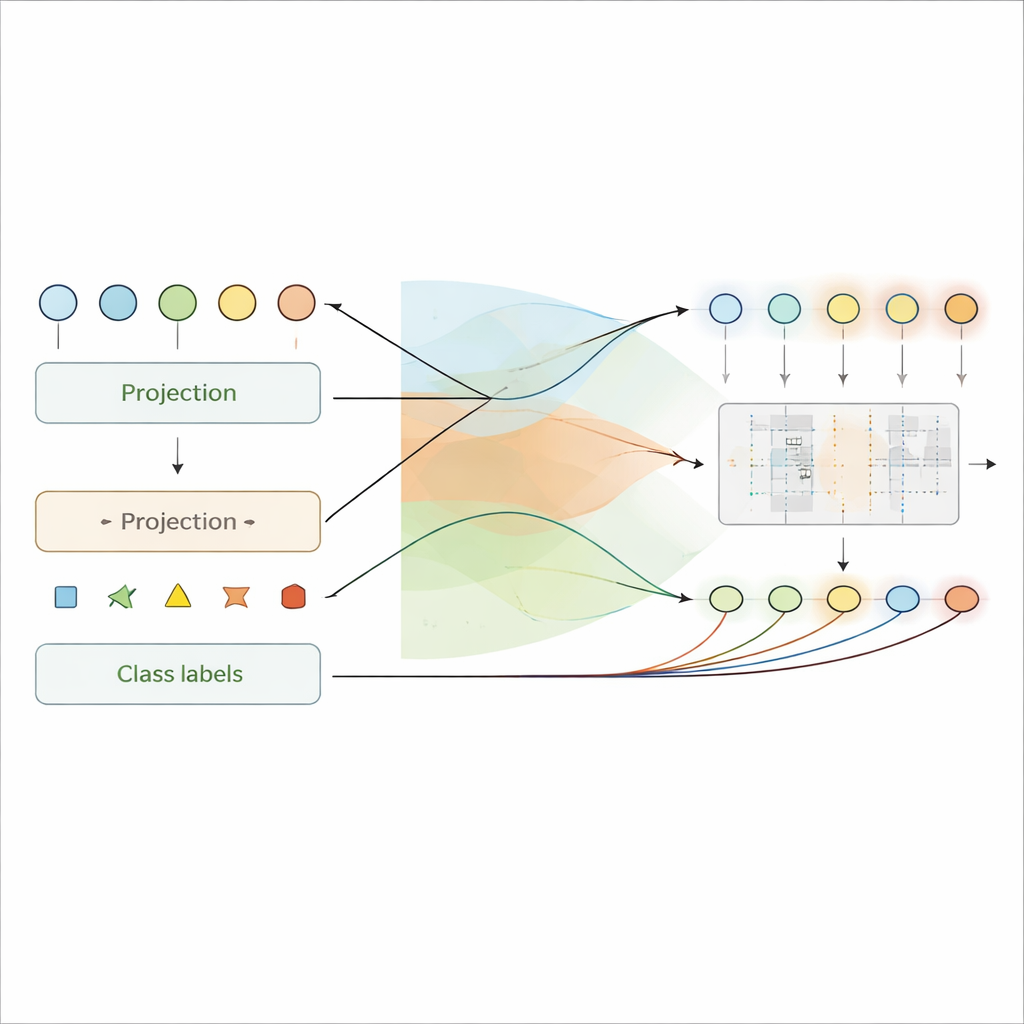

Traditional neural networks learn by comparing their predictions with the correct answers and sending error signals backward through every layer to fine-tune the connections. Forward Projection takes a different route. Instead of relying on these backward error messages, it uses only information available as signals move forward: the current layer’s activity and the target label. At each layer, the method combines the input to that layer and the desired output label using fixed random projections passed through a simple nonlinearity. This produces a “target” internal signal for that layer: a pattern of activity, loosely comparable to a neuron’s electrical potential, that the layer should try to match.
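To make the target-generation step concrete, here is a minimal NumPy sketch. It assumes the "simple nonlinearity" is a sign function and that the two projected signals are added together. The names `make_targets`, `Q`, and `U`, and all array sizes, are hypothetical choices for illustration and are not taken from the paper.

```python
import numpy as np

def make_targets(a_prev, y_onehot, Q, U):
    """Hypothetical target generator for one layer.

    a_prev   : (n, d) inputs arriving at the layer
    y_onehot : (n, c) one-hot labels
    Q, U     : fixed random projection matrices, never trained
    """
    # Assumption: sign() is the nonlinearity and the two parts are summed.
    return np.sign(a_prev @ Q) + np.sign(y_onehot @ U)

rng = np.random.default_rng(0)
n, d, c, m = 8, 5, 3, 4                      # samples, features, classes, hidden units
a_prev = rng.standard_normal((n, d))         # toy layer inputs
y_onehot = np.eye(c)[rng.integers(0, c, size=n)]
Q = rng.standard_normal((d, m))              # random projection of the input
U = rng.standard_normal((c, m))              # random projection of the label

z_target = make_targets(a_prev, y_onehot, Q, U)
print(z_target.shape)
```

Under these assumptions every target entry is -2, 0, or 2, depending on whether the input-driven and label-driven signs agree.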

Once these targets are created, each layer’s connection weights are solved in one shot using closed-form regression, a standard statistical formula rather than iterative gradient descent. This means the network can be trained in a single pass over the dataset, without repeatedly revisiting the same examples or storing large numbers of intermediate activations. Because no information needs to travel backward, the method respects the one-way communication seen in biological neurons and could be easier to implement on specialized hardware with one-directional connections.
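The one-shot weight solve can be sketched with ordinary ridge regression. The example below is an illustration under stated assumptions, not the authors' implementation: the function name `fit_layer_streaming`, the ridge penalty, and the batch size are all hypothetical. It accumulates two small summary matrices batch by batch, which is one way a single pass over the data can suffice without keeping every activation in memory.

```python
import numpy as np

def fit_layer_streaming(batches, d, m, ridge=1e-3):
    """Solve min_W ||A W - Z||^2 + ridge * ||W||^2 in one pass over the data.

    batches yields (a_batch, z_batch) pairs: layer inputs and target signals.
    """
    gram = ridge * np.eye(d)          # running A^T A plus the ridge term
    cross = np.zeros((d, m))          # running A^T Z
    for a_batch, z_batch in batches:
        gram += a_batch.T @ a_batch
        cross += a_batch.T @ z_batch
    # Closed-form solution of the regularised normal equations.
    return np.linalg.solve(gram, cross)

# Toy check: targets generated by a known linear map should be recovered.
rng = np.random.default_rng(0)
n, d, m = 200, 5, 4
A = rng.standard_normal((n, d))
W_true = rng.standard_normal((d, m))
Z = A @ W_true
batches = [(A[i:i + 50], Z[i:i + 50]) for i in range(0, n, 50)]

W = fit_layer_streaming(batches, d, m, ridge=1e-6)
max_err = float(np.max(np.abs(W - W_true)))
print(max_err < 1e-4)
```

Because the solve needs only the accumulated `gram` and `cross` matrices, memory use depends on the layer width rather than on the number of training examples.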

Seeing Meaning in Hidden Activity

A striking advantage of Forward Projection is that the internal signals in hidden layers become directly interpretable. Since each layer is explicitly trained to encode both the input and the label in its membrane-like potentials, these internal values can be read as local predictions of the class. The authors show how to approximately “decode” these signals back into label space, turning activity patterns into per-layer explanations of what the network believes at each stage. In experiments, these explanations become more accurate in deeper layers, reflecting progressive learning—early layers see broad patterns, while later ones focus on decision-critical details.
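One simple way to read hidden activity as a class prediction is sketched below. This is not necessarily the authors' decoding procedure. It assumes sign-based targets, a ridge-regression weight solve, and a decoder that scores each class by how well the layer's potentials match that class's projected label code. The toy data, the names `Q`, `U`, and `W`, and all sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d, c, m = 300, 10, 3, 256          # samples, features, classes, hidden units

def toy_data(num):
    """Hypothetical data: class k raises feature k; the rest is small noise."""
    labels = rng.integers(0, c, size=num)
    x = 0.5 * rng.standard_normal((num, d))
    x[np.arange(num), labels] += 4.0
    return x, labels

A, labels = toy_data(n)
Y = np.eye(c)[labels]

# Fixed random projections and assumed sign-based targets.
Q = rng.standard_normal((d, m))
U = rng.standard_normal((c, m))
Z = np.sign(A @ Q) + np.sign(Y @ U)

# Closed-form ridge fit of the layer weights.
W = np.linalg.solve(A.T @ A + 1e-3 * np.eye(d), A.T @ Z)

# Decode fresh inputs: compare potentials with each class's label code.
A_test, test_labels = toy_data(100)
potentials = A_test @ W               # the layer's internal signal
scores = potentials @ np.sign(U).T    # (100, c) similarity to each class code
decoded = scores.argmax(axis=1)
accuracy = float((decoded == test_labels).mean())
print(accuracy)
```

The decoder uses no label information at test time: it only asks which class code the layer's own potentials most resemble, which is what makes the hidden signal readable as a local prediction.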

This interpretability is particularly valuable in medicine, where understanding why a model made a decision can matter as much as the decision itself. Using electrocardiogram data, the authors show that Forward Projection highlights clinically known signs of heart attack—such as changes in specific waveform segments—at the right moments in time. On eye scans used to detect abnormal blood vessel growth, the method naturally focuses on fluid pockets, bright deposits, and scar-like regions that specialists look for, even when trained with as few as 100 examples per class.

Fast Training, Strong Results

The team benchmarked Forward Projection against several alternatives that also try to avoid full backpropagation, as well as against standard backpropagation itself. On image and sequence tasks such as Fashion-MNIST clothing images, DNA promoter recognition, heart attack detection from electrocardiograms, and object recognition, the new method matched or exceeded the performance of other local learning rules. In standard settings, backpropagation still had an overall edge, but Forward Projection’s accuracy came surprisingly close while using only a single training pass.

The benefits became clearer in “few-shot” scenarios, where only a handful of labeled examples are available, as is common in clinical practice. Here, Forward Projection often generalized better than both backpropagation and competing local methods on chest X-rays, retinal scans, and small image subsets. Backpropagation tended either to overfit the tiny datasets or fail to learn rich enough features, whereas Forward Projection produced more stable, reusable internal representations. From a computational standpoint, training a large layer required orders of magnitude fewer multiply-and-accumulate operations than running many epochs of backpropagation, translating into substantial speedups and lower energy cost.
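A back-of-envelope cost model shows where such savings can come from. The sketch below is a rough illustration, not the paper's accounting. The formulas count only the dominant matrix products, and the layer size, dataset size, and epoch count are hypothetical values chosen for the example.

```python
def closed_form_macs(n, d, m, c):
    """Rough multiply-accumulate count to fit one dense layer in closed form."""
    targets = n * d * m + n * c * m   # random projections of inputs and labels
    gram = n * d * d                  # accumulate A^T A
    cross = n * d * m                 # accumulate A^T Z
    solve = d ** 3 + d * d * m        # solve the normal equations (order of magnitude)
    return targets + gram + cross + solve

def backprop_macs(n, d, m, epochs):
    """Rough count for the same layer: forward pass, input gradient, weight gradient."""
    return 3 * n * d * m * epochs

# Hypothetical setting: 10,000 samples, a 1000-by-1000 layer, 10 classes, 100 epochs.
n, d, m, c, epochs = 10_000, 1_000, 1_000, 10, 100
ratio = backprop_macs(n, d, m, epochs) / closed_form_macs(n, d, m, c)
print(f"backprop / closed-form MACs: about {ratio:.0f}x")
```

In this model the closed-form cost is paid once, while the backpropagation cost scales with the number of epochs, so the gap widens as training runs get longer.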

What This Means for Future AI and Brain-Inspired Computing

In everyday terms, this work shows that neural networks do not have to rely on heavy, biologically implausible feedback loops to learn useful and understandable internal representations. By cleverly mixing inputs and labels in a single forward sweep and solving for weights in closed form, Forward Projection offers a way to train models quickly, interpret their inner workings, and handle small, noisy biomedical datasets. While backpropagation remains the gold standard for many large-scale tasks, this feedback-free approach points toward more brain-like and hardware-friendly learning strategies that could underpin the next generation of efficient, explainable AI systems.

Citation: O’Shea, R., Rajendran, B. Closed-form feedback-free learning with forward projection. Nat Commun 17, 2414 (2026). https://doi.org/10.1038/s41467-026-69161-1

Keywords: feedback-free learning, neural networks, few-shot training, biomedical AI, explainable deep learning