Clear Sky Science · en

Owl-vision-inspired near sensor computing

Seeing in the Dark Like an Owl

Imagine a drone that can spot a lost hiker or a distant spacecraft without any searchlights, even under starlight so faint that ordinary cameras see almost nothing. This paper describes new vision hardware inspired by owls that brings that idea closer to reality. By mimicking how an owl’s eyes adapt to darkness and how its brain efficiently processes faint signals, the researchers build a tiny electronic "synapse" that both senses weak light and performs part of the computation needed for recognition, directly on the sensor.

Why Existing Machines Struggle at Night

Modern artificial intelligence can recognize faces, objects, and scenes with impressive accuracy, but doing so typically requires powerful chips that burn enormous amounts of energy. Conventional cameras also separate sensing from computing: first, the camera captures an image; then, distant processors crunch the data. Under very low light, these cameras usually fail unless aided by bright lamps or heavy digital enhancement. By contrast, an owl’s eye and brain work together: its retina accumulates tiny trickles of photons over time, and its neural circuits adapt so that dim shapes gradually become visible. The authors aim to bring a similar, tightly integrated and energy-efficient strategy to machine vision.

A Tiny Device That Learns From Light

At the heart of the work is an "owl-inspired dual-mode adaptive synapse," a small transistor-based device that acts as both a light sensor and a learning connection like those between nerve cells. The device is built in layers: a transparent bottom electrode, a dielectric film that can trap charges, a specially engineered light-absorbing blend, and an organic semiconductor channel on top. When faint light hits the absorbing layer, a few charge carriers are generated and, guided by an applied voltage, become trapped in the dielectric and accumulate in the channel. This gradually boosts the device’s electrical response, much as rod cells in an owl’s retina become more sensitive as they adapt to darkness. The authors show that their device can respond to light intensities as low as 0.146 nanowatts per square centimeter, roughly three orders of magnitude weaker than what standard camera chips can handle, while displaying a strong, tunable gain that quantifies its dark adaptation.

Acting as an Artificial Synapse

Beyond just sensing light, the device also mimics how biological synapses strengthen or weaken with activity. Under repeated light pulses across a broad range of colors, from ultraviolet to near-infrared, the device’s current increases in lasting steps, storing a memory of the optical history. Under electrical pulses applied to its gate, it exhibits long-term potentiation and depression: gradual, reversible changes in conductance of the kind that encode synaptic "weights" in artificial neural networks. These weights are stored in a non-volatile way, meaning the device retains them without constant power, and they can be adjusted across multiple levels like a multi-bit digital value. Crucially, each synaptic event consumes on the order of 10 femtojoules of energy, comparable to or even lower than estimates for biological synapses, and orders of magnitude below the energy budgets of typical AI hardware.
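The potentiation and depression behavior described above can be pictured with a toy model. The sketch below is an idealization and not the authors' device model: it assumes each gate pulse moves the conductance by one equal step between a minimum and a maximum, whereas real devices usually respond nonlinearly. The function name `pulse_train` and all numeric values are hypothetical and chosen only for illustration.

```python
def pulse_train(g_min, g_max, n_levels, n_pot, n_dep):
    """Conductance after each pulse in an idealized linear synapse.

    Assumption: every potentiating pulse raises the conductance by one
    fixed step and every depressing pulse lowers it by the same step,
    with hard limits at g_min and g_max.
    """
    step = (g_max - g_min) / (n_levels - 1)
    g = g_min
    trace = [g]
    for _ in range(n_pot):          # long-term potentiation pulses
        g = min(g_max, g + step)
        trace.append(g)
    for _ in range(n_dep):          # long-term depression pulses
        g = max(g_min, g - step)
        trace.append(g)
    return trace


# Hypothetical example: 5 conductance levels in arbitrary units.
trace = pulse_train(g_min=1.0, g_max=2.0, n_levels=5, n_pot=6, n_dep=6)
print(trace)
```

In this idealized picture the conductance climbs, saturates at the upper limit, then steps back down to its starting value. That reversibility is what lets such a device hold an adjustable, multi-level weight.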

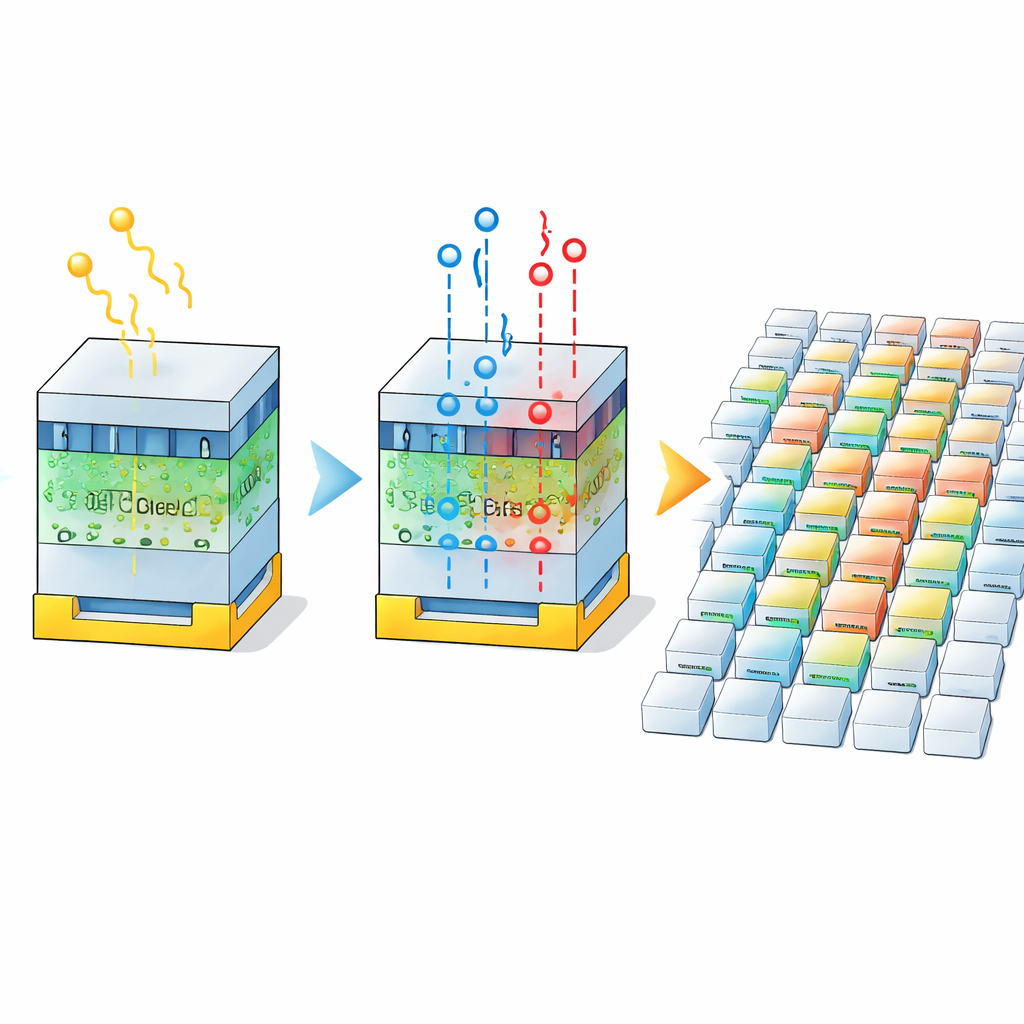

From Single Synapse to Vision System

To confirm that this behavior holds at scale, the team fabricates a 19-by-17 array of devices and shows that they operate uniformly. When a faint light pattern is projected onto the array, the photocurrents at illuminated spots gradually grow during adaptation, revealing previously hidden shapes under ultra-low illumination, much like an owl’s retina sharpening an image in the dark. The authors then map the device’s multiple conductance levels onto weights in machine-learning models, including simple multilayer perceptrons, convolutional networks, and a deep VGG-style architecture. Even with relatively coarse, discrete weights, these simulated networks still reach over 90 percent accuracy on standard image datasets, demonstrating that the synaptic states are sufficient for practical computation.
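One way to picture the mapping from conductance levels to network weights is simple quantization: each trained weight is snapped to the nearest value the device can hold. The sketch below assumes evenly spaced levels spanning the weight range, which is a simplification; the summary does not state how the authors spaced or assigned their levels, and `quantize_to_levels` is a hypothetical helper.

```python
def quantize_to_levels(weights, n_levels):
    """Snap each weight to the nearest of n_levels evenly spaced values.

    Assumption: the available device states are spread uniformly between
    the smallest and largest weight in the list.
    """
    w_min, w_max = min(weights), max(weights)
    if n_levels < 2 or w_max == w_min:
        return list(weights)        # nothing meaningful to quantize
    step = (w_max - w_min) / (n_levels - 1)
    levels = [w_min + k * step for k in range(n_levels)]
    return [min(levels, key=lambda lv: abs(lv - w)) for w in weights]


# Hypothetical weights restricted to 3 device states.
print(quantize_to_levels([-1.0, -0.4, 0.1, 1.0], n_levels=3))
```

The fewer levels a device offers, the coarser this rounding becomes, which is why it matters that the simulated networks remain accurate with discrete weights.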

Night Vision for Drones and Beyond

To illustrate real-world potential, the researchers simulate an air-to-ground recognition system mounted on a small drone, trained to detect a clothing-shaped target at different brightness levels that correspond to starlit conditions. By relating the device’s time-dependent response to the contrast of captured images, they build a preprocessing stage that "adapts" the image, boosting useful contrast while staying within realistic sensor behavior. A popular object-detection network (YOLOv5) trained on this adapted data attains over 95 percent recognition accuracy even at the lowest tested light level. In plain terms, the work shows that by combining owl-like dark adaptation with built-in synaptic learning directly at the sensor, it is possible to push machine vision down to conditions where traditional cameras fail, while using far less energy. Such technology could eventually underpin search-and-rescue drones, autonomous explorers, or astronomy instruments that see more while shining less.
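The adaptation-based preprocessing can be illustrated with a minimal sketch. It assumes, purely for illustration, that the gain rises toward a ceiling along a saturating exponential in adaptation time and that pixel values lie between 0 and 1. The functions `adaptation_gain` and `adapt_image`, and the parameters `max_gain` and `tau`, are hypothetical; the authors derive their mapping from the measured device response, which is not reproduced here.

```python
import math


def adaptation_gain(t, max_gain, tau):
    """Gain after adapting for time t (assumed saturating exponential)."""
    return 1.0 + (max_gain - 1.0) * (1.0 - math.exp(-t / tau))


def adapt_image(image, t, max_gain=8.0, tau=1.0):
    """Scale pixel values in [0, 1] by the adaptation gain, clipping at 1.

    `image` is a list of rows, each a list of floats. The default
    max_gain and tau are placeholders, not measured values.
    """
    g = adaptation_gain(t, max_gain, tau)
    return [[min(1.0, px * g) for px in row] for row in image]


# Hypothetical dim frame: a faint target pixel next to a brighter one.
dim = [[0.01, 0.5]]
print(adapt_image(dim, t=0.0))    # no adaptation yet: values unchanged
print(adapt_image(dim, t=100.0))  # fully adapted: faint pixel boosted
```

Clipping at 1 stands in for the idea of staying within realistic sensor behavior: bright regions saturate rather than growing without bound, while faint regions gain contrast.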

Citation: Zhao, Z., Cao, Y., Huang, S. et al. Owl-vision-inspired near sensor computing. Nat Commun 17, 2676 (2026). https://doi.org/10.1038/s41467-026-69123-7

Keywords: low-light vision, neuromorphic sensor, owl-inspired imaging, near-sensor computing, night-time object detection