Clear Sky Science

Transfer learning in DeepLC improves LC retention time prediction across substantially different modifications and setups

Why predicting chemistry timing matters

When scientists study the proteins in our cells, they often rely on a technique that first sends tiny protein fragments, called peptides, through a liquid-filled column before weighing them in a mass spectrometer. How long each peptide spends in the column, its “retention time”, is highly informative, helping researchers recognize and confirm what they are measuring. But because each laboratory uses slightly different instruments and settings, computer models that predict these retention times often lose accuracy when moved from one setup to another. This article shows how a machine-learning technique called transfer learning can make those predictions much more reliable and flexible across many experimental conditions.

Timing the journey of protein fragments

In protein research, liquid chromatography–mass spectrometry is the workhorse method. The liquid chromatography step separates thousands of peptides based on their chemical properties, so they do not all arrive at the detector at once. The resulting retention time, alongside the peptide’s measured mass, gives scientists a powerful two-dimensional fingerprint. Over the past decade, researchers have trained computer models to predict retention times directly from peptide sequences. These predictions improve the confidence of peptide identifications, help design better experiments, and are essential for building large, computer-generated spectral libraries used in modern high-throughput workflows.

The problem of changing lab conditions

Unfortunately, retention time is highly sensitive to details such as solvent acidity, column material, pressure, and temperature. Even small changes can reshuffle the order in which peptides emerge from the column. Traditional approaches try to fix this by “calibrating” a model trained elsewhere with a small set of reference peptides, assuming that the order in which peptides elute stays the same. When that assumption breaks – for example when the chemistry of the column or the sample pH changes – calibration can fail badly. Another option is to train an entirely new model for each setup, but this demands many well-measured peptides, which are not always available, especially for rare or unusual chemical modifications.
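
To make the calibration idea concrete, here is a minimal sketch. It assumes the simplest possible calibration, a straight line fitted between the old model's predictions and the times observed for a few reference peptides. The article does not say which calibration function is used in practice, and all numbers and function names below are hypothetical illustrations, not data or code from the study.

```python
from statistics import mean

def fit_linear_calibration(predicted, observed):
    """Least-squares line mapping old-model predictions onto observed times."""
    mean_pred, mean_obs = mean(predicted), mean(observed)
    sxx = sum((p - mean_pred) ** 2 for p in predicted)
    sxy = sum((p - mean_pred) * (o - mean_obs)
              for p, o in zip(predicted, observed))
    slope = sxy / sxx
    intercept = mean_obs - slope * mean_pred
    return slope, intercept

def calibrate(predicted, slope, intercept):
    """Apply the fitted line to a list of predictions."""
    return [slope * p + intercept for p in predicted]

def mean_abs_error(estimates, truth):
    return mean(abs(e - t) for e, t in zip(estimates, truth))

# Hypothetical predictions (minutes) from a model trained on another setup.
predicted = [10.0, 20.0, 30.0, 40.0, 50.0]

# Case 1 (assumed): the new setup stretches and shifts the gradient,
# but peptides still elute in the same order.
observed_same_order = [2.0 * p + 5.0 for p in predicted]

# Case 2 (assumed): the same observed times, but the elution order is reshuffled.
observed_reshuffled = [25.0, 85.0, 45.0, 105.0, 65.0]

slope, intercept = fit_linear_calibration(predicted, observed_same_order)
mae_same_order = mean_abs_error(
    calibrate(predicted, slope, intercept), observed_same_order)

slope, intercept = fit_linear_calibration(predicted, observed_reshuffled)
mae_reshuffled = mean_abs_error(
    calibrate(predicted, slope, intercept), observed_reshuffled)

print(f"MAE, same elution order: {mae_same_order:.2f} min")
print(f"MAE, reshuffled order:   {mae_reshuffled:.2f} min")
```

In this toy case the fitted line reproduces the observed times when the elution order is preserved, but leaves a large error once the order changes, because a single smooth mapping cannot swap the positions of two peptides.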

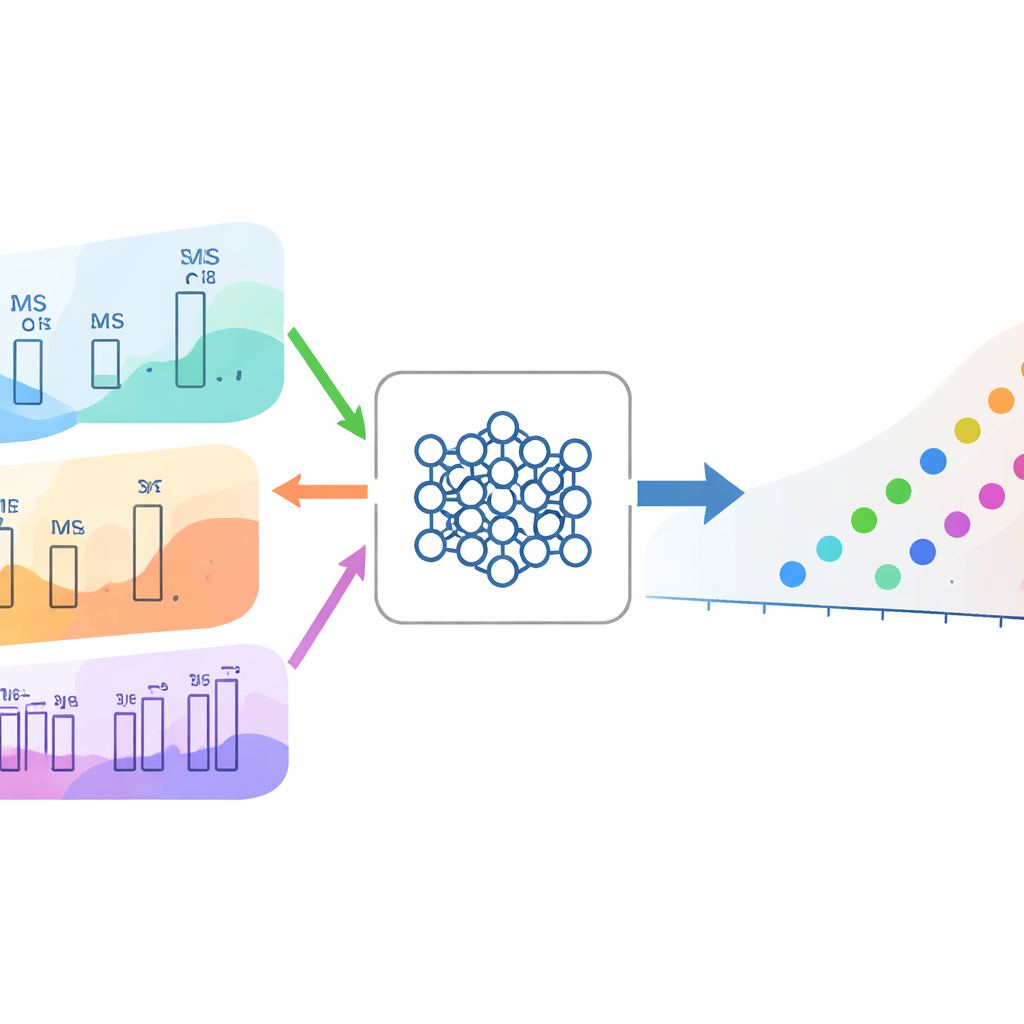

Reusing knowledge with transfer learning

The authors build on DeepLC, a deep-learning model that already predicts retention times for many peptide types. Instead of starting from scratch for every new situation, they reuse a model that was trained on a large, high-quality data set and fine-tune it on a much smaller collection of peptides from the new setup. Across 474 data sets drawn from hundreds of public experiments, this transfer-learning strategy almost always beats both simple calibration and training a new model from randomly initialized parameters. The gains are especially clear when only a few hundred to a few thousand training peptides are available, a common scenario in real studies. Even when many examples exist, transfer learning still tends to provide slightly better accuracy.
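
The difference between fine-tuning and training from scratch can be illustrated with a deliberately simple toy. The sketch below is not DeepLC and does not use its API: it assumes a plain linear model over 20 made-up numeric peptide features, synthetic data, and a "new setup" whose true behavior differs only slightly from the original one. Every name and number is a hypothetical choice for illustration.

```python
import random

def predict(weights, features):
    return sum(w * x for w, x in zip(weights, features))

def train(initial_weights, data, epochs=200, lr=0.05):
    """Full-batch gradient descent on mean squared error from a given start."""
    weights = list(initial_weights)
    n = len(data)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for features, target in data:
            err = predict(weights, features) - target
            for j, xj in enumerate(features):
                grad[j] += 2.0 * err * xj / n
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights

def mean_abs_error(weights, data):
    return sum(abs(predict(weights, x) - y) for x, y in data) / len(data)

rng = random.Random(0)   # fixed seed so the toy is reproducible
N_FEATURES = 20          # assumed number of peptide descriptors

def make_peptides(true_weights, count):
    """Synthetic peptides: random features, noise-free retention times."""
    data = []
    for _ in range(count):
        x = [rng.gauss(0.0, 1.0) for _ in range(N_FEATURES)]
        data.append((x, predict(true_weights, x)))
    return data

# Assumed: the new setup behaves like the old one plus a small shift.
source_truth = [rng.uniform(-1.0, 1.0) for _ in range(N_FEATURES)]
target_truth = [w + rng.uniform(-0.1, 0.1) for w in source_truth]

source_train = make_peptides(source_truth, 200)  # large original data set
target_train = make_peptides(target_truth, 10)   # few peptides, new setup
target_test = make_peptides(target_truth, 200)   # held-out, new setup

zero_init = [0.0] * N_FEATURES
pretrained = train(zero_init, source_train)      # model from the old setup
scratch = train(zero_init, target_train)         # new model, no prior knowledge
fine_tuned = train(pretrained, target_train)     # transfer learning

scratch_mae = mean_abs_error(scratch, target_test)
fine_tuned_mae = mean_abs_error(fine_tuned, target_test)
print(f"from scratch: {scratch_mae:.3f}")
print(f"fine-tuned:   {fine_tuned_mae:.3f}")
```

With only ten training peptides for twenty unknowns, the from-scratch model cannot pin down all of its parameters, whereas the fine-tuned model only has to correct the small gap between the old and new setups. This mirrors, in miniature, why the benefit is described as largest when training data are scarce.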

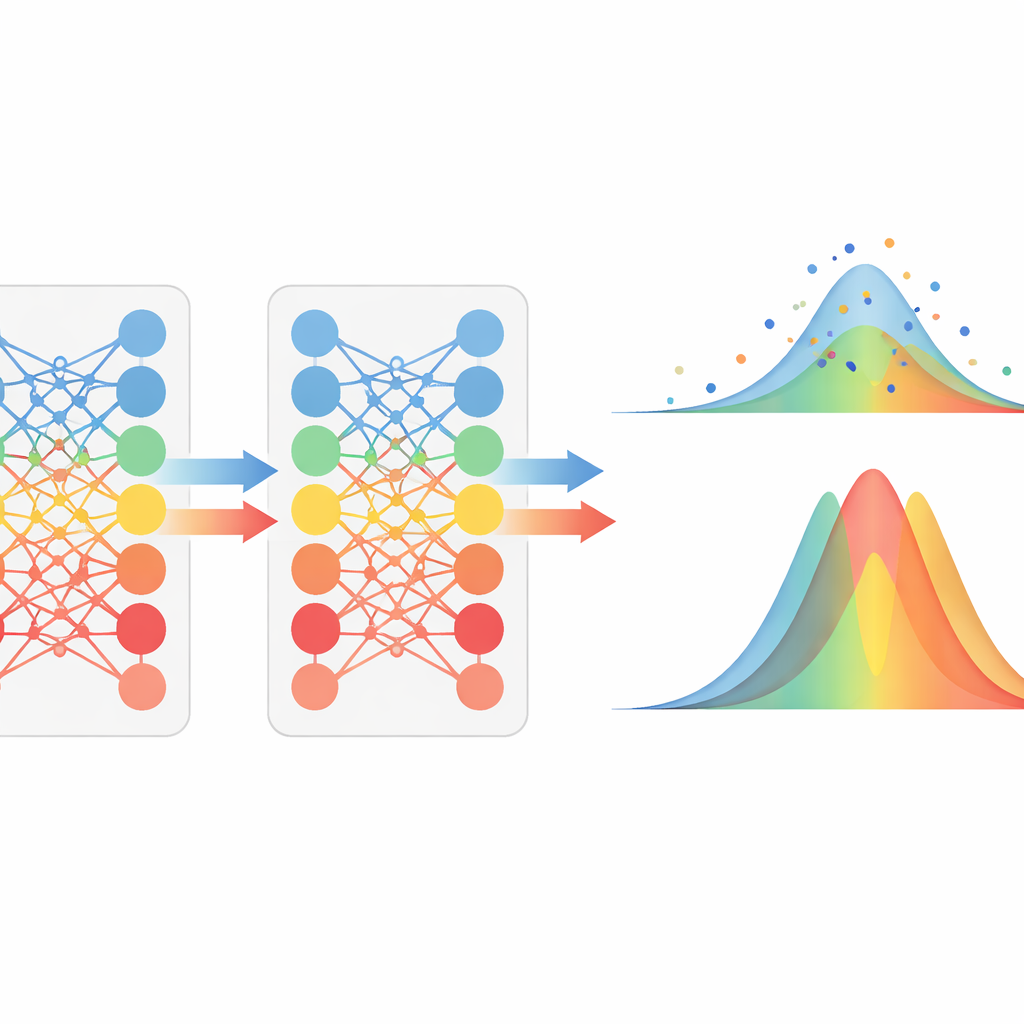

Handling unusual chemistries and extreme conditions

To test how far this approach can be pushed, the team examined very challenging scenarios. In one, peptides carried a bulky chemical label that makes them much more “greasy,” strongly shifting their retention times. In another, the liquid in the column was made basic rather than acidic, fundamentally changing how peptides interact with the column. In both cases, simply calibrating an old model failed, and even a newly trained model needed many examples to reach good accuracy. Transfer learning, however, adapted quickly, achieving similar or better performance with two to three times fewer training peptides. The method also improved predictions for a wide panel of post-translational modifications that were never seen during training, indicating that the model’s prior knowledge about peptide chemistry transfers to new modifications.

What this means for future protein studies

For non-specialists, the central message is that reusing what a neural network has already learned about peptide behavior makes it much easier to obtain precise timing predictions under new experimental conditions. Instead of laboriously collecting large training sets or accepting poor performance from simple calibration, researchers can fine-tune an existing DeepLC model with a modest number of examples and still achieve highly accurate retention times. This makes advanced prediction tools more robust and more accessible, enabling reliable analyses across different instruments, chemical settings, and rare peptide modifications, and ultimately helping scientists read the protein world with greater clarity and efficiency.

Citation: Bouwmeester, R., Nameni, A., Declercq, A. et al. Transfer learning in DeepLC improves LC retention time prediction across substantially different modifications and setups. Nat Commun 17, 2601 (2026). https://doi.org/10.1038/s41467-026-68981-5

Keywords: proteomics, liquid chromatography, retention time prediction, deep learning, transfer learning