Clear Sky Science

Homogeneous integration of two-dimensional material-based optoelectronic neurons and ferroelectric synapses for neuromorphic vision

Smart vision closer to the eye

Today’s cameras and computers burn a lot of power shuttling images back and forth between separate chips for sensing, memory, and processing. This paper describes a new kind of tiny "electronic eye" that combines all three jobs in one material. By mimicking how the human retina turns light into electrical spikes, the researchers show a path toward small, low‑energy vision systems that could help cars, robots, and portable devices see and react in real time.

Why current machine vision wastes effort

Most digital vision systems follow a familiar recipe: a camera sensor records light, data are shipped to memory, and a processor crunches the numbers. Because these pieces sit apart, raw images must be repeatedly read, moved, and rewritten, which costs time and energy. This becomes a serious problem for tasks like driver assistance or drones, where fast, continuous video must be analyzed at the edge. The brain avoids this bottleneck by doing early processing directly in the retina, where light‑sensitive cells and nerve connections are tightly interwoven. The authors aim to bring a similar "in‑sensor" strategy to electronics, using hardware that naturally speaks in neural spikes rather than conventional digital signals.

A light‑sensitive neuron built from a sheet of atoms

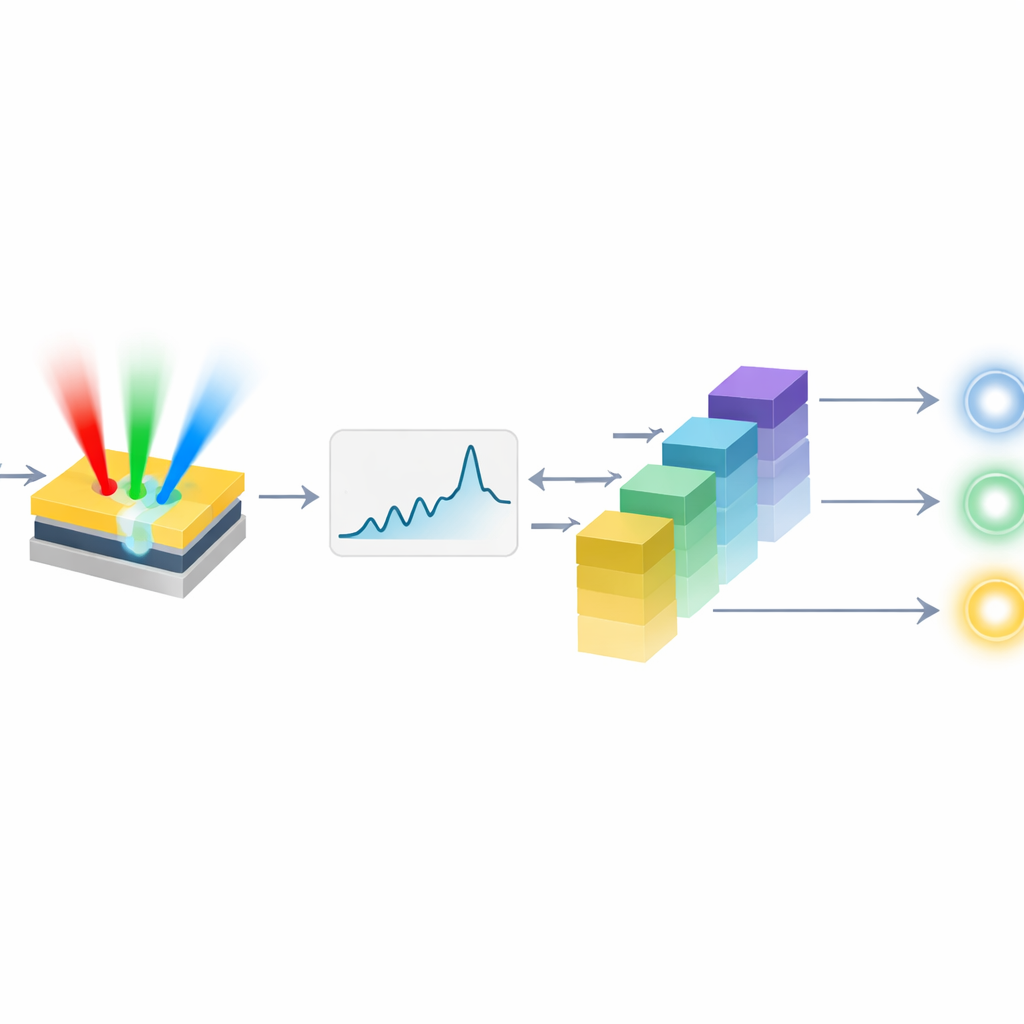

At the heart of the work is a light‑driven artificial neuron made from molybdenum disulfide (MoS2), a two‑dimensional semiconductor only a few atoms thick. When light hits this device, charges become trapped at its interface and gradually raise its electrical output, much as a biological neuron’s membrane potential rises while it integrates incoming signals. Once this output crosses a set threshold, a small circuit forces the device to emit a brief spike and then automatically reset, ready for the next batch of light. Because the same tiny transistor both senses light and accumulates it over time, no bulky capacitor is needed. The neuron responds to different colors (red, green, and blue) and can encode images in two useful ways: by how often it fires spikes, and by how long it waits before its first spike after a change in brightness.
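
The accumulate, fire, and reset cycle described above can be illustrated with a toy model. The following is a minimal sketch, not the authors' device model: it assumes the output rises linearly with light intensity, uses arbitrary units, and ignores any leak or color dependence. The names `simulate_neuron`, `gain`, and `threshold` are hypothetical.

```python
def simulate_neuron(intensity, steps=160, gain=0.0625, threshold=1.0):
    """Return the time steps at which a toy light-driven neuron spikes.

    Assumption: each time step adds gain * intensity to the internal
    state (a stand-in for trapped charge raising the device output).
    """
    state = 0.0
    spike_times = []
    for t in range(steps):
        state += gain * intensity      # light gradually raises the output
        if state >= threshold:         # threshold crossed: emit a spike
            spike_times.append(t)
            state = 0.0                # automatic reset
    return spike_times


def firing_rate(spike_times, steps=160):
    """Rate code: spikes per time step over the observation window."""
    return len(spike_times) / steps


def first_spike_latency(spike_times):
    """Timing code: step index of the first spike, or None if silent."""
    return spike_times[0] if spike_times else None


dim = simulate_neuron(1.0)
bright = simulate_neuron(4.0)
print(firing_rate(dim), firing_rate(bright))
print(first_spike_latency(dim), first_spike_latency(bright))
```

In this sketch brighter light yields both a higher firing rate and a shorter wait before the first spike, which is the sense in which the two codes carry the same brightness information in different forms.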

Electronic synapses that remember

To complement the neurons, the team builds artificial synapses: devices whose electrical conductance can be tuned and then retained. These are based on ferroelectric field‑effect transistors, where a special oxide layer keeps an internal electric polarization even after the control voltage is removed. By applying a sequence of brief voltage pulses, the conductance of each synapse can be stepped up or down across about 50 stable levels, echoing the strengthening and weakening of connections between real neurons during learning. The design separates the ferroelectric layer from the main channel with an insulating buffer, which improves stability and allows the memory window to be adjusted through the device geometry. The synapses operate like tiny variable resistors, ideal for performing the multiply‑and‑add operations that underlie neural network computation.
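
To make the idea of a pulse-programmed, multi-level weight concrete, here is a minimal sketch under simplifying assumptions: conductance is normalized to the range 0 to 1, the 50 levels are evenly spaced, and each pulse moves the device exactly one level. Real ferroelectric devices typically respond nonlinearly, so the `Synapse` class and `weighted_sum` helper below are hypothetical illustrations rather than a model of the measured devices.

```python
class Synapse:
    """Toy synapse with a fixed number of stable conductance levels."""

    def __init__(self, levels=50, g_min=0.0, g_max=1.0):
        self.levels = levels
        self.g_min = g_min
        self.g_max = g_max
        self.level = 0                 # start at the lowest conductance

    def pulse(self, polarity):
        """Apply one programming pulse: +1 steps up, -1 steps down."""
        step = 1 if polarity > 0 else -1
        # Clamp so the device saturates at its lowest or highest level.
        self.level = max(0, min(self.levels - 1, self.level + step))

    @property
    def conductance(self):
        """Stored weight; retained until the next pulse (non-volatile)."""
        span = self.g_max - self.g_min
        return self.g_min + span * self.level / (self.levels - 1)


def weighted_sum(inputs, synapses):
    """Multiply-and-add: each input is scaled by its synapse and summed."""
    return sum(v * s.conductance for v, s in zip(inputs, synapses))


strong, weak = Synapse(), Synapse()
for _ in range(60):                    # more pulses than levels: saturates
    strong.pulse(+1)
print(weighted_sum([1.0, 0.5], [strong, weak]))
```

In a physical array the sum would come from currents adding on a shared wire, with input voltages playing the role of `inputs`; the loop here only mimics that arithmetic.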

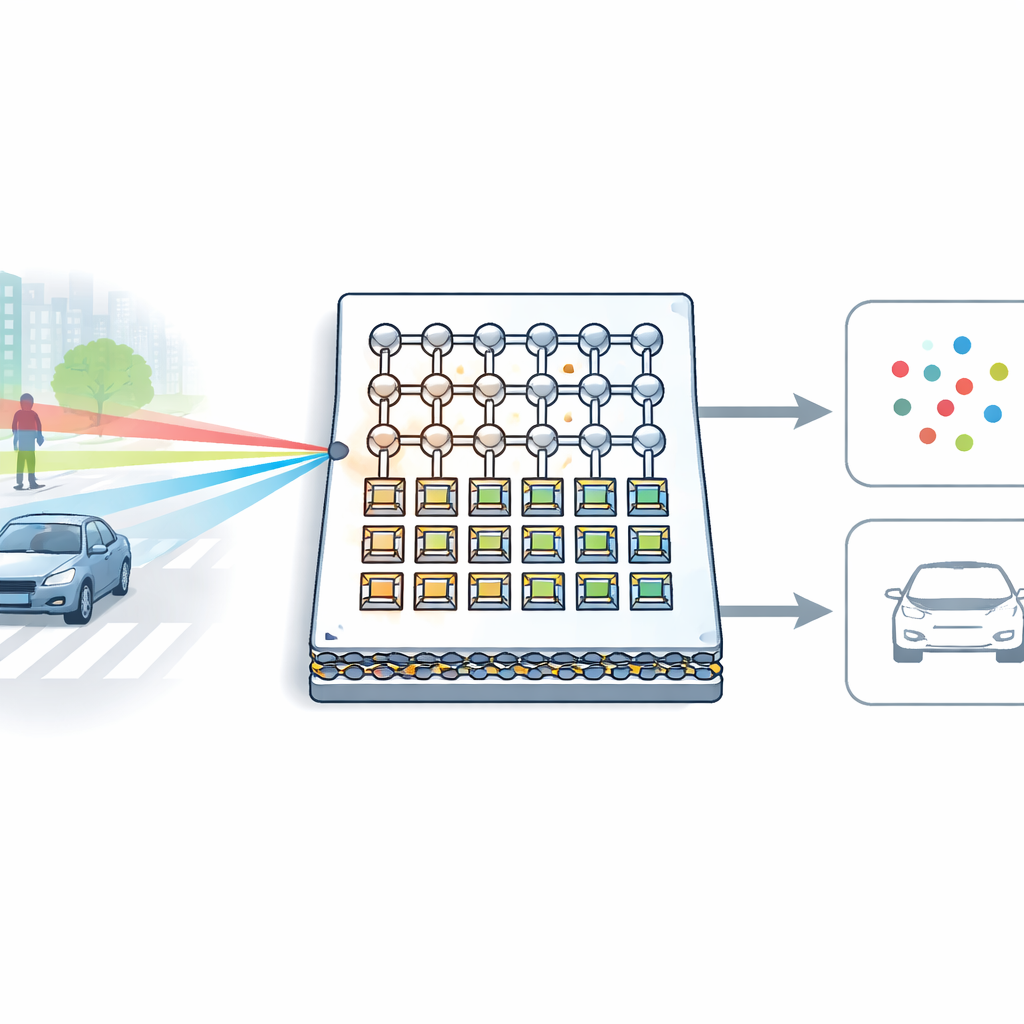

Putting the pieces together for seeing and recognizing

The researchers then show that both neurons and synapses can be built from MoS2 on the same wafer, forming a compact array where light‑sensing neurons feed their spikes directly into a grid of memory‑bearing synapses. A simple circuit board hosts the remaining neuron electronics. In tests and detailed simulations, the system first encodes color patterns into spike trains and then classifies them with a small spiking neural network, reaching about 92% accuracy on basic color‑recognition tasks. Pushing further, the authors model a larger network that uses their measured device behavior to detect vehicles and pedestrians in road images. After training, this spike‑based network correctly identifies objects in a driving dataset about 94% of the time, while still relying on the hardware’s built‑in timing and rate codes for robustness and speed.
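
The data flow from light to spikes to a decision can be sketched in a few lines. This is only an illustration of the pipeline's shape: the weights below are hand-set rather than trained, the network is a single layer rather than the spiking network used in the paper, and all names (`spike_counts`, `WEIGHTS`, `classify`) and parameter values are assumptions.

```python
def spike_counts(rgb, window=64, gain=0.125):
    """Rate-encode each color channel (0..1) as a spike count.

    Assumption: one toy neuron per channel, accumulating linearly
    and resetting after each spike.
    """
    counts = []
    for intensity in rgb:
        state, n = 0.0, 0
        for _ in range(window):
            state += gain * intensity
            if state >= 1.0:
                n += 1
                state = 0.0
        counts.append(n)
    return counts


# Hypothetical hand-set synaptic weights: one row per class,
# one column per input channel (R, G, B).
WEIGHTS = {
    "red":   [1.0, 0.0, 0.0],
    "green": [0.0, 1.0, 0.0],
    "blue":  [0.0, 0.0, 1.0],
}


def classify(rgb):
    """Pick the class whose weighted spike count is largest."""
    counts = spike_counts(rgb)
    scores = {
        label: sum(w * c for w, c in zip(weights, counts))
        for label, weights in WEIGHTS.items()
    }
    return max(scores, key=scores.get)


print(classify((1.0, 0.25, 0.0)))
print(classify((0.0, 0.5, 1.0)))
```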

What this means for future electronic eyes

By uniting light sensing, neural‑style encoding, and synaptic memory in a single two‑dimensional material platform, this work moves neuromorphic vision closer to practical chips that can see and decide on their own. The MoS2 neuron closely copies key behaviors of biological cells, and the ferroelectric synapses provide fine‑grained, low‑power weight storage without extra memory blocks. Although today’s demonstration is small and still depends on external circuitry and training in software, the results suggest that future cameras could incorporate layers of such devices directly in the sensor. That would allow machines to filter, recognize, and react to visual scenes on the fly, with far less energy than shuttling every pixel to a distant processor.

Citation: Wang, J., Liu, K., Tiw, P.J. et al. Homogeneous integration of two-dimensional material-based optoelectronic neurons and ferroelectric synapses for neuromorphic vision. Nat Commun 17, 2538 (2026). https://doi.org/10.1038/s41467-026-68905-3

Keywords: neuromorphic vision, spiking neural networks, two-dimensional materials, in-sensor computing, ferroelectric synapses