Clear Sky Science · en

Recurrent connections facilitate occluded object recognition by explaining-away

How the Brain Sees What Isn’t There

In everyday life, we effortlessly recognize objects that are partly hidden—a cat behind a curtain, a car behind a tree. This paper asks how brains, and brain-inspired artificial networks, pull off this feat. The authors show that circuits with feedback loops can use information about the blocking object to mentally “fill in” what’s behind it, revealing a key trick our visual system may rely on when the world is cluttered and incomplete.

Why Hidden Objects Are a Hard Problem

When an object is occluded, many of its usual visual features are missing or distorted. A simple feedforward visual system, where information flows straight from eyes to recognition centers, must guess the hidden object based only on the visible fragments. Biological brains, however, are full of recurrent connections—loops where higher areas talk back to earlier ones. These loops have long been suspected to help with tough tasks like recognizing occluded objects, but it was unclear exactly what advantage they provide or how they change the internal representations of what we see.

Putting Brain-Inspired Networks to the Test

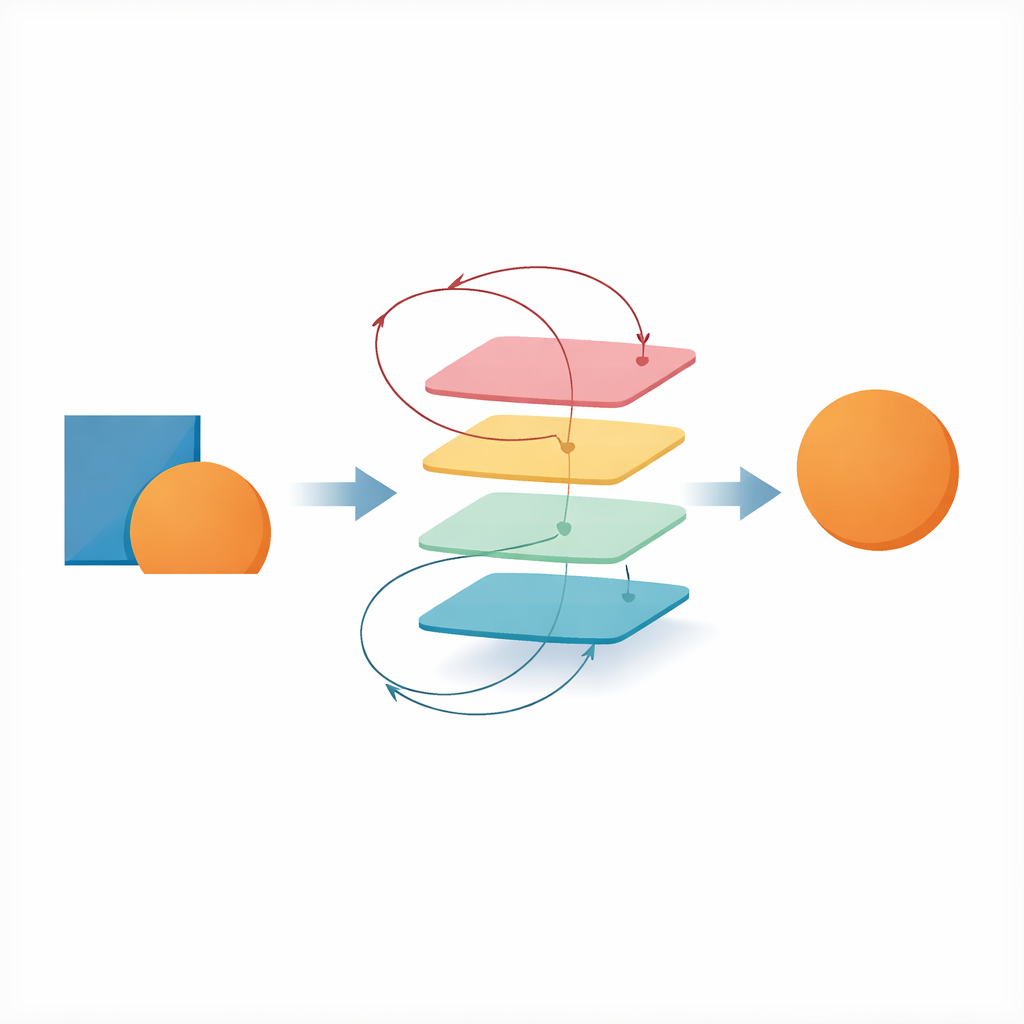

The authors built a large battery of deep convolutional networks that mimic stages of visual processing. Some were purely feedforward, while others had recurrent loops or additional top-down feedback. They trained these models on custom image sets where one fashion item partly covered another. The networks had to identify both the front (occluding) and back (occluded) objects under different task setups. Performance depended less on whether a network was recurrent or feedforward and more on its “computational depth”: the number of sequential processing steps an input passes through. Deep feedforward models could rival or beat recurrent ones at the basic recognition task, showing that recurrence is not magically superior on its own.
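To make the idea of computational depth concrete, here is a minimal sketch that counts sequential processing steps in two toy networks. It is not the authors' architecture: each "layer" is a single scalar unit, and the function names, weights, and step counts are assumptions chosen purely for illustration.

```python
def relu(x):
    """Rectified linear unit for a single scalar activation."""
    return max(0.0, x)


def feedforward(x, weights):
    """Pass x through one toy layer per weight.

    Returns the output and the number of sequential steps taken.
    """
    h, steps = x, 0
    for w in weights:
        h = relu(w * h)
        steps += 1
    return h, steps


def recurrent(x, w_in, w_rec, time_steps):
    """One toy layer whose output is fed back into itself.

    The same weights are reused on every pass, so the unrolled
    computation resembles a feedforward stack with shared weights.
    """
    h, steps = 0.0, 0
    for _ in range(time_steps):
        h = relu(w_in * x + w_rec * h)
        steps += 1
    return h, steps


# Hypothetical weights: a 4-layer feedforward stack versus a single
# recurrent layer unrolled for 4 time steps.
_, ff_depth = feedforward(1.0, [0.9, 1.1, 0.8, 1.2])
_, rec_depth = recurrent(1.0, w_in=0.9, w_rec=0.5, time_steps=4)
print(ff_depth, rec_depth)
```

Both toy networks apply four nonlinear steps in sequence, which is why a sufficiently deep feedforward model can plausibly match a recurrent one on raw accuracy: under this simplified count, the two differ in how weights are shared rather than in how many steps the input passes through.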

A Special Trick: Explaining Away the Occluder

Although depth mattered most for raw accuracy, recurrent networks showed a distinctive advantage in how they used context. When these networks were asked first to identify the front object and only then the hidden one, their performance on the hidden object improved compared with when they classified it alone. This pattern did not appear in ordinary feedforward networks that output both labels at once. The authors interpret this as “explaining away”: once the system has recognized the occluder, it can treat the odd, missing features in the image as caused by that occluder, rather than as evidence for some strange new object. In more realistic 3D scenes and in a primate-inspired model (CORnet), the same ordering—front object before hidden object—also boosted recognition.
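The logic of explaining away can be illustrated with a small probability calculation. The sketch below is a toy Bayesian example, not the networks used in the study: the object labels, the "missing sleeve feature" observation, and every probability are made-up assumptions. It compares how strongly a missing feature counts against a hypothesis when the occluder is unknown and when it has already been recognized.

```python
# Toy setup (all values are illustrative assumptions):
# the hidden object is either a "shirt" or some "other" item, and we
# observe that a sleeve-like feature is missing from the image.
P_SHIRT = 0.5      # prior that the hidden object is a shirt
P_OCCLUDER = 0.5   # prior that an occluder covers the sleeve region

# P(sleeve feature missing | hidden object, occluder present?)
P_MISSING = {
    ("shirt", True): 0.80,   # occluder plausibly hides the sleeve
    ("shirt", False): 0.10,  # an unoccluded shirt rarely lacks a sleeve
    ("other", True): 0.95,
    ("other", False): 0.90,
}


def posterior_shirt(occluder_known):
    """P(hidden object is a shirt | sleeve feature missing).

    If occluder_known is True, the occluder has already been identified
    as present; otherwise its presence is marginalized out using the prior.
    """
    def likelihood(obj):
        if occluder_known:
            return P_MISSING[(obj, True)]
        return (P_OCCLUDER * P_MISSING[(obj, True)]
                + (1 - P_OCCLUDER) * P_MISSING[(obj, False)])

    numerator = P_SHIRT * likelihood("shirt")
    denominator = numerator + (1 - P_SHIRT) * likelihood("other")
    return numerator / denominator


print("occluder unknown:", round(posterior_shirt(False), 3))
print("occluder known:  ", round(posterior_shirt(True), 3))
```

With these assumed numbers, the belief that the hidden object is a shirt is higher once the occluder is known, because the missing sleeve is attributed to the occluder instead of counting as evidence against a shirt. This mirrors, in a much simpler form, the benefit of identifying the front object first.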

Seeing the Same Effect in People

To ask whether humans use a similar strategy, the researchers ran an online experiment. Participants briefly saw a single object, then a scene where one object occluded another, and finally had to choose which of two options had been the hidden object. On some trials, the initial single object was the same as the later occluder; on others it was unrelated. When people had just seen the actual occluder, they identified the hidden object more accurately and responded faster, across a range of occlusion levels. This suggests that our brains, like the recurrent networks, benefit from processing the blocking item first and then using that knowledge to interpret the partial evidence for what lies behind.

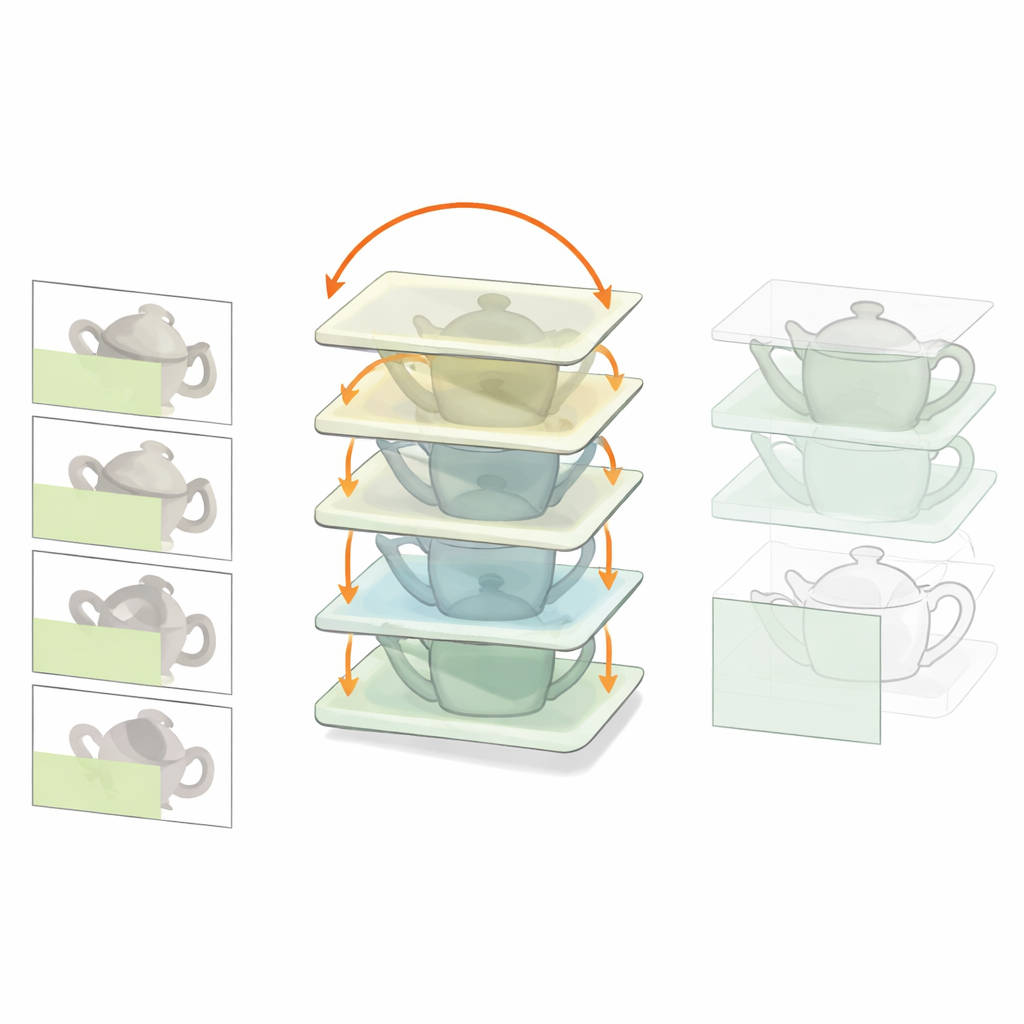

Rebuilding Hidden Images from the Inside

To dig deeper into mechanism, the authors designed a more biologically inspired model, Recon-Net, based loosely on interactions between visual cortex and prefrontal cortex. Recon-Net receives an image containing an occluded object plus a separate view of the occluder and iteratively transforms an internal representation until it matches what an unoccluded version of the hidden object should look like. Strikingly, classifiers trained only on clean, unoccluded images can recognize the outputs of Recon-Net almost as well as if they had been trained directly on occluded examples. This means the recurrent processing effectively “reconstitutes” a clean internal picture of the hidden object, even though the pixels are missing.

What This Means for Brains and Machines

Overall, the study shows that feedback loops are not simply about raw performance, but about a qualitatively different way of using context. Recurrent connections naturally support explaining-away: they allow the visual system to account for how an occluder distorts what we see and to restore a stable internal representation of the hidden object. At the same time, the authors find that training on heavily occluded images can leave responses to clear images largely unchanged, potentially easing learning in real brains by avoiding constant rewiring. These insights point toward a common principle for both neuroscience and artificial intelligence: when the world hides information, smart systems don’t just look harder—they infer why it is missing.

Citation: Kang, B., Midler, B., Chen, F. et al. Recurrent connections facilitate occluded object recognition by explaining-away. Nat Commun 17, 2225 (2026). https://doi.org/10.1038/s41467-026-68806-5

Keywords: occluded object recognition, recurrent neural networks, visual perception, explaining away, computational neuroscience