Clear Sky Science

Training tactile sensors to learn force sensing from each other

Robots That Can Feel and Share Their Sense of Touch

As robots move out of factories and into homes, hospitals, and warehouses, they need a sense we usually take for granted: touch. Just as our fingers automatically adjust when we pick up a potato chip versus a heavy box, future robots must learn how hard to squeeze and when an object is about to slip. This article introduces a new way for robotic "skin" to learn force sensing from other skins, cutting down on costly calibration and nudging machines closer to human-like dexterity.

Why Robot Touch Is So Hard to Get Right

Modern robots already have many types of artificial skin. Some use tiny cameras looking into soft gels, others rely on magnets or electronic grids that sense pressure. Each design excels at certain tasks, but they all speak different "dialects" of touch: the same push on two sensors can produce very different signals. Today, every new sensor usually needs its own laborious training process with precise force meters, repeated thousands of times. Worse, soft materials age and wear out, so this costly calibration must be done again whenever a sensor is replaced.

Borrowing a Page from the Human Brain

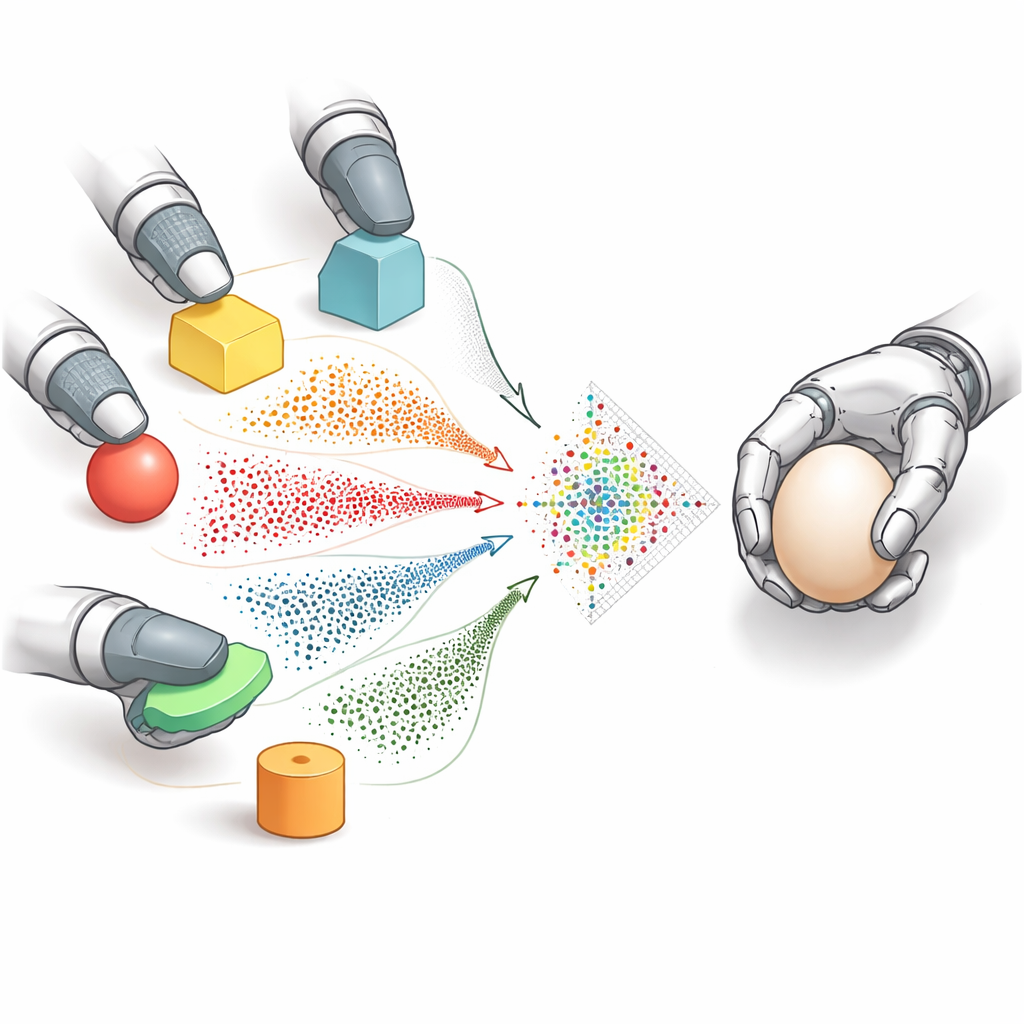

Humans solve a similar problem effortlessly. Our skin is packed with different kinds of touch receptors, yet the brain converts all their signals into a shared internal code. That unified tactile memory lets us estimate how something would feel on a part of the hand that has never touched it before, simply by drawing on past experience. The researchers behind this work mimic that idea in robots. They convert every sensor's output, whether camera images, magnetic readings, or electronic signals, into a common picture-like form: an image of dots, or markers, whose positions stand in for how the skin is deforming. This shared marker representation acts as a simple "language of touch" that any sensor can use.
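To make the dot-based idea concrete, here is a minimal sketch in Python of how a set of marker positions might be drawn as a dot image. It is an illustration only, not the authors' code: the function name `render_markers`, the 64-pixel image size, the 4x4 marker grid, and the 3-pixel shear are all assumptions chosen for the example.

```python
import numpy as np

def render_markers(rest_xy, displacement_xy, size=64, radius=1):
    """Draw displaced markers as white square dots on a black image.

    All names and sizes here are hypothetical, chosen for illustration.
    """
    image = np.zeros((size, size), dtype=np.uint8)
    # Displaced marker positions, rounded to the nearest pixel.
    positions = np.rint(rest_xy + displacement_xy).astype(int)
    for x, y in positions:
        # Clip each dot's bounding box so dots near the border stay in bounds.
        x0, x1 = max(x - radius, 0), min(x + radius + 1, size)
        y0, y1 = max(y - radius, 0), min(y + radius + 1, size)
        if x0 < x1 and y0 < y1:
            image[y0:y1, x0:x1] = 255
    return image

# Hypothetical 4x4 grid of markers at rest, spaced 12 pixels apart.
grid = np.arange(8, 56, 12)  # 8, 20, 32, 44
rest = np.array([(x, y) for y in grid for x in grid], dtype=float)

# Hypothetical sideways shear: every marker shifts 3 pixels to the right.
shear = np.tile([3.0, 0.0], (len(rest), 1))
image = render_markers(rest, shear)
```

In this sketch, any sensor that can report where its surface has moved, however it measures that, ends up producing the same kind of image.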

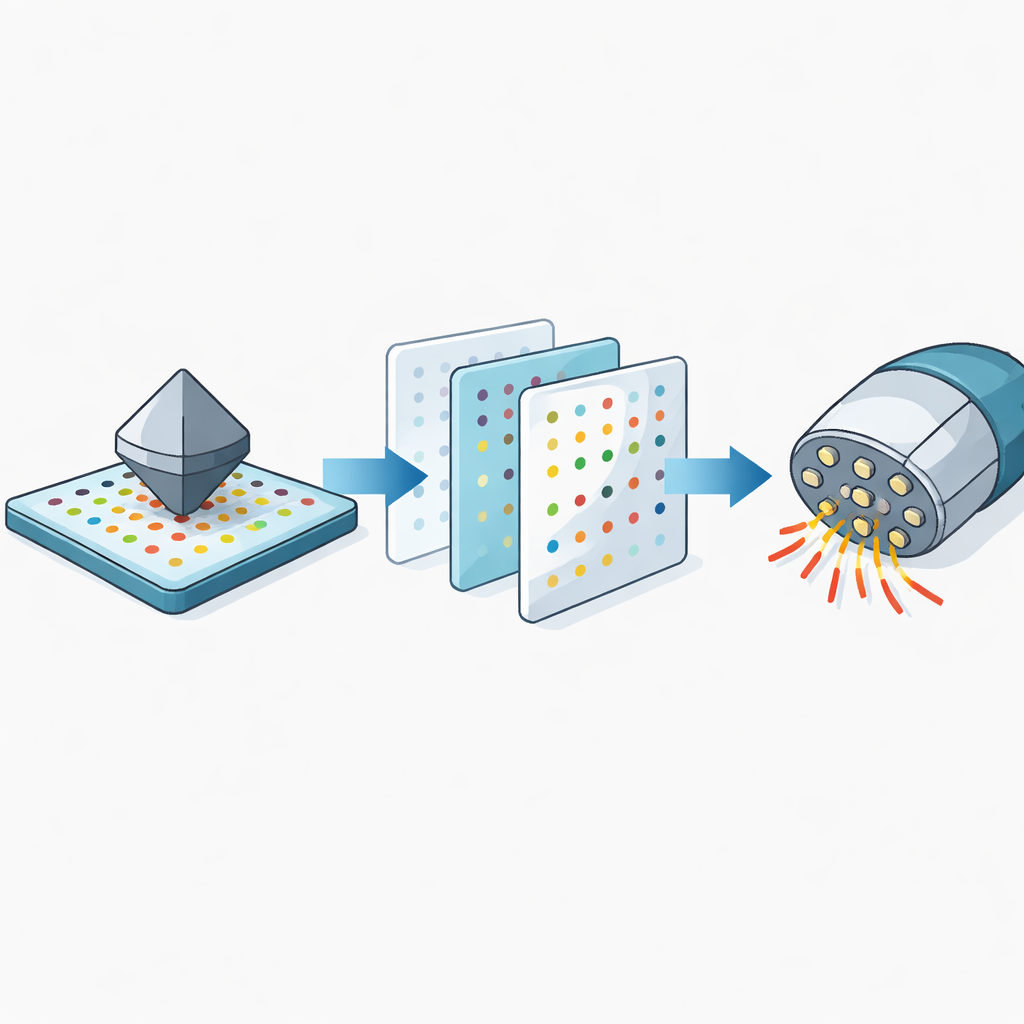

Teaching One Sensor to Imitate Another

Once all sensors speak this dot-based language, the team introduces a translation step called marker-to-marker translation. Using powerful generative models, they train a system that can transform the dot pattern from one sensor into the pattern that a different sensor would produce under the same contact. That means a well-calibrated sensor can effectively "imagine" what an uncalibrated sensor would feel, and generate synthetic training data for it. A second model then looks at short sequences of these dot images to predict how force changes over time in three directions, covering both pushing and sideways shearing.
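The "short sequences" in the second step can be pictured as sliding windows over a recording. The sketch below, again only an illustration, pairs each run of consecutive dot images with the three-direction force at the end of that run. The window length of 4, the placeholder data, and the name `make_windows` are assumptions; the article does not say how the authors build their sequences.

```python
import numpy as np

def make_windows(frames, forces, window=4):
    """Pair each run of `window` consecutive frames with the force
    recorded at the last frame of that run (hypothetical helper)."""
    clips, targets = [], []
    for end in range(window, len(frames) + 1):
        clips.append(frames[end - window:end])
        targets.append(forces[end - 1])
    return np.stack(clips), np.stack(targets)

# Placeholder recording: 10 blank 64x64 dot images and 10 force readings,
# each with three components (two shear directions and one pushing direction).
frames = np.zeros((10, 64, 64), dtype=np.float32)
forces = np.arange(30, dtype=np.float32).reshape(10, 3)

clips, targets = make_windows(frames, forces, window=4)
```

A force-prediction model would then be trained to map each clip to its target; the model itself is beyond the scope of this sketch.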

Handling Real-World Differences in Soft Skin

In practice, different robotic skins are not only shaped and wired differently; they are also made from materials that can be softer or stiffer, and that change as they age. These differences can distort force estimates even if the patterns look similar. The researchers measure how each type of soft material bends under load and build a simple correction step that scales force labels up or down before training. This material compensation greatly reduces errors, especially when transferring knowledge between very soft and very stiff skins.
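One simple way to picture this correction is as a single scale factor applied to every force label. The Python sketch below assumes the factor is the ratio of two measured stiffness values, which is a simplification made for this example; the article says only that labels are scaled up or down, and the stiffness numbers and function names here are hypothetical.

```python
def compensation_factor(source_stiffness, target_stiffness):
    """Scale factor for force labels, ASSUMING force grows in proportion
    to stiffness for the same skin deformation (a simplification)."""
    return target_stiffness / source_stiffness

def compensate_labels(forces, factor):
    """Apply the same scale factor to every component of every force label."""
    return [tuple(component * factor for component in force) for force in forces]

# Hypothetical stiffness values in newtons per millimetre: the calibrated
# source skin is twice as stiff as the uncalibrated target skin.
factor = compensation_factor(source_stiffness=2.0, target_stiffness=1.0)

# Hypothetical force labels (shear x, shear y, push) from the source sensor.
source_labels = [(0.2, 0.0, 1.0), (0.4, -0.2, 2.0)]
target_labels = compensate_labels(source_labels, factor)
```

Under that assumption, the softer target skin is given labels half as large, since the same dot pattern would correspond to a gentler push.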

From Lab Bench to Everyday Manipulation

The team tests their method, called GenForce, on a wide mix of sensors, from multiple copies of the same camera-based pad to very different designs that use magnets or curved, fingertip-like shapes. Across more than 200 combinations in simulation and hardware, GenForce sharply cuts prediction errors compared with simply reusing a model trained on another sensor. In demonstrations, a robot hand equipped with different tactile skins on each finger uses transferred models to delicately grasp fragile items like fruit and potato chips, and to detect and correct slipping objects by coordinating the readings from both sides of a grasp.

What This Means for the Future of Robot Hands

By allowing tactile sensors to learn force sensing from each other instead of starting from scratch, GenForce points toward robot hands that are easier and cheaper to deploy at scale. A single, carefully calibrated sensor could train many others, even of different designs, and pre-trained models could be fine-tuned with only a small amount of new data. For non-specialists, the bottom line is simple: this work makes it more practical for robots to feel how hard they are squeezing and to respond quickly when objects start to slip, bringing us closer to machines that handle the real world with the same confident touch as human hands.

Citation: Chen, Z., Ou, N., Zhang, X. et al. Training tactile sensors to learn force sensing from each other. Nat Commun 17, 2101 (2026). https://doi.org/10.1038/s41467-026-68753-1

Keywords: robot touch, tactile sensors, force sensing, robot manipulation, transfer learning