Clear Sky Science

Counting cells can accurately predict small-molecule bioactivity benchmarks

Why simply counting cells matters

When drug companies test thousands of chemicals, they rely more and more on artificial intelligence to predict which ones will help patients and which might be harmful. This study uncovers a surprising twist: in many widely used test collections, just counting how many cells are left alive after treatment can predict the outcome almost as well as much more complicated methods. That means some headline-grabbing AI successes may really be rediscovering a very basic signal: are the cells dying or not?

Modern drug tests and smart imaging

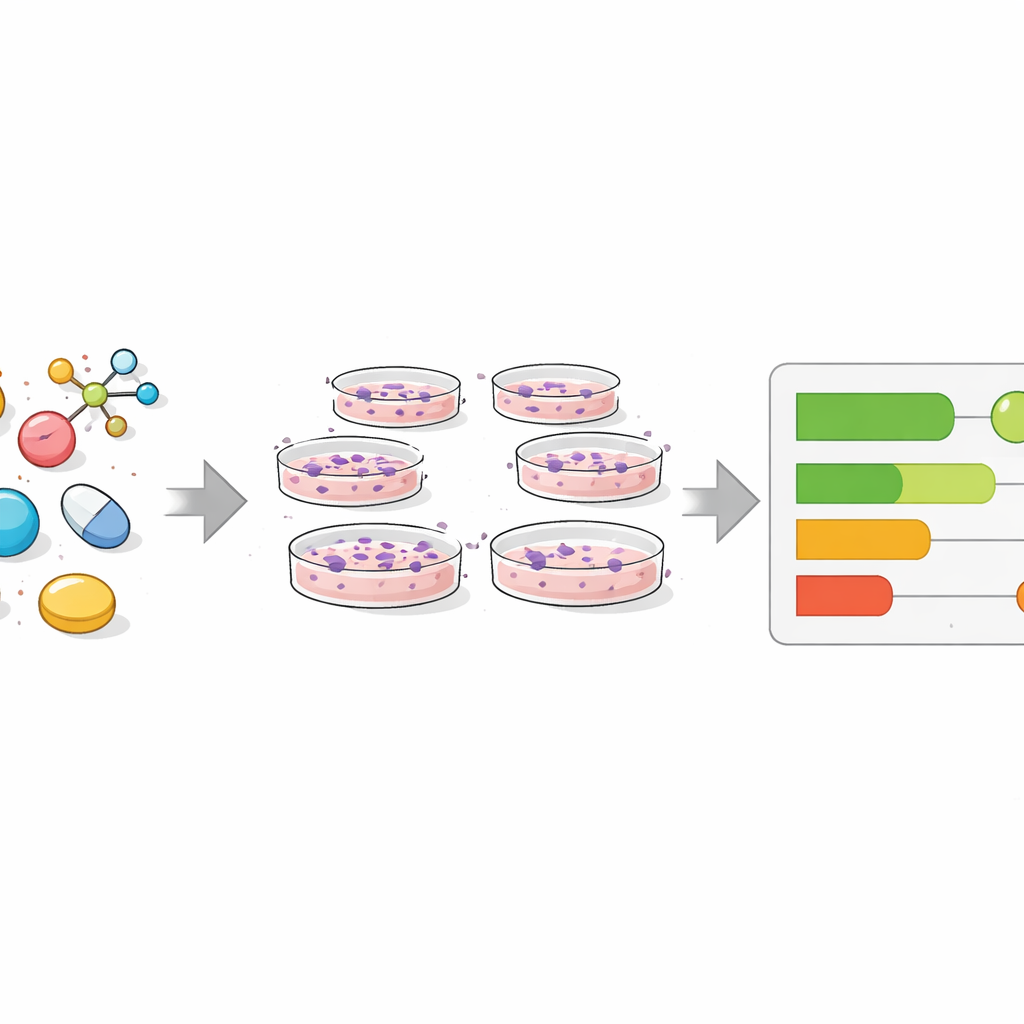

To find new medicines, researchers grow human cells in dishes and expose them to chemicals, then measure how the cells respond. Traditionally, computer models have relied on the molecules’ structures, but such models often fall short when similar-looking compounds behave very differently. Newer approaches use “phenotypic profiling,” in which cells are stained with fluorescent dyes and imaged. A popular method called Cell Painting creates rich pictures of cell shape, structure, and internal organization. From these images, computers extract thousands of numerical features that can be fed into machine-learning models alongside other data such as gene activity.

A simple signal hiding in plain sight

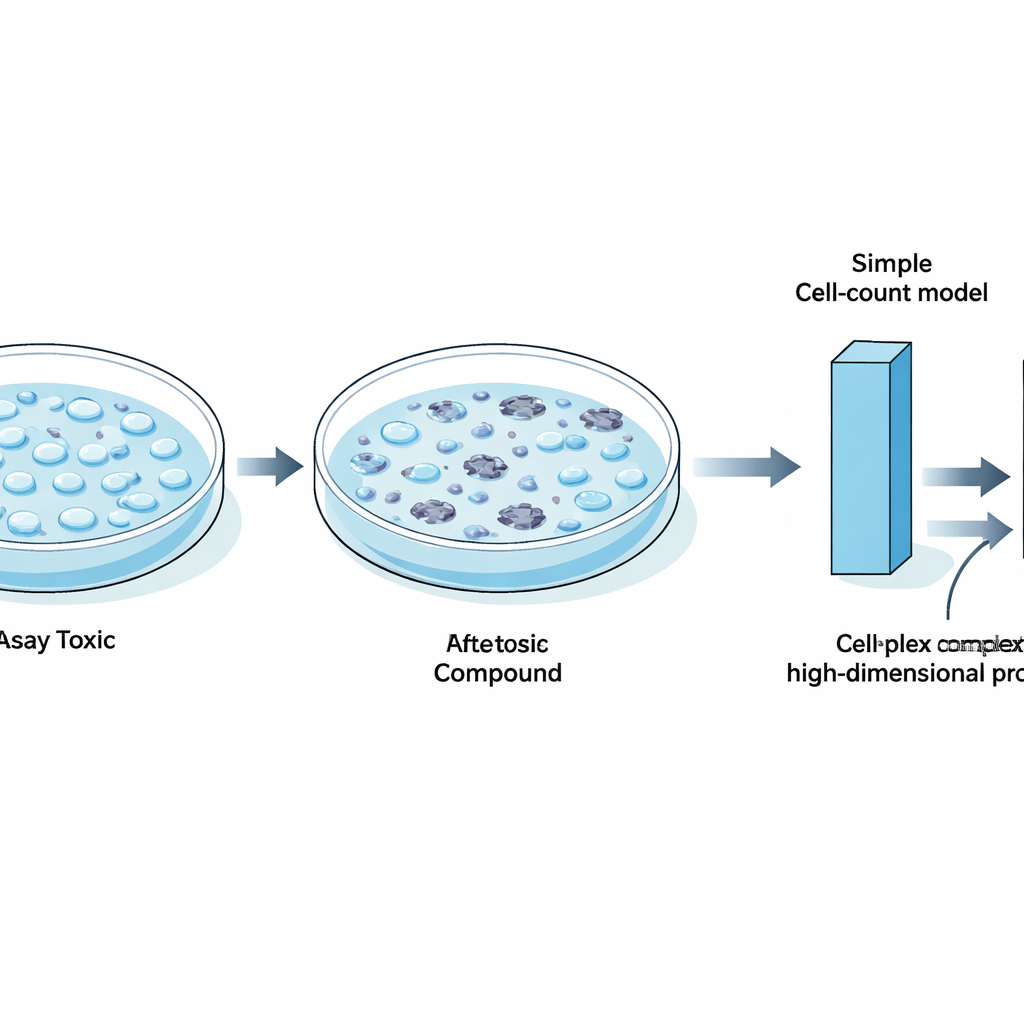

The authors revisited several influential benchmark datasets that many groups use to test new machine-learning techniques. These datasets contain results from hundreds of biological tests, including toxicity screens and measurements of whether compounds hit specific protein targets. By focusing on a single feature from Cell Painting images—the number of cells remaining in each well—they asked how far one simple measure could go in predicting whether a compound was labeled “active” or “inactive” in each test. They found that in a large fraction of assays, especially those involving tumor cell growth or general cell health, active compounds tended to strongly reduce cell count, while inactive ones did not. In these cases, a minimalist model based only on cell count matched or nearly matched the performance of sophisticated neural networks trained on thousands of image features or on gene expression profiles.
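
To make the idea of a cell-count-only predictor concrete, here is a minimal sketch in Python. It is not the authors' code: the function names, the toy numbers, and the choice of ROC AUC as the score are all assumptions made for illustration. Each compound is ranked by how few cells survive, and the sketch measures how well that ranking separates "active" from "inactive" labels.

```python
def roc_auc(labels, scores):
    """ROC AUC computed as the fraction of (active, inactive) pairs in which
    the active compound has the higher score; ties count as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def cell_count_baseline_auc(labels, cell_counts):
    """Hypothetical baseline: fewer surviving cells means a higher 'active' score."""
    scores = [-count for count in cell_counts]
    return roc_auc(labels, scores)


# Toy data invented for this example (1 = active, 0 = inactive).
labels = [1, 1, 1, 0, 0, 0, 0]
cell_counts = [120, 300, 950, 900, 1000, 1100, 980]

auc = cell_count_baseline_auc(labels, cell_counts)
print(f"cell-count baseline AUC: {auc:.3f}")
```

In this toy example, two of the three active compounds wipe out most of the cells, so a single number per well already ranks the compounds almost perfectly. That is the pattern the study reports for viability-driven assays.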

When cell death masquerades as insight

Digging deeper, the team showed that compounds marked as active in many different assays often shared a common trait: they broadly harmed cells. Gene activity data linked these chemicals to stress and cell-death pathways such as apoptosis, suggesting that general toxicity, rather than a precise drug effect, was often driving the signals that models learned. They also demonstrated that some “state-of-the-art” methods, including contrastive learning across images and chemical structures and advanced meta-learning approaches, did not clearly outperform a cell-count baseline in these viability-heavy benchmarks. In some tests, simply reversing the model’s output—because labels had been defined in an unusual way—was enough to match the reported performance of complex few-shot learning systems.

Where richer imaging truly helps

Importantly, the study does not argue that cell counting is all that matters. When the authors assembled a carefully filtered benchmark focusing on 24 well-defined protein targets, and removed heavily toxic and confounded assays, models that used full Cell Painting profiles clearly outperformed those based on cell count alone. Subtle image features related to the texture and distribution of cell structures, such as the endoplasmic reticulum and mitochondria, captured real biology that could not be reduced to simple loss of cells. In dose–response experiments, detailed morphological changes appeared at lower chemical concentrations than those that caused overt cell death, showing that rich image data can reveal early, mechanism-specific effects that a crude cell count would miss.

How to build better tests for smarter models

From these findings, the authors offer practical guidance for the drug discovery community. Benchmark collections should be checked and pruned so they are not dominated by assays that mainly reflect whether cells are alive or dead. Every study, they argue, should include a straightforward cell-count–based model as a baseline, so that any claimed improvement from fancier methods can be judged against the simplest plausible explanation. They also recommend using metrics that are robust to data imbalance, ensuring enough active and inactive examples in test sets, and always considering the biological context of each assay.
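
Two of these recommendations, imbalance-robust metrics and enough examples of each class, can be illustrated with a short Python sketch. It rests on assumptions: balanced accuracy is used here as one example of an imbalance-robust metric and is not necessarily the one the authors chose, and the `min_per_class` threshold is a hypothetical value, not a number from the study.

```python
def balanced_accuracy(labels, predictions):
    """Average of the hit rate on actives and the hit rate on inactives."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = sum(1 for y in labels if y == 0)
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need both active and inactive examples")
    true_pos = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    true_neg = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 0)
    return 0.5 * (true_pos / n_pos + true_neg / n_neg)


def has_enough_examples(labels, min_per_class=10):
    """Check that a test set has at least min_per_class actives and inactives.
    The default of 10 is an illustrative assumption."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = sum(1 for y in labels if y == 0)
    return n_pos >= min_per_class and n_neg >= min_per_class


# Toy, heavily imbalanced assay: 5 actives among 100 compounds.
labels = [1] * 5 + [0] * 95
always_inactive = [0] * 100  # a "model" that never predicts activity

accuracy = sum(1 for y, p in zip(labels, always_inactive) if y == p) / len(labels)
bal_acc = balanced_accuracy(labels, always_inactive)

print(f"plain accuracy:    {accuracy:.2f}")
print(f"balanced accuracy: {bal_acc:.2f}")
print(f"enough examples:   {has_enough_examples(labels)}")
```

A model that never predicts activity looks impressive on plain accuracy simply because inactive compounds dominate, while balanced accuracy shows it is no better than a coin flip. The size check flags that five actives is too few to support strong conclusions under the assumed threshold.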

What this means for future drug discovery

For nonspecialists, the key message is reassuring but sobering: some of the impressive numbers reported for AI in drug discovery may come from learning easy shortcuts rather than deep biological insight. By revealing how far a basic measurement like cell count can go, this work helps reset expectations and encourages more honest comparisons between models. At the same time, it highlights where advanced imaging and machine learning genuinely add value—uncovering subtle, specific changes in cells that simple death-or-survival readouts cannot detect. In the long run, more carefully designed benchmarks should help ensure that computational tools move beyond counting casualties and toward truly understanding how potential medicines work.

Citation: Seal, S., Dee, W., Shah, A. et al. Counting cells can accurately predict small-molecule bioactivity benchmarks. Nat Commun 17, 2436 (2026). https://doi.org/10.1038/s41467-026-68725-5

Keywords: cell viability, phenotypic profiling, Cell Painting, drug discovery, machine learning benchmarks