Clear Sky Science

Neural and computational mechanisms underlying one-shot perceptual learning in humans

Seeing the Hidden Picture

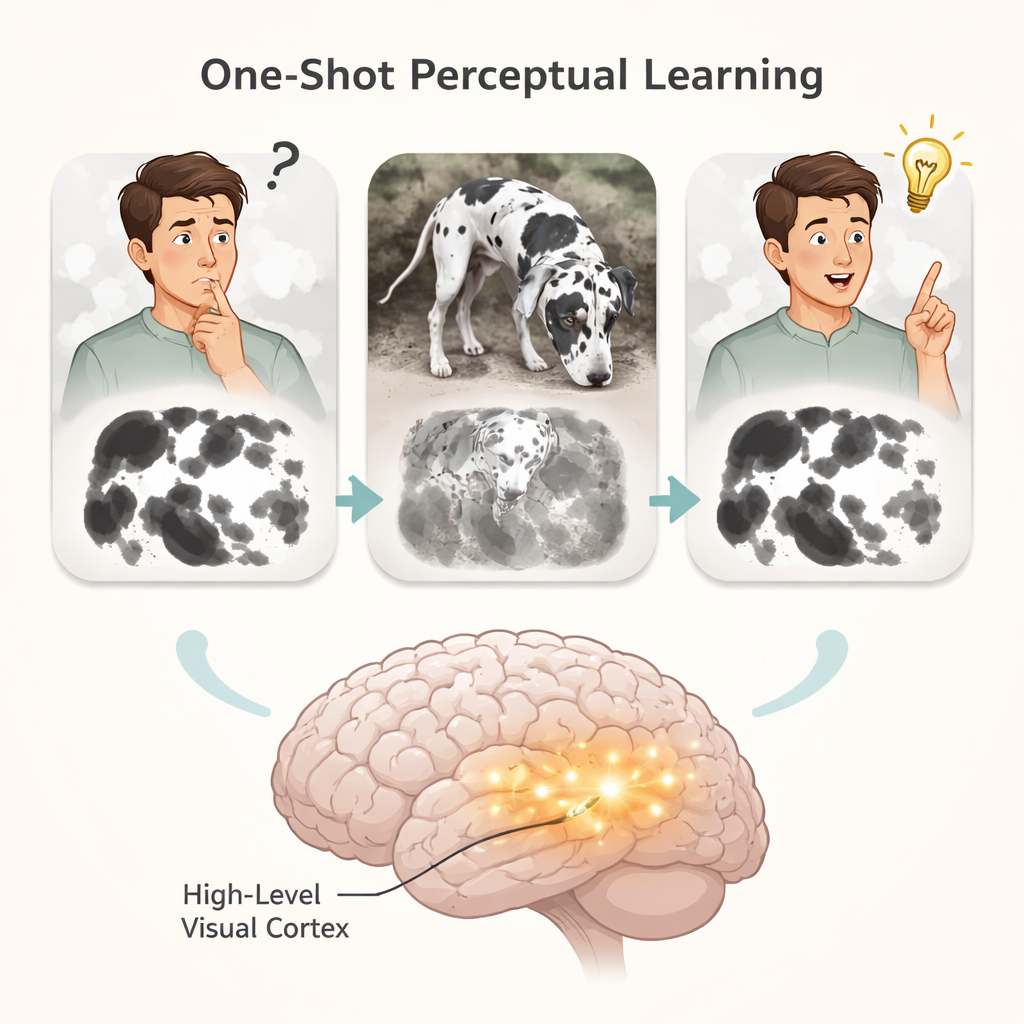

Many people have experienced the “aha!” moment when a confusing, blotchy black-and-white picture suddenly resolves into a clear image of a dog or a face, and once you see it, you can never un-see it. This study asks how a single brief glimpse of a clear picture can permanently change what we see in a muddled version, and what that reveals about how our brains (and future artificial intelligence systems) learn from just one example.

From Blurry Blobs to Instant Recognition

The researchers used classic “Mooney images”: highly simplified black-and-white pictures that are hard to recognize until you see the original grayscale photo they came from. Volunteers first tried to name what they saw in these difficult images. Later, they briefly viewed the matching clear photos, and then tried the hard images again. After that single exposure, people could suddenly recognize the once-mysterious pictures, and this improvement lasted. By carefully altering the clear photos—flipping them left-right, rotating them, changing their size, or shifting their position on the screen—the team mapped what kind of visual information the brain actually stores during this one-shot learning.
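To make the image manipulations described above concrete, here is a minimal sketch in plain Python. It assumes an image is a small grid of brightness values between 0 and 1, and it produces a two-tone picture by simple thresholding; that is an assumption made for illustration, and the study's actual image-generation procedure may differ. All function names are hypothetical.

```python
# Toy sketch only: a tiny "photo" and the kinds of changes described above.
# Function names and the thresholding step are illustrative assumptions,
# not the procedure used in the study.

def two_tone(gray, threshold=0.5):
    """Turn a grayscale grid (floats in [0, 1]) into a black/white grid (0 or 1)."""
    return [[1 if px >= threshold else 0 for px in row] for row in gray]

def flip_left_right(img):
    """Mirror each row."""
    return [row[::-1] for row in img]

def rotate_90_clockwise(img):
    """Reverse the row order, then read off the columns."""
    return [list(col) for col in zip(*img[::-1])]

def double_size(img):
    """Nearest-neighbour upscaling: every pixel becomes a 2x2 block."""
    out = []
    for row in img:
        wide = [px for px in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out

def shift_right(img, pixels, fill=0):
    """Move the picture to the right, padding with `fill` and cropping the overflow."""
    width = len(img[0])
    return [([fill] * pixels + row)[:width] for row in img]

# A made-up 3x3 grayscale "photo" (values are arbitrary).
gray = [
    [0.9, 0.2, 0.1],
    [0.8, 0.7, 0.3],
    [0.1, 0.6, 0.4],
]

mooney = two_tone(gray)  # the hard-to-recognize two-tone version

# Altered versions of the clear photo, as in the manipulations above.
variants = {
    "flipped": flip_left_right(gray),
    "rotated": rotate_90_clockwise(gray),
    "doubled": double_size(gray),
    "shifted": shift_right(gray, 1),
}
```

Each entry in `variants` stands for one altered clear photo that could be shown in place of the original before testing recognition of `mooney` again.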

Where the Brain Stores the New Insight

Different tweaks to the images affected learning in different ways. Doubling or halving the size of the clear image did not harm learning, suggesting the brain’s stored “template” is flexible about size. Flipping, rotating, or moving the image on the screen weakened learning without eliminating it. Replacing the clear picture with a different example from the same category (for instance, a different dog) completely abolished learning. This shows that the brain is not just storing the idea “this is a dog”; instead, it keeps a detailed, picture-like memory of the specific shape and layout of that exact image. Combining these behavioral results with what is known about the visual system pointed to high-level visual areas, rather than early visual regions or memory structures like the hippocampus, as the likely storage site of this new knowledge.

Watching Learning Unfold Inside the Brain

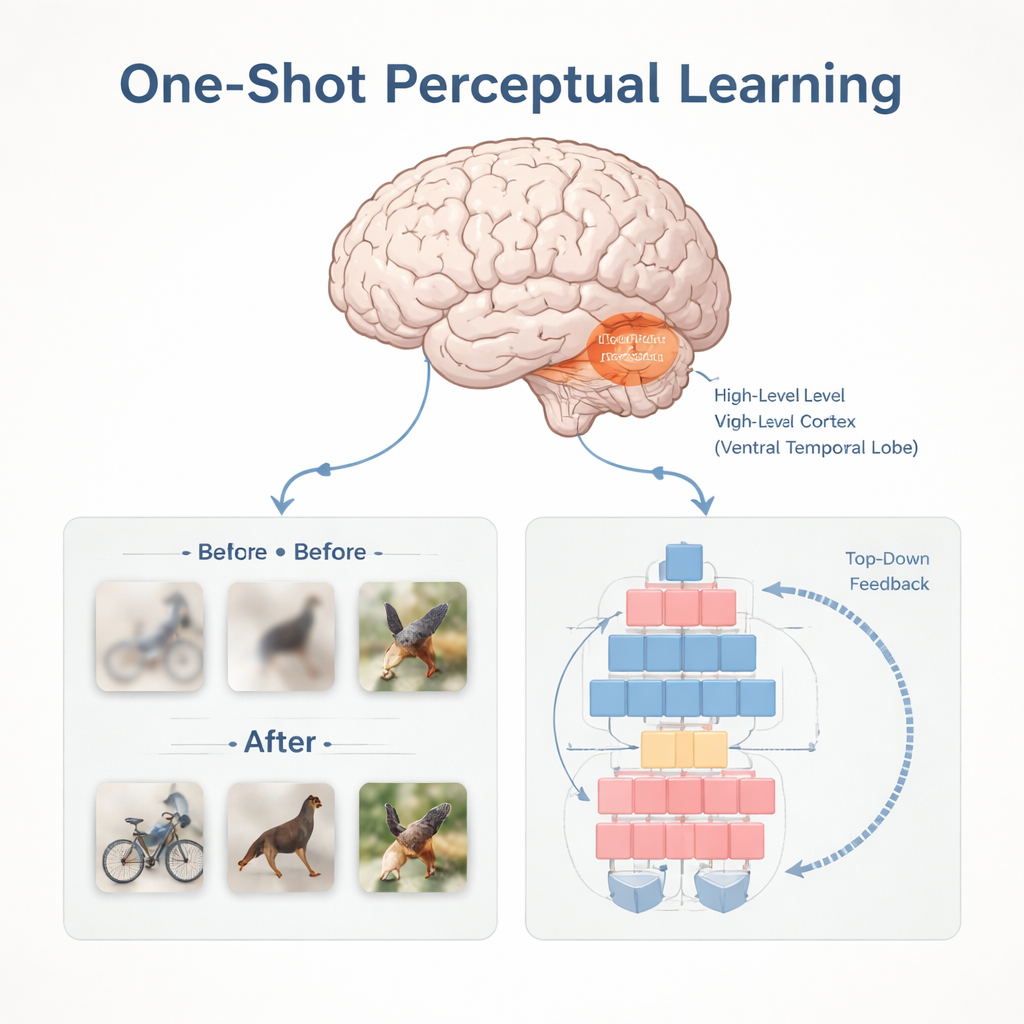

To test this idea, the team used ultra-high-field 7-Tesla MRI scans and direct recordings from electrodes placed on the brains of epilepsy patients. The MRI experiments showed that activity in high-level visual cortex responded to different versions of the same object (changed in size, position, or orientation) in just the way predicted from the behavioral tests. In the electrode recordings, the crucial change appeared in high-level visual cortex first: after learning, activity patterns triggered by the hard image became more similar to those triggered by its clear counterpart, and this shift happened earlier there than in primary visual areas. That timing suggests this region is where the new “prior” is stored and reactivated, and that it then sends feedback down to earlier visual areas to help make sense of ambiguous input.
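One common way to express “activity patterns became more similar” is to correlate two patterns of responses across recording sites. The sketch below assumes that approach and uses invented numbers purely for illustration; it is not the study's analysis pipeline, and none of the values are data from the paper.

```python
import math

def pearson(a, b):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

# Invented response patterns across four hypothetical recording sites.
clear_pattern = [1.0, 0.2, 0.8, 0.1]   # response to the clear photo
hard_before = [0.3, 0.6, 0.2, 0.5]     # response to the hard image before learning
hard_after = [0.9, 0.3, 0.7, 0.2]      # response to the hard image after learning

similarity_before = pearson(clear_pattern, hard_before)
similarity_after = pearson(clear_pattern, hard_after)

# In this toy example the hard image "looks" more like the clear one after learning.
print(similarity_after > similarity_before)
```

Running the same comparison separately for each brain region and each moment in time would, in principle, show where and when the similarity first rises.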

Building a Machine That Learns in One Shot

The researchers also built a deep neural network model designed to mimic this ability. Their system used a modern vision transformer as a “bottom-up” visual engine, paired with a special module that stores prior information and sends “top-down” feedback when it later sees a related image. Trained on Mooney-like tasks, the model showed genuine one-shot learning: its accuracy jumped after just one exposure to the clear image and far exceeded what could be explained by simple repetition. It even shared many of the same successes and failures as human observers on specific images, and the internal features it learned from the clear images could predict which pictures people would or would not learn to recognize. When the team compared the model’s stored prior information to human brain scans, they found the closest match in the same high-level visual regions highlighted by the experiments.
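The general idea of a bottom-up encoder paired with a module that stores a prior and feeds it back can be sketched in a few lines. The toy below is not the authors' architecture: it replaces the vision transformer with hand-written feature vectors, and the class name `PriorStore`, the `gain` parameter, and the blending rule are all hypothetical choices made for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

class PriorStore:
    """Hypothetical stand-in for a module that stores priors after one exposure."""

    def __init__(self):
        self.priors = []  # list of (label, feature vector) pairs

    def store(self, label, features):
        """One-shot storage: keep the features of a single clear image."""
        self.priors.append((label, list(features)))

    def feedback(self, features, gain=0.5):
        """Pull ambiguous features toward the best-matching stored prior."""
        if not self.priors:
            return None, list(features)
        label, prior = max(self.priors, key=lambda p: cosine(features, p[1]))
        blended = [(1 - gain) * f + gain * p for f, p in zip(features, prior)]
        return label, blended

# Made-up "bottom-up" features; a real system would compute these from pixels.
clear_dog = [1.0, 0.0, 0.9]
hard_dog = [0.4, 0.3, 0.5]

store = PriorStore()
store.store("dog", clear_dog)           # the single exposure to the clear image
label, sharpened = store.feedback(hard_dog)

before = cosine(hard_dog, clear_dog)
after = cosine(sharpened, clear_dog)
print(label, after > before)
```

In this toy, a single stored example is enough to shift the representation of the hard image toward the clear one, which is the spirit of the top-down feedback described above.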

Why This Matters for Brains and Machines

Together, these findings suggest that our sudden “I see it now!” moments arise when high-level visual areas rapidly adjust their connections after a single experience, storing a detailed picture-like prior that can later reshape how we interpret noisy input. This fast yet stable form of learning, rooted in high-level visual cortex and supported by top-down feedback, offers a blueprint for building AI systems that can learn from very few examples. It also provides a starting point for understanding what might go wrong when perception leans too heavily on prior expectations, as in certain psychiatric conditions that involve hallucinations.

Citation: Hachisuka, A., Shor, J.D., Liu, X.C. et al. Neural and computational mechanisms underlying one-shot perceptual learning in humans. Nat Commun 17, 1204 (2026). https://doi.org/10.1038/s41467-026-68711-x

Keywords: one-shot learning, visual perception, high-level visual cortex, perceptual learning, deep neural networks