Clear Sky Science

iDesignGPT enhances conceptual design via large language model agentic workflows

Why Smarter Design Tools Matter

From electric cars to emergency drones, every complex product starts as a rough idea on a whiteboard. The earliest design choices often lock in most of a product’s cost, safety, and performance, yet this stage still relies heavily on expert intuition, long meetings, and scattered documents. This article introduces iDesignGPT, a new AI-based framework that aims to turn large language models—the same family of tools behind modern chatbots—into disciplined collaborators for engineers, helping both experts and newcomers explore ideas, gather information, and judge early concepts more systematically.

The Trouble With Early-Stage Engineering

Conceptual design is the “fuzzy front end” of engineering: teams must decide what a system should do, how it might work, and whether it is even feasible, all while information is incomplete. Studies show that up to 80% of lifecycle cost is committed at this stage, and mistakes made here can be enormously expensive to correct later. Traditional methods, such as structured requirement charts and problem-solving handbooks, were built for narrower industrial settings and often demand deep specialist training. At the same time, computer-aided design and simulation tools mostly help once a detailed layout already exists, leaving a gap in support for the earliest, most creative phase. As products become more multidisciplinary, and as companies seek to involve less specialized designers, these limitations become harder to ignore.

What Today’s AI Gets Right—and Wrong

Recent large language models (LLMs) like GPT-4o and DeepSeek have shown impressive reasoning skills and can already help with tasks such as drafting reports or brainstorming ideas. They can also be turned into “agents” that plan steps, call tools, and consult external databases. However, out of the box they struggle with engineering design: they lack domain-specific knowledge, may misread user intent, and are prone to “hallucinations”—confident but incorrect statements. Existing AI design helpers typically focus on a single step, like idea generation, and are sensitive to how well a user crafts prompts. This makes them hard to trust for high-stakes design decisions or for supporting novices who cannot easily spot subtle technical errors.

A Structured AI Partner for Designers

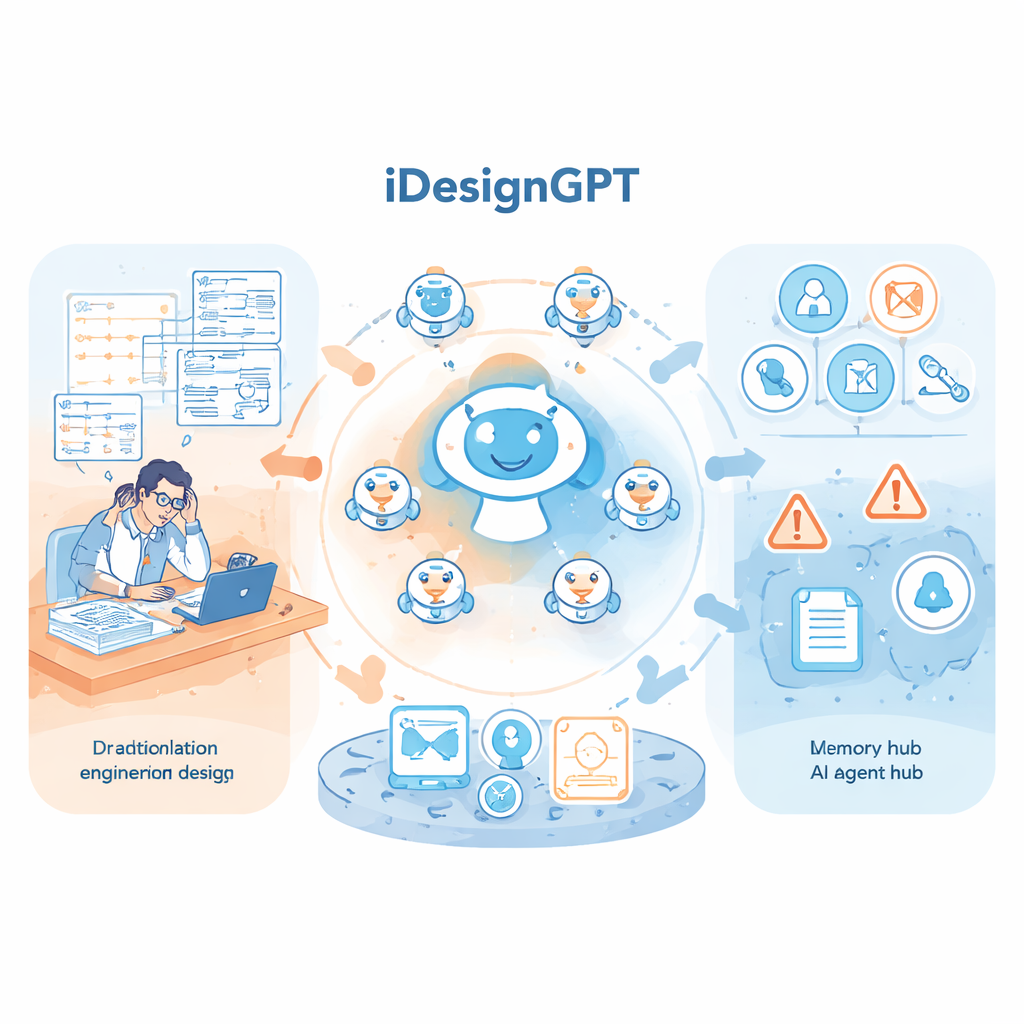

iDesignGPT addresses these issues by weaving LLM agents into a complete, method-driven design process. Built on an open platform, it organizes AI assistants into clusters with distinct roles—analysts, information officers, innovators, and evaluators—linked to four stages: defining the problem, gathering information, generating concepts, and evaluating options. In “Copilot” mode, a conversational agent works with the user to clarify goals and refine requirements through natural dialogue, accepting text and images. In “Agent” mode, specialized agents automatically apply established design techniques, such as need-analysis frameworks and quality-function matrices, to turn customer wishes into weighted engineering targets. A knowledge base pulls in patents, academic articles, and award-winning product examples, while guardrails and cross-checking agents help reduce hallucinations and keep the process transparent.
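To make the idea of turning customer wishes into weighted engineering targets more concrete, here is a minimal sketch of the arithmetic behind a generic quality-function matrix. It is not iDesignGPT's implementation: the function name, the need and target names, the importance weights, and the 9/3/1 relationship strengths are all hypothetical values chosen for illustration. Each engineering target is scored by summing, over all customer needs, the need's importance multiplied by how strongly the target serves that need; the scores are then normalized so they add up to 1.

```python
from typing import Dict


def qfd_technical_importance(
    need_weights: Dict[str, float],
    relationships: Dict[str, Dict[str, float]],
) -> Dict[str, float]:
    """Return normalized importance for each engineering target.

    need_weights:  customer need -> importance weight (hypothetical scale)
    relationships: customer need -> {engineering target: strength}
    """
    raw: Dict[str, float] = {}
    for need, weight in need_weights.items():
        # A need with no listed relationships contributes nothing.
        for target, strength in relationships.get(need, {}).items():
            raw[target] = raw.get(target, 0.0) + weight * strength

    total = sum(raw.values())
    if total == 0:
        return {target: 0.0 for target in raw}
    return {target: score / total for target, score in raw.items()}


# Hypothetical inputs, loosely themed on a rescue aircraft (not from the paper).
need_weights = {"safety": 5, "autonomy": 4, "compactness": 3}
relationships = {
    "safety": {"redundancy_level": 9, "sensing_range_m": 3},
    "autonomy": {"sensing_range_m": 9, "redundancy_level": 1},
    "compactness": {"folded_footprint_m2": 9, "redundancy_level": 1},
}

targets = qfd_technical_importance(need_weights, relationships)
for name, share in sorted(targets.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {share:.3f}")
```

With these made-up inputs, the redundancy target ranks first because it serves the most heavily weighted need. In a real workflow the weights and strengths would come from the requirements dialogue and from designer judgment, not from fixed numbers like these.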

Putting the System to the Test

To see whether this framework works in practice, the authors tested iDesignGPT on a high-profile challenge: designing a compact rescue aircraft that can fly autonomously in emergencies. The system first expanded and reorganized the original list of requirements, dropping narrow test-case details and inferring broader needs like safety and autonomy. It then searched patents, research papers, and design prize databases, and used multiple creative methods—biomimicry, brainstorming, structured recombination, and inventive-principle analysis—to build modular solution options. Finally, it scored and selected a combined design. Quantitative measures showed that this process widened the explored design space and increased diversity and novelty of ideas early on, then shifted toward refinement. When the resulting concept was compared with 22 winning human entries from the same competition, its customer-satisfaction score placed it in roughly the top quarter.

How It Compares to Other AI Workflows

The team also benchmarked iDesignGPT against standard LLM setups—simple prompting, chain-of-thought prompting, and a reasoning-focused model—on six public engineering challenges from agencies such as NASA and the U.S. Department of Energy. Using objective metrics grounded in engineering practice, they scored solutions on novelty, originality (how unlike existing patents they were), rationality, technical maturity, and modularity. iDesignGPT consistently produced more original and modular concepts while maintaining strong rationality, even if its ideas were slightly less ready for immediate implementation than those from the most conservative models. Expert reviewers broadly confirmed these patterns. In user studies with 48 participants ranging from undergraduates to professional engineers, AI assistance in general reduced mental workload compared with human-only design, and iDesignGPT in particular gave novice designers clearer process guidance, uncovered overlooked needs, and supported decision-making without demanding advanced prompt-writing skills.

What This Means for Future Designers

For lay readers, the key takeaway is that tools like iDesignGPT are not about replacing engineers, but about making the early, messy stages of design more accessible, transparent, and exploratory. By packaging rigorous design methods inside multi-agent AI workflows, the framework helps users articulate what they really need, explore a broader range of possibilities, and compare options using explicit criteria. While it still faces limits—especially in tightly constrained problems and outside the conceptual phase—it offers a glimpse of design environments where students, generalists, and experts alike can co-create complex systems with AI that behaves less like a chatty assistant and more like a methodical, well-trained collaborator.

Citation: Liu, S., Shen, Y., Zhang, Y. et al. iDesignGPT enhances conceptual design via large language model agentic workflows. Nat Commun 17, 1997 (2026). https://doi.org/10.1038/s41467-026-68672-1

Keywords: engineering design, AI design tools, large language models, concept generation, human–AI collaboration