Clear Sky Science

Ultrafast visual perception beyond human capabilities enabled by motion analysis using synaptic transistors

Why Faster Robot Vision Matters

When a car’s self-driving system or a flying drone reacts even a fraction of a second too slowly, the consequences can be serious. Today’s best computer vision algorithms can match or beat humans on standard tests, yet they still take too long on each video frame to run in real time. This paper presents a new kind of vision hardware, inspired by the brain, that lets machines detect motion much faster than humans can, without sacrificing accuracy.

How We Normally Teach Machines to See Motion

Conventional motion analysis relies on a technique called optical flow, which estimates how every point in an image moves from one frame to the next. It works well but is computationally heavy: for a full high-definition image, a powerful graphics card may need more than half a second to finish the job. In fast-moving scenarios such as highway driving, that delay can translate into tens of meters traveled before the system even recognizes a hazard. Unlike the human visual system, which quickly focuses on the most relevant parts of a scene, standard algorithms dutifully process every pixel, even in static background regions that carry little useful information.
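
To make the cost of that delay concrete, the short sketch below multiplies vehicle speed by processing latency. The half-second latency comes from the text above; the highway speeds are illustrative assumptions, not figures from the paper.

```python
def distance_during_latency(speed_kmh: float, latency_s: float) -> float:
    """Return the meters traveled while the vision system is still processing."""
    speed_ms = speed_kmh * 1000.0 / 3600.0  # convert km/h to m/s
    return speed_ms * latency_s


# Assumed highway speeds (hypothetical); 0.5 s is the lower bound quoted in the text.
for speed_kmh in (108, 120):
    meters = distance_during_latency(speed_kmh, 0.5)
    print(f"{speed_kmh} km/h with 0.5 s latency: {meters:.1f} m traveled")
```

At these assumed speeds, half a second already corresponds to roughly 15 to 17 meters, and any latency beyond that adds distance in proportion.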

Borrowing a Trick from the Brain’s Early Vision Stages

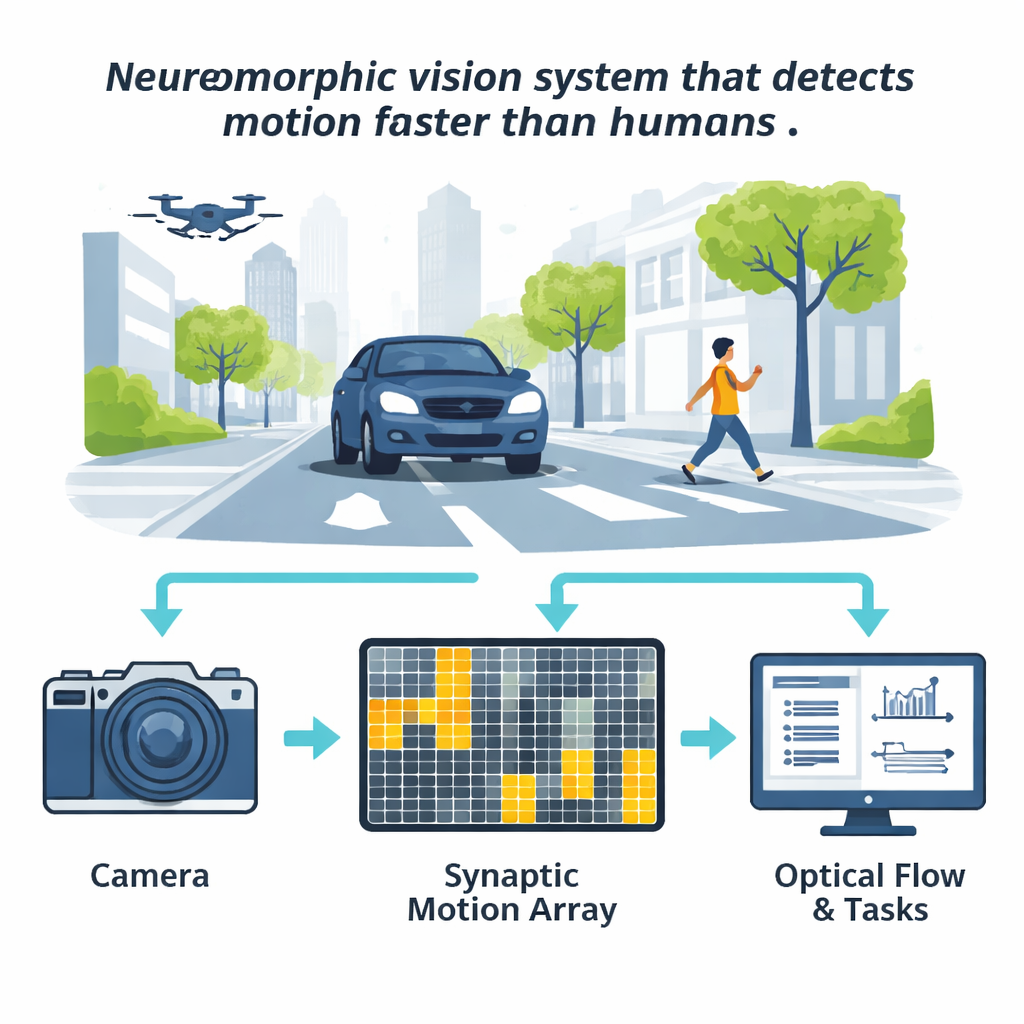

Biology solves this problem by using early filtering layers in the eye and thalamus to highlight where change is happening and to downplay everything else. The authors mimic this idea in hardware by building a neuromorphic “temporal attention” module. A standard camera still captures the images, but its brightness changes are also fed into a compact grid of synaptic transistors, electronic devices that behave a bit like adjustable connections in the brain. Each device locally accumulates how much the light in its assigned region has changed over a short time window. Patches of the grid that see strong change light up as regions of interest, while calmer areas fade into the background.
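
The accumulation idea can be imitated in software. The sketch below is only a loose analogy, not the authors' hardware: it assumes grayscale frames stored as nested lists and sums the absolute brightness change per grid cell over a short window. The names `accumulate_change` and `make_frame` are hypothetical helpers introduced for illustration.

```python
def accumulate_change(frames, grid=4):
    """Sum absolute brightness change per grid cell over a window of frames.

    frames: list of equally sized 2D lists of grayscale values.
    Returns a grid x grid list of accumulated change.
    """
    height, width = len(frames[0]), len(frames[0][0])
    acc = [[0.0] * grid for _ in range(grid)]
    for prev, curr in zip(frames, frames[1:]):
        for y in range(height):
            cell_row = y * grid // height  # grid row this pixel belongs to
            for x in range(width):
                cell_col = x * grid // width  # grid column this pixel belongs to
                acc[cell_row][cell_col] += abs(curr[y][x] - prev[y][x])
    return acc


def make_frame(x0, size=8):
    """Hypothetical test frame: a bright 2x2 square in rows 0-1, columns x0..x0+1."""
    frame = [[0] * size for _ in range(size)]
    for y in range(2):
        for x in range(x0, x0 + 2):
            frame[y][x] = 255
    return frame


# A square sliding to the right across the top of an otherwise static scene.
frames = [make_frame(0), make_frame(2), make_frame(4)]
heat = accumulate_change(frames)
for row in heat:
    print(row)
```

Only the top row of the grid accumulates any change, which mirrors how static regions stay dark while the moving patch stands out.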

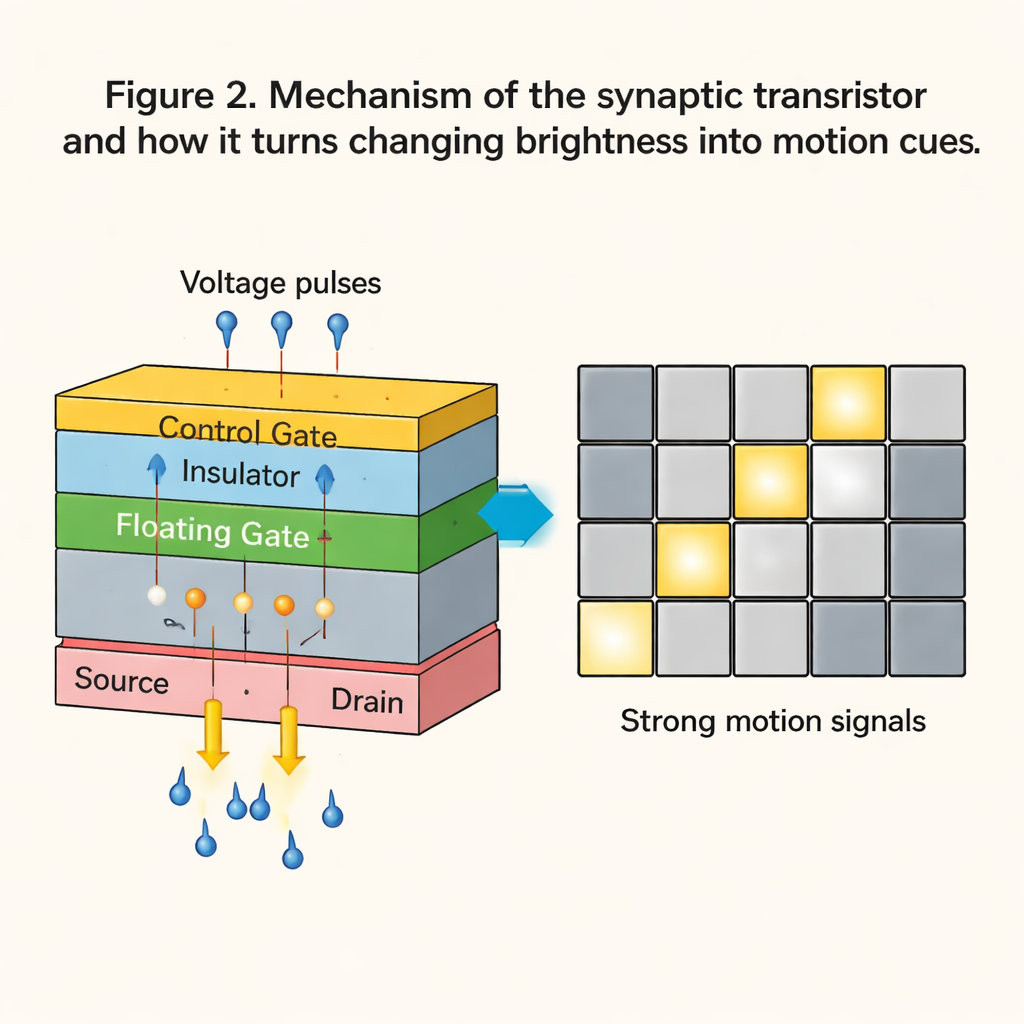

Smart Transistors that Remember Motion

At the heart of this system is a specially engineered floating-gate synaptic transistor built from layered, atomically thin materials. By applying brief voltage pulses, the device’s conductance can be tuned and then held for hours, effectively storing a memory of recent visual activity. The transistors respond in about 100 microseconds—fast enough for high-speed video—and withstand thousands of update cycles without degrading. The team scaled a single device into a 4×4 array and showed how changes in camera brightness are converted into voltage pulses that selectively push some cells into high-conductance “motion” states while suppressing minor flicker and noise.
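
A toy model can illustrate how pulses might select "motion" cells while ignoring flicker. Everything numeric here is an assumption made for illustration: the threshold, the conductance step, and the saturation value are hypothetical and do not describe the real device physics.

```python
def apply_pulses(conductance, brightness_deltas, threshold=20.0, step=0.25, g_max=1.0):
    """Return a new array after one update round.

    A cell receives a potentiating pulse only if its brightness change reaches
    the (assumed) threshold; otherwise it keeps its stored conductance.
    """
    updated = []
    for g_row, d_row in zip(conductance, brightness_deltas):
        new_row = []
        for g, delta in zip(g_row, d_row):
            if abs(delta) >= threshold:
                g = min(g + step, g_max)  # pulse raises conductance up to saturation
            new_row.append(g)
        updated.append(new_row)
    return updated


SIZE = 4  # matches the 4x4 array described in the text
array = [[0.0] * SIZE for _ in range(SIZE)]
for _ in range(3):
    deltas = [[3.0] * SIZE for _ in range(SIZE)]  # weak background flicker
    deltas[1][2] = 60.0  # strong brightness change at one cell
    array = apply_pulses(array, deltas)

for row in array:
    print(row)
```

In this sketch the flickering cells never cross the threshold and stay at zero, while the cell with strong change climbs toward its high-conductance state and retains it between updates.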

Focusing Heavy Computation Only Where It Counts

The array’s output is turned into a coarse “heat map” of motion that marks compact regions of interest. Instead of running expensive optical flow code on the entire image, the system only analyzes these highlighted areas, with a bit of padding around them. The authors demonstrate that this approach plugs directly into several popular optical flow methods, from classical algorithms like Farneback to modern deep-learning models such as RAFT and GMFlow. Across tests involving cars, drones, robotic arms, and fast sports like table tennis, the neuromorphic front end routinely cuts the time spent on motion estimation and follow-up tasks—such as predicting where an object will move, segmenting moving objects from the background, or tracking a target—by around a factor of four.
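
The region-of-interest step can be sketched as follows. This is a simplified illustration rather than the authors' pipeline: it merges all active cells into a single padded bounding box, and the function names, threshold, and padding value are hypothetical. Any optical flow routine would then run on the cropped pixels only.

```python
def padded_roi(heat, threshold, image_h, image_w, pad):
    """Return (top, left, bottom, right) in pixels around active cells, or None."""
    rows, cols = len(heat), len(heat[0])
    active = [(r, c) for r in range(rows) for c in range(cols)
              if heat[r][c] >= threshold]
    if not active:
        return None  # nothing moved: skip the expensive step entirely
    cell_h, cell_w = image_h // rows, image_w // cols
    top = min(r for r, _ in active) * cell_h
    bottom = (max(r for r, _ in active) + 1) * cell_h
    left = min(c for _, c in active) * cell_w
    right = (max(c for _, c in active) + 1) * cell_w
    # Add padding, clamped to the image borders.
    return (max(top - pad, 0), max(left - pad, 0),
            min(bottom + pad, image_h), min(right + pad, image_w))


def crop(image, roi):
    """Cut the region of interest out of a 2D list image."""
    top, left, bottom, right = roi
    return [row[left:right] for row in image[top:bottom]]


# Hypothetical 4x4 heat map with two active cells, over a 64x64 image.
heat_map = [[0.0, 0.0, 0.0, 0.0],
            [0.0, 0.75, 1.0, 0.0],
            [0.0, 0.0, 0.0, 0.0],
            [0.0, 0.0, 0.0, 0.0]]
image = [[0] * 64 for _ in range(64)]

roi = padded_roi(heat_map, threshold=0.5, image_h=64, image_w=64, pad=4)
patch = crop(image, roi)
fraction = (len(patch) * len(patch[0])) / (64 * 64)
print(roi, f"{fraction:.2f} of pixels passed to optical flow")
```

The pixel fraction is only a rough proxy for compute savings; actual speedups depend on the optical flow method and on how much of the scene is moving.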

Outrunning Human Reaction Without Losing Accuracy

Crucially, this speedup does not come at the cost of reliability. By providing extra information about where motion is likely to be, the temporal cues often improve accuracy, especially for object tracking and segmentation in cluttered scenes. In vehicle and small-drone scenarios, task performance metrics more than doubled compared with conventional pipelines, while total processing times dropped into the tens of milliseconds, faster than typical human reaction times of about 150 milliseconds. The authors argue that this neuromorphic motion front end can be paired with many existing vision algorithms, and even with object detectors beyond optical flow, to give robots, vehicles, and interactive machines a much faster and more focused way to understand dynamic environments.

Citation: Wang, S., Zhao, J., Pu, T. et al. Ultrafast visual perception beyond human capabilities enabled by motion analysis using synaptic transistors. Nat Commun 17, 1215 (2026). https://doi.org/10.1038/s41467-026-68659-y

Keywords: neuromorphic vision, optical flow, synaptic transistors, robot perception, autonomous driving