Clear Sky Science

The mosaic memory of large language models

Why near-miss copies matter

Large language models like ChatGPT learn from oceans of text, and sometimes they memorize pieces of that text. That raises worries about privacy, copyright, and how fairly we can judge what these systems really know. This paper shows that memorization is not just about exact copy‑and‑paste. Instead, language models can reconstruct passages from many slightly different versions, much like assembling a mosaic. Understanding this hidden kind of memory is crucial for anyone who cares about safe, reliable AI.

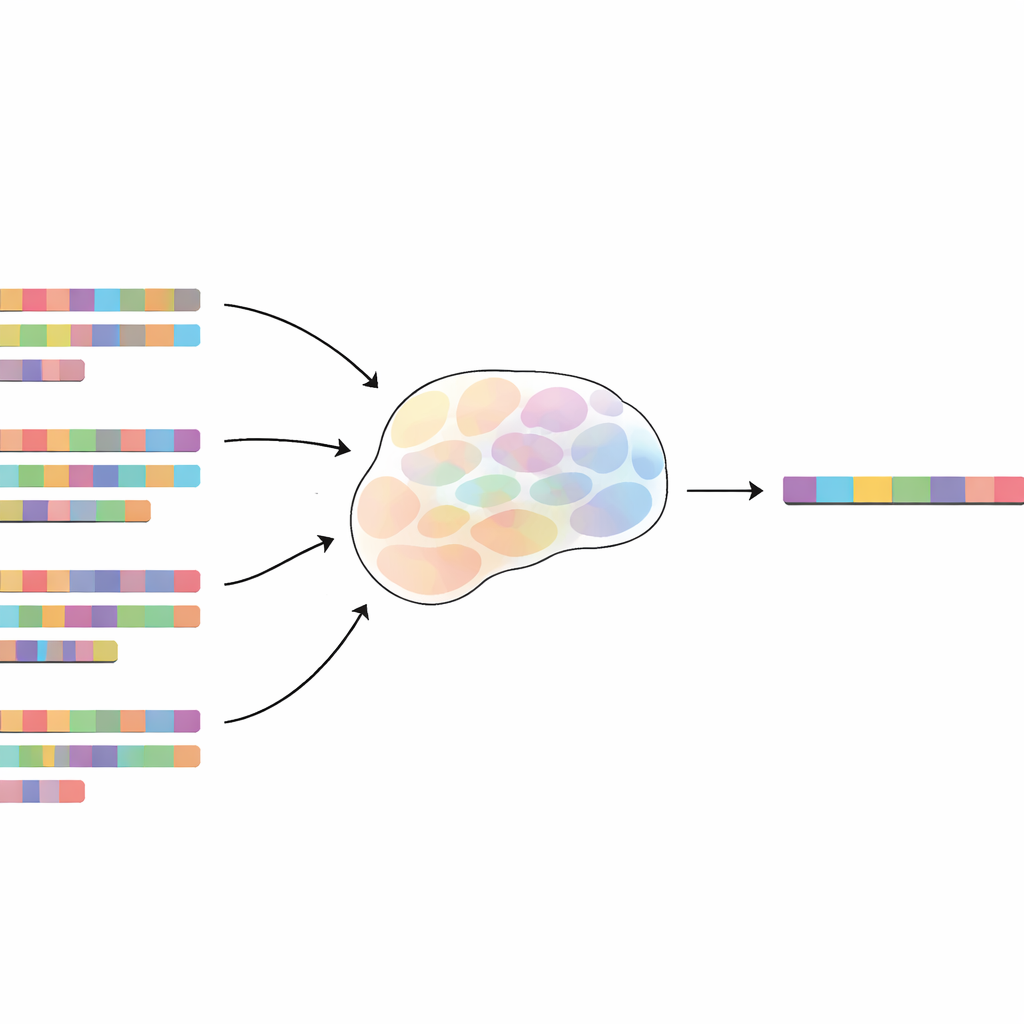

A new way to think about machine memory

Most people assume that a language model only memorizes something if it sees the exact same sentence over and over. The authors challenge this view by introducing “mosaic memory.” In this view, a model can memorize a 100‑word passage not only from exact repeats, but also from many fuzzy duplicates—versions where some words are missing, changed, or shuffled. To study this carefully, they plant artificial test phrases, called canaries, into a model’s training data, along with many altered versions. After training, they measure how easy it is to tell whether a given canary was in the training set, using a kind of privacy test called a membership inference attack.
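As a rough illustration of how such altered versions might be produced, the sketch below replaces a chosen fraction of a canary's tokens with random ones. It is not the authors' code: the function name `make_fuzzy_duplicate`, the integer token IDs, and the 1,000-entry vocabulary are all assumptions made for this example.

```python
import random

def make_fuzzy_duplicate(tokens, replace_frac, vocab, rng):
    """Return a copy of `tokens` with a fraction of positions replaced.

    Hypothetical helper: each selected position receives a random token
    from `vocab` that differs from the original token at that position.
    """
    n_replace = round(len(tokens) * replace_frac)
    positions = rng.sample(range(len(tokens)), n_replace)
    fuzzy = list(tokens)
    for pos in positions:
        alternatives = [t for t in vocab if t != tokens[pos]]
        fuzzy[pos] = rng.choice(alternatives)
    return fuzzy

# Toy setup (assumed): token IDs 0..999 and a 100-token canary.
rng = random.Random(0)
vocab = list(range(1000))
canary = [rng.choice(vocab) for _ in range(100)]

# Five fuzzy duplicates, each with 10% of tokens replaced.
fuzzy_copies = [make_fuzzy_duplicate(canary, 0.10, vocab, rng) for _ in range(5)]

# Number of positions where each copy differs from the canary.
changed = [sum(a != b for a, b in zip(canary, copy)) for copy in fuzzy_copies]
```

Because every replacement is forced to differ from the original token, each copy differs from the canary in exactly the requested number of positions. In an experiment like the one described, the canary and its fuzzy copies would then be inserted into the training data before the membership inference test is run.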

How fuzzy copies still leave a clear trace

By comparing fuzzy duplicates to exact repeats, the researchers define an “exact duplicate equivalent”: how much one fuzzy copy contributes to memorization compared with a perfect copy. They find that fuzzy copies retain much of an exact copy’s effect. If only about 10% of the words in each duplicate are replaced at random, a single fuzzy copy still contributes roughly 60–65% as much as an exact duplicate. Even when half the words are changed, each altered version still counts for around 15–20% of a full copy. The effect is robust: adding random filler words between key phrases or shuffling chunks of the sentence reduces memorization but does not eliminate it. The model appears able to skip over noise, focus on overlapping fragments, and stitch them back together.
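To make these ratios concrete, the sketch below turns them into copy counts. It assumes, as a simplification that the text does not claim, that the contributions of fuzzy copies add up linearly. The function names are hypothetical, and the two constants are the lower ends of the ranges quoted above.

```python
def exact_copy_equivalents(n_fuzzy, rho):
    """Effective number of exact copies represented by `n_fuzzy` fuzzy copies.

    `rho` is the per-copy ratio (1.0 would mean a fuzzy copy counts as much
    as an exact one). Linear addition is an assumption of this sketch.
    """
    return n_fuzzy * rho

def fuzzy_copies_per_exact(rho):
    """How many fuzzy copies it takes to match one exact copy."""
    if rho <= 0:
        raise ValueError("rho must be positive")
    return 1.0 / rho

# Lower ends of the ranges quoted in the text.
RHO_10_PERCENT_REPLACED = 0.60
RHO_50_PERCENT_REPLACED = 0.15

# Ten lightly altered copies count for about six exact copies.
ten_mild = exact_copy_equivalents(10, RHO_10_PERCENT_REPLACED)

# Ten heavily altered copies still count for about one and a half.
ten_heavy = exact_copy_equivalents(10, RHO_50_PERCENT_REPLACED)

# Roughly seven half-altered copies match a single exact copy.
heavy_per_exact = fuzzy_copies_per_exact(RHO_50_PERCENT_REPLACED)
```

Under this simplification, a passage that never appears verbatim more than once could still be memorized about as strongly as a repeated one, provided enough altered versions are present.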

Form over meaning in what models store

Given that modern language models can solve math problems, follow instructions, and translate between languages, one might expect their memories to be about meaning. Surprisingly, the study finds the opposite. When the authors replace words with alternatives that keep the sentence’s meaning, memorization improves only slightly compared with replacing them with random words. Paraphrases generated by other AI systems—which preserve the same idea but change many surface details—contribute relatively little to memorization unless they still share many short word sequences with the original passage. Across a range of tests, what really drives memory is overlap in the exact tokens (the model’s basic word pieces), not shared ideas. In other words, the model’s mosaic memory is mainly about form, not meaning.
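The contrast between surface overlap and shared meaning can be sketched with a simple n-gram overlap score. This is an illustrative measure, not necessarily the one used in the study: the names `ngrams` and `ngram_overlap` are hypothetical, and whitespace splitting stands in for a real model tokenizer.

```python
def ngrams(tokens, n):
    """Set of all contiguous n-token sequences in `tokens`."""
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def ngram_overlap(reference, candidate, n=2):
    """Fraction of the candidate's n-grams that also occur in the reference."""
    cand = ngrams(candidate, n)
    if not cand:
        return 0.0
    return len(cand & ngrams(reference, n)) / len(cand)

# Whitespace tokens are an assumption; a real model uses subword tokens.
original = "the quick brown fox jumps over the lazy dog".split()
near_copy = "the quick brown fox leaps over the lazy dog".split()
paraphrase = "a fast dark fox hops across a sleepy hound".split()

near_copy_score = ngram_overlap(original, near_copy)    # 6 of 8 bigrams shared
paraphrase_score = ngram_overlap(original, paraphrase)  # no bigrams shared
```

Here the near copy changes one word and keeps most of its bigrams, while the paraphrase keeps the idea but shares no bigram with the original. On the findings described above, the first kind of variant would be expected to contribute far more to memorization than the second.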

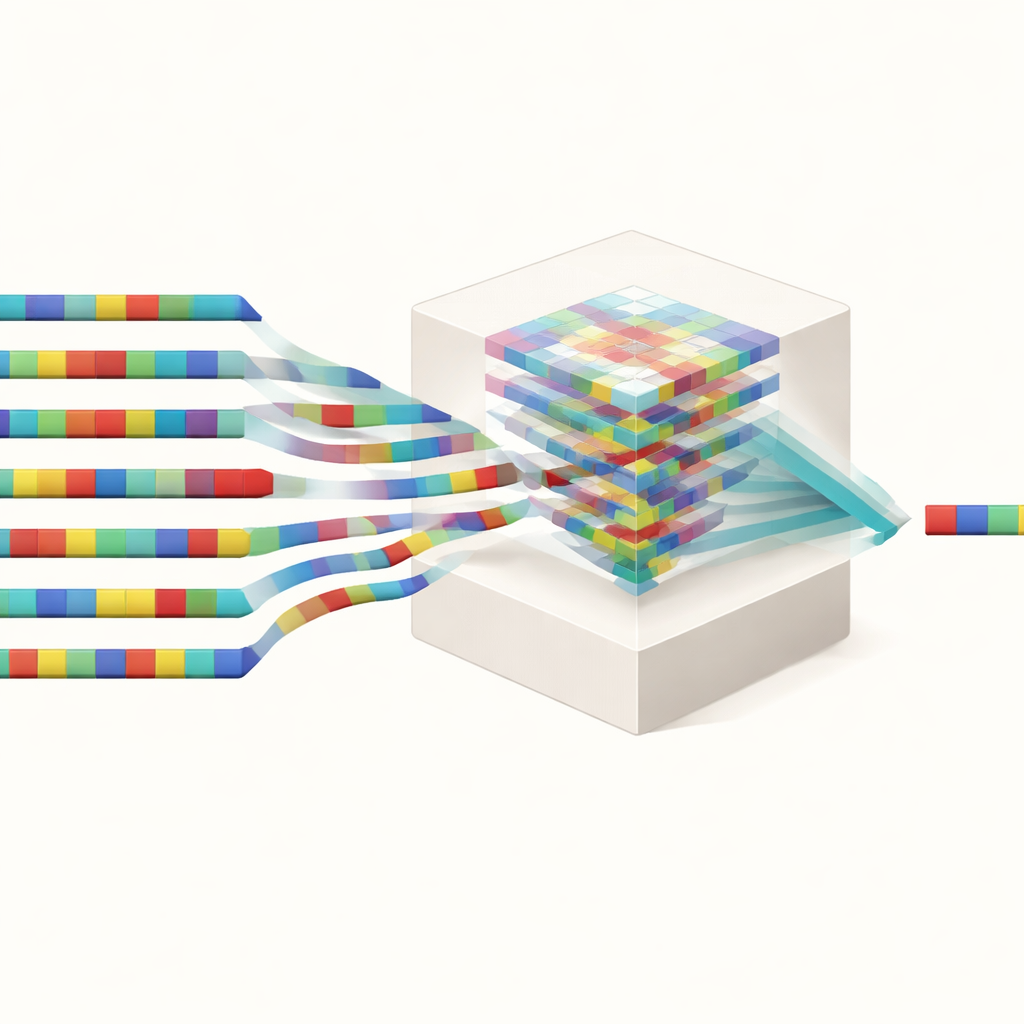

Hidden duplicates in real-world training data

The authors then ask how common fuzzy duplicates are in a popular, heavily cleaned web dataset used to train language models, known as SlimPajama. Even though this dataset has already had near‑identical documents removed, the team finds that many 100‑token sequences that appear exactly 1,000 times also have thousands of near‑miss versions. For small edit distances (roughly 10% of characters changed), there are on average about four times as many fuzzy duplicates as exact ones, and tens of thousands more at larger but still influential distances. Importantly, standard “deduplication” techniques used in industry, which typically remove only long exact overlaps, leave most of these fuzzy copies untouched. That means models can still memorize sensitive or copyrighted material by piecing it together from many slightly altered sources.
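The gap between exact and fuzzy matching can be illustrated with a small edit-distance count. The sketch below uses the standard Levenshtein distance on toy data. It is an assumption-laden example rather than the authors' pipeline: `count_duplicates` is a hypothetical helper, and a brute-force scan like this would not scale to a web-sized dataset.

```python
def levenshtein(a, b):
    """Edit distance between two sequences (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]

def count_duplicates(target, candidates, max_dist):
    """Return (exact, fuzzy): exact matches and near matches within `max_dist`."""
    exact = fuzzy = 0
    for cand in candidates:
        dist = levenshtein(target, cand)
        if dist == 0:
            exact += 1
        elif dist <= max_dist:
            fuzzy += 1
    return exact, fuzzy

# Toy corpus (assumed): each letter stands in for one token.
target = list("abcdefghij")
corpus = [list("abcdefghij"), list("abcdefghiX"),
          list("abcdeVWXYZ"), list("abcdefghij")]

# Allow up to 10% of the 10 positions to differ.
exact_count, fuzzy_count = count_duplicates(target, corpus, max_dist=1)
```

A deduplication step that compares sequences only for exact equality would collapse the two identical entries and keep the one-edit variant, which is the kind of near-miss copy the study reports surviving in cleaned datasets.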

Why this matters for privacy, fairness, and control

These findings have wide‑ranging implications. For privacy, they show that simply scrubbing exact repeats from training data is not enough: personal or confidential information can be memorized through families of similar passages. For copyright and benchmarking, fuzzy duplicates can cause models to reproduce protected text or artificially boost scores on tests they have effectively seen in disguised form. For efforts to “unlearn” specific data, it is not sufficient to delete one offending example if many variants remain. Overall, the work reveals that language model memory is a complex mosaic built from many small overlaps, challenging today’s safety tools and calling for smarter, more fine‑grained ways to clean and audit training data.

Citation: Shilov, I., Meeus, M. & de Montjoye, YA. The mosaic memory of large language models. Nat Commun 17, 2142 (2026). https://doi.org/10.1038/s41467-026-68603-0

Keywords: language model memorization, fuzzy duplicates, data privacy, training data deduplication, benchmark contamination