Clear Sky Science

Assimilative causal inference

Why tracing causes backwards matters

When we ask what caused a storm, a market crash, or a seizure, we usually look back in time and try to connect the dots. Yet most mathematical tools for “causal inference” actually run time forward: they ask how today’s conditions shape tomorrow’s outcomes, averaged over long records. This article introduces a new way of thinking that instead mirrors our intuition. It presents assimilative causal inference (ACI), a framework that uses weather-forecast–style techniques to trace causes backward from their observed effects, moment by moment, even in noisy, complex systems like climate or the brain.

A new angle on cause and effect

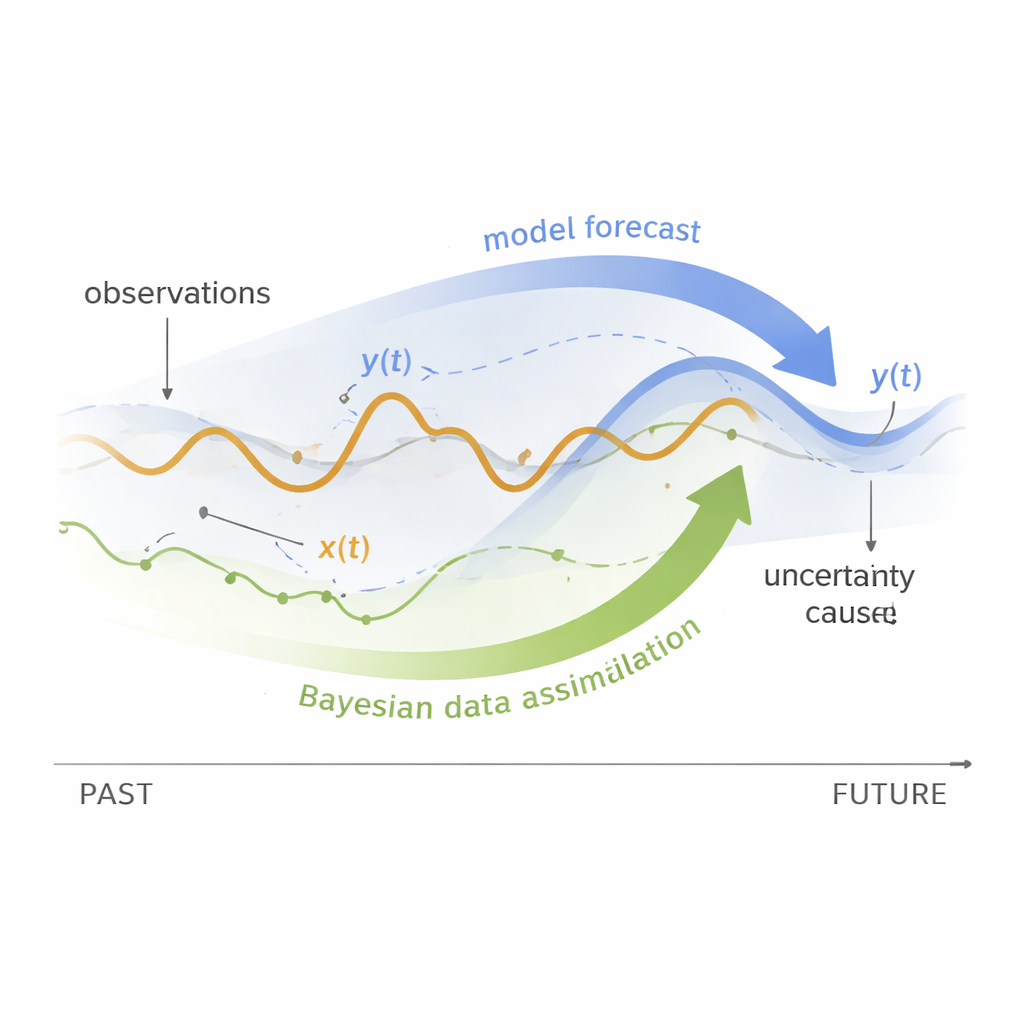

Traditional causal methods typically fall into two camps. Data-driven techniques look for patterns in long multivariate time series, asking whether adding information about one variable improves predictions of another. Model-based approaches, common in physics and climate, use equations and run them forward from slightly different starting points to see how outcomes change. Both strategies have drawbacks: they struggle with rapidly changing relationships, short records, and very high-dimensional systems. ACI takes a different route. It treats causality as an inverse problem: instead of pushing causes forward to see their effects, it pulls information backward from observed effects to infer their most likely causes. To do this, it relies on Bayesian data assimilation, the same family of methods used to blend weather models with fresh observations.
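To make the data-driven camp concrete, here is a minimal sketch of a prediction-gain test in the spirit of Granger causality. It is an illustration only, not a method taken from the paper: the toy system, its coefficients, and the function names `simulate_pair`, `residual_variance`, and `prediction_gain` are all assumptions. It shows the question such methods ask, and the fact that the answer is a single number averaged over the whole record.

```python
import numpy as np

def simulate_pair(n_steps=5000, seed=0):
    """Hypothetical toy system in which y drives x, but x does not drive y."""
    rng = np.random.default_rng(seed)
    x = np.zeros(n_steps)
    y = np.zeros(n_steps)
    for t in range(n_steps - 1):
        y[t + 1] = 0.7 * y[t] + rng.normal(0.0, 1.0)
        x[t + 1] = 0.5 * x[t] + 0.8 * y[t] + rng.normal(0.0, 0.3)
    return x, y

def residual_variance(target, predictors):
    """Variance left over after a least-squares fit of target on the predictors."""
    design = np.column_stack([np.ones(len(target))] + list(predictors))
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    return float(np.var(target - design @ coef))

def prediction_gain(effect, candidate):
    """Fraction of one-step prediction error removed by adding the candidate's past."""
    future = effect[1:]
    restricted = residual_variance(future, [effect[:-1]])
    full = residual_variance(future, [effect[:-1], candidate[:-1]])
    return 1.0 - full / restricted

if __name__ == "__main__":
    x, y = simulate_pair()
    print("gain from adding y when predicting x:", prediction_gain(x, y))
    print("gain from adding x when predicting y:", prediction_gain(y, x))
```

In this toy setup the first gain is large and the second is close to zero, but neither says anything about when the influence was active, which is the gap ACI is meant to fill.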

In practice, ACI assumes we can observe at least one “effect” variable over time and that we have access to a (possibly turbulent and stochastic) mathematical model describing how the system’s variables interact. Even if some potential causes are never directly measured, they are represented in the model. ACI relies on two standard forms of state estimation from data assimilation: filtering, which estimates the system’s state at a given time using data up to that time, and smoothing, which also uses data recorded afterward. If adding this later information about the observed effect sharply tightens our estimate of a candidate cause at a given instant, ACI interprets the reduction in uncertainty as a sign that the candidate truly influenced the effect at that time.
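The filter-versus-smoother comparison can be sketched on a toy problem. The example below is an assumption-laden illustration, not the authors' implementation: it uses a hypothetical linear Gaussian two-variable model (observed `x`, hidden `y`), a Kalman filter and a Rauch-Tung-Striebel smoother as stand-ins for the assimilation step, and it scores the uncertainty reduction with the Kullback-Leibler divergence between the Gaussian smoother and filter estimates of `y`, which is one possible choice of score. All coefficients and function names are made up for illustration.

```python
import numpy as np

def simulate(coupling, n_steps=400, seed=0):
    """Hypothetical toy system: hidden y feeds into observed x when coupling != 0."""
    rng = np.random.default_rng(seed)
    x = np.zeros(n_steps)
    y = np.zeros(n_steps)
    for k in range(n_steps - 1):
        y[k + 1] = 0.8 * y[k] + rng.normal(0.0, 1.0)
        x[k + 1] = 0.9 * x[k] + coupling * y[k] + rng.normal(0.0, 0.3)
    return x + rng.normal(0.0, 0.1, size=n_steps)  # noisy measurements of x only

def filter_and_smooth(obs, coupling):
    """Kalman filter followed by a Rauch-Tung-Striebel smoother for the state [x, y]."""
    A = np.array([[0.9, coupling], [0.0, 0.8]])   # model dynamics (assumed known)
    Q = np.diag([0.3 ** 2, 1.0 ** 2])             # model noise covariance
    H = np.array([[1.0, 0.0]])                    # only x is observed
    R = 0.1 ** 2                                  # observation noise variance
    n = len(obs)
    m_f, P_f = np.zeros((n, 2)), np.zeros((n, 2, 2))   # filter mean and covariance
    m_p, P_p = np.zeros((n, 2)), np.zeros((n, 2, 2))   # one-step predictions
    m, P = np.zeros(2), np.eye(2)
    for k in range(n):
        if k > 0:
            m, P = A @ m, A @ P @ A.T + Q
        m_p[k], P_p[k] = m, P
        S = (H @ P @ H.T).item() + R
        K = (P @ H.T) / S
        m = m + K[:, 0] * (obs[k] - m[0])
        P = (np.eye(2) - K @ H) @ P
        m_f[k], P_f[k] = m, P
    m_s, P_s = m_f.copy(), P_f.copy()
    for k in range(n - 2, -1, -1):                # backward pass uses later data
        G = P_f[k] @ A.T @ np.linalg.inv(P_p[k + 1])
        m_s[k] = m_f[k] + G @ (m_s[k + 1] - m_p[k + 1])
        P_s[k] = P_f[k] + G @ (P_s[k + 1] - P_p[k + 1]) @ G.T
    return m_f, P_f, m_s, P_s

def uncertainty_reduction(obs, coupling):
    """KL divergence of the smoother estimate of y from the filter estimate, per time step."""
    m_f, P_f, m_s, P_s = filter_and_smooth(obs, coupling)
    mean_f, var_f = m_f[:, 1], P_f[:, 1, 1]
    mean_s, var_s = m_s[:, 1], P_s[:, 1, 1]
    ratio = var_s / var_f
    return 0.5 * ((mean_s - mean_f) ** 2 / var_f + ratio - 1.0 - np.log(ratio))

if __name__ == "__main__":
    for c in (0.5, 0.0):
        score = uncertainty_reduction(simulate(c), c)
        print(f"coupling={c}: mean score = {score.mean():.4f}")
```

When the coupling is zero, later observations of `x` carry no information about `y`, so the smoother and filter agree and the score vanishes. With a nonzero coupling the smoother tightens the estimate of `y` and the score is positive, which is the signal the paragraph above describes.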

Following shifting roles in time

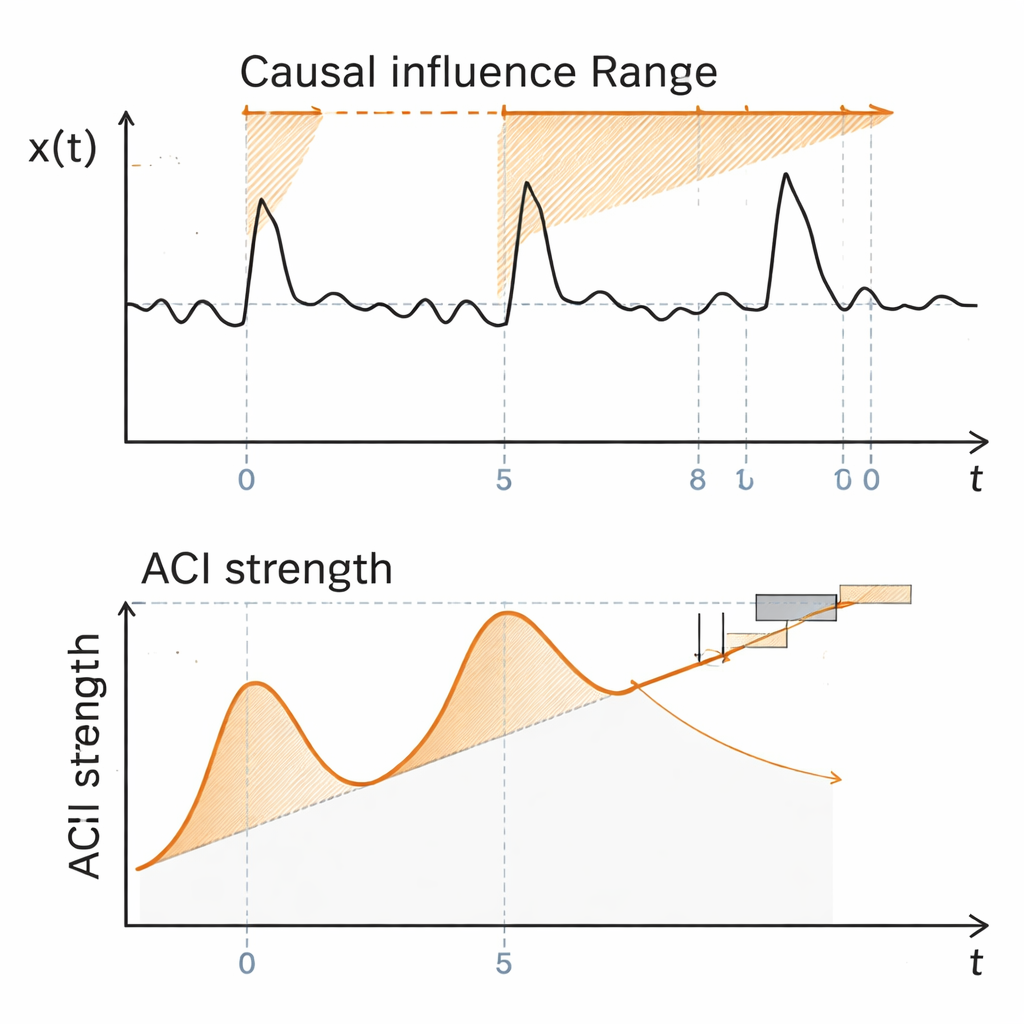

A key strength of ACI is that it tracks causal relationships as they evolve. Many real systems exhibit intermittency: long quiet stretches punctuated by bursts of intense activity, during which drivers and responders can swap roles. The authors illustrate this using a compact two-variable model that mimics atmospheric variability and its occasional extreme events. In this example, only one variable is observed. ACI reveals when the hidden partner variable temporarily becomes an “anti-damping” source that pumps energy into the observed variable, triggering large excursions. During these phases, the ACI measure spikes and the inferred influence extends far into the future. Once the extreme event peaks and the observed variable begins to decay, the causal strength from the hidden variable collapses, signaling a switch in roles: the former effect now strongly damps its previous driver.

To go beyond the simple question of “who influences whom,” ACI introduces the causal influence range (CIR). This quantity answers a temporal version of a familiar question: for how long does a given cause meaningfully shape the future of an effect? Technically, CIR is defined by watching how quickly the benefit of adding more future observations saturates. If new data far ahead in time barely improve our estimate of a past cause, its influence is considered to have faded. The authors propose both threshold-based (“subjective”) CIRs and an “objective” CIR that averages over all thresholds, closely analogous to how physicists turn noisy correlations into a single decorrelation time. This offers a mathematically grounded way to talk about how far, in time, causal impacts propagate.
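One way to read the threshold-based and threshold-averaged definitions is sketched below. This is an interpretation under stated assumptions, not the paper's formula: it supposes we already have a hypothetical curve `remaining_gain`, giving for each lag how much the estimate of the past cause would still improve if observations beyond that lag were added, and that this curve is non-negative and decays toward zero. The function names and the exponential test curve are illustrative.

```python
import numpy as np

def subjective_cir(lags, remaining_gain, eps):
    """Largest lag at which the remaining gain still exceeds the threshold eps."""
    above = np.nonzero(remaining_gain > eps)[0]
    return 0.0 if above.size == 0 else float(lags[above[-1]])

def objective_cir(lags, remaining_gain, n_thresholds=2000):
    """Average of the subjective CIR over thresholds between 0 and the curve's maximum."""
    top = remaining_gain.max()
    # Midpoints of equal-width threshold bins, so eps = 0 is never evaluated.
    thresholds = (np.arange(n_thresholds) + 0.5) / n_thresholds * top
    return float(np.mean([subjective_cir(lags, remaining_gain, e) for e in thresholds]))

if __name__ == "__main__":
    lags = np.linspace(0.0, 50.0, 5001)
    remaining_gain = np.exp(-lags / 5.0)   # hypothetical curve with time scale 5
    print("subjective CIR at eps = 0.1:", subjective_cir(lags, remaining_gain, 0.1))
    print("objective CIR:", objective_cir(lags, remaining_gain))
```

For a monotonically decaying curve, averaging over thresholds amounts to the area under the curve divided by its peak value, so the exponential example returns a value close to its time scale of 5. That mirrors the way a decorrelation time is obtained by integrating a normalized autocorrelation function.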

Testing the method on climate extremes

The paper then applies ACI to a more realistic, six-variable model of the El Niño–Southern Oscillation (ENSO), a climate phenomenon that reshapes global weather by periodically warming and cooling the tropical Pacific Ocean. This conceptual model reproduces the rich diversity of El Niño flavors, including events centered in either the eastern or central Pacific, along with their La Niña counterparts. Using synthetic data from the model, the authors examine how different physical ingredients—sea-surface temperatures in the central Pacific, the depth of the warm water layer in the west, and rapidly fluctuating winds—jointly drive temperature anomalies in the eastern Pacific, the hallmark of El Niño.

ACI uncovers a nuanced, time-resolved picture consistent with established ENSO theory. For strong eastern Pacific El Niño events, central Pacific temperatures emerge as the dominant causal driver, with their ACI signal peaking slightly before the eastern warming maximum, reflecting the eastward spread of warm waters. Wind anomalies show a noisier but robust and nearly instantaneous influence, in line with their role in pushing warm water and altering heat exchange. Changes in the western Pacific thermocline, while important, exert a more indirect and earlier influence: their ACI values peak months before the event, echoing the “recharge–discharge” view in which subsurface heat builds up, affects central temperatures, and only then reaches the east. CIR estimates quantify these differences: central temperatures maintain the longest causal reach, winds the shortest, and subsurface depth an intermediate span. Remarkably, when ACI is applied to sparse real-world ENSO observations using an imperfect model, it still recovers qualitatively similar causal patterns.

Looking ahead: wider uses and open questions

Beyond these testbeds, the authors argue that ACI is well suited to many complex systems where only a single realization and short records are available, but some model of the dynamics exists—examples include large-scale climate, ecological networks, the brain, and even engineered infrastructures. Because ACI can incorporate efficient ensemble-based assimilation techniques, it is designed to scale to very high-dimensional problems, avoiding some of the curse of dimensionality that hampers traditional information-flow methods. The framework also extends to situations with many “background” variables by carefully removing their observational uncertainty from the analysis, so that inferred causal links are not merely side effects of shared influences or mediators.

What this means in simple terms

In everyday language, ACI offers a way to watch causes at work in real time, rather than averaging them into a static diagram. By borrowing tools from weather forecasting, it asks a pragmatic question: does knowing what will happen to an observable quantity in the near future help us pin down what an unseen driver was doing just before? If the answer is yes, ACI labels that driver as causal at that moment and estimates how long its fingerprint persists. This backwards-looking, uncertainty-based view turns causality into a measurable signal in complex, noisy systems. While challenges remain—especially dealing with imperfect models and measurement noise—the approach opens a path toward more precise, time-resolved explanations of extreme events in climate and other fields where understanding who pushed whom, and when, can have profound practical consequences.

Citation: Andreou, M., Chen, N. & Bollt, E. Assimilative causal inference. Nat Commun 17, 1854 (2026). https://doi.org/10.1038/s41467-026-68568-0

Keywords: causal inference, Bayesian data assimilation, complex dynamical systems, extreme climate events, El Niño Southern Oscillation