Clear Sky Science

Model-agnostic linear-memory online learning in spiking neural networks

Why training brain-like computers is so hard

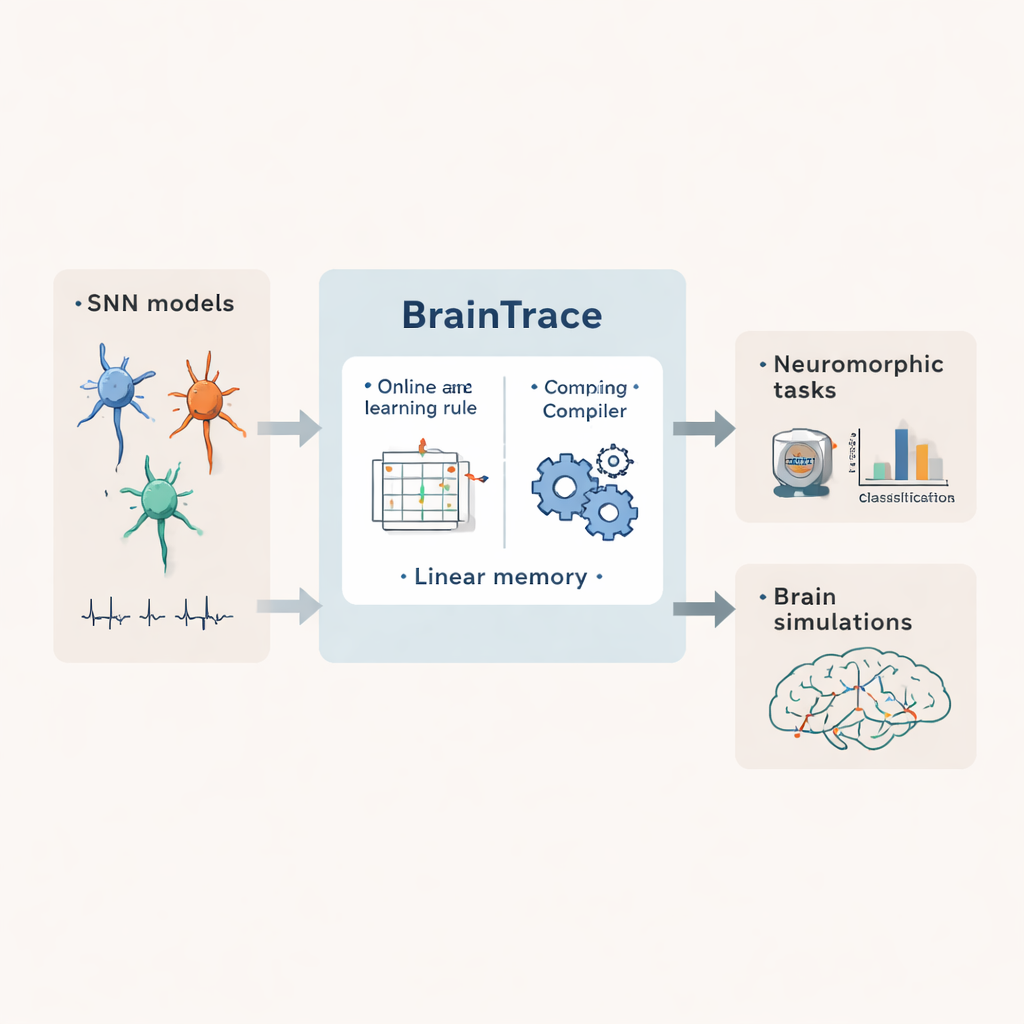

Spiking neural networks are a class of artificial networks that communicate using brief electrical pulses, much like real brain cells. They promise ultra‑efficient, brain‑inspired computing and more realistic simulations of neural circuits. But teaching these networks to perform complex tasks, especially over long stretches of time, usually demands huge amounts of computer memory and hand‑crafted code. The study summarized here introduces BrainTrace, a system designed to make training spiking networks both practical and widely usable.

Teaching networks that learn in real time

Most powerful training methods for brain‑like networks work by replaying an entire sequence of activity and pushing error signals backward through every time step. This approach, known as backpropagation through time, can be very accurate but quickly runs into trouble when sequences are long or networks are large: every intermediate state must be stored, leading to memory growth that scales with both time and network size. Alternative “online” methods update connections step by step while data stream in, which greatly reduces storage needs. However, existing online rules either work only for very simplified neuron models or still require memory that grows quadratically with network size, making them hard to apply to realistic brain‑scale systems.
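The memory scalings in this paragraph can be made concrete with a back‑of‑the‑envelope count. The sketch below is illustrative only. It assumes one state variable per neuron, an all‑to‑all network (so n² synapses), and ignores constant factors and the weights themselves, which every method must store anyway. The function name `stored_numbers` is hypothetical. The exact‑online entry corresponds to classic real‑time recurrent learning (RTRL), and the per‑neuron entry is the linear‑memory target discussed later in the article.

```python
def stored_numbers(n_neurons: int, n_steps: int) -> dict:
    """Order-of-magnitude count of numbers kept for credit assignment.

    Illustrative assumptions: one state variable per neuron, an all-to-all
    network with n_neurons**2 synapses, constant factors ignored, and the
    weights themselves (needed by every method) not counted.
    """
    n, T = n_neurons, n_steps
    return {
        # BPTT: every neuron's state at every time step, kept for the backward pass
        "bptt": n * T,
        # exact online learning (RTRL): sensitivity of every state to every weight
        "exact_online": n ** 3,
        # online rules that keep one eligibility trace per synapse
        "per_synapse_traces": n ** 2,
        # linear-memory target: one presynaptic plus one postsynaptic trace per neuron
        "per_neuron_traces": 2 * n,
    }


for n in (1_000, 125_000):
    print(n, stored_numbers(n, n_steps=1_000))
```

At 1,000 neurons and 1,000 time steps, BPTT and per‑synapse traces each keep about a million numbers and exact online learning a billion, while per‑neuron traces need two thousand. Doubling the sequence length doubles only the BPTT figure.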

A general recipe for many kinds of spiking networks

BrainTrace tackles this by first describing spiking networks in a unified way. The authors show that many neuron and synapse types can be expressed as two interacting parts: internal dynamics that describe how each neuron’s state changes over time, and interaction dynamics that turn incoming spikes into currents flowing between cells. They further introduce two modeling viewpoints, called AlignPre and AlignPost, which organize synapses either around the sending neuron or the receiving neuron. This abstraction allows a wide variety of biological and engineered models to be handled with the same mathematical machinery, from simple leaky neurons to richer cells with adaptive thresholds and complex synapses.
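One way to picture the two viewpoints is to ask where a synapse's internal state lives. The sketch below assumes a simple linear exponential synapse. AlignPre keeps one synaptic state per sending neuron and projects it through the weights. AlignPost keeps one state per receiving neuron and adds weighted spikes to it directly. The function names are hypothetical and are not BrainTrace's API.

```python
def align_pre_currents(W, spike_train, decay):
    """AlignPre: one synaptic state per presynaptic (sending) neuron."""
    n_post, n_pre = len(W), len(W[0])
    g_pre = [0.0] * n_pre
    currents = []
    for spikes in spike_train:
        # sending side: previous state decays, new spikes are added
        g_pre = [decay * g + s for g, s in zip(g_pre, spikes)]
        # project each sender's conductance through the weights onto every receiver
        currents.append([sum(W[i][j] * g_pre[j] for j in range(n_pre))
                         for i in range(n_post)])
    return currents


def align_post_currents(W, spike_train, decay):
    """AlignPost: one synaptic state per postsynaptic (receiving) neuron."""
    n_post, n_pre = len(W), len(W[0])
    g_post = [0.0] * n_post
    currents = []
    for spikes in spike_train:
        # receiving side: previous state decays, weighted incoming spikes are added
        g_post = [decay * g + sum(W[i][j] * spikes[j] for j in range(n_pre))
                  for i, g in enumerate(g_post)]
        currents.append(list(g_post))
    return currents


W = [[0.5, -0.2, 1.0],
     [0.3, 0.8, -0.4]]  # 2 receiving neurons, 3 sending neurons
spike_train = [[1, 0, 0], [0, 1, 1], [0, 0, 0], [1, 1, 0]]
currents_pre = align_pre_currents(W, spike_train, decay=0.9)
currents_post = align_post_currents(W, spike_train, decay=0.9)
```

Because this synapse model is linear, both views produce the same currents. They differ in how many synaptic states are stored (one per sender versus one per receiver), which is why choosing the cheaper alignment matters for large networks. The equivalence relies on linearity and need not hold for nonlinear synapses.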

A memory‑lean way to follow cause and effect

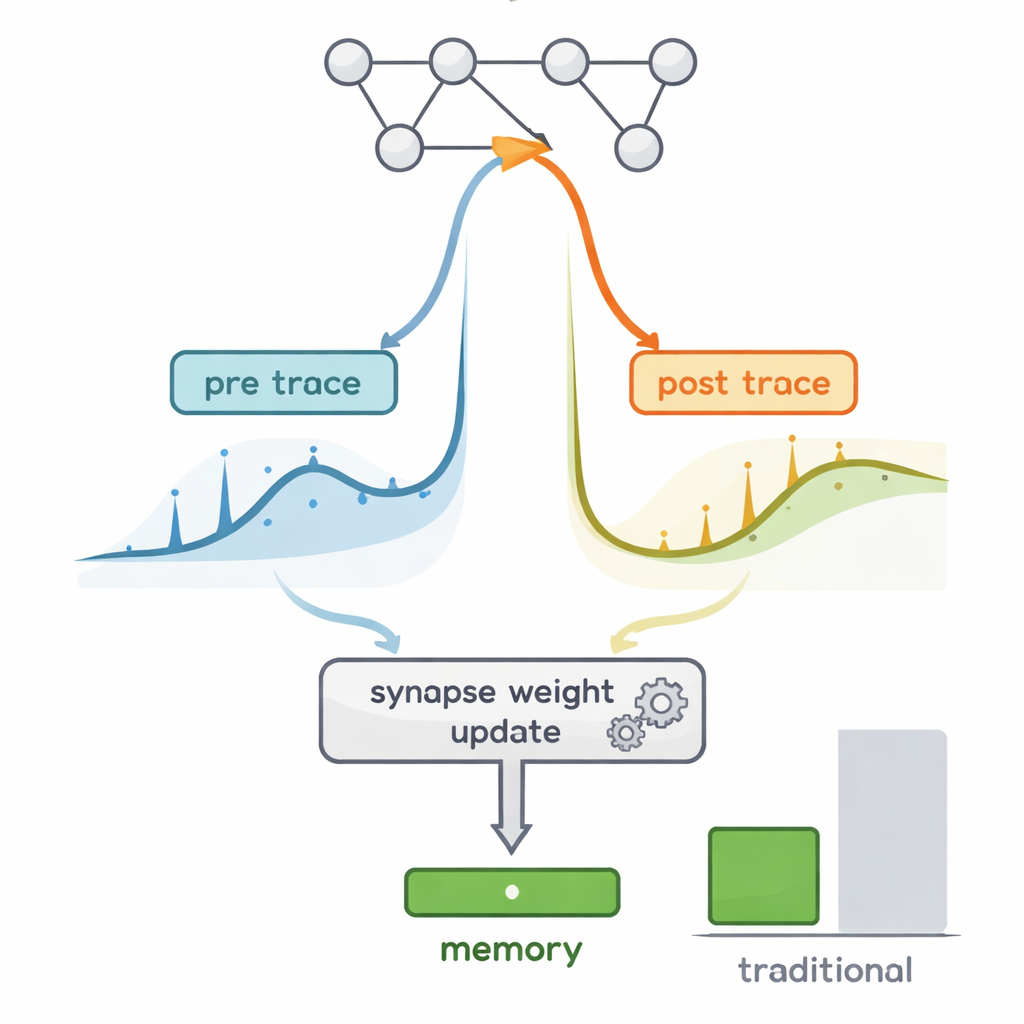

The core challenge in online learning is tracking how small changes in each connection would eventually affect network behavior, a quantity captured by so‑called “eligibility traces.” In principle, keeping full eligibility information requires tracking vast matrices that scale with the cube of the number of neurons. BrainTrace exploits three key properties of spiking networks: most neurons are silent most of the time; each neuron’s own leak and reset dominate how its state changes; and spikes and synaptic conductances are never negative. Using these facts, the authors show that the heavy eligibility matrices can be closely approximated by the product of just two compact traces: a presynaptic trace stored once per sending neuron and a postsynaptic trace stored once per receiving neuron, each summarizing that neuron’s activity. Multiplying the two gives the eligibility of any synapse between them on demand, so nothing has to be stored per synapse. This pre‑post propagation rule, dubbed pp‑prop, therefore uses memory that grows only linearly with network size, yet still produces gradients that align well with those from full backpropagation.
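The idea of replacing per‑synapse storage with per‑neuron traces can be sketched for a single layer of leaky integrate‑and‑fire neurons. This is a simplified, e‑prop‑style factorization chosen for illustration, not the authors’ pp‑prop, which derives its traces from each model’s own dynamics. Assumptions: the presynaptic trace is a leaky filter of input spikes using the membrane’s leak, the postsynaptic trace is a triangular surrogate derivative of the spike with respect to the membrane potential, and the reset is treated as a constant for credit assignment. All names are hypothetical.

```python
def surrogate_grad(v, threshold=1.0, width=0.5):
    """Triangular pseudo-derivative of the spike with respect to the membrane potential."""
    return max(0.0, 1.0 - abs(v - threshold) / width)


def run_layer(W, spike_train, alpha=0.9, threshold=1.0):
    """Run one LIF layer online, keeping one trace per pre- and per postsynaptic neuron."""
    n_post, n_pre = len(W), len(W[0])
    v = [0.0] * n_post
    pre_trace = [0.0] * n_pre    # memory grows with the number of senders
    post_trace = [0.0] * n_post  # memory grows with the number of receivers
    for x in spike_train:
        # presynaptic trace: leaky filter of input spikes with the membrane's own leak
        pre_trace = [alpha * p + xj for p, xj in zip(pre_trace, x)]
        # internal dynamics: leak plus weighted input current
        v = [alpha * vi + sum(W[i][j] * x[j] for j in range(n_pre))
             for i, vi in enumerate(v)]
        # postsynaptic trace: how sensitive each neuron's spike is to its potential
        post_trace = [surrogate_grad(vi, threshold) for vi in v]
        # spike and soft reset (reset treated as a constant for credit assignment)
        spikes = [1.0 if vi >= threshold else 0.0 for vi in v]
        v = [vi - threshold * s for vi, s in zip(v, spikes)]
    return v, pre_trace, post_trace


def eligibility(pre_trace, post_trace, i, j):
    """Approximate eligibility of synapse j -> i, formed on demand and never stored."""
    return post_trace[i] * pre_trace[j]


def online_update(W, pre_trace, post_trace, learning_signal, lr=0.01):
    """Apply dW_ij = -lr * L_i * e_ij for a per-neuron learning signal L_i."""
    return [[W[i][j] - lr * learning_signal[i] * eligibility(pre_trace, post_trace, i, j)
             for j in range(len(W[0]))]
            for i in range(len(W))]
```

When no neuron reaches threshold, the dynamics are purely leaky, and the presynaptic trace for input j equals the exact derivative of any receiving neuron’s membrane potential with respect to its weight from j. This is the sense in which a dominant self‑leak lets a per‑neuron trace stand in for per‑synapse bookkeeping. The learning signal `L_i` would come from the task error, which this sketch leaves abstract.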

Automatic tools that hide the math

Beyond the learning rule itself, BrainTrace provides a compiler that plays a role similar to automatic differentiation libraries in deep learning. A user writes down the dynamics of their spiking model in a high‑level language. The BrainTrace compiler then analyzes how states and parameters are connected, constructs the necessary eligibility traces, and emits optimized code that runs pp‑prop or a related algorithm efficiently on CPUs, GPUs, or specialized accelerators. This means modelers can focus on scientific questions instead of hand‑crafting delicate gradient code, while still benefiting from online, memory‑efficient learning.
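The model‑agnostic part of this workflow can be illustrated with a toy: the user supplies only a state‑update function, and a generic routine derives the trace update from it. This is a hypothetical sketch, not BrainTrace’s compiler. The name `compile_post_trace` is invented, derivatives are estimated by finite differences instead of automatic differentiation, and keeping only the diagonal (self) terms of the state Jacobian is an assumption that mirrors the “each neuron’s own leak and reset dominate” property.

```python
from typing import Callable, List, Sequence, Tuple

StepFn = Callable[[Sequence[float], float], List[float]]


def compile_post_trace(step_fn: StepFn, eps: float = 1e-6):
    """Hypothetical toy 'compiler': derive a per-neuron trace rule from user dynamics.

    step_fn(h, current) maps one neuron's state vector h and its input current to
    the next state. The derived rule keeps one trace per state variable,
        trace_k <- (dh'_k/dh_k) * trace_k + dh'_k/dcurrent,
    keeping only the diagonal (self) terms of the state Jacobian.
    Finite differences stand in for automatic differentiation.
    """
    def update(trace: Sequence[float], h: Sequence[float],
               current: float) -> Tuple[List[float], List[float]]:
        h_next = step_fn(h, current)
        h_next_di = step_fn(h, current + eps)
        new_trace = []
        for k in range(len(h)):
            h_pert = list(h)
            h_pert[k] += eps
            # self term: how state k at the next step depends on state k now
            self_term = (step_fn(h_pert, current)[k] - h_next[k]) / eps
            # input term: how state k at the next step depends on the input current
            input_term = (h_next_di[k] - h_next[k]) / eps
            new_trace.append(self_term * trace[k] + input_term)
        return h_next, new_trace
    return update


# A user-written neuron: membrane potential v plus a slow adaptation variable a
# (subthreshold part only; spike and reset are left out to keep it smooth).
def adaptive_step(h, current, alpha=0.9, rho=0.95, beta=0.1):
    v, a = h
    return [alpha * v + current - beta * a, rho * a + 0.05 * v]


step = compile_post_trace(adaptive_step)
h, trace = [0.0, 0.0], [0.0, 0.0]
for current in [1.0, 0.5, 0.0, 1.0]:
    h, trace = step(trace, h, current)
```

The same `compile_post_trace` call works unchanged for any other smooth `step_fn`, which is the point of the exercise. The couplings between `v` and `a` are cross terms that this diagonal rule ignores, so the resulting trace is an approximation rather than an exact gradient.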

From tiny sensors to a whole fly brain

The authors test BrainTrace on standard neuromorphic benchmarks, where spiking networks classify event‑based versions of images, sounds, and gestures. Across multiple datasets and architectures, pp‑prop matches the accuracy of full backpropagation while using orders of magnitude less memory and running faster than other online methods. Crucially, the system also scales to demanding neuroscience problems. In one example, a biologically detailed spiking network with separate excitatory and inhibitory populations learns an evidence‑accumulation decision task and develops activity patterns resembling those recorded in mouse cortex. In another, a spiking model with more than 125,000 neurons, wired according to the fruit fly connectome, is trained to reproduce resting‑state activity recorded across the entire fly brain, a task whose memory demands exceed what conventional training can fit on a single graphics card.

What this means for future brain‑like computing

For non‑experts, the main message is that BrainTrace turns training rich, brain‑scale spiking networks in real time from a once‑impractical goal into a realistic possibility. By keeping track of cause and effect with only a small amount of memory, and by wrapping this in automated tools, the work brings brain‑inspired computing closer to everyday use in both artificial intelligence and basic neuroscience. It suggests a path toward machines that learn and adapt with the efficiency and temporal precision of real nervous systems, without demanding supercomputer‑level resources.

Citation: Wang, C., Dong, X., Ji, Z. et al. Model-agnostic linear-memory online learning in spiking neural networks. Nat Commun 17, 1745 (2026). https://doi.org/10.1038/s41467-026-68453-w

Keywords: spiking neural networks, online learning, neuromorphic computing, brain simulation, gradient-based training