Clear Sky Science · en

Single-shot matrix-matrix photonic processor based on spatial-spectral hypermultiplexed parallel diffraction

Why Faster, Greener Computing Matters

Every time we ask a digital assistant a question or scroll through social media, powerful artificial intelligence models are working behind the scenes. These models are becoming so large that conventional computer chips are struggling to keep up without using enormous amounts of energy. This article describes a new kind of computing hardware that uses light instead of electricity to carry out key AI calculations, aiming to make future machines both faster and far more energy-efficient.

Turning Light into a Calculator

Modern AI runs on operations called matrix multiplications, repeated billions or trillions of times when a neural network analyzes images or text. Electronic chips do this work reliably but waste a lot of energy just moving data back and forth inside the chip. The researchers behind this study build on a different idea: let light itself perform the math. In an optical neural network, information is encoded into laser beams, manipulated as the beams pass through lenses and modulators, and then read out by light sensors. Because photons do not heat up wires the way electrons do, such systems can, in principle, reach far higher speeds and efficiencies.
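To make "matrix multiplication" concrete: each entry of the product is a sum of products, and each multiply-then-add is one multiply-and-accumulate (MAC) step. The sketch below is plain Python written for illustration; the function name `matmul_with_mac_count` is hypothetical and does not come from the paper. It counts MAC steps as it goes, because that count is what optical processors try to perform in parallel.

```python
def matmul_with_mac_count(a, b):
    """Multiply a (n x k) by b (k x m) using explicit multiply-accumulate steps.

    Returns the product matrix and the number of MAC operations performed.
    """
    n, k = len(a), len(a[0])
    if len(b) != k:
        raise ValueError("inner dimensions must match")
    m = len(b[0])
    c = [[0.0] * m for _ in range(n)]
    macs = 0
    for i in range(n):
        for j in range(m):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-and-accumulate step
                macs += 1
            c[i][j] = acc
    return c, macs
```

For two 16×16 matrices the counter reaches 16 × 16 × 16 = 4096, the same figure quoted below for the optical processor's single shot.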

Making Many Calculations in One Shot

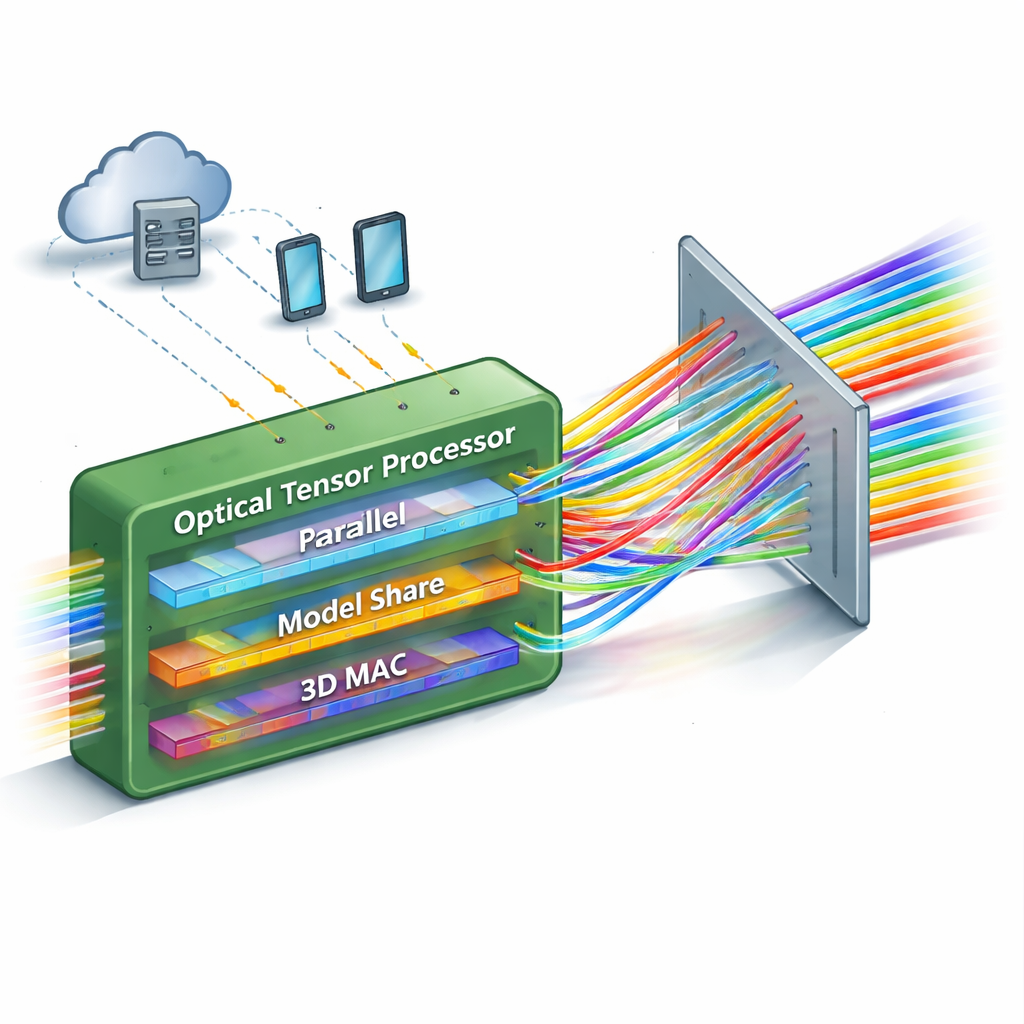

Most existing optical neural networks have a limitation: they can only handle a modest number of calculations in parallel, or they become too complex to scale up. This work introduces a “single-shot” matrix–matrix photonic processor that dramatically boosts how many operations can be done at once. The key idea is to pack information into three different aspects of light simultaneously—its position in space, its color (wavelength), and its timing. By carefully arranging these dimensions, the device can perform a full matrix–matrix multiplication, involving thousands of multiply-and-accumulate steps, in a single pass of light through the system.
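One way to picture "a single pass" is to form every individual product at the same time and then add up the right ones. In the sketch below, each product a[i][p] * b[p][j] gets its own slot in a grid addressed by three indices, loosely analogous to giving each piece of information its own combination of position, wavelength, and timing. Which index would correspond to which property of light is an assumption for illustration only; the paper's actual assignment may differ, and the function names are hypothetical.

```python
def all_products(a, b):
    """Form every product a[i][p] * b[p][j] at once, stored as a 3-index grid [i][p][j]."""
    n, k, m = len(a), len(b), len(b[0])
    return [[[a[i][p] * b[p][j] for j in range(m)] for p in range(k)] for i in range(n)]


def sum_over_inner(products):
    """Collapse the inner index p, as a detector summing all light that reaches it would."""
    n, k, m = len(products), len(products[0]), len(products[0][0])
    return [[sum(products[i][p][j] for p in range(k)) for j in range(m)] for i in range(n)]
```

For 16×16 inputs the grid holds 4096 products, and summing along the inner index reproduces the ordinary matrix product.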

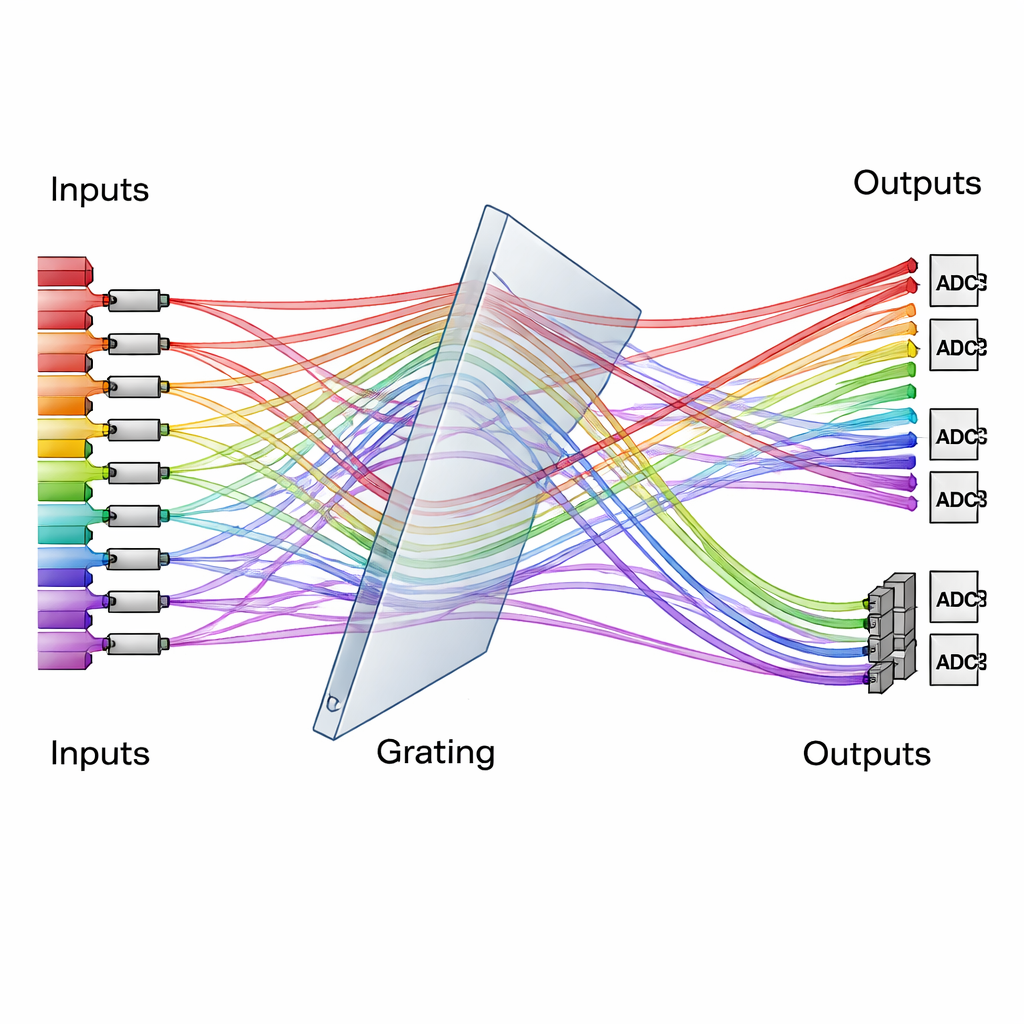

A Diffraction Grating as a Traffic Controller for Light

At the heart of the design is a simple but powerful optical element: a diffraction grating, which splits light into different angles depending on its color. The team arranges a three-dimensional grating system so that it acts like a traffic controller, routing many colored beams from many input channels into reordered output channels. Data to be processed are encoded as light intensities on one set of modulators, while the neural network’s “weights” are encoded on another set. When the beams meet and pass through the grating, their paths are rearranged so that each output channel naturally sums the right combinations of data and weights. Time-integrating detectors then accumulate contributions over several short time steps, effectively extending the size of the computation without adding extra complexity to the optics.
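Extending a computation with a time-integrating detector amounts to splitting the long sum inside each output into shorter pieces, one per time step, while the detector keeps a running total. The sketch below models this as an ordinary blocked matrix multiplication. Treating each chunk as one "time step" and the running sum as the detector is an illustrative simplification, not a description of the authors' hardware; the function name is hypothetical, and the default chunk size of 16 only echoes the 16-channel demonstration.

```python
def matmul_by_time_steps(a, b, chunk=16):
    """Compute a @ b by splitting the inner dimension into chunks of `chunk` terms.

    Each chunk stands in for one short time step; `out` stands in for a
    time-integrating detector that keeps adding new contributions.
    """
    n, k, m = len(a), len(b), len(b[0])
    out = [[0] * m for _ in range(n)]
    steps = 0
    for start in range(0, k, chunk):
        stop = min(start + chunk, k)
        for i in range(n):
            for j in range(m):
                # Partial sum delivered during this time step, added to the running total.
                out[i][j] += sum(a[i][p] * b[p][j] for p in range(start, stop))
        steps += 1
    return out, steps
```

With an inner dimension of 48 and chunks of 16, three time steps suffice, and the result matches the unsplit product, because the terms of a sum can be regrouped freely (exactly for integers, up to rounding for floating-point values).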

From Lab Setup to Real AI Tasks

The authors demonstrate a 16-by-16-by-16-by-16 optical tensor processor, meaning it can multiply a 16×16 matrix by another 16×16 matrix in one optical “shot,” performing 4096 (16×16×16) multiply-and-accumulate operations at once. The system runs at multi-gigahertz clock rates and reaches an effective computing precision of more than eight bits, comparable to many practical AI accelerators. To show that this is not just a physics demonstration, they use the processor to run parts of a small image-recognition pipeline: a convolutional neural network that extracts features from digit images, followed by a fully connected neural network that classifies them. Even with optical noise and hardware imperfections, the setup correctly recognizes handwritten digits with about 96% accuracy, close to an all-digital implementation of the same model.
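The headline numbers can be related with simple arithmetic: the operation count of one shot times the number of shots per second gives throughput. The sketch below assumes, purely for illustration, that one shot completes per clock cycle and that one MAC counts as two operations (a common convention in accelerator benchmarks). The function names are hypothetical, and the 10 GHz rate used in the usage note is a stand-in for "multi-gigahertz", not a figure from the paper.

```python
def macs_per_shot(n, k, m):
    """Multiply-accumulate operations in one (n x k) @ (k x m) matrix product."""
    return n * k * m


def throughput_tops(macs, shots_per_second, ops_per_mac=2):
    """Tera-operations per second, counting each MAC as `ops_per_mac` operations."""
    return macs * shots_per_second * ops_per_mac / 1e12
```

Under those assumptions, `throughput_tops(macs_per_shot(16, 16, 16), 10e9)` comes out at roughly 82 TOPS; a real figure would depend on the actual clock rate and on how often a full shot completes.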

Energy Use, Sensitivity, and How Far It Can Scale

Because the architecture reuses the same optical components across many parallel channels and accumulates signals efficiently, each basic operation can be carried out with extremely little energy—down to tens of attojoules of optical energy per multiplication. The authors estimate an overall energy efficiency already surpassing some state-of-the-art electronic AI accelerators, and argue that modest improvements in modulators and digital-to-analog converters could push this into the hundreds of trillions of operations per second per watt. Importantly, the design avoids some of the scaling roadblocks that plague other optical schemes, so larger versions with many more channels (for example 30×30 or even 60×60 arrays) appear feasible using similar components.
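Energy per operation and operations per second per watt are two views of the same quantity: one watt is one joule per second, so operations per second per watt equals operations per joule. The sketch below converts between the two. The example values (10 aJ of optical energy per operation, a 200 TOPS/W target) are illustrative assumptions chosen to sit within the ranges mentioned above, not measurements from the paper, and the helper names are hypothetical.

```python
ATTOJOULE = 1e-18
FEMTOJOULE = 1e-15


def tops_per_watt(energy_per_op_joules):
    """Operations per joule (= ops per second per watt), expressed in tera-ops."""
    return 1.0 / energy_per_op_joules / 1e12


def energy_per_op_for(target_tops_per_watt):
    """Energy budget per operation, in joules, needed to reach a target TOPS/W."""
    return 1.0 / (target_tops_per_watt * 1e12)
```

Optical energy alone at 10 aJ per operation would correspond to about 100,000 TOPS/W, while a 200 TOPS/W system has a total budget of about 5 fJ per operation. That gap of several hundred times is one way to see why the electronics, such as modulators and digital-to-analog converters, rather than the light itself, are the parts the authors point to for improvement.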

What This Means for Everyday Technology

In plain terms, this research shows that a relatively simple optical setup—a smart way of routing colored light beams through a diffraction grating—can act as a powerful, low-energy engine for AI-style calculations. While this is still a laboratory prototype, it points toward future data centers and edge devices where light-based processors handle the heaviest neural network workloads, cutting energy bills and enabling larger, faster models. If such photonic tensor processors can be integrated and manufactured at scale, they could become a key ingredient in the next generation of high-performance, energy-efficient artificial intelligence hardware.

Citation: Luan, C., Davis III, R., Chen, Z. et al. Single-shot matrix-matrix photonic processor based on spatial-spectral hypermultiplexed parallel diffraction. Nat Commun 17, 484 (2026). https://doi.org/10.1038/s41467-026-68452-x

Keywords: optical neural networks, photonic computing, matrix multiplication, energy-efficient AI hardware, diffraction grating