Clear Sky Science

Distinct roles of cortical layer 5 subtypes in associative learning

How the Brain Learns That a Touch Predicts a Treat

Imagine learning that a gentle tap on your wrist means dessert is coming. Your brain has to link a simple touch with a future reward. This study looks inside the mouse brain to see how two different types of nerve cells work together to form those links, revealing how everyday learning is split between “what happened” and “what it means for me.”

A Simple Whisker Game to Study Learning

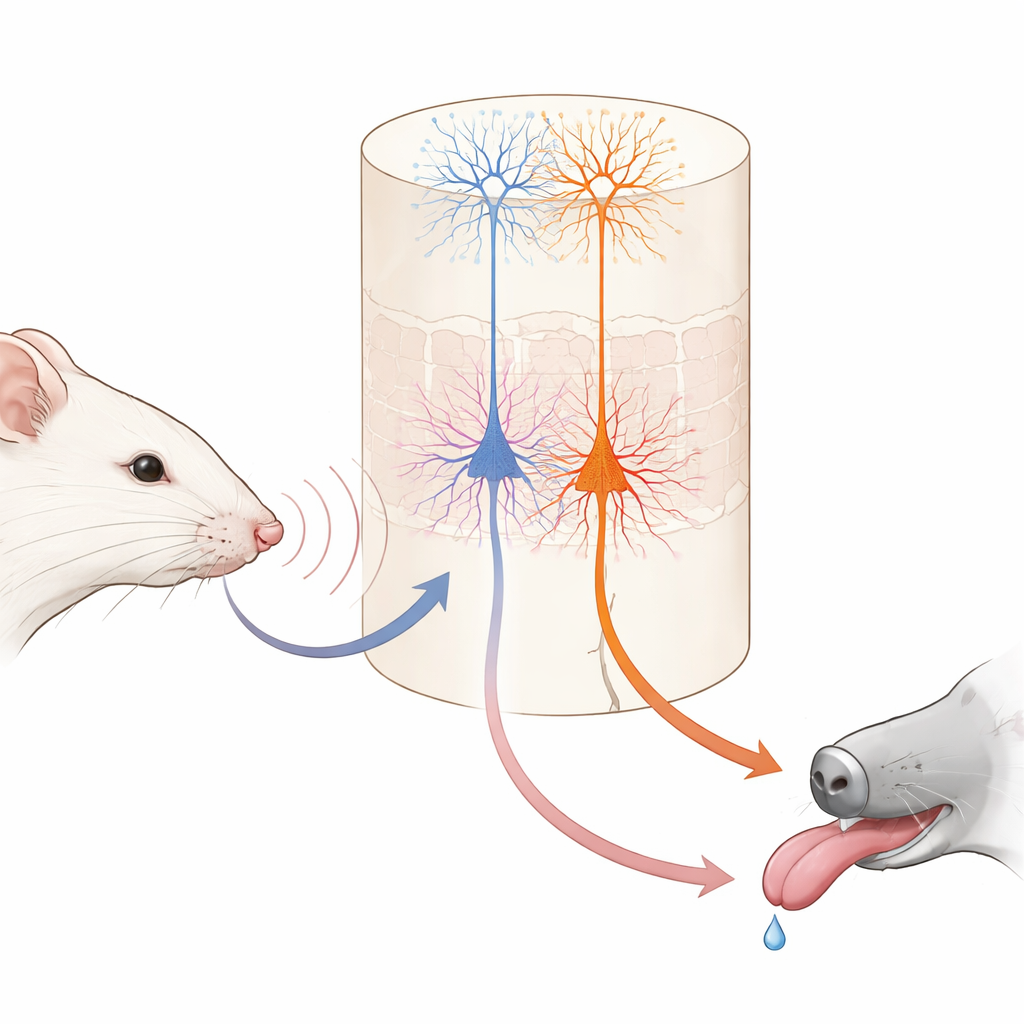

The researchers trained head‑fixed mice on a straightforward game using their facial whiskers. In each trial, a single whisker was vibrated at one of two speeds. One vibration was followed, after a brief delay, by a tiny drop of water; the other was never rewarded. At first, the mice licked in anticipation during both kinds of trials, not yet knowing which whisker sensation signaled water. Over several days, they gradually learned to lick early only when the “good” vibration occurred, and to hold back when the unrewarded one appeared. When the scientists temporarily shut down the primary touch area of the brain during training, the mice largely failed to learn. This shows that the sensory region is important for building the association, even though expert mice could later perform the task without it.

Two Output Cell Types with Very Different Jobs

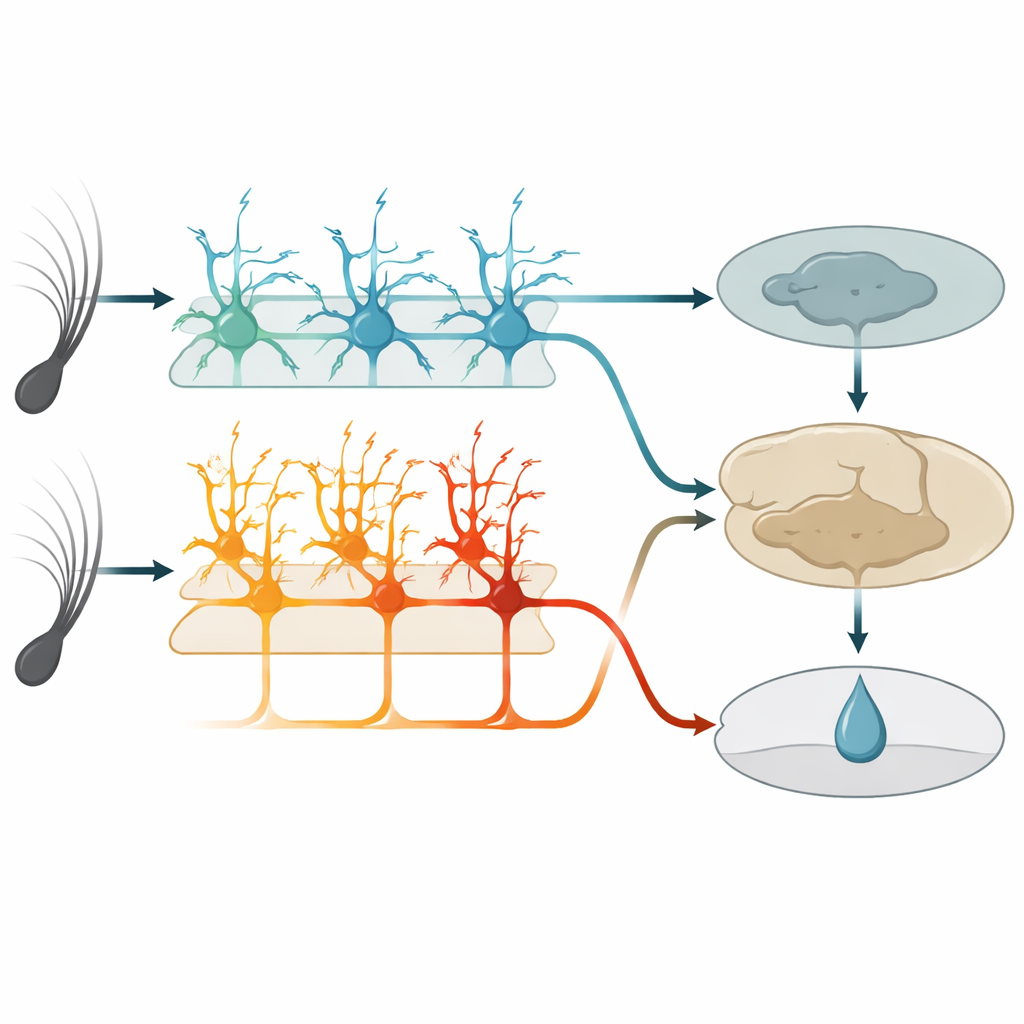

Within this touch area, a deep layer known as layer 5 contains two main kinds of output nerve cells. One group, called IT cells here, sends signals to other cortical regions in both brain hemispheres. The other group, ET cells, sends signals mainly downward to subcortical structures involved in action and reward. Using genetically modified mice and high‑resolution two‑photon imaging, the authors could selectively monitor each cell type’s activity through the tips of their long, tree‑like branches. Before learning, IT cells already responded strongly and reliably to whisker vibrations, and their combined activity could accurately tell the two vibration speeds apart. ET cells, in contrast, responded more weakly and less consistently to the stimuli, offering only a fuzzy readout of which vibration had occurred.

Stable Sensations Versus Growing Expectations

As the mice learned, IT cells behaved like dependable reporters. Their responses stayed tightly locked to the moment the whisker moved and changed little from day to day. They continued to encode which vibration happened, regardless of whether it predicted a reward. ET cells, however, transformed their behavior. Instead of simply spiking at stimulus onset, their activity gradually ramped up during the vibration and the short delay, peaking around the expected time of water delivery. This ramping grew in step with the animals’ anticipatory licking and became better at predicting whether a trial would end in licking than at reporting the exact stimulus. Individual ET cells shifted in and out of the active group across days, but at the population level the pattern became more consistent, suggesting a flexible but convergent code for reward expectation.

Switching Off Each Cell Type Reveals a Division of Labor

To test function, the team used chemogenetic tools to selectively dampen either IT or ET cells during training. When IT cells were silenced, mice showed fewer anticipatory licks overall and failed to build a clear difference between rewarded and unrewarded vibrations. When ET cells were silenced, the outcome was reversed: mice licked excessively in response to both vibrations, especially the unrewarded one, and failed to refine their behavior as training went on. Silencing either group after the task was mastered no longer hurt performance, implying that once other brain regions have stored the association, this sensory area and its layer‑5 outputs become less critical for executing the learned response.

A Learning Model that Mirrors Brain Behavior

The authors built a reinforcement‑learning style computer model to interpret these findings. In the model, an IT‑like network provides stable sensory representations that help estimate the “value” of each stimulus—how likely it is to be followed by reward. An ET‑like pathway relays this predicted value to a downstream circuit that compares it with the actual reward, generating a prediction error that adjusts future value estimates. Blocking the IT or ET pathways in the model reproduced the distinct learning failures seen in the mice: without IT‑like input, learning was slow and weak for both stimuli; without ET‑like output, initial learning occurred but the system failed to properly reduce responses to the unrewarded cue. The model also captured how, over time, non‑sensory regions could take over performance, consistent with the experiments.
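To make the logic of such a model concrete, here is a minimal sketch of a prediction-error learner in the style of the classic Rescorla-Wagner rule. It is not the authors' model. The function name `simulate`, the gain parameters `it_gain` and `et_gain`, the learning rate, the starting value, and the number of trials are all illustrative assumptions. The sketch treats the IT-like pathway as the strength of the sensory signal and the ET-like pathway as the strength with which the predicted value reaches the comparison stage.

```python
def simulate(it_gain=1.0, et_gain=1.0, n_trials=50, lr=0.1, w0=0.5):
    """Toy prediction-error learner (illustrative only, not the published model).

    it_gain: assumed strength of the IT-like sensory signal (1.0 = intact).
    et_gain: assumed strength of the ET-like relay of predicted value (1.0 = intact).
    Returns the learned value of each cue, read here as anticipatory licking.
    """
    rewards = {"rewarded": 1.0, "unrewarded": 0.0}
    # Both cues start with the same weight: naive mice lick to both vibrations.
    weights = {cue: w0 for cue in rewards}

    for _ in range(n_trials):
        for cue, reward in rewards.items():
            sensory = it_gain                  # IT-like: stable stimulus signal
            value = weights[cue] * sensory     # estimated value of this cue
            relayed = et_gain * value          # ET-like: prediction sent downstream
            error = reward - relayed           # prediction error at the comparator
            weights[cue] += lr * error * sensory  # error adjusts future estimates

    return {cue: weights[cue] * it_gain for cue in rewards}


if __name__ == "__main__":
    for label, kwargs in [
        ("control", {}),
        ("IT dampened", {"it_gain": 0.2}),
        ("ET dampened", {"et_gain": 0.2}),
    ]:
        print(label, simulate(**kwargs))
```

Under these assumptions, the intact learner separates the two cues cleanly. Dampening the IT-like signal leaves both values low and poorly separated, and dampening the ET-like relay leaves the value of the unrewarded cue elevated because the prediction that should cancel it arrives too weakly. This qualitatively echoes the pattern described above, but the numbers themselves carry no biological meaning.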

What This Means for Everyday Learning

In plain terms, this study suggests that when we learn that a particular sight, sound, or touch predicts something good or bad, different sets of deep cortical neurons share the work. One set keeps a faithful record of “what happened” in the world, while another gradually comes to signal “what I expect will happen next” and helps fine‑tune behavior by comparing those expectations with reality. Together they form a bridge between raw sensation and flexible, experience‑based actions, offering a clearer picture of how the brain forms habits and learns from rewards, and possibly of how these processes go awry in disease.

Citation: Moberg, S., Garibbo, M., Mazo, C. et al. Distinct roles of cortical layer 5 subtypes in associative learning. Nat Commun 17, 2648 (2026). https://doi.org/10.1038/s41467-026-68307-5

Keywords: associative learning, somatosensory cortex, layer 5 neurons, reward expectation, reinforcement learning