Clear Sky Science · en

Reducing bulky medical images via shape-texture decoupled deep neural networks

Why shrinking medical images matters

Modern hospitals generate vast numbers of detailed 3D scans from CT and MRI machines. These images are essential for diagnosis and research, but they are huge: a single dataset can take up hundreds of gigabytes, making it slow and expensive to store, share, and analyze. This paper introduces a new way to dramatically shrink these bulky files while keeping their diagnostic detail almost intact, potentially speeding up clinical work, remote consultation, and large-scale medical studies.

Two kinds of information in one scan

When you look at a body scan, you are really seeing two different kinds of information at once. First is the overall shape of organs and bones – where the spine curves, how big the liver is, the layout of the abdomen. Second is the fine-grained texture – tiny variations in brightness that hint at tissue types or subtle disease. The authors argue that most existing compression tools treat these two ingredients as a single, entangled signal, which makes compression slower and less efficient. Their key idea is to separate shape and texture and compress each with the strategy that suits it best.
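To make the shape/texture split concrete, here is a toy one-dimensional illustration. It is not DeepSTD's algorithm: the "template" is a made-up intensity profile, the shape difference is a single hypothetical shift rather than a full 3D warp, and the texture is an invented ripple. Once the shift is undone by resampling, what is left over is small and close to the texture alone.

```python
import math

# Toy 1D illustration (hypothetical, not the DeepSTD algorithm): a "patient"
# profile is a shifted copy of a template (shape) plus small ripples (texture).

def warp(signal, shift):
    """Resample `signal` at positions i + shift with linear interpolation,
    clamping positions that fall outside the signal to its edges."""
    n = len(signal)
    out = []
    for i in range(n):
        x = min(max(i + shift, 0.0), n - 1.0)
        lo = int(math.floor(x))
        hi = min(lo + 1, n - 1)
        t = x - lo
        out.append((1 - t) * signal[lo] + t * signal[hi])
    return out

n = 200
# Template: a smooth bump standing in for an organ.
template = [math.exp(-((i - 100) / 20) ** 2) for i in range(n)]
# Texture: small high-frequency ripples unique to this patient.
texture = [0.02 * math.sin(1.7 * i) for i in range(n)]
shift = 12.5  # shape difference: the "organ" sits 12.5 samples further along

# Patient = template moved right by `shift`, plus texture.
patient = [p + t for p, t in zip(warp(template, -shift), texture)]

# Undo the shape difference by warping the patient back onto the template grid.
aligned = warp(patient, shift)
residual = [a - b for a, b in zip(aligned, template)]

mismatch_before = max(abs(p - t) for p, t in zip(patient, template))
mismatch_after = max(abs(r) for r in residual)
print(f"largest mismatch before alignment: {mismatch_before:.3f}; after: {mismatch_after:.3f}")
```

Before alignment, the mismatch is dominated by the displaced bump; after alignment, only the small ripple (slightly smoothed by interpolation) remains, which is the part a texture model would need to encode.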

A template-based blueprint for the body

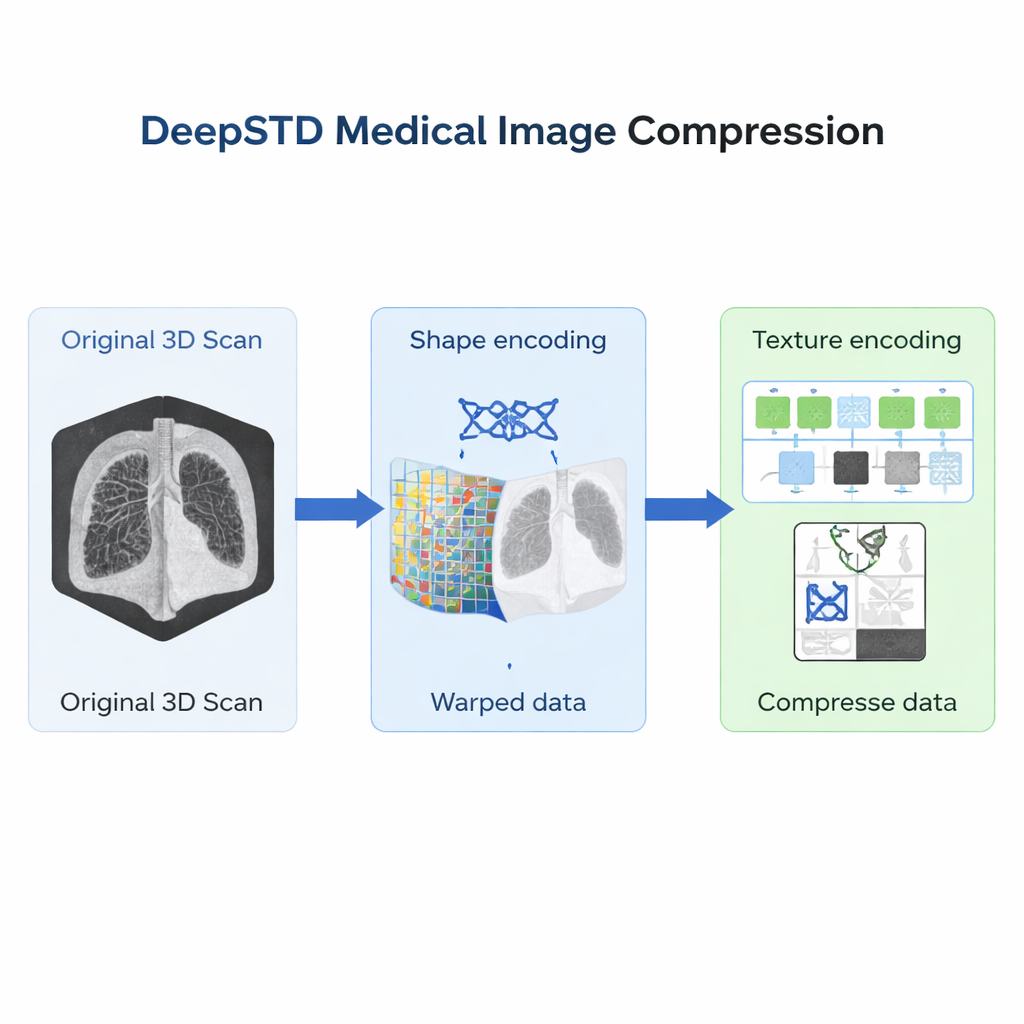

The new method, called Shape-Texture Decoupled Compression (DeepSTD), starts by choosing a “template” scan for a given body region and imaging type, such as torso CT or abdominal MRI. This template acts as a standard map of that anatomy. For each new scan, DeepSTD first works out how that person’s body must be smoothly warped to line up with the template. The resulting warping field describes the shape differences: perhaps one patient is taller, another has a slightly shifted liver, and a third has a spine with a different curvature. The authors represent this warping field with a compact neural network that excels at encoding smooth 3D deformations, so the shape information can be stored efficiently.
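Why can a warp be stored compactly? A smooth deformation changes slowly from voxel to voxel, so it can be described as a function of position rather than as a separate displacement vector for every voxel. The rough size comparison below assumes a hypothetical 256 × 512 × 512 volume and a hypothetical small coordinate network (three hidden layers of 64 units) mapping (x, y, z) to a displacement (dx, dy, dz). The article does not give DeepSTD's actual network size, and real systems may also quantize or entropy-code their weights.

```python
# Back-of-the-envelope comparison (all sizes hypothetical): a dense 3D
# displacement field stored voxel by voxel versus the weights of a small
# coordinate network that maps (x, y, z) to (dx, dy, dz).

def dense_field_bytes(depth, height, width, bytes_per_value=4):
    """One 3-component float32 displacement vector per voxel."""
    return depth * height * width * 3 * bytes_per_value

def mlp_param_count(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical coordinate network: 3 inputs -> three hidden layers of 64 -> 3 outputs.
layers = [3, 64, 64, 64, 3]
params = mlp_param_count(layers)
dense = dense_field_bytes(256, 512, 512)
compact = params * 4  # float32 weights

print(f"dense field: {dense:,} bytes; network: {compact:,} bytes; "
      f"about {dense // compact:,}x smaller")
```

The trade-off is that a network this small can only represent smooth fields, which fits the article's description of handling fine detail separately, in the texture code.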

Capturing subtle textures after alignment

Once a scan is morphed to match the template’s shape, what remains are mostly texture differences – the subtle intensity patterns that distinguish one patient from another. Because all scans are now in the same geometric layout, these textures are easier to model and compress. DeepSTD feeds the aligned data into a second neural network that mixes convolutional layers (good at local detail) with Transformer blocks (good at capturing longer-range structure) in full 3D. This network learns, from many examples, which texture details are common and which are unique, allowing it to store only the essentials in a compact “latent code.” The final compressed file is just the shape code plus the texture code.
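The statement that the compressed file is just the shape code plus the texture code can be pictured as a simple container. The layout below (a four-byte tag, then the two code lengths, then the codes themselves) is a hypothetical format invented for illustration, not DeepSTD's actual file format; the tag `STD0` and the functions `pack` and `unpack` are likewise made up. The template scan does not appear in the file, since the article describes the file as holding only the two codes.

```python
import struct

# Hypothetical container layout (not the paper's file format):
# little-endian header = magic tag, shape-code length, texture-code length.
HEADER = struct.Struct("<4sII")
MAGIC = b"STD0"

def pack(shape_code: bytes, texture_code: bytes) -> bytes:
    """Concatenate the header and both codes into one compressed blob."""
    return HEADER.pack(MAGIC, len(shape_code), len(texture_code)) + shape_code + texture_code

def unpack(blob: bytes):
    """Split a blob produced by `pack` back into (shape_code, texture_code)."""
    magic, n_shape, n_texture = HEADER.unpack_from(blob, 0)
    if magic != MAGIC:
        raise ValueError("not a shape/texture container")
    start = HEADER.size
    end = start + n_shape + n_texture
    if len(blob) < end:
        raise ValueError("truncated container")
    return blob[start:start + n_shape], blob[start + n_shape:end]

# Usage with placeholder byte strings standing in for the two codes.
blob = pack(b"shape-weights", b"texture-latents")
shape_code, texture_code = unpack(blob)
```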

Testing on real CT and MRI collections

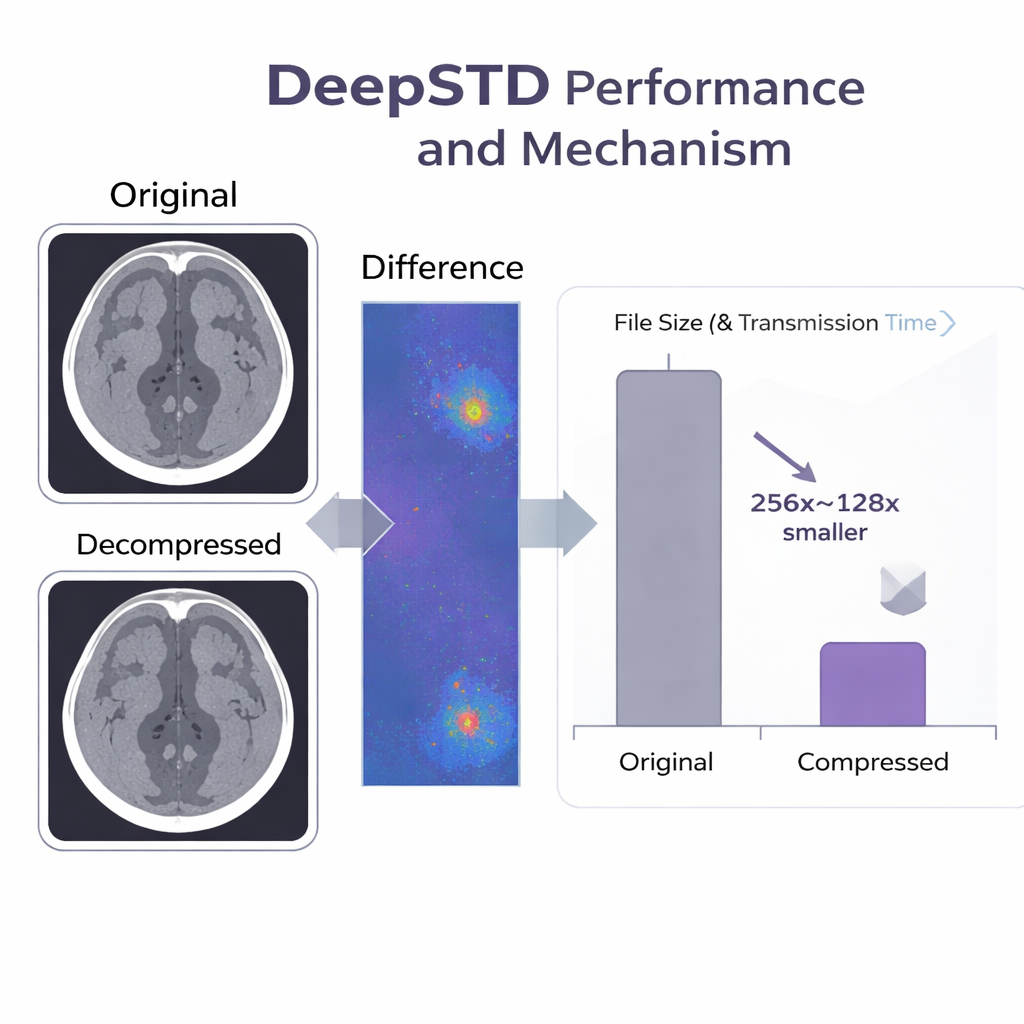

The team tested DeepSTD on large public datasets, including detailed spine CT scans and abdominal MRI volumes. They compared it with both traditional codecs (such as JPEG, HEVC, and newer video standards) and state-of-the-art neural methods. At compression ratios of up to 256 to 1, DeepSTD preserved both pixel-level similarity to the originals and medically important features, such as automatic organ segmentations, far better than the alternatives. It also encoded scans tens to more than a hundred times faster than the best prior neural compression system, which is based on implicit neural representations alone. In practical terms, a CT dataset that once took days to download over a slow connection could be transferred in under half an hour with DeepSTD, with almost no visible loss.
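The evaluation checks reconstructions on two levels: how closely the intensities match the original, and whether automatic organ segmentations still agree. This summary does not name the exact metrics; peak signal-to-noise ratio (PSNR) and the Dice overlap coefficient are common choices for these two checks, so the sketch below assumes them. It works on flat Python lists for clarity, whereas a real pipeline would use array libraries on full 3D volumes.

```python
import math

def psnr(original, reconstructed, data_range):
    """Peak signal-to-noise ratio in dB between two flattened intensity lists."""
    if not original or len(original) != len(reconstructed):
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(data_range ** 2 / mse)

def dice(mask_a, mask_b):
    """Dice overlap of two binary masks: 1.0 means identical, 0.0 means disjoint."""
    inter = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    return 1.0 if total == 0 else 2 * inter / total

# Usage: one voxel off by 10 intensity units, and one voxel missing from a mask.
print(psnr([0, 100, 200, 300], [0, 100, 200, 290], data_range=300))
print(dice([1, 1, 0, 0], [1, 0, 0, 0]))
```

Reporting both matters because a codec can score well on average intensity error while still blurring an organ boundary enough to change a segmentation.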

Built for everyday clinical use

Beyond raw numbers, the authors designed DeepSTD with real-world constraints in mind. The method can run on multiple graphics cards in parallel, further cutting encoding and decoding times for large collections. It allows precise control over the compression ratio, so hospitals can match file size to available storage or network bandwidth. The system also works when training data are limited, thanks to data augmentation and “knowledge distillation” techniques that transfer what has been learned from richer datasets. Tests on additional chest X-rays and brain and knee MRI scans suggest that the approach applies broadly across imaging types.
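The article says DeepSTD offers precise control over the compression ratio but does not explain the mechanism. One simple way to think about such control is as a byte budget: fix the target ratio, subtract the size of the shape code, and give the rest to the texture code. The rule below, the function names, and the example sizes (a 16-bit 256 × 512 × 512 volume, a 35,084-byte shape code, a 10 Mbit/s link) are all hypothetical.

```python
def texture_budget(original_bytes, target_ratio, shape_code_bytes, header_bytes=0):
    """Bytes available for the texture code so that the whole file is at most
    original_bytes / target_ratio. Hypothetical budgeting rule."""
    total_budget = original_bytes // target_ratio
    remaining = total_budget - shape_code_bytes - header_bytes
    if remaining <= 0:
        raise ValueError("the shape code alone already exceeds the target size")
    return remaining

def transfer_seconds(n_bytes, megabits_per_second):
    """Idealized transfer time, ignoring protocol overhead."""
    return n_bytes * 8 / (megabits_per_second * 1e6)

# Hypothetical example: a 256 x 512 x 512 volume stored as 16-bit integers.
original = 256 * 512 * 512 * 2  # 134,217,728 bytes
budget = texture_budget(original, 256, shape_code_bytes=35_084)
print(f"texture code may use {budget:,} bytes")
print(f"original: {transfer_seconds(original, 10):.0f} s at 10 Mbit/s; "
      f"compressed: {transfer_seconds(original // 256, 10):.2f} s")
```

Because transfer time scales linearly with file size in this idealized model, a 256-fold smaller file also moves roughly 256 times faster over the same link.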

What this means for patients and doctors

For a non-specialist, the takeaway is simple: DeepSTD is a smarter way to pack medical images. By separately encoding how a patient’s body is shaped and how their tissues look, it shrinks scans more than a hundredfold while keeping the information doctors and algorithms rely on. This could make it much easier to store long-term imaging records, share data between hospitals, and run large-scale AI studies, with little or no loss of diagnostic quality.

Citation: Yang, R., Xiao, T., Cheng, Y. et al. Reducing bulky medical images via shape-texture decoupled deep neural networks. Nat Commun 17, 1573 (2026). https://doi.org/10.1038/s41467-026-68292-9

Keywords: medical image compression, deep learning, CT and MRI data, neural representation, health data storage