Clear Sky Science

High-clockrate free-space optical in-memory computing

Why this matters for everyday smart tech

From self-driving cars and delivery drones to high‑speed trading and remote surgery, more and more decisions have to be made in fractions of a second, often far away from big data centers. Today’s electronics struggle to keep up without overheating or draining batteries. This paper introduces a new kind of light‑based computing engine that can perform key artificial intelligence tasks extremely fast and with low energy use, potentially transforming how smart devices work at the “edge” of the network.

Turning light into a calculator

Modern AI relies heavily on one basic operation: multiplying and adding large grids of numbers, similar to repeatedly sliding a small stencil over a picture and tallying what it sees. Doing this with electrons on chips is powerful but wasteful, because data must constantly be shuttled back and forth between memory and processors. The researchers instead build a system called FAST‑ONN that lets light do much of the work in mid‑air. They use tiny semiconductor lasers arranged in a neat grid to encode image pixels as light intensity, then let those beams travel through optical components that apply the “weights” of a neural network directly in space, before landing on light sensors that turn the results back into electrical signals.
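The "sliding stencil" picture can be made concrete with a few lines of Python. This is a minimal sketch for illustration only, not the authors' code: the function name `conv2d_valid`, the 6×6 test image, and the averaging stencil are all assumptions chosen to keep the arithmetic easy to follow.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` without padding and multiply-accumulate.

    This is the cross-correlation form commonly used in neural networks
    (the kernel is not flipped).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]   # the region under the stencil
            out[i, j] = np.sum(patch * kernel)  # multiply, then tally
    return out

# Illustrative inputs (assumptions): a 6x6 ramp image and a 5x5 averaging stencil.
image = np.arange(36, dtype=float).reshape(6, 6)
kernel = np.ones((5, 5)) / 25.0
result = conv2d_valid(image, kernel)
print(result)
```

Every output value is one multiply-and-add over 25 numbers. In an electronic chip, both the patch and the stencil have to be fetched from memory for each of those tallies, which is the data shuttling the optical approach tries to avoid.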

How the optical engine is built

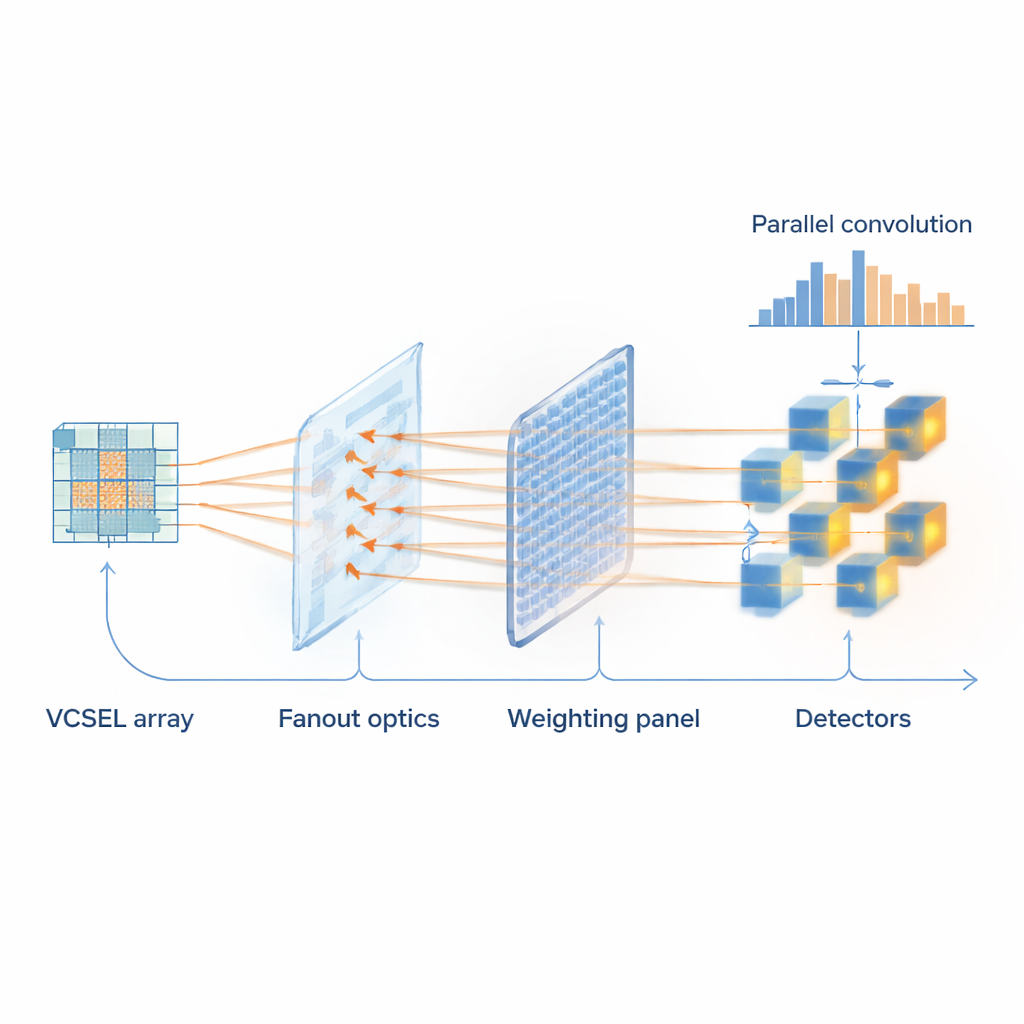

At the heart of the system is a dense array of microscopic lasers known as vertical‑cavity surface‑emitting lasers (VCSELs). Each device in a 5×5 grid represents one pixel of a small image patch and can be switched at gigahertz speeds, that is, billions of times per second. A patterned glass element splits this grid of beams into multiple copies, so the same patch can be processed in parallel by several different filters. A programmable spatial light modulator, similar in spirit to a high‑resolution display, acts as in‑memory storage for the filter values: each of its millions of tiny pixels dims or passes light to represent a neural network weight. The beams then converge onto fiber‑coupled detectors that add up the light for each filter, effectively completing a batch of convolution operations in one optical step.
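Seen as arithmetic, one optical step resembles a small matrix-vector product. The sketch below is an idealized model under stated assumptions, not a description of the real hardware: the function `optical_step` is hypothetical, the filter count of nine is borrowed from the prototype figures quoted later in the article, and the model ignores the power lost when the beams are split as well as any noise or crosstalk.

```python
import numpy as np

rng = np.random.default_rng(0)
N_PIXELS = 25   # one laser per pixel of a 5x5 patch (from the article)
N_FILTERS = 9   # parallel filters (from the article's prototype figures)

def optical_step(patch, transmission):
    """Idealized single pass: fan out, attenuate, sum on detectors.

    patch        : (5, 5) array of nonnegative laser intensities
    transmission : (N_FILTERS, N_PIXELS) array in [0, 1], one row per filter,
                   standing in for the modulator pixels
    """
    intensities = patch.reshape(-1)                            # lasers encode pixels
    copies = np.tile(intensities, (transmission.shape[0], 1))  # beam splitting
    weighted = copies * transmission                           # modulator dims each beam
    return weighted.sum(axis=1)                                # each detector adds its beams

patch = rng.random((5, 5))
transmission = rng.random((N_FILTERS, N_PIXELS))
outputs = optical_step(patch, transmission)
print(outputs.shape)
```

Because the transmissions here can only lie between 0 and 1, every output is nonnegative. That limitation is what the differential readout described in the next section addresses.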

Handling positive and negative “thoughts”

AI models do not just need to strengthen certain patterns; they must also suppress others, which requires both positive and negative weights. Because light intensity can never be negative, this is a longstanding challenge for purely optical computing. The authors solve it by splitting the light into a signal path that carries the weighted beams and a reference path that is left unweighted. Both are fed into paired detectors that subtract one from the other, so a weighted signal dimmer than the reference registers as a negative contribution. This differential readout allows the optical hardware to mimic the full behavior of standard neural networks while remaining robust to noise and small imperfections in the devices.
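One way such a subtraction can recover signed weights is sketched below. The encoding is an assumption made for illustration, since the article does not spell out the mapping: a weight w between -1 and 1 is stored as a transmission t = (1 + w) / 2, and the reference path is assumed to deliver half of the unweighted light. The function name `differential_readout` is likewise hypothetical.

```python
import numpy as np

def differential_readout(x, w):
    """Recover a signed dot product from two nonnegative light measurements.

    x : nonnegative intensities
    w : signed weights in [-1, 1]
    Assumed encoding (not confirmed by the source): t = (1 + w) / 2.
    """
    t = (1.0 + w) / 2.0            # transmissions stay in [0, 1]
    signal = np.sum(t * x)         # weighted path, always >= 0
    reference = 0.5 * np.sum(x)    # unweighted path, assumed 50% split
    return 2.0 * (signal - reference)

x = np.array([0.2, 0.8, 0.5, 1.0])
w = np.array([1.0, -1.0, 0.5, -0.25])
y = differential_readout(x, w)
print(y, np.dot(w, x))
```

Under this encoding the subtraction gives sum(t * x) - 0.5 * sum(x) = 0.5 * sum(w * x), so doubling the difference returns the ordinary signed dot product even though neither detector ever sees negative light.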

Putting the system to the test

To show that FAST‑ONN is not just a physics demo, the team plugs it into realistic recognition tasks. They connect the optical engine to a standard vision network trained on the COCO image dataset, widely used for testing object detection. In one experiment mirroring a self‑driving car scenario, cropped regions of traffic scenes are analyzed to decide whether each contains a vehicle. The most demanding convolution layer is offloaded to the optical hardware, while the remaining steps run digitally. The optical and purely electronic versions of the model agree closely, achieving almost identical performance in distinguishing cars from background clutter. They also demonstrate handwritten‑digit and clothing classification, and even perform training where the optical system computes forward passes while a computer updates the weights, which are then reloaded into the light modulator.
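The division of labor in that training experiment (forward pass in optics, weight updates on a computer, weights reloaded into the modulator) can be mimicked in software. The following is a toy sketch under heavy assumptions and is not the authors' procedure: `optical_forward` is a hypothetical stand-in that quantizes weights to 256 levels and adds a little random noise, the data are synthetic, and the model is a simple logistic classifier rather than a vision network.

```python
import numpy as np

rng = np.random.default_rng(0)

def optical_forward(X, w, levels=256, noise=0.01):
    """Hypothetical stand-in for the optical pass (not the real hardware).

    Weights are clipped and rounded as if loaded into a modulator with a
    limited number of levels; detector noise is added to the result.
    """
    half = levels // 2
    w_loaded = np.round(np.clip(w, -1.0, 1.0) * half) / half
    return X @ w_loaded + noise * rng.standard_normal(X.shape[0])

def cross_entropy(logits, y):
    p = 1.0 / (1.0 + np.exp(-logits))
    return -np.mean(y * np.log(p + 1e-12) + (1 - y) * np.log(1 - p + 1e-12))

# Synthetic task (assumption): 200 patches of 25 nonnegative "intensities".
X = rng.random((200, 25))
true_w = rng.uniform(-1.0, 1.0, 25)
scores = X @ true_w
y = (scores > np.median(scores)).astype(float)

w = np.zeros(25)   # full-precision copy of the weights, kept on the computer
lr = 0.2
losses = []
for step in range(200):
    logits = optical_forward(X, w)          # "optical" forward pass
    losses.append(cross_entropy(logits, y))
    p = 1.0 / (1.0 + np.exp(-logits))
    grad = X.T @ (p - y) / len(y)           # digital gradient from measured outputs
    w = np.clip(w - lr * grad, -1.0, 1.0)   # update, then "reload" on the next pass

print(losses[0], losses[-1])
```

Keeping a full-precision copy of the weights on the computer and rounding only when loading them is a design choice of this sketch; it lets small updates accumulate even when each one is below the resolution of the simulated modulator.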

Speed, efficiency, and what comes next

In its current form, the prototype processes 100 million small image patches per second using a 5×5 laser array and nine filters at once, which already amounts to nearly a billion convolution operations per second (100 million patches × nine filters) with microsecond‑scale decision times. Detailed analysis suggests that, with larger arrays and faster commercial lasers, this approach could be scaled to tens of thousands of trillions of operations per second (tens of peta‑operations per second) while using far less energy than leading electronic accelerators. Because the key components are compact and mass‑manufacturable, FAST‑ONN could ultimately enable tiny, low‑power optical coprocessors inside cameras, drones, and other edge devices, allowing them to “think with light” and respond to the world almost as quickly as it changes.
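The prototype's headline figure follows from simple multiplication, worked through below. The patch rate, grid size, and filter count come from the article; the multiply-accumulate count on the last line is a derived figure added here for illustration and is not a number reported in the source.

```python
PATCH_RATE_PER_S = 100_000_000   # patches per second (from the article)
N_FILTERS = 9                    # filters applied in parallel (from the article)
PIXELS_PER_PATCH = 5 * 5         # 5x5 laser grid (from the article)

# Each patch is convolved with every filter in the same optical step.
convolutions_per_s = PATCH_RATE_PER_S * N_FILTERS

# Derived, not from the source: each convolution multiplies and adds 25 values.
macs_per_s = convolutions_per_s * PIXELS_PER_PATCH

print(f"{convolutions_per_s:.2e} convolutions/s")
print(f"{macs_per_s:.2e} multiply-accumulates/s")
```

The first result, 900 million per second, matches the "nearly a billion" quoted above.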

Citation: Liang, Y., Wang, J., Xue, K. et al. High-clockrate free-space optical in-memory computing. Light Sci Appl 15, 115 (2026). https://doi.org/10.1038/s41377-026-02206-8

Keywords: optical neural networks, edge AI hardware, VCSEL arrays, in-memory computing, high-speed convolution