Clear Sky Science

Microcomb-enabled parallel self-calibration optical convolution streaming processor

Why faster thinking machines matter

From streaming video to training massive AI models, modern data centers are drowning in information. Moving and processing all that data with today’s electronic chips burns huge amounts of power and bumps up against speed limits. This paper introduces a new kind of light-based computing chip that can act as a fast, low‑energy “front end” for AI systems, handling some of the heaviest math before the data ever reaches conventional processors.

Letting light do the heavy lifting

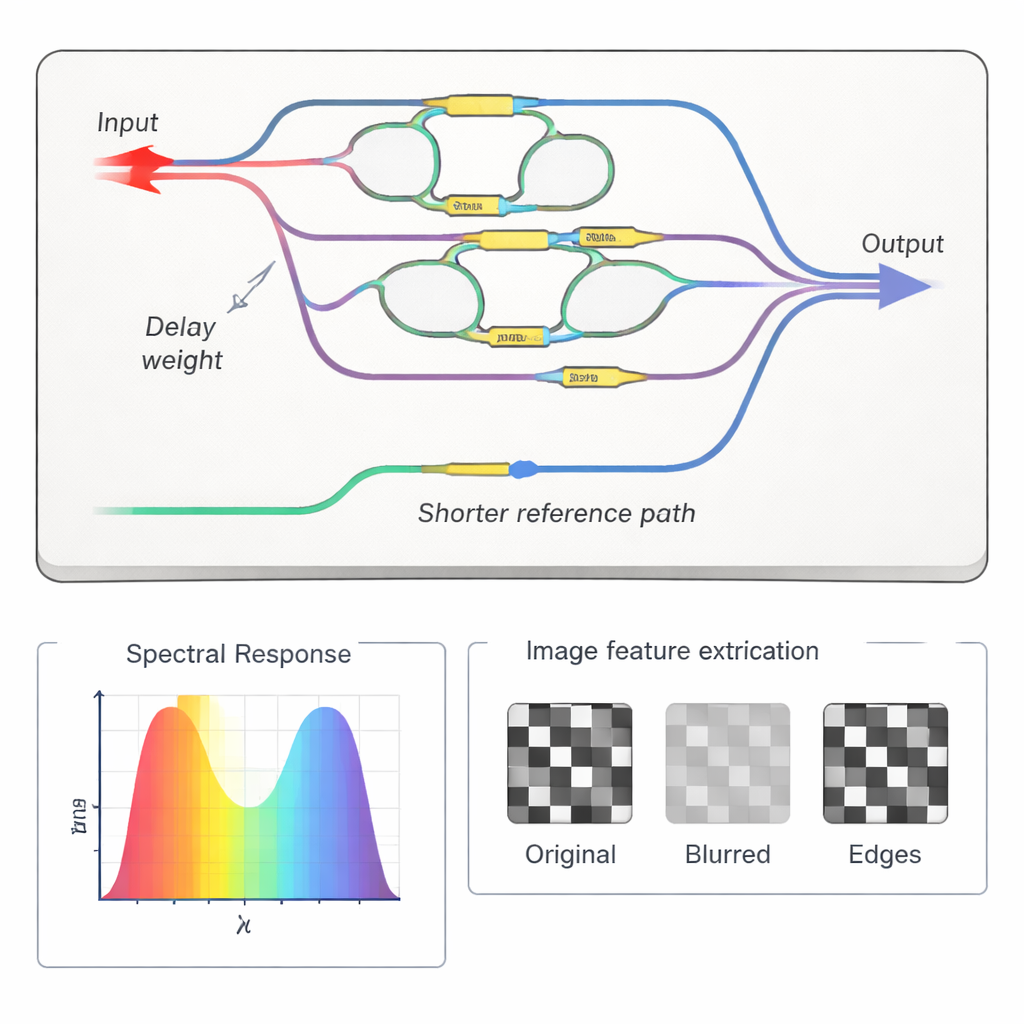

Most AI systems rely on convolution, a kind of sliding mathematical window that scans images, sound, or other signals to pick out features such as edges or textures. Electronics perform these operations step by step, shuffling numbers in and out of memory. The chip described here replaces that with a physical process in which light beams are split, delayed, weighted, and then recombined. Because the computation happens as the light travels, it avoids much of the data movement that slows and heats electronic hardware, and it can run at tens of billions of operations per second for each stream of data.
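The split, delay, weight, and recombine idea can be illustrated numerically. The following is a minimal sketch in plain Python, not the authors' implementation: the function name `delay_line_convolve` and the sample values are hypothetical, chosen only to show that summing delayed, weighted copies of a signal is the same operation as a discrete convolution.

```python
def delay_line_convolve(signal, weights):
    """Convolve by summing delayed, weighted copies of the input.

    Each entry of `weights` plays the role of one path: the copy on
    path `delay` is shifted by `delay` samples and scaled by `weight`
    before all copies are added together again.
    """
    n_out = len(signal) + len(weights) - 1
    output = [0.0] * n_out
    for delay, weight in enumerate(weights):      # one pass per path
        for t, sample in enumerate(signal):
            output[t + delay] += weight * sample  # delayed, weighted copy
    return output


# Hypothetical input stream and three-tap kernel (illustrative values only).
signal = [1.0, 2.0, 3.0, 4.0]
weights = [0.5, 0.25, 0.25]

result = delay_line_convolve(signal, weights)
print(result)  # [0.5, 1.25, 2.25, 3.25, 1.75, 1.0]
```

In software the two loops run one step at a time. In the optical version described above, all paths act on the signal at once as the light propagates.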

Many colors of light, many tasks at once

A key ingredient is a device called a microcomb: a tiny ring-shaped laser source that produces dozens of evenly spaced colors, or wavelengths, of light at once. Each color acts like an independent lane in a high‑speed optical highway. The team’s optical convolution streaming processor sends all these colors through the same chip, but arranges the pathways so that they experience the same “convolution kernel”—the set of weights used to analyze the data. Time delays between paths, combined with the different colors, create a three‑dimensional form of parallelism in time, space, and wavelength. In experiments, the system processed data at 50 gigabaud per color and reached a total computing speed of about 4 trillion operations per second across five wavelengths.
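As a rough illustration of wavelength parallelism, the sketch below applies one shared kernel to several independent streams, one per color, and then works through the throughput arithmetic using the figures quoted above. The channel names, sample values, and kernel are hypothetical. The "operations per symbol" number is only what the quoted totals imply when divided out, not a value stated in the paper.

```python
def delay_line_convolve(signal, weights):
    """Sum of delayed, weighted copies of `signal` (a discrete convolution)."""
    output = [0.0] * (len(signal) + len(weights) - 1)
    for delay, weight in enumerate(weights):
        for t, sample in enumerate(signal):
            output[t + delay] += weight * sample
    return output


# Hypothetical data streams, one per wavelength channel.
channels = {
    "lambda_1": [1.0, 0.0, 0.0],
    "lambda_2": [0.0, 2.0, 0.0],
    "lambda_3": [1.0, 1.0, 1.0],
}
kernel = [0.5, 0.5]  # the same kernel is shared by every channel

outputs = {name: delay_line_convolve(stream, kernel)
           for name, stream in channels.items()}

# Throughput arithmetic from the figures quoted in the text.
symbol_rate_per_wavelength = 50e9   # 50 gigabaud per color
wavelength_count = 5
total_ops_per_second = 4e12         # "about 4 trillion operations per second"

aggregate_symbol_rate = symbol_rate_per_wavelength * wavelength_count
implied_ops_per_symbol = total_ops_per_second / aggregate_symbol_rate

print(outputs["lambda_3"])       # [0.5, 1.0, 1.0, 0.5]
print(aggregate_symbol_rate)     # 250000000000.0
print(implied_ops_per_symbol)    # 16.0
```

Adding a wavelength adds another stream without changing the kernel hardware, which is why the aggregate rate scales with the number of colors in this simple picture.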

Teaching a light chip to stay accurate

Using interference between light waves for computation is powerful but fragile: nanometer‑scale changes in path length can ruin the carefully tuned weights. To keep the chip accurate, the researchers built in a special reference pathway and a self‑calibration procedure. By sweeping a laser across frequencies and measuring only output power, they reconstruct both the strength and the phase of each path inside the device. A feedback loop then adjusts tiny heaters on the chip until the measured convolution weights match the desired ones. This automatic tuning not only corrects for fabrication imperfections and temperature drift, it also lets the same chip be reprogrammed for different tasks, such as blurring or edge detection in images.
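The measure-and-adjust loop can be sketched with a toy model. Everything in the block below is an assumption made for illustration: the linear relation between heater setting and measured weight, the `responses` and `offsets` that stand in for fabrication errors, and the simple proportional update. The real device reconstructs amplitude and phase from frequency-swept power measurements, which this sketch does not attempt to model.

```python
def measure_weights(heaters, responses, offsets):
    """Toy device model (assumption): weight = response * heater + offset."""
    return [r * h + o for h, r, o in zip(heaters, responses, offsets)]


def calibrate(target, responses, offsets, step=0.5, tolerance=1e-6, max_iters=200):
    """Adjust heater settings until measured weights match the target kernel.

    The loop sees only measured weights, never `responses` or `offsets`
    directly, mirroring a feedback procedure that relies on measurement.
    """
    heaters = [0.0] * len(target)
    for _ in range(max_iters):
        measured = measure_weights(heaters, responses, offsets)
        errors = [m - t for m, t in zip(measured, target)]
        if max(abs(e) for e in errors) < tolerance:
            break
        # Proportional correction: push each heater against its error.
        heaters = [h - step * e for h, e in zip(heaters, errors)]
    return heaters, measure_weights(heaters, responses, offsets)


# Hypothetical target kernel (a simple blur) and hidden device imperfections.
target_kernel = [0.25, 0.5, 0.25]
hidden_responses = [0.8, 1.0, 1.3]
hidden_offsets = [0.10, -0.05, 0.02]

heater_settings, calibrated = calibrate(target_kernel, hidden_responses, hidden_offsets)
print(calibrated)
```

Reprogramming the chip for another task corresponds, in this toy picture, to calling `calibrate` again with a different target kernel.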

From image filters to real AI workloads

To show that the processor is useful beyond simple demos, the authors combined it with standard electronic neural‑network layers in a hybrid system. The optical chip handled the first convolutional layer, extracting basic features from color images carried on several wavelength channels. The resulting feature streams were converted back to electronics and fed into a deeper digital network. Tested on the CIFAR‑10 image dataset, which includes classes like airplanes, cats, and trucks, the mixed optical‑electronic system approached the accuracy of a fully digital model while offloading a chunk of the heavy computation into the photonic domain.

What this could mean for future data centers

In plain terms, this work shows that tiny chips that compute with light can plug directly into existing fiber‑optic links in data centers and act as shared accelerators for AI workloads. By combining many colors of light, multiple delay paths, and a built‑in self‑calibration method, the demonstrated processor achieves very high speeds and good accuracy without excessive power use. If scaled up, similar devices could sit between storage and compute racks, performing fast filtering and feature extraction on data as it flows, helping future “thinking” machines run faster and greener.

Citation: Wang, J., Xu, X., Zhu, X. et al. Microcomb-enabled parallel self-calibration optical convolution streaming processor. Light Sci Appl 15, 149 (2026). https://doi.org/10.1038/s41377-025-02093-5

Keywords: optical computing, photonic AI hardware, microcomb, data center acceleration, convolutional neural networks