Clear Sky Science

3DSynBrush: a high-quality 3D reconstruction framework for single Dunhuang murals

Ancient art meets modern tools

The painted caves of Dunhuang in western China hold some of the world’s most remarkable Buddhist murals, but many of these fragile works are fading, cracking, and flaking away. This study introduces a new way to turn a single photograph of a mural detail into a lifelike three-dimensional model, helping historians, conservators, and the public explore these artworks from every angle without ever touching the originals.

A treasure at risk of disappearing

The Dunhuang Mogao Grottoes are often called a library on stone, with murals that record religious stories, architecture, clothing, music, and daily life across a thousand years. Time, sand, salt, and human activity have all taken their toll, causing pigments to fade and plaster to crumble. Traditional preservation relies on photographs and painstaking physical repair, but these cannot fully capture the depth, color shifts, and spatial feeling of the murals, nor can they prevent further loss. Digital 3D models, in contrast, can freeze a moment in time, allowing curators to analyze details, simulate lighting, and share the works widely without endangering the originals.

Why turning flat paintings into 3D is hard

Creating a convincing 3D model usually requires many photos taken from different viewpoints around an object. Dunhuang murals, however, are flat paintings on cave walls, and often only a single high-quality image of a particular figure, animal, or architectural motif is available. The art style adds further complications: brushwork can be delicate, colors may be muted by age, and shapes such as thin ribbons or halos are extremely fine. Existing 3D algorithms are mostly trained on photographs of everyday objects and buildings, so they often misread these stylized images, either producing distorted shapes or inventing details that break the spirit of the original art.

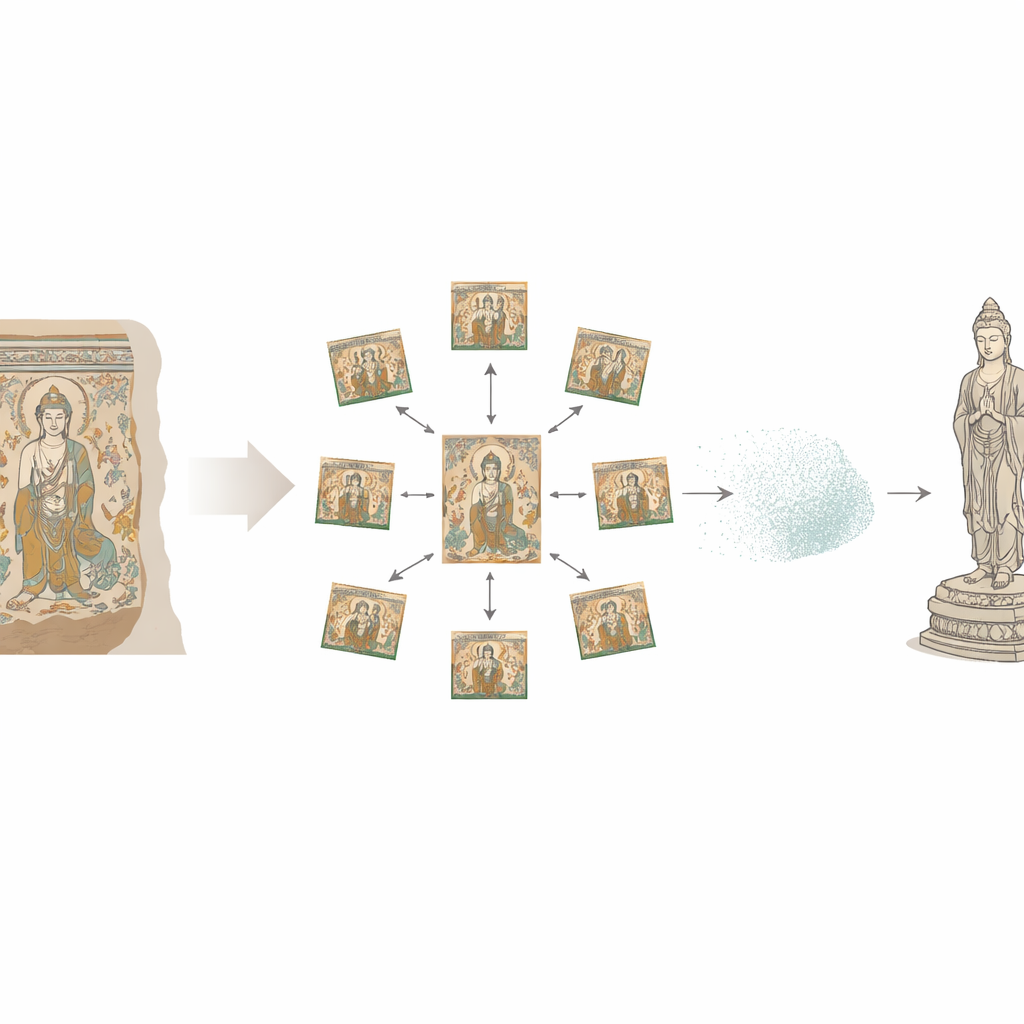

A smart step-by-step digital brush

The authors propose a framework called 3DSynBrush that chains together several image and 3D techniques, each solving a different part of the problem. First, they assemble a specialized Chinese Mural Elements (CME) dataset: thousands of carefully chosen figures, animals, and plants cut out from high-resolution mural photos. A segmentation tool separates each element from its busy background, leaving a cutout on a transparent background with its edges and thin structures preserved. Next, a perspective-synthesis module predicts how that single painted figure would look from several standard viewpoints, using a “diffusion” model that has learned typical 3D relationships from large training sets. This produces a ring of consistent views around the element, even though only one original image was provided.
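To make the "ring of views" idea concrete, here is a minimal sketch of how evenly spaced camera positions around an element could be computed. The article does not say how many viewpoints 3DSynBrush uses or at what distance and elevation, so the function name `ring_viewpoints` and the values of `num_views`, `radius`, and `elevation_deg` are illustrative assumptions, not the authors' settings.

```python
import math

def ring_viewpoints(num_views=6, radius=2.0, elevation_deg=0.0):
    """Return camera positions evenly spaced in azimuth around the origin.

    All names and defaults here are hypothetical; the element is assumed
    to sit at the origin with the y axis pointing up.
    """
    elev = math.radians(elevation_deg)
    views = []
    for i in range(num_views):
        azim = 2.0 * math.pi * i / num_views  # equal angular steps
        x = radius * math.cos(elev) * math.sin(azim)
        y = radius * math.sin(elev)
        z = radius * math.cos(elev) * math.cos(azim)
        views.append({"azimuth_deg": math.degrees(azim), "position": (x, y, z)})
    return views

# Example: six viewpoints, slightly above the element, two units away.
views = ring_viewpoints(num_views=6, radius=2.0, elevation_deg=15.0)
for v in views:
    print(round(v["azimuth_deg"]), [round(c, 3) for c in v["position"]])
```

In a pipeline of this kind, the view at azimuth 0 would correspond to the original cutout, and the remaining positions are the viewpoints for which a view-synthesis model would be asked to generate images.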

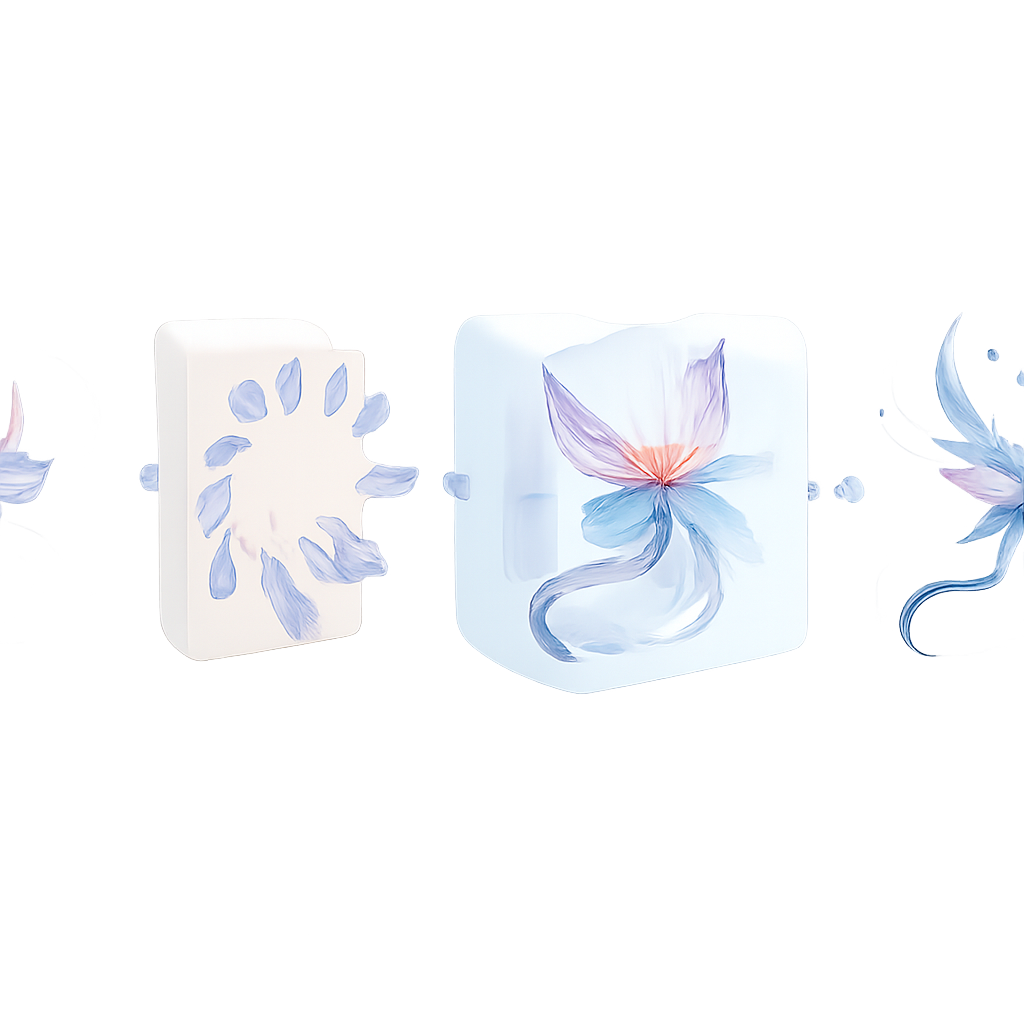

From imagined views to solid shapes

Those synthesized views are then passed to a neural rendering system that knits them into a continuous 3D “light field,” essentially a mathematical description of how light and color behave at every point around the element. This stage is tuned to handle the soft textures and non-photographic look of murals, smoothing away small inconsistencies between the generated views without erasing artistic features. Finally, a mesh-building module converts this light field into a standard 3D surface made of triangles, automatically adding triangles where edges and fine details matter and simplifying flatter regions that do not need many points. The resulting models use only about 40 percent as many points and faces as leading alternatives, yet still match the original images more closely in both shape and texture.
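One common way such a description of light and color is turned into an image is volume rendering along camera rays, as used in radiance-field methods. The article does not specify which neural rendering formulation 3DSynBrush uses, so the sketch below is a generic illustration under that assumption: each sample along a ray has a density and a color, and samples are blended front to back. The function name `composite_ray` and the sample values are hypothetical.

```python
import math

def composite_ray(densities, colors, step):
    """Blend samples along one ray, front to back.

    densities: non-negative density at each sample
    colors:    (r, g, b) tuple at each sample, values in [0, 1]
    step:      distance between consecutive samples
    Returns the blended (r, g, b) color and the accumulated opacity.
    """
    transmittance = 1.0  # fraction of light still unblocked
    rgb = [0.0, 0.0, 0.0]
    for sigma, color in zip(densities, colors):
        alpha = 1.0 - math.exp(-sigma * step)  # opacity of this sample
        weight = transmittance * alpha         # its contribution to the pixel
        for k in range(3):
            rgb[k] += weight * color[k]
        transmittance *= 1.0 - alpha
    return tuple(rgb), 1.0 - transmittance

# Example: empty space, then a reddish surface.
densities = [0.0, 0.0, 50.0, 50.0]
colors = [(0.0, 0.0, 0.0)] * 2 + [(0.8, 0.3, 0.2)] * 2
pixel, opacity = composite_ray(densities, colors, step=0.1)
print(pixel, opacity)
```

Because the density field records where light is blocked, a surface can later be extracted from it and converted to a triangle mesh, which is the role the mesh-building stage plays in the description above.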

Built to resist noise, shadows, and gaps

The team tested 3DSynBrush under difficult conditions to mimic real-world photography: grainy images, uneven lighting, and parts of the element hidden by dark patches. Even as noise and occlusions increased, the main 3D shape stayed stable, and the surface textures remained recognizable. When compared visually and numerically with several top single-image 3D methods, 3DSynBrush produced cleaner, more faithful reconstructions of mural-style subjects, avoiding common errors such as warped bodies, broken surfaces, or textures that look like unrelated objects.
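A stress test of this kind can be sketched by degrading a clean input image before reconstruction. The article does not give the noise model, lighting model, or occlusion pattern the team used, so everything below is an assumption for illustration: Gaussian noise, a linear left-to-right brightness falloff, and one dark rectangular patch, applied to a grayscale image stored as nested lists with values in [0, 1]. The function name `degrade` and its parameters are hypothetical.

```python
import random

def degrade(image, noise_std=0.05, light_falloff=0.0, occlusion=None, seed=0):
    """Return a degraded copy of a grayscale image (list of rows, values 0..1).

    noise_std:     standard deviation of additive Gaussian noise
    light_falloff: 0 keeps lighting even; 1 darkens the right edge to black
    occlusion:     optional (top, left, height, width) patch set to black
    """
    rng = random.Random(seed)  # fixed seed keeps the test repeatable
    height, width = len(image), len(image[0])
    out = []
    for r in range(height):
        row = []
        for c in range(width):
            gain = 1.0 - light_falloff * c / max(width - 1, 1)
            value = image[r][c] * gain + rng.gauss(0.0, noise_std)
            row.append(min(1.0, max(0.0, value)))  # clamp to valid range
        out.append(row)
    if occlusion is not None:
        top, left, h, w = occlusion
        for r in range(top, min(top + h, height)):
            for c in range(left, min(left + w, width)):
                out[r][c] = 0.0  # dark patch hides this region
    return out

# Example: a flat gray 4x4 image with noise, uneven light, and a 2x2 patch.
clean = [[0.5] * 4 for _ in range(4)]
noisy = degrade(clean, noise_std=0.1, light_falloff=0.5, occlusion=(1, 1, 2, 2), seed=1)
```

Increasing `noise_std` or the patch size step by step, and reconstructing from each degraded image, would give a series of results comparable to the robustness test described above.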

Bringing lost worlds back to life

For non-specialists, the key outcome is that a lone photograph of a painted deer, dancer, or temple from Dunhuang can now be transformed into a compact, accurate 3D model that can be explored in virtual reality, used in digital exhibitions, or serve as a guide for careful restoration work. While the system still depends on the quality of the initial cutout and is limited by current image resolutions, it offers a practical path to preserving the appearance and spatial feel of fragile wall paintings. In essence, 3DSynBrush acts like a digital sculptor that respects the artist’s original style, turning vulnerable fragments of mural art into durable, interactive “statues” for future generations to study and enjoy.

Citation: Peng, X., Wang, J., Hu, Q. et al. 3DSynBrush a high quality 3D reconstruction framework for single Dunhuang murals. npj Herit. Sci. 14, 154 (2026). https://doi.org/10.1038/s40494-026-02424-8

Keywords: Dunhuang murals, cultural heritage digitization, single-image 3D reconstruction, neural rendering, virtual reality museums