Clear Sky Science · en

An automatic annotation method for colored 3D triangular meshes oriented to cultural relic deterioration segmentation

Why digital eyes on old treasures matter

Across museums and historic sites, sculptures, murals, and carved walls are slowly cracking, flaking, and fading. Conservators need to know exactly where this damage is happening to decide what to repair and how urgently, but carefully tracing every damaged patch on detailed 3D records of objects can take weeks. This paper introduces an automatic way to mark deterioration on richly colored 3D models of cultural relics, turning a tedious, expert-only task into a fast and precise digital process.

From fragile statues to detailed 3D twins

Today, many important artifacts are recorded as high‑fidelity 3D color models built from photographs. These models capture both shape and surface paint without touching the original object, and they are in use everywhere from the Dunhuang caves to the British Museum. Yet most of that digital richness is wasted: models are used mainly for viewing and archiving, not for in‑depth analysis. For conservation work, a key challenge is to identify and measure exactly where paint is flaking or material is cracking across complex curved surfaces. Doing this by hand on 3D models is slow and exhausting; doing it on flat photos loses crucial information about where the damage lies on the object itself.

Linking flat pictures and 3D shapes

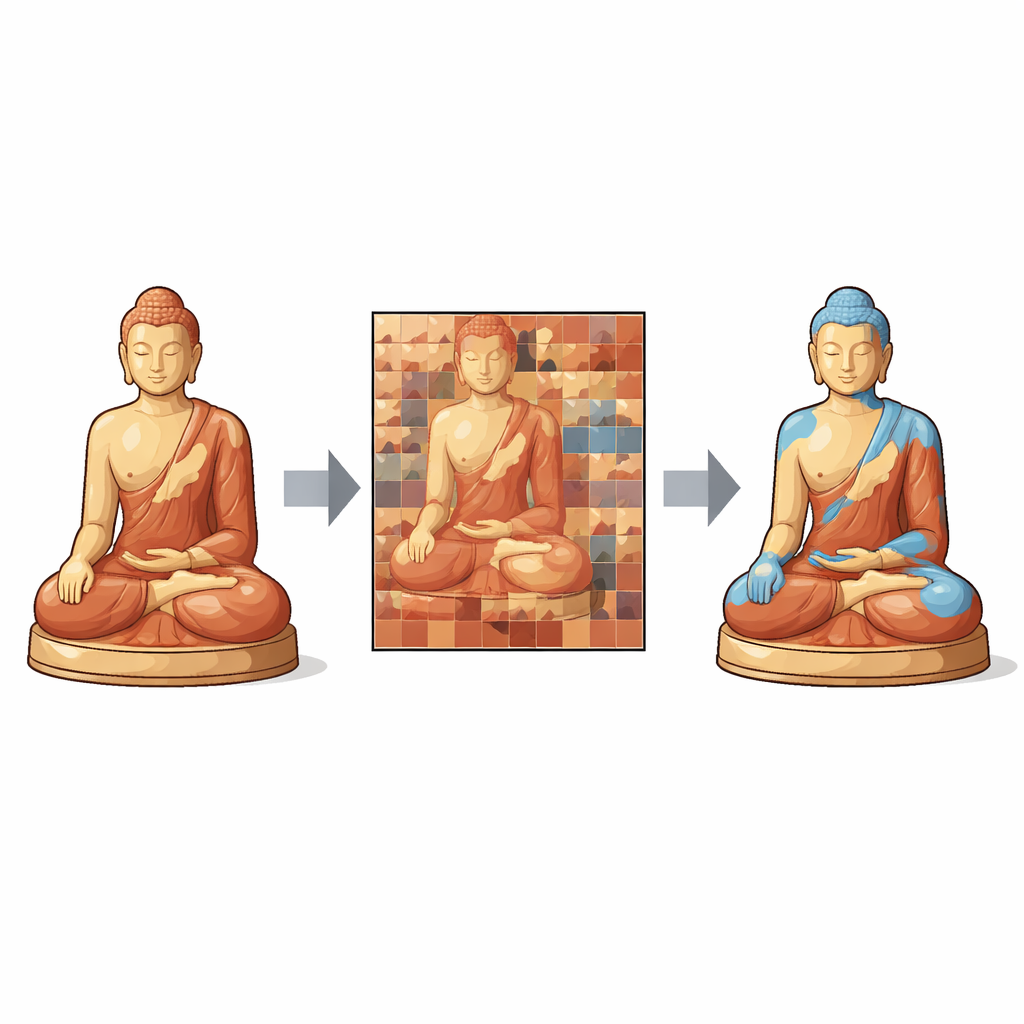

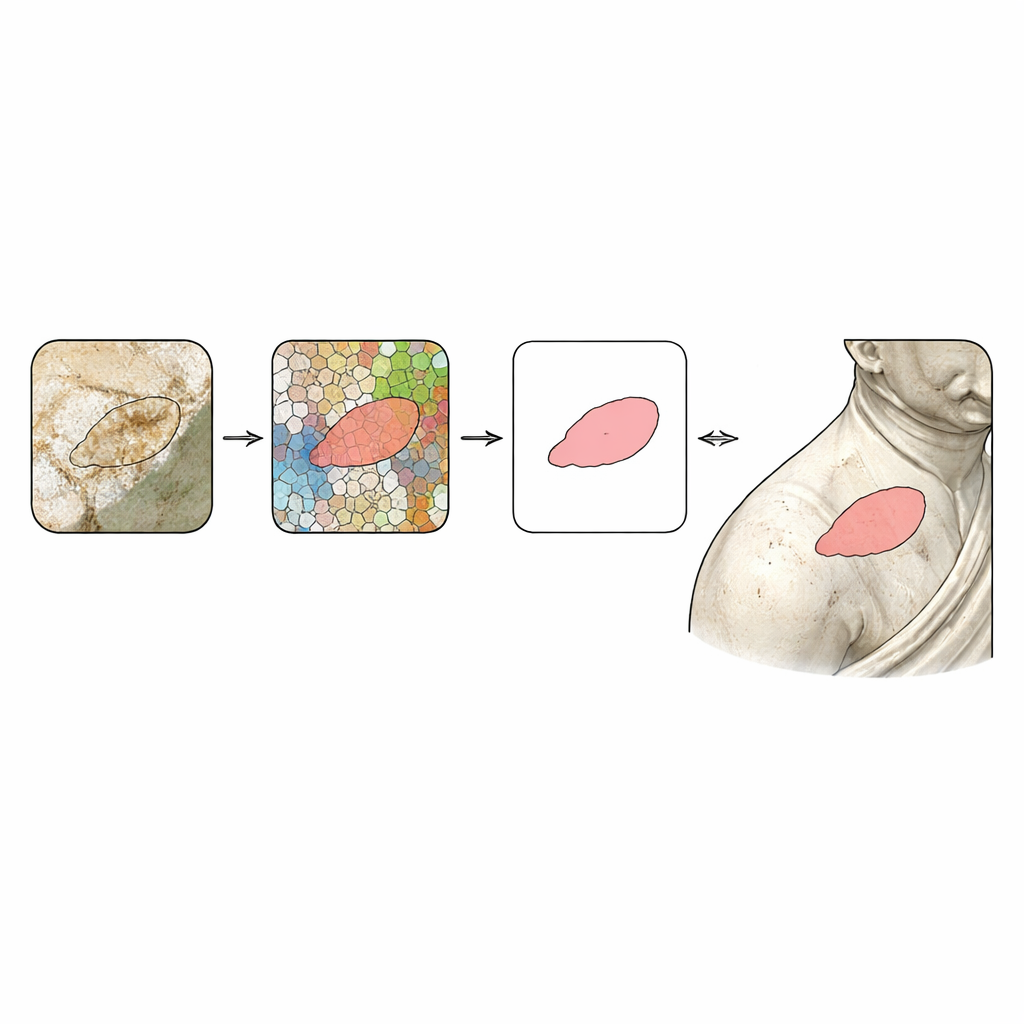

The authors propose a system that lets 2D and 3D “talk” to each other so that the strengths of both are used at once. First, conservators load a 3D color model into a custom platform and roughly box‑select an area they care about, such as the arm or base of a statue. The software then mathematically “unfolds” that part of the surface, laying it out as a continuous flat texture image—a sort of digital skin peeled and spread out with minimal distortion. Every pixel in this flat map knows exactly which tiny triangle on the 3D surface it came from, and vice versa. This two‑way link means any marks drawn—or in this case, detected—on the flat image can be faithfully projected back onto the curved 3D object.
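To make the pixel-to-triangle link concrete, here is a minimal Python sketch, assuming the selected patch has already been unfolded into a single continuous chart with per-vertex texture (UV) coordinates in [0, 1]. It does not reproduce the authors' unfolding method, which this summary does not detail; it only rasterizes each triangle in UV space so that every texel records the index of the triangle covering it. The function names `rasterize_face_ids` and `barycentric` are hypothetical.

```python
import numpy as np


def barycentric(p, a, b, c):
    """Barycentric weights (N, 3) of 2-D points p (N, 2) in triangle (a, b, c)."""
    v0, v1, v2 = b - a, c - a, p - a
    d00, d01, d11 = v0 @ v0, v0 @ v1, v1 @ v1
    d20, d21 = v2 @ v0, v2 @ v1
    denom = d00 * d11 - d01 * d01
    w1 = (d11 * d20 - d01 * d21) / denom
    w2 = (d00 * d21 - d01 * d20) / denom
    return np.column_stack([1.0 - w1 - w2, w1, w2])


def rasterize_face_ids(uv, faces, width, height, eps=1e-9):
    """Map every texel of a (height, width) texture to the triangle covering it.

    uv    : (V, 2) per-vertex texture coordinates in [0, 1] (one continuous chart)
    faces : (F, 3) vertex indices of each triangle
    Returns an int array of face indices, -1 where no triangle covers a texel.
    """
    face_ids = np.full((height, width), -1, dtype=np.int64)
    for f, tri in enumerate(faces):
        # Triangle corners in continuous pixel coordinates (x right, y down).
        corners = np.column_stack([uv[tri, 0] * width, (1.0 - uv[tri, 1]) * height])
        a, b, c = corners
        e1, e2 = b - a, c - a
        if abs(e1[0] * e2[1] - e1[1] * e2[0]) < 1e-12:
            continue  # skip degenerate (zero-area) triangles
        # Only test texel centres inside the triangle's bounding box.
        x0 = max(int(np.floor(corners[:, 0].min())), 0)
        x1 = min(int(np.ceil(corners[:, 0].max())), width)
        y0 = max(int(np.floor(corners[:, 1].min())), 0)
        y1 = min(int(np.ceil(corners[:, 1].max())), height)
        if x0 >= x1 or y0 >= y1:
            continue
        iy, ix = np.mgrid[y0:y1, x0:x1]
        centres = np.column_stack([ix.ravel() + 0.5, iy.ravel() + 0.5])
        inside = np.all(barycentric(centres, a, b, c) >= -eps, axis=1)
        face_ids[iy.ravel()[inside], ix.ravel()[inside]] = f
    return face_ids
```

The reverse direction comes from the same table: `np.argwhere(face_ids == f)` lists the texels that belong to triangle `f`, and texels left at -1 lie outside the unfolded patch.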

Teaching the computer to see peeling paint

Once the surface is flattened into a clear, continuous image, the system focuses on finding damaged regions, especially places where paint has flaked off. Instead of relying on rough color thresholds, the authors use an improved version of a method called SLIC (simple linear iterative clustering), which divides the image into many small, uniform “superpixels.” The number and shape of these superpixels are chosen automatically based on how visually complex the image is, as measured by texture contrast. A clustering step then groups the superpixels into “damaged” and “healthy” areas. This approach hugs the irregular edges of flaking paint more closely and reduces noise compared with other popular segmentation techniques. The result is a precise damage mask drawn at pixel level on the 2D texture map.
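A rough sketch of this kind of pipeline in Python and NumPy follows. It is not the authors' improved SLIC: it uses plain SLIC, takes grey-level co-occurrence (GLCM) contrast as the assumed "texture contrast" measure, maps contrast to a superpixel count with a made-up rule (`adaptive_n_segments`), and substitutes a two-cluster k-means for the paper's clustering step. Treating the brighter cluster as damage is also an assumption that would depend on the relic. All function names and default values are hypothetical.

```python
import numpy as np


def glcm_contrast(gray, levels=16):
    """GLCM contrast for the horizontal offset (0, 1) of a [0, 1] grey image.

    With a normalised co-occurrence matrix P, contrast = sum P(i, j) (i - j)^2,
    which equals the mean squared difference of neighbouring quantised levels.
    """
    q = np.clip((gray * levels).astype(int), 0, levels - 1)
    return float(np.mean((q[:, 1:] - q[:, :-1]) ** 2))


def adaptive_n_segments(contrast, n_min=50, n_max=800, c0=4.0):
    """Hypothetical rule: busier texture -> more, smaller superpixels."""
    return int(round(n_min + (n_max - n_min) * contrast / (contrast + c0)))


def slic(image, n_segments, compactness=0.1, n_iter=10):
    """Plain SLIC on an (H, W, C) float image; returns an (H, W) label map."""
    h, w = image.shape[:2]
    step = max(int(np.sqrt(h * w / n_segments)), 1)
    ys, xs = np.mgrid[step // 2:h:step, step // 2:w:step]
    centres = np.column_stack([ys.ravel(), xs.ravel()]).astype(float)
    colours = image[ys.ravel(), xs.ravel()].astype(float)
    yy, xx = np.mgrid[0:h, 0:w]
    labels = np.zeros((h, w), dtype=int)
    for _ in range(n_iter):
        dist = np.full((h, w), np.inf)
        # Assignment: each centre only searches a 2S x 2S window around itself.
        for k, ((cy, cx), col) in enumerate(zip(centres, colours)):
            y0, y1 = max(int(cy) - step, 0), min(int(cy) + step + 1, h)
            x0, x1 = max(int(cx) - step, 0), min(int(cx) + step + 1, w)
            dc = np.sum((image[y0:y1, x0:x1] - col) ** 2, axis=-1)
            ds = (yy[y0:y1, x0:x1] - cy) ** 2 + (xx[y0:y1, x0:x1] - cx) ** 2
            d = dc + ds * (compactness / step) ** 2  # squared SLIC distance
            better = d < dist[y0:y1, x0:x1]
            dist[y0:y1, x0:x1][better] = d[better]
            labels[y0:y1, x0:x1][better] = k
        # Update: move each centre to the mean position and colour of its pixels.
        for k in range(len(centres)):
            mask = labels == k
            if mask.any():
                centres[k] = yy[mask].mean(), xx[mask].mean()
                colours[k] = image[mask].mean(axis=0)
    return labels


def two_means(features, n_iter=20):
    """Minimal k-means with k = 2, seeded at the darkest and brightest rows."""
    brightness = features.mean(axis=1)
    centres = features[[brightness.argmin(), brightness.argmax()]].astype(float)
    for _ in range(n_iter):
        d = ((features[:, None, :] - centres[None]) ** 2).sum(axis=-1)
        assign = d.argmin(axis=1)
        for k in range(2):
            if np.any(assign == k):
                centres[k] = features[assign == k].mean(axis=0)
    return assign  # 1 = cluster seeded at the brightest superpixel


def damage_mask(image, compactness=0.1):
    """Binary mask (1 = assumed damage) for an (H, W, 3) float image in [0, 1]."""
    n = adaptive_n_segments(glcm_contrast(image.mean(axis=-1)))
    labels = slic(image, n, compactness=compactness)
    ids = np.unique(labels)
    feats = np.array([image[labels == i].mean(axis=0) for i in ids])
    lut = np.zeros(labels.max() + 1, dtype=np.uint8)
    lut[ids] = two_means(feats)
    return lut[labels]
```

The `compactness` default is chosen for RGB values in [0, 1]; SLIC is usually run in CIELAB, as in the original SLIC paper, where values near 10 are common.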

Putting damage back onto the 3D artifact

With the help of the earlier 2D–3D link, the software traces each damaged pixel back to the exact spot on the 3D mesh where it belongs. Using simple geometric transformations, it converts 2D coordinates into full 3D positions that follow the object’s curvature. These points are then combined into a clean, colored “shell” of deterioration that clings to the original 3D model. On a painted wooden Guanyin statue from China’s Song Dynasty, the authors show that their automatic masks line up closely with painstaking manual work done in professional modeling software, even on sharply curved or highly detailed areas. They further enrich the data by digitally copying and transforming these 2D and 3D damage patterns, creating many realistic training examples for future deep‑learning systems.
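The summary only says "simple geometric transformations." One standard way to do this lift, assumed here rather than taken from the paper, is barycentric interpolation: a texel's weights relative to the UV triangle that contains it are reused on the same triangle's 3D corners, so the point lands on the curved surface. The sketch below applies this to every flagged texel of a mask; `mask_to_points` and `barycentric` are hypothetical names.

```python
import numpy as np


def barycentric(p, a, b, c):
    """Barycentric weights (N, 3) of 2-D points p (N, 2) in triangle (a, b, c)."""
    v0, v1, v2 = b - a, c - a, p - a
    d00, d01, d11 = v0 @ v0, v0 @ v1, v1 @ v1
    d20, d21 = v2 @ v0, v2 @ v1
    denom = d00 * d11 - d01 * d01
    w1 = (d11 * d20 - d01 * d21) / denom
    w2 = (d00 * d21 - d01 * d20) / denom
    return np.column_stack([1.0 - w1 - w2, w1, w2])


def mask_to_points(mask, uv, faces, verts, eps=1e-9):
    """Lift every True texel of a UV-space damage mask onto the 3-D surface.

    mask  : (H, W) bool, row 0 at the top of the texture (v = 1)
    uv    : (V, 2) texture coordinates in [0, 1]
    faces : (F, 3) triangle vertex indices
    verts : (V, 3) 3-D vertex positions
    Returns (N, 3) surface points (NaN if uncovered) and (N,) face indices (-1).
    """
    h, w = mask.shape
    rows, cols = np.nonzero(mask)
    # Texel centres in UV space (v points up while image rows point down).
    p = np.column_stack([(cols + 0.5) / w, 1.0 - (rows + 0.5) / h])
    points = np.full((len(p), 3), np.nan)
    face_of = np.full(len(p), -1, dtype=np.int64)
    for f, tri in enumerate(faces):
        a, b, c = uv[tri]
        e1, e2 = b - a, c - a
        if abs(e1[0] * e2[1] - e1[1] * e2[0]) < 1e-15:
            continue  # skip degenerate (zero-area) UV triangles
        wts = barycentric(p, a, b, c)
        # Keep the first triangle that contains each texel.
        hit = np.all(wts >= -eps, axis=1) & (face_of < 0)
        # Same weights on the 3-D corners: x = w0 * A + w1 * B + w2 * C.
        points[hit] = wts[hit] @ verts[tri]
        face_of[hit] = f
    return points, face_of
```

The distinct non-negative entries of `face_of` give the triangles touched by the damage, which is one simple way to cut a deterioration "shell" out of the original mesh.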

What this means for preserving the past

The study demonstrates that careful coordination between flat images and 3D geometry can turn raw digital replicas of artifacts into practical conservation tools. The authors' platform cuts down the labor and subjectivity of manual labeling, produces consistent, high‑precision maps of damage, and supports batch processing to handle large collections. In plain terms, it gives conservators a reliable, semi‑automatic “highlighter” for deterioration on complex objects and generates the abundant, well‑annotated 3D data that modern AI methods need. While the approach still depends on good‑quality textures and smart unfolding to avoid distortions, it offers a powerful step toward scalable, data‑driven care for the world’s cultural heritage.

Citation: Hu, C., Xie, Y., Xia, G. et al. An automatic annotation method for colored 3D triangular meshes oriented to cultural relic deterioration segmentation. npj Herit. Sci. 14, 150 (2026). https://doi.org/10.1038/s40494-026-02421-x

Keywords: cultural heritage conservation, 3D digitization, automatic damage detection, texture mapping, deep learning datasets