Clear Sky Science

WCT-Net: joint restoration of tomb murals based on wavelet convolution and transformer self-attention collaborative network

Why saving old wall paintings needs new tools

Across China, ancient tombs hold wall paintings that are crumbling, cracked, and peeling away at the edges. These murals capture scenes of royal life, beliefs, and artistry that we can no longer witness firsthand. But many fragments are so damaged that even experts struggle to imagine how they once looked. This study presents a new kind of artificial intelligence system, WCT-Net, designed to digitally "mend" these broken images, offering safer guidance for conservators and richer visuals for researchers and the public.

The hidden problems inside broken murals

Tomb murals face a double threat. Over centuries, moisture seeps through soil and stone, carrying salts that crystallize inside the plaster. This weakens the layers beneath the paint, causing sections to detach, crack, and fall away. The result is often a small surviving fragment with two types of damage at once: the outer edges are missing, so the overall composition is incomplete, and the interior is scarred by fading, flaking, and fine cracks. Traditional hands-on restoration relies on matching fragments and careful re-adhesion, but when large areas are gone, guesswork can lead to errors or even new damage. Digital restoration promises a reversible, non-contact alternative—but only if computers can both invent plausible missing structures and faithfully preserve surviving details.

Why earlier digital fixes fall short

Earlier computer methods mostly learned from undamaged parts of the same image. Some spread neighboring colors and edges into gaps; others copied and pasted similar patches from intact regions. These tools can fill neat, hole-like defects, but they fail when entire borders are missing or when the mural’s subject matter must be inferred from very little context. More recent deep learning approaches, including convolutional neural networks and generative adversarial networks, improved realism but still face a trade-off: they either favor sharp local textures while losing the big picture, or they keep global structure while blurring fine brushwork. Transformer-based methods, which excel at long-range relationships, help with large missing areas but still struggle to align small details and large shapes when damage spans multiple scales.

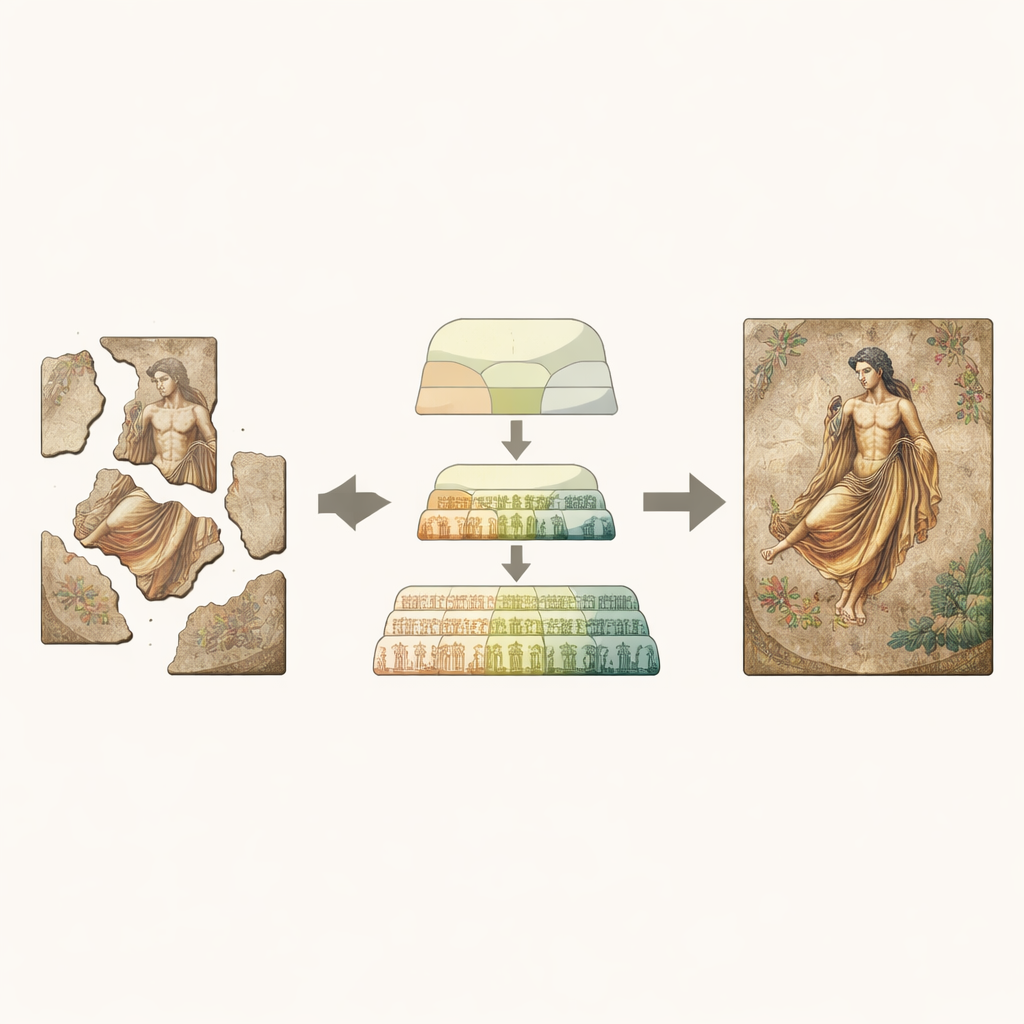

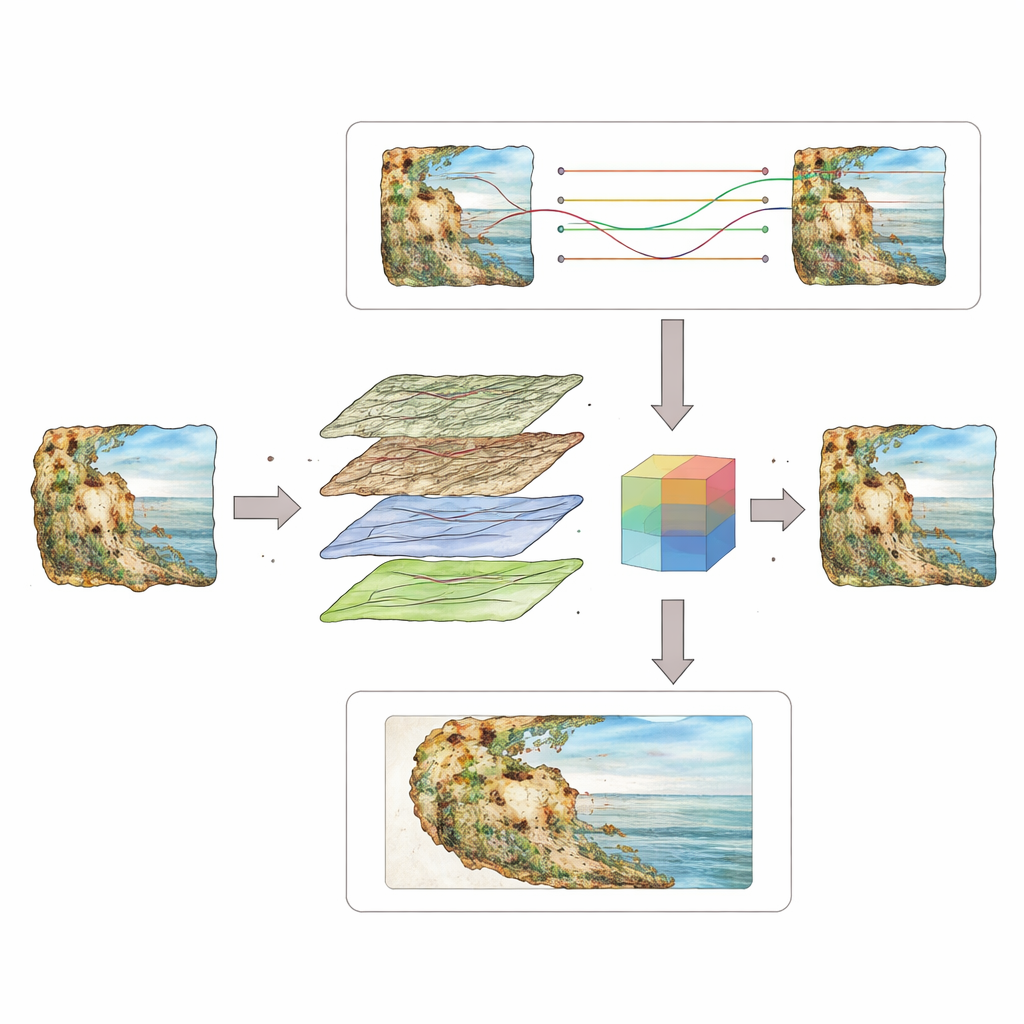

A two-track brain for seeing both near and far

WCT-Net tackles this problem by splitting the task into two cooperating branches inside a U-shaped encoder–decoder network. One branch uses wavelet-based convolutions, a way of separating an image into smooth, low-frequency components and crisp, high-frequency textures. By learning on these bands, this branch specializes in preserving tiny features such as hairlines, garment folds, and subtle shading that give murals their handcrafted character. In parallel, a Transformer-based branch uses self-attention to connect distant parts of the image, picking up long-range patterns like the pose of a horse or the rhythm of a procession. An enhanced fusion unit then learns how to weigh and blend these two kinds of information so that neither dominates: the model simultaneously honors surviving details and extrapolates a believable overall scene.
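To make the idea of frequency bands concrete, here is a minimal sketch of a one-level 2D Haar wavelet split in NumPy. It is only an illustration: the summary does not say which wavelet WCT-Net uses, and the function names `haar_dwt2` and `haar_idwt2` are hypothetical. In the network described above, learned convolutions would operate on bands like these rather than on raw pixels.

```python
import numpy as np

def haar_dwt2(img):
    """One-level 2D Haar split of a grayscale image with even height and width.

    Returns (ll, lh, hl, hh): one smooth low-frequency band and three
    high-frequency detail bands, each half the size of the input.
    Assumption: Haar is used purely for illustration.
    """
    img = np.asarray(img, dtype=float)
    a = img[0::2, 0::2]  # top-left pixel of every 2x2 block
    b = img[0::2, 1::2]  # top-right
    c = img[1::2, 0::2]  # bottom-left
    d = img[1::2, 1::2]  # bottom-right
    ll = (a + b + c + d) / 2.0  # block average: smooth shading
    lh = (a - b + c - d) / 2.0  # left minus right: changes across columns
    hl = (a + b - c - d) / 2.0  # top minus bottom: changes across rows
    hh = (a - b - c + d) / 2.0  # diagonal detail
    return ll, lh, hl, hh

def haar_idwt2(ll, lh, hl, hh):
    """Invert haar_dwt2 and recover the original image exactly."""
    h, w = ll.shape
    out = np.empty((2 * h, 2 * w), dtype=float)
    out[0::2, 0::2] = (ll + lh + hl + hh) / 2.0
    out[0::2, 1::2] = (ll - lh + hl - hh) / 2.0
    out[1::2, 0::2] = (ll + lh - hl - hh) / 2.0
    out[1::2, 1::2] = (ll - lh - hl + hh) / 2.0
    return out

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    patch = rng.random((8, 8))  # stand-in for a mural patch
    bands = haar_dwt2(patch)
    print("band shape:", bands[0].shape)
    print("lossless:", np.allclose(haar_idwt2(*bands), patch))
```

Because the split is invertible, no information is lost by working on the bands. A flat region produces zeros in all three detail bands, while a fine crack or brush line shows up there strongly, which is why a branch working on those bands is well placed to protect such features.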

Teaching the system with realistic damage

To train and test WCT-Net, the authors assembled a high-quality dataset of imperial tomb murals from the Shaanxi History Museum, cutting large photographs into smaller image patches. They then created three families of artificial damage masks to mimic real decay: random speckles and scratches for interior flaking, irregular border losses like those caused by chunks of plaster falling away, and mixed patterns combining both. The system learned to reconstruct the original images from these damaged versions. The team compared WCT-Net against seven leading restoration algorithms, using measures that capture both structural accuracy and visual naturalness, and also tested it on a separate Dunhuang mural dataset with a different artistic style.
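The three mask families can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the authors' mask generator: the function names, the `frac` and `max_depth` parameters, and the choice to mark damaged pixels as `True` are all hypothetical, and scratches are left out for brevity.

```python
import numpy as np

def speckle_mask(h, w, frac=0.1, rng=None):
    """Interior flaking: each pixel is damaged independently with probability frac."""
    rng = np.random.default_rng() if rng is None else rng
    return rng.random((h, w)) < frac  # True marks a damaged pixel

def border_mask(h, w, max_depth=8, rng=None):
    """Edge loss: a jagged band of random depth is removed from each side."""
    rng = np.random.default_rng() if rng is None else rng
    rows = np.arange(h)[:, None]  # shape (h, 1)
    cols = np.arange(w)[None, :]  # shape (1, w)
    top = rng.integers(0, max_depth + 1, size=w)     # depth lost per column
    bottom = rng.integers(0, max_depth + 1, size=w)
    left = rng.integers(0, max_depth + 1, size=h)    # depth lost per row
    right = rng.integers(0, max_depth + 1, size=h)
    mask = rows < top[None, :]
    mask |= rows >= h - bottom[None, :]
    mask |= cols < left[:, None]
    mask |= cols >= w - right[:, None]
    return mask

def mixed_mask(h, w, frac=0.1, max_depth=8, rng=None):
    """Combined damage: interior speckles plus border loss."""
    rng = np.random.default_rng() if rng is None else rng
    return speckle_mask(h, w, frac, rng) | border_mask(h, w, max_depth, rng)

def apply_mask(img, mask, fill=0.0):
    """Return a damaged copy of img with masked pixels set to fill."""
    out = np.array(img, dtype=float, copy=True)
    out[mask] = fill
    return out

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    patch = rng.random((64, 64))  # stand-in for a clean mural patch
    damaged = apply_mask(patch, mixed_mask(64, 64, rng=rng))
    print("pixels changed:", int((damaged != patch).sum()))
```

A training pair is then simply `(damaged, patch)`: the network sees the damaged version and is penalized for differing from the clean original.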

Sharper lines, fuller scenes, and what it means

Across all damage types—interior wear, missing edges, and complex combinations—WCT-Net produced restorations that kept contour lines continuous, textures crisp, and overall compositions more complete than competing methods. Objective scores improved by several percent, and the generated images more closely matched human perception of authenticity. While the model is computationally heavier than some rivals, its gains are most pronounced where murals are hardest to interpret: when both the inner painting and its outer boundaries have been disrupted. For conservators, this means a more reliable digital preview before touching fragile surfaces; for historians and the public, it offers clearer windows into the visual world of the past. The authors note that future work must better handle diverse styles and run more efficiently, but WCT-Net marks a significant step toward using AI as a careful, context-aware partner in cultural heritage preservation.
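This summary does not name the objective scores, so the following is only an example of how one such score could be computed. It uses peak signal-to-noise ratio (PSNR), a common measure in image inpainting, as an assumed stand-in; the function name and the `data_range` parameter are hypothetical.

```python
import numpy as np

def psnr(reference, restored, data_range=1.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference.

    data_range is the largest possible pixel value (1.0 for images scaled
    to [0, 1], 255 for 8-bit images).
    """
    reference = np.asarray(reference, dtype=float)
    restored = np.asarray(restored, dtype=float)
    mse = np.mean((reference - restored) ** 2)  # mean squared error
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(data_range ** 2 / mse)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    clean = rng.random((64, 64))
    noisy = np.clip(clean + rng.normal(0.0, 0.05, clean.shape), 0.0, 1.0)
    print("PSNR (dB):", psnr(clean, noisy))
```

A pixel-wise score like this rewards structural accuracy but says little about whether a restoration looks natural, which is why evaluations of this kind typically pair it with perceptual measures.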

Citation: Li, J., Wu, M., Lu, Z. et al. WCT-Net: joint restoration of tomb murals based on wavelet convolution and transformer self-attention collaborative network. npj Herit. Sci. 14, 151 (2026). https://doi.org/10.1038/s40494-026-02412-y

Keywords: digital mural restoration, cultural heritage conservation, image inpainting, deep learning for art, ancient tomb murals