Clear Sky Science

Towards trustworthy AI in cultural heritage

Why smarter tools matter for our past

From ruined temples to fragile parchments, today’s heritage experts rely on digital tools to understand and protect the traces of human history. Artificial intelligence (AI) can sift through huge volumes of images, scans and records far faster than any person, but it can also misread or distort the stories these objects tell. This article explores how we can make AI not only powerful, but also fair, transparent and worthy of trust when it is used to study and safeguard cultural heritage.

New helpers for old treasures

Museums, archaeologists and conservators are turning to AI to classify photos, map damage on buildings and reconstruct lost details from broken objects. Techniques originally built for self-driving cars or online shopping now help interpret old mosaics, sculptures and historic streets. Yet cultural heritage data are unusually messy and uneven: some regions and eras are richly documented, while others appear only in scattered records. If AI learns mainly from well-known monuments and Western collections, it may ignore or misinterpret the heritage of minority groups or less celebrated places. The paper argues that, because cultural heritage shapes identities and memories, mistakes here are not just technical glitches but ethical problems.

Where algorithms can go wrong

The authors map out the many ways bias can creep into AI used for heritage. Some biases stem from gaps in the data: for example, damaged mosaics where missing tiles confuse pattern-recognition systems, or historic records that omit entire communities. Others come from who is represented: popular datasets tend to feature coins, icons and buildings from Europe, leaving non‑Western objects underrepresented. Even when material exists, labels can differ between experts, and social media photos of famous sites may reflect tourist snapshots rather than local perspectives. The article groups these issues into categories such as missing data, minority underrepresentation, contextual differences between regions, and outdated views frozen in old scans or archives. For each type, it suggests practical countermeasures, from expanding collections to include minority narratives to routinely updating digital models as sites change.

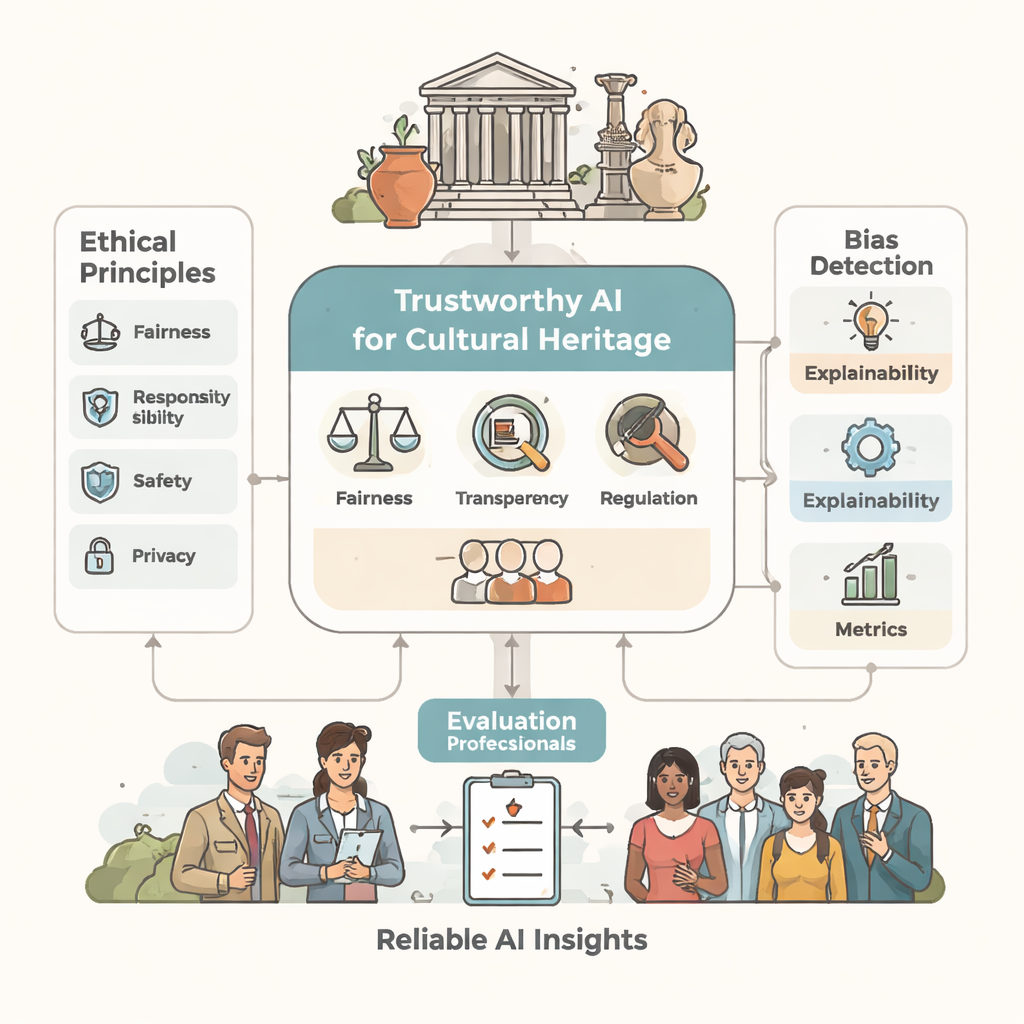
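
For readers who want to see what a check for minority underrepresentation might look like, here is a minimal sketch. It is not the authors' method: the function name, the `region` field, the 10% threshold and the sample records are all assumptions invented for illustration.

```python
from collections import Counter


def underrepresented(records, key="region", min_share=0.10):
    """Return groups whose share of the dataset falls below min_share.

    `records` is a list of dicts; `key` names the field to audit.
    Both the field name and the threshold are illustrative assumptions.
    """
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }


# Invented sample: 18 European objects and one each from two other regions.
sample = (
    [{"region": "Europe"}] * 18
    + [{"region": "West Africa"}]
    + [{"region": "Southeast Asia"}]
)

for group, share in underrepresented(sample).items():
    print(f"{group}: {share:.0%} of records")
```

A count like this only flags a gap. Deciding how to fill it, for example by expanding collections to include minority narratives, remains a curatorial judgment.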

Making machine decisions understandable

Trust, the authors argue, depends not only on better data but also on clearer explanations. Many modern AI systems act as “black boxes”: they label an arch as Gothic or a wall as damaged without showing why. The paper proposes a multi‑layered approach to explainability. One layer looks at the internal mechanics of the model, another at how local history and context influence its decisions, and others focus on what the result means in practice and how confident the system is. Explanations can be global, describing how the system behaves overall, or local, focusing on a single prediction about a specific building or artifact. To judge whether these explanations truly help, the authors define simple, human‑centered metrics such as user satisfaction, curiosity, trust and the effect on decision quality.

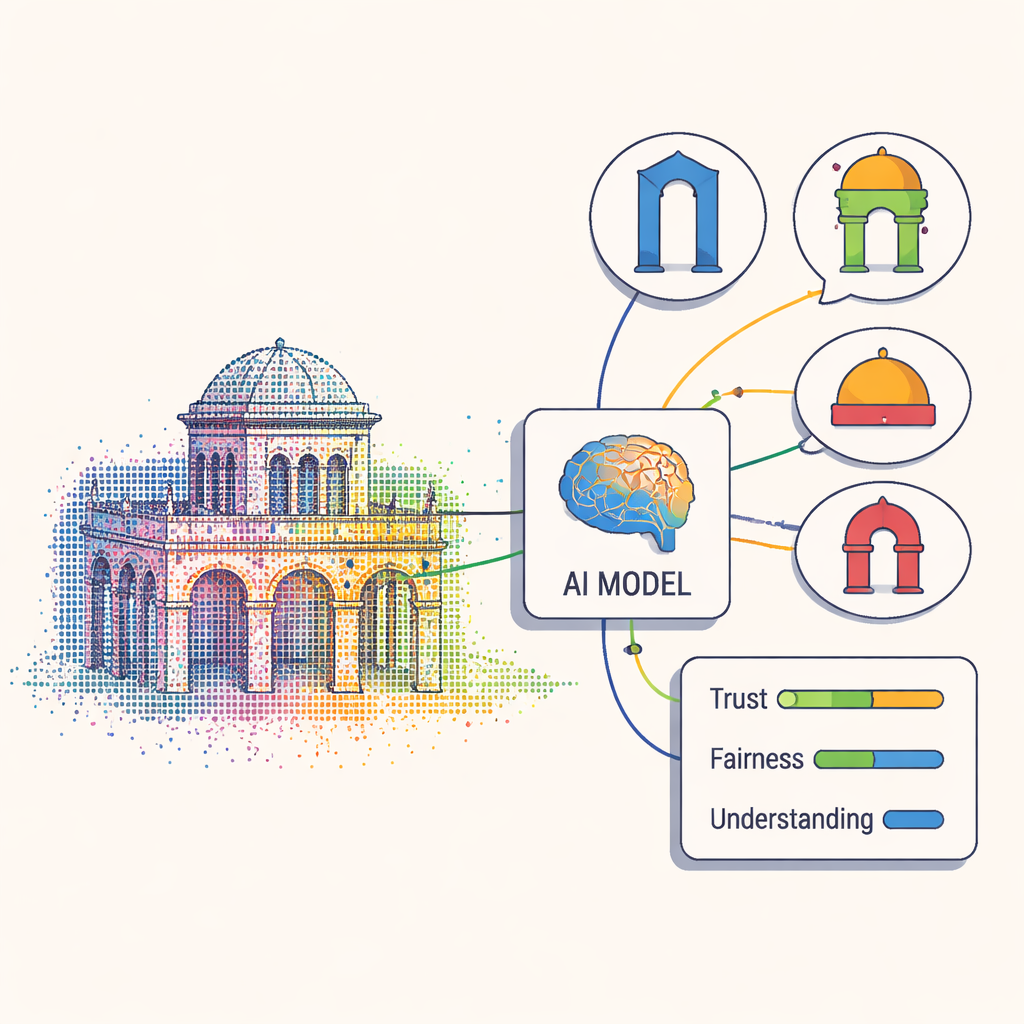

Testing the framework on real heritage data

To see how their ideas work in practice, the researchers revisit an earlier AI system that analyzes dense 3D point clouds of historic buildings. That system is very good at automatically assigning each cluster of points to architectural elements such as arches, windows or columns, but it was not built with fairness or transparency in mind. When evaluated with the new ethical metrics, experts found that they only partly understood how it reached its conclusions, and that the system did little to explain alternative interpretations. The authors propose using newer models that build explanation into their design. These models compare parts of a building to learned “prototype” shapes and highlight which examples guided a given label, so that heritage specialists can see and debate the reasoning instead of simply accepting an opaque answer.
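
The prototype idea can be pictured with a small sketch. It is a simplified nearest-prototype lookup, not the models the authors propose: the three-number feature vectors, the prototype values and the function name `explain_label` are hypothetical, and a real system would learn its prototypes from point-cloud data.

```python
import math

# Hypothetical prototype feature vectors per architectural element.
# In a real model these would be learned, not written by hand.
PROTOTYPES = {
    "arch": [(0.9, 0.1, 0.3), (0.8, 0.2, 0.4)],
    "window": [(0.2, 0.9, 0.5)],
    "column": [(0.1, 0.2, 0.95)],
}


def explain_label(features, prototypes=PROTOTYPES):
    """Label a segment by its closest prototype and report the runners-up."""
    # Rank every prototype by Euclidean distance to the segment's features.
    ranked = sorted(
        (math.dist(features, proto), label, index)
        for label, protos in prototypes.items()
        for index, proto in enumerate(protos)
    )
    distance, label, index = ranked[0]
    return {
        "label": label,              # predicted element
        "prototype": index,          # which stored example guided the label
        "distance": distance,        # how close the match was
        "alternatives": ranked[1:3], # next-best matches, open to debate
    }


result = explain_label((0.88, 0.12, 0.3))
print(result["label"], "via prototype", result["prototype"])
for distance, label, index in result["alternatives"]:
    print(f"  alternative: {label} #{index} at distance {distance:.2f}")
```

Returning the runners-up alongside the winning label gives a specialist something concrete to question, rather than a bare answer.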

Building future‑proof guardians of culture

In plain terms, this article argues that AI should assist human judgment in heritage work, not silently replace it. By systematically hunting for bias and insisting that systems explain their choices in language and visuals that experts can understand, the proposed framework aims to keep AI aligned with the values of inclusion, accuracy and respect for cultural diversity. The authors suggest that similar ethics‑aware designs could also benefit sensitive areas like health, education and the environment. For cultural heritage, the message is clear: only AI that is transparent, fair and open to questioning can be trusted to help tell the stories of our shared past.

Citation: Paolanti, M., Frontoni, E. & Pierdicca, R. Towards trustworthy AI in cultural heritage. npj Herit. Sci. 14, 131 (2026). https://doi.org/10.1038/s40494-026-02403-z

Keywords: cultural heritage, trustworthy AI, algorithmic bias, explainable AI, heritage conservation