Clear Sky Science

Identification methods and evaluation metrics for the condition of the Beijing masonry Great Wall

Why the Great Wall’s Health Matters Today

The Great Wall is more than a postcard image; it is a 21st‑century engineering problem. Stretching over rugged mountains around Beijing, its brick and stone sections are slowly being eaten away by weather, plants, and heavy tourism. Inspecting such a vast structure brick by brick is impossible for human teams alone. This study shows how drones, satellite-style imaging, and artificial intelligence can work together to scan the Wall automatically and score how well each part is holding up, helping conservators decide where to act before damage becomes irreversible.

Four Ways a Wall Can Fail

To teach computers what “healthy” and “unhealthy” look like, the researchers first had to agree on simple, real‑world categories of damage. They divided the masonry Great Wall in Beijing into four visible states. In the first, the wall is largely intact, often thanks to past repairs and regular inspections. The second shows local defects—missing bricks, cracks, or broken stones—while the main structure still stands. The third is dominated by vegetation, where plant roots invade and pry the masonry apart. The fourth is the most severe, with large collapses of towers and wall segments, leaving only low remnants. These categories turn a complex conservation problem into a set of clear visual patterns that computers can learn to recognize.

Building a Digital Twin of Hundreds of Kilometers

Armed with these four states, the team assembled a large digital snapshot of the Great Wall. Using drone flights and three‑dimensional models, they gathered imagery covering more than 500 kilometers of wall around Beijing and distilled this into over 300 kilometers of high‑quality “orthophotos” – aerial images corrected so that distances and angles on screen match those on the ground. Specialists then drew precise outlines around damaged areas and labeled them according to the four categories. A three‑level review process checked these labels against repair records and expert judgment. The result is a detailed dataset of 3,408 image tiles, each 512 by 512 pixels, complete with geographic coordinates and version history—essentially a traceable, zoom‑ready map of the Wall’s condition.
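
The step from a long orthophoto strip to georeferenced 512 by 512 tiles can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the `Tile` record, the `tile_grid` function, the north-up geotransform (origin plus a single pixel size), and the choice to drop partial tiles at the edges are all assumptions made for the example.

```python
from dataclasses import dataclass

TILE_SIZE = 512  # tile edge in pixels, as stated in the article


@dataclass
class Tile:
    """Hypothetical record for one tile and the map position of its top-left corner."""
    row: int
    col: int
    x_min: float  # map x of the tile's left edge
    y_max: float  # map y of the tile's top edge


def tile_grid(width_px, height_px, origin_x, origin_y, pixel_size, tile=TILE_SIZE):
    """List the full tiles that fit in an orthophoto of the given pixel size.

    Assumes a north-up image: x grows to the right, y shrinks downward,
    and every pixel covers pixel_size map units on each side.
    Partial tiles at the right and bottom edges are dropped (an assumption).
    """
    tiles = []
    for top in range(0, height_px - tile + 1, tile):
        for left in range(0, width_px - tile + 1, tile):
            tiles.append(
                Tile(
                    row=top // tile,
                    col=left // tile,
                    x_min=origin_x + left * pixel_size,
                    y_max=origin_y - top * pixel_size,
                )
            )
    return tiles


if __name__ == "__main__":
    # Illustrative numbers only: a 1100 x 600 pixel strip at 0.5 map units per pixel.
    for t in tile_grid(1100, 600, origin_x=1000.0, origin_y=5000.0, pixel_size=0.5):
        print(t)
```

Storing the corner coordinates with each tile is what lets a prediction on a tile be placed back on the map, which is the property the article describes as traceable.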

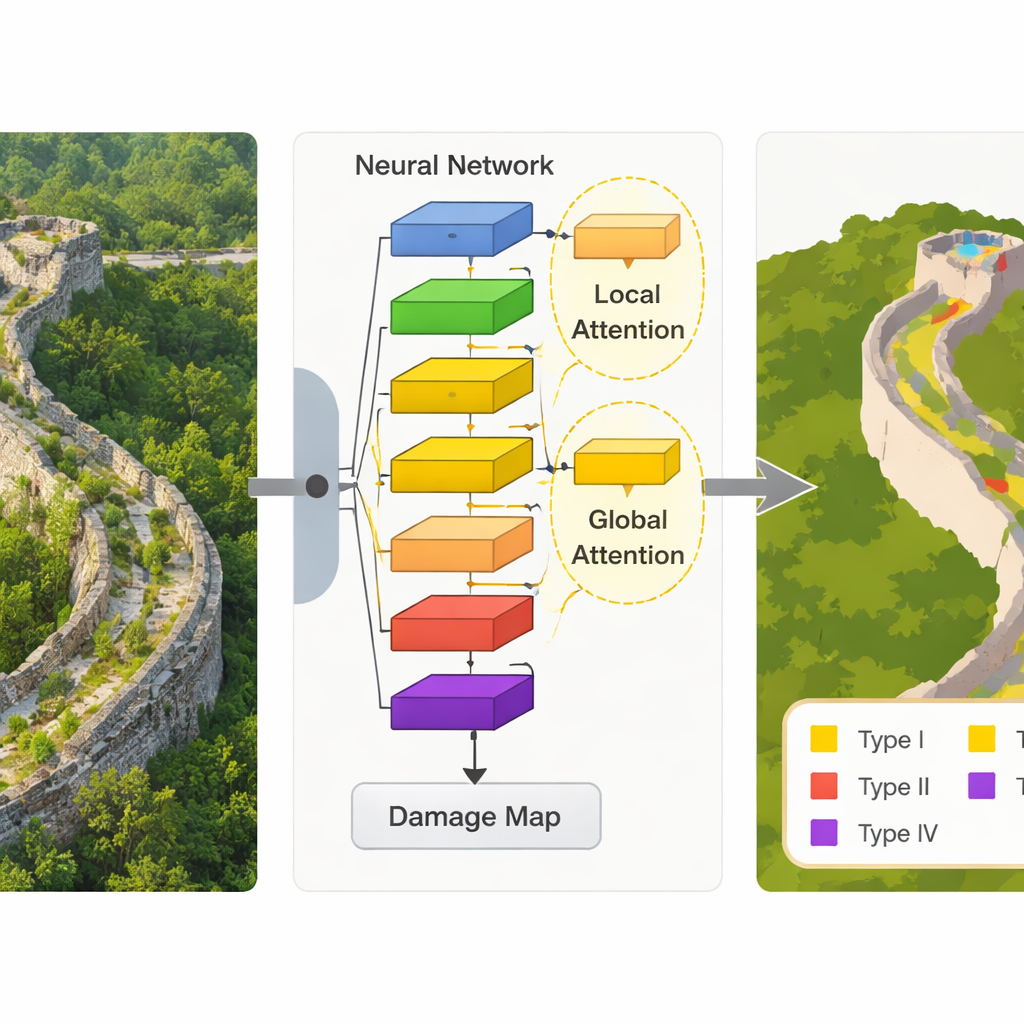

Teaching a Lean AI to Read the Wall’s Cracks

The heart of the study is a new computer vision model called MEP‑deep, designed to pick out subtle damage patterns in these images while remaining light enough to run on modest hardware. Built on a compact neural network architecture originally created for smartphones, the model adds two “attention” components that help it focus on what matters most. One adjusts how strongly different image features are weighted, so signals from cracks and missing bricks stand out from the background. The other looks at how patterns are arranged in space, allowing the system to distinguish, for example, a natural rock from a stone that was once part of the Wall. Tested not only on the Great Wall dataset but also on a standard international urban‑imagery benchmark, the model slightly but consistently outperformed several established methods, all while using far fewer computing resources.
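
The two kinds of attention described above can be illustrated with a toy example in plain Python. This is a simplified sketch of the general idea (channel gating and spatial gating), not the MEP‑deep architecture: the function names, the weight matrix, and the gate parameters are hypothetical, and real models learn these values during training and operate on tensors rather than nested lists.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(fmap, weights):
    """Rescale each channel by a gate computed from per-channel averages.

    fmap: list of C channels, each an H x W nested list of floats.
    weights: C x C matrix standing in for learned parameters (hypothetical).
    """
    # Squeeze: one average value per channel.
    pooled = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in fmap]
    # Excite: a linear mix of the pooled values, squashed into (0, 1).
    gates = [sigmoid(sum(w * p for w, p in zip(row, pooled))) for row in weights]
    return [[[v * g for v in r] for r in ch] for ch, g in zip(fmap, gates)]


def spatial_attention(fmap, w_avg=1.0, w_max=1.0, bias=0.0):
    """Rescale each pixel location by a gate computed across channels.

    The gate mixes the per-pixel channel average and channel maximum;
    w_avg, w_max and bias stand in for learned parameters (hypothetical).
    """
    c, h, w = len(fmap), len(fmap[0]), len(fmap[0][0])
    gate = [
        [
            sigmoid(
                w_avg * sum(ch[i][j] for ch in fmap) / c
                + w_max * max(ch[i][j] for ch in fmap)
                + bias
            )
            for j in range(w)
        ]
        for i in range(h)
    ]
    return [[[ch[i][j] * gate[i][j] for j in range(w)] for i in range(h)] for ch in fmap]


if __name__ == "__main__":
    # Two 2 x 2 channels; only one pixel of the first channel is active.
    features = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]]]
    identity = [[1.0, 0.0], [0.0, 1.0]]
    print(spatial_attention(channel_attention(features, identity)))
```

The first function decides which feature channels matter; the second decides which locations matter. Both return a feature map of the same shape, so they can be dropped into an existing network without changing what comes after them.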

Turning Colors on a Map into Actionable Scores

Recognizing damaged areas is only half the story; managers also need a number that summarizes how a stretch of wall is doing. The researchers therefore created a scoring system based on the proportion of each damage type within a given section. Areas with more intact masonry earn higher scores, while sections dominated by collapse or heavy vegetation are penalized more sharply. A mathematical “decay” term ensures that even small increases in severe damage types noticeably lower the score, reflecting their outsized impact on safety and authenticity. By comparing scores calculated from human labels with those from the model’s predictions on several restored sections, the team showed that the automated system can approximate expert judgment well enough to guide where to look first on the ground.
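
To make the idea concrete, here is a minimal sketch of a proportion-based score with a decay term. The article does not give the study's actual formula, so everything specific here is an assumption for illustration: the class weights, the exponential form of the penalty, the `DECAY_RATE` value, and the 0 to 100 scale.

```python
import math

# Hypothetical base weights for the four condition classes (not from the study).
WEIGHTS = {
    "intact": 1.0,
    "local_defect": 0.6,
    "vegetation": 0.3,
    "collapse": 0.0,
}
# Classes treated as severe for the decay penalty (an assumption).
SEVERE = ("vegetation", "collapse")
# Hypothetical decay strength: larger values punish severe damage harder.
DECAY_RATE = 3.0


def condition_score(pixel_counts):
    """Score one wall section from per-class pixel counts, on a 0 to 100 scale.

    The base score is a weighted average of class proportions; it is then
    multiplied by exp(-DECAY_RATE * severe_share), so a small rise in severe
    damage lowers the score more than the same rise in minor defects.
    """
    unknown = set(pixel_counts) - set(WEIGHTS)
    if unknown:
        raise ValueError(f"unknown classes: {sorted(unknown)}")
    total = sum(pixel_counts.values())
    if total <= 0:
        raise ValueError("section contains no labeled pixels")
    props = {name: pixel_counts.get(name, 0) / total for name in WEIGHTS}
    base = sum(WEIGHTS[name] * props[name] for name in WEIGHTS)
    severe_share = sum(props[name] for name in SEVERE)
    return 100.0 * base * math.exp(-DECAY_RATE * severe_share)


if __name__ == "__main__":
    # The same section scored from expert labels and from model predictions
    # (illustrative pixel counts only).
    labeled = {"intact": 9000, "local_defect": 700, "vegetation": 300}
    predicted = {"intact": 8800, "local_defect": 800, "vegetation": 400}
    print(round(condition_score(labeled), 1), round(condition_score(predicted), 1))
```

Running the same function on labeled and predicted pixel counts for a section gives two numbers that can be compared directly, which mirrors how the article describes checking the automated scores against expert judgment.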

What This Means for the Future of the Great Wall

Put simply, this work turns the Beijing masonry Great Wall into a living dataset that can be watched over time. Instead of waiting for obvious collapses, heritage managers can use drone flights and the MEP‑deep model to generate up‑to‑date damage maps and health scores for long, hard‑to‑reach stretches of the Wall. While the authors acknowledge that even more accurate, heavier AI models exist, their lightweight approach is practical for field use and can be improved further. Beyond China, the same mix of clear visual categories, carefully built datasets, and efficient AI could help safeguard other long, fragile heritage sites—from ancient frontiers to historical canals—by turning scattered stones into actionable information.

Citation: Liu, F., Wang, Z., Zhang, Z. et al. Identification methods and evaluation metrics for the condition of the Beijing masonry Great Wall. npj Herit. Sci. 14, 122 (2026). https://doi.org/10.1038/s40494-026-02392-z

Keywords: Great Wall conservation, heritage monitoring, remote sensing, deep learning, structural damage detection