An SfM system for mural digitization with attention-guided feature matching and robust sparse reconstruction

Why Saving Ancient Wall Paintings Needs New Digital Tricks

Across the deserts of northwest China, the painted walls of the Mogao Grottoes are slowly fading, cracking, and peeling. Conservators want detailed digital copies of these murals so scholars and the public can study them long after the originals have deteriorated. But turning thousands of close-up photographs into a single, flat, undistorted view of a curved, damaged wall is surprisingly hard. This paper introduces a new computer-vision system designed specifically for grotto murals, making digital reconstructions sharper, more reliable, and practical at large scale.

From Patchwork Photos to a Single Seamless Wall

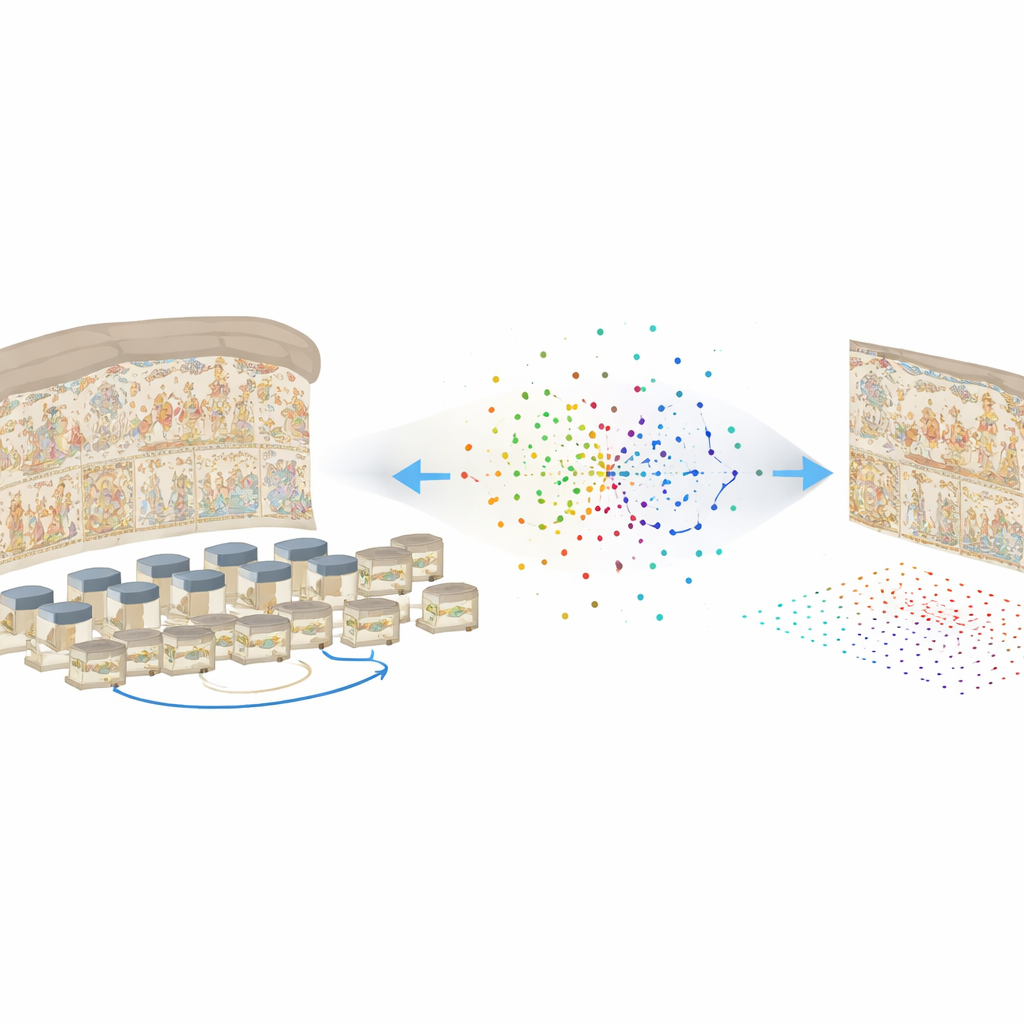

Digitizing a mural is not as simple as snapping one picture. High-resolution cameras capture many overlapping shots from a rail system that moves across the wall, producing a dense grid of local views. Traditional software often “stitches” these images in 2D, warping and blending them like a panorama. That works when walls are flat and lighting is even, but grotto murals bend, bulge, darken in corners, and contain both blank and highly repetitive areas. Under those conditions, stitching can create visible seams, misaligned figures, or distorted shapes. The authors instead embrace a 3D strategy called Structure-from-Motion (SfM): the computer estimates where the camera was for each shot and reconstructs the mural surface in space before projecting it back into a precise, frontal image.
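The final "project it back into a frontal image" step can be illustrated with a small sketch. It assumes the reconstructed points lie close to a single plane and uses a plain least-squares plane fit via SVD. The function name `frontal_coordinates` is hypothetical, and the paper's actual projection procedure may differ.

```python
import numpy as np

def frontal_coordinates(points):
    """Fit a plane to Nx3 points and express each point in that plane's frame.

    Returns an Nx3 array: columns 0 and 1 are in-plane (frontal) coordinates,
    column 2 is the signed offset from the fitted plane (the wall's relief).
    Assumption: the wall is close enough to planar for one plane to fit.
    """
    centroid = points.mean(axis=0)
    centered = points - centroid
    # Rows of vt are orthonormal directions sorted by decreasing variance:
    # the first two span the wall, the last one is the plane normal.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt.T
```

Because the change of basis is orthonormal, distances between points are preserved, which is the property that keeps figures from being stretched in the frontal view.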

Teaching the Computer to Spot the Right Details

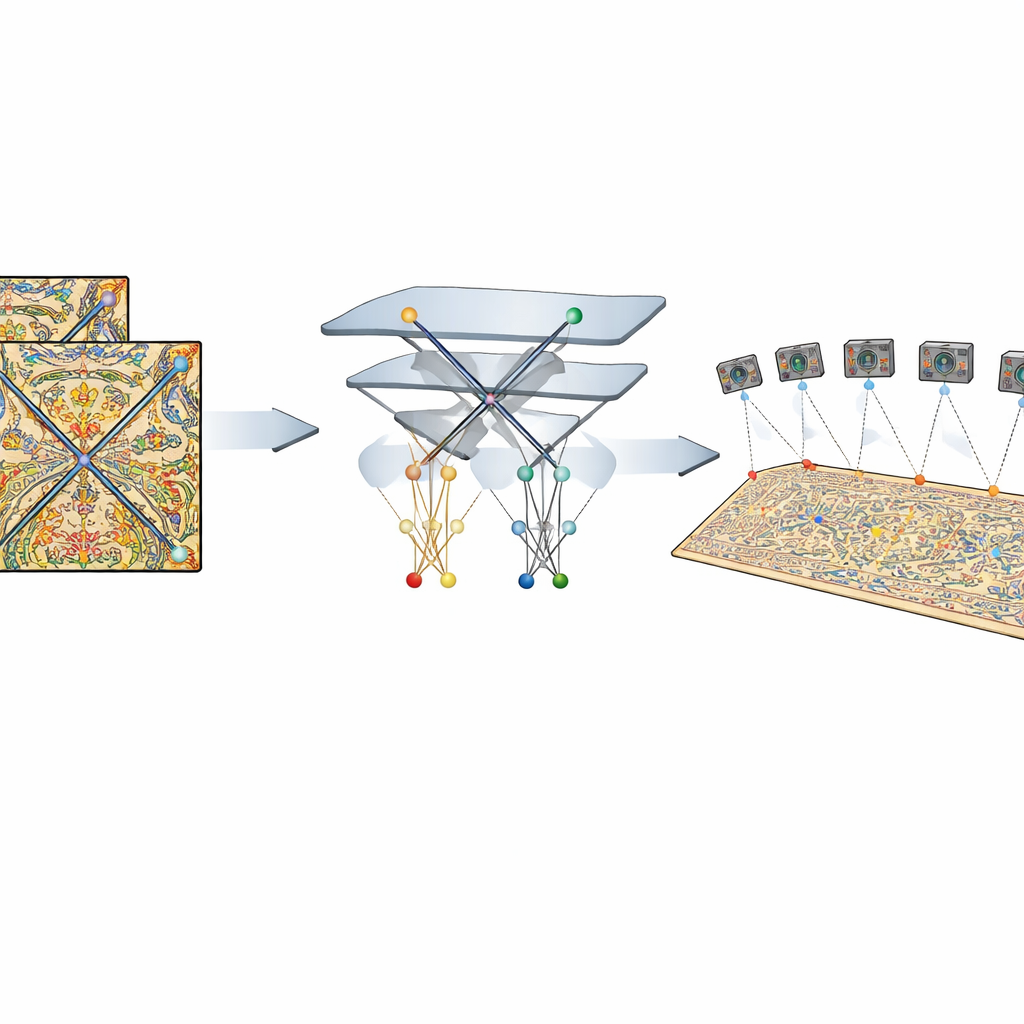

The heart of SfM is matching tiny visual details—“feature points”—across pairs of photos. On murals, this is treacherous: rows of nearly identical figures, faded pigments, and large blank patches can trick algorithms into linking the wrong points or finding too few matches. The new system tackles this with an “attention-guided” matching method inspired by modern deep-learning techniques. Instead of judging each feature in isolation, the algorithm looks at patterns of features together and learns which ones are likely to align across overlapping views. It also builds in an understanding of where overlap should occur: features far outside the shared region of two images are gently down-weighted, even if they look similar, while those in plausible overlapping zones are favored. This combination of visual context and spatial awareness sharply reduces false matches while keeping computation manageable for thousands of high-resolution images.
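The overlap-awareness idea can be sketched in a few lines. This is not the authors' attention network: it is a simplified stand-in that multiplies descriptor similarity by a soft spatial weight and then keeps mutual best matches. The expected overlap boxes, the `sigma` falloff, and both function names are assumptions made for illustration.

```python
import numpy as np

def overlap_weight(xy, box, sigma):
    """Weight 1 inside the expected overlap box, Gaussian falloff outside it."""
    x0, y0, x1, y1 = box
    # Distance from each keypoint to the box along each axis (0 if inside).
    dx = np.maximum(np.maximum(x0 - xy[:, 0], xy[:, 0] - x1), 0.0)
    dy = np.maximum(np.maximum(y0 - xy[:, 1], xy[:, 1] - y1), 0.0)
    return np.exp(-(dx**2 + dy**2) / (2.0 * sigma**2))

def match_with_overlap_prior(desc_a, xy_a, box_a, desc_b, xy_b, box_b, sigma=50.0):
    """Mutual nearest-neighbour matching on similarity scaled by overlap weights."""
    a = desc_a / np.linalg.norm(desc_a, axis=1, keepdims=True)
    b = desc_b / np.linalg.norm(desc_b, axis=1, keepdims=True)
    similarity = a @ b.T  # cosine similarity, shape (len(a), len(b))
    weight = overlap_weight(xy_a, box_a, sigma)[:, None] \
        * overlap_weight(xy_b, box_b, sigma)[None, :]
    score = similarity * weight
    best_b = score.argmax(axis=1)  # best partner in B for each feature in A
    best_a = score.argmax(axis=0)  # best partner in A for each feature in B
    return [(i, int(j)) for i, j in enumerate(best_b) if best_a[j] == i]
```

With a very large `sigma` the weights approach 1 and the function reduces to ordinary mutual nearest-neighbour matching, which is where a repeated motif far from the shared region can win on appearance alone.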

Rebuilding the Wall in 3D, One Edge at a Time

Even with better matches, SfM can still stumble if it guesses the wrong camera settings or tries to adjust too many viewpoints at once. Murals pose a particular problem because camera metadata are often missing or unreliable after processing, and the scene is almost planar, which can cause the recovered wall to “bow” in or out in the virtual model. The authors introduce two mural-specific fixes. First, they re-estimate camera focal length—not from file tags, but by testing candidate values and choosing those that yield coherent geometry, then sharing an averaged value across views captured with the same setup. Second, they replace global refinement with “edge-based bundle adjustment”: instead of constantly tweaking every camera, the system only fine-tunes cameras and 3D points at the growing boundary of the reconstruction, leaving well-constrained interior views alone. This focused optimization reduces drift, keeps the virtual wall flat, and cuts processing time.
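Both fixes can be illustrated with a toy sketch. The focal-length part scores each candidate by reprojection error and then averages the per-view winners. It assumes camera-frame 3D points are already known, which a real pipeline would not have (it would re-estimate geometry for every candidate). The frontier part assumes that "edge" cameras are registered views with at least one unregistered neighbour in the match graph, which is only one plausible reading of the paper's description. All function names here are hypothetical.

```python
import numpy as np

def project(points_cam, f, c):
    """Pinhole projection of Nx3 camera-frame points; f in pixels, c = principal point."""
    return f * points_cam[:, :2] / points_cam[:, 2:3] + c

def best_focal(points_cam, observed_px, candidates, c):
    """Return the candidate focal length with the lowest RMS reprojection error."""
    errors = []
    for f in candidates:
        residual = project(points_cam, f, c) - observed_px
        errors.append(np.sqrt(np.mean(np.sum(residual**2, axis=1))))
    return float(candidates[int(np.argmin(errors))])

def shared_focal(views, candidates, c):
    """Average the per-view estimates for views captured with the same setup."""
    return float(np.mean([best_focal(p, o, candidates, c) for p, o in views]))

def frontier_views(adjacency, registered):
    """Registered views that still touch an unregistered neighbour.

    A boundary-only refinement would optimise these views and the points they
    see, leaving interior views fixed.
    """
    return sorted(v for v in registered
                  if any(n not in registered for n in adjacency[v]))
```

For a chain of four overlapping images `0-1-2-3` with the first three registered, `frontier_views` returns `[2]`: only the view next to the still-unregistered image would be refined.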

Putting the System to the Test in Real Caves

The researchers evaluated their system on nearly 1,800 images from nine caves at Mogao and on a large public dataset called MuralDH, where they simulated the way a camera would sweep across a mural. In direct comparisons with widely used open-source tools such as COLMAP, VisualSFM, OpenMVG, and MVE, the new pipeline reconstructed more mural sets successfully, produced lower geometric errors, and ran faster. Some caves that competing systems failed to reconstruct at all yielded clean point clouds and stable camera paths with the new method. When the resulting sparse 3D models were fed into commercial software for dense reconstruction, they produced clear, nearly distortion-free frontal images that conservators could actually use, something that previous automatic workflows could not reliably deliver.

Clearer Digital Windows into the Past

For non-specialists, the takeaway is straightforward: this work makes it more feasible to build faithful, high-resolution digital facsimiles of fragile wall paintings at scale. By tailoring computer-vision tools to the quirks of grotto murals—repetitive motifs, subtle relief, missing camera data—the authors’ SfM system turns vast, messy photo archives into geometrically sound, seamless mural views. These digital reconstructions can support conservation planning, scholarly analysis, and public exhibitions, helping to preserve the visual stories on ancient walls even as the original pigments continue their slow, inevitable fade.

Citation: Fang, K., Min, Z. & Diao, C. An SfM system for mural digitization with attention-guided feature matching and robust sparse reconstruction. npj Herit. Sci. 14, 166 (2026). https://doi.org/10.1038/s40494-026-02385-y

Keywords: mural digitization, cultural heritage, 3D reconstruction, computer vision, Dunhuang Mogao Grottoes