Clear Sky Science

Digital restoration of ancient Jiangnan murals via proxy learning and structural guidance

Saving Fading Wall Paintings

Across the humid riverlands of southern China, centuries-old wall paintings are quietly disappearing. Heat, moisture, and time eat away at the plaster, causing cracks, stains, and flaking that are costly and risky to fix by hand. This article presents a new way for computers to digitally "restore" these fragile Jiangnan murals, reviving their scenes and brushwork on screen without touching the original walls. The work matters not just for art lovers, but for anyone who cares about how modern technology can help keep the world’s cultural memory alive.

The Hidden Treasures of Jiangnan

The murals studied here are scattered through ancestral halls, temples, and old homes in Zhejiang Province. Unlike the famous desert caves of Dunhuang, these works sit in a hot, damp climate that is especially harsh on materials made from earth, wood, and lime. Surveys show that many murals are covered with overlapping damage: cracks, mold, fading, water stains, and places where the paint layer has peeled away. Physical repair is expensive, irreversible, and technically demanding, so digital restoration—rebuilding the image in pixels rather than plaster—offers a safer first line of protection. But the very qualities that make these murals special also make them hard for computers to handle.

Why Ordinary AI Falls Short

Modern image-repair programs based on deep learning usually rely on huge collections of “before and after” image pairs for training. For Jiangnan murals, such data simply do not exist: the works are scattered, were painted by many different folk artists, and their original, undamaged appearances are unknown. At the same time, the damage itself confuses standard algorithms. Dark cracks and mold spots can look very similar to delicate ink lines, so a model that blindly follows visible edges tends to copy the damage instead of removing it. As a result, off-the-shelf restoration tools either leave behind blemishes or invent details that clash with the murals’ traditional style.

Learning Style From Related Art

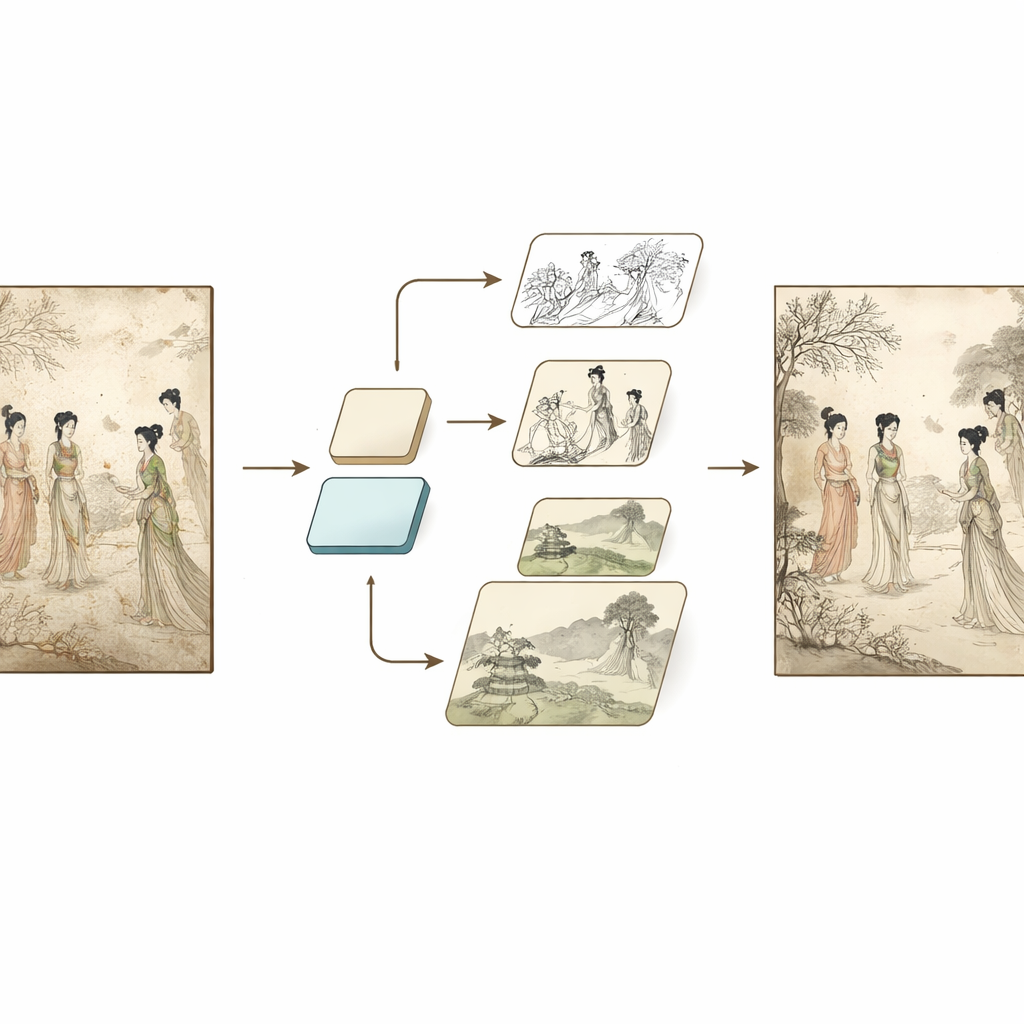

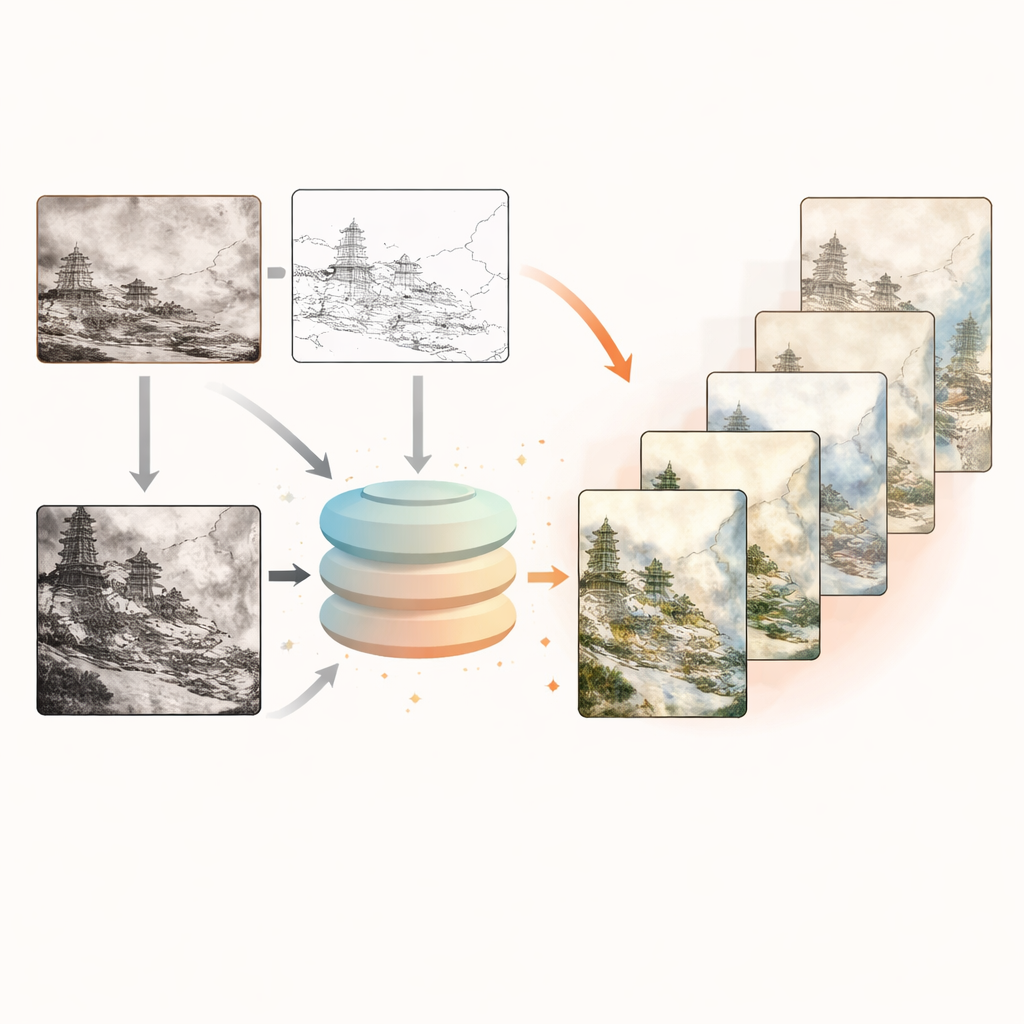

To break out of this dead end, the authors propose a workflow called Structurally Guided Proxy Restoration, or SGPR. The first step is to separate “style learning” from “mural fixing.” Instead of training directly on scarce mural photos, they assemble a large proxy collection of more than six thousand classical Chinese paintings from museums. These images share the same artistic language as Jiangnan murals: the way lines flow, how ink shades are layered, how scenes are composed. A powerful image generator, built on recent diffusion technology, is then fine-tuned on this proxy set. A special loss function encourages the model not just to mimic textures, but to capture broader artistic traits such as brushwork rhythm and color balance. The result, called ArtBooth, is a generator that “speaks” traditional Chinese painting fluently, even though it has never seen the actual damaged murals.

Finding Clean Lines in Dirty Images

The second key step is to tease out the murals’ original structure from messy photographs. Here the authors introduce a Selective Feature Extraction algorithm that does not require any learning. It looks at the same damaged mural at two image scales and runs two simple edge detectors on each version. Features that appear consistently in both detectors and at both scales are likely to be genuine drawing lines, such as the outline of a robe or a tree trunk, while random specks and blotches that show up in only some of these views are more likely to be mold or stains. By fusing these signals into an “envelope” mask, the algorithm boosts reliable lines and suppresses noise. It produces two clean guiding maps, a crisp line drawing and a refined edge map, which emphasize true structure while ignoring much of the deterioration.
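The consensus idea in this step can be illustrated with a minimal Python sketch. It is not the authors' algorithm: the article does not name the two detectors, the scales, or the fusion rule, so the Sobel and Laplacian filters, the 2x downsampling, the logical-AND fusion, the `thresh` value, and every function name below are assumptions chosen only to show how agreement across detectors and scales can filter out small blemishes.

```python
import numpy as np

def correlate_same(img, kernel):
    """Naive same-size 2-D correlation with edge padding (NumPy only)."""
    kh, kw = kernel.shape
    padded = np.pad(img, ((kh // 2, kh // 2), (kw // 2, kw // 2)), mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * padded[i:i + img.shape[0], j:j + img.shape[1]]
    return out

def sobel_mag(img):
    """Gradient magnitude from the two Sobel kernels (assumed detector 1)."""
    kx = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=float)
    return np.hypot(correlate_same(img, kx), correlate_same(img, kx.T))

def laplacian_mag(img):
    """Absolute 4-neighbour Laplacian response (assumed detector 2)."""
    k = np.array([[0, 1, 0], [1, -4, 1], [0, 1, 0]], dtype=float)
    return np.abs(correlate_same(img, k))

def normalize(a):
    """Scale a response map to [0, 1]; leave an all-zero map unchanged."""
    peak = a.max()
    return a / peak if peak > 0 else a

def downsample2(img):
    """Halve the resolution by averaging 2x2 blocks."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def upsample2(img, shape):
    """Nearest-neighbour upsampling back to the original shape."""
    up = np.repeat(np.repeat(img, 2, axis=0), 2, axis=1)
    pad = ((0, shape[0] - up.shape[0]), (0, shape[1] - up.shape[1]))
    return np.pad(up, pad, mode="edge")

def consensus_mask(gray, thresh=0.2):
    """Keep pixels where both detectors fire at both scales."""
    small = downsample2(gray)
    votes = []
    for detector in (sobel_mag, laplacian_mag):
        votes.append(normalize(detector(gray)) > thresh)
        votes.append(upsample2(normalize(detector(small)), gray.shape) > thresh)
    return np.logical_and.reduce(votes)
```

In this toy setup, a long stroke several pixels wide keeps a strong response after downsampling, while a single-pixel speck is averaged away at the coarse scale and so fails the consensus test. Multiplying a detector's response by the mask would give a cleaned edge map in the same spirit as the guiding maps described above.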

Guided Digital Repair in Practice

The final part of SGPR links these clean structural maps with the style-savvy generator through an optimized control network. During restoration, the damaged mural image and a short text prompt are fed to ArtBooth, while the filtered line and edge maps act as a kind of scaffolding. An adapted version of the ControlNet framework injects these maps into the generator’s inner layers, gently steering each denoising step so that new pixels follow the original layout and brushwork rather than drifting into generic scenes. Tests on both simulated damage and real murals from Songxi village show that this combined system removes stains and cracks more thoroughly than existing methods, keeps figures and objects in the right places, and produces images that experts judged to be close in quality to careful manual digital restoration.
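How a control network "injects" guidance without overwriting what the generator already knows can be shown with a toy Python sketch. It follows the general ControlNet recipe (a frozen layer, a trainable copy that sees the structural hint, and zero-initialized connecting layers), not the authors' adapted version, whose details the article does not give. The small dense layers stand in for the convolutional blocks of a real diffusion model, and all names here are hypothetical.

```python
import numpy as np

def frozen_layer(x, w):
    """Stand-in for one frozen generator block (linear map plus tanh)."""
    return np.tanh(x @ w)

def controlled_layer(x, cond, w, w_copy, z_in, z_out):
    """Frozen output plus a residual computed from the structural hint.

    z_in and z_out play the role of ControlNet's zero-initialized layers:
    while they are all zeros, the residual is exactly zero.
    """
    hint = cond @ z_in                                  # project the line/edge map
    residual = frozen_layer(x + hint, w_copy) @ z_out   # trainable-copy branch
    return frozen_layer(x, w) + residual

rng = np.random.default_rng(0)
dim = 8
x = rng.normal(size=(4, dim))      # toy latent features
cond = rng.normal(size=(4, dim))   # toy encoding of the guiding maps
w = rng.normal(size=(dim, dim))    # frozen weights
w_copy = w.copy()                  # trainable copy starts from the same weights
zeros = np.zeros((dim, dim))

baseline = frozen_layer(x, w)
at_init = controlled_layer(x, cond, w, w_copy, zeros, zeros)

# After some (here simulated) training the zero layers are no longer zero,
# so the hint starts to shift the layer's output.
steered = controlled_layer(x, cond, w, w_copy, 0.1 * np.eye(dim), 0.1 * np.eye(dim))
```

Because the connecting layers start at zero, the controlled model initially behaves exactly like the style-tuned generator, and the structural maps gain influence only as those layers are trained. That is one way to read the "gentle steering" described above.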

What This Means for Cultural Heritage

For non-specialists, the takeaway is straightforward: by learning the visual language of related artworks and carefully separating true lines from damage, AI can now produce convincing digital restorations of fragile murals that might otherwise fade away. While the method still struggles when whole sections of a painting are missing, and has yet to be extended to richly colored works, it already provides conservators with a powerful new tool. More broadly, the study shows how smart use of proxy data and structural guidance can help protect many kinds of heritage objects that are too rare, too damaged, or too precious to supply the massive training sets modern AI usually demands.

Citation: Yang, C., Liu, Y. & Cai, Y. Digital restoration of ancient Jiangnan murals via proxy learning and structural guidance. npj Herit. Sci. 14, 182 (2026). https://doi.org/10.1038/s40494-026-02369-y

Keywords: digital mural restoration, cultural heritage conservation, image generation AI, Chinese painting style, damage-resistant feature extraction