Clear Sky Science

Adaptive multi-feature fusion for visible-infrared image registration and character enhancement of bamboo slips

Ancient texts hiding in plain sight

For more than two millennia, government records, medical notes, and everyday writings in China were brushed onto slim strips of bamboo and wood. Many of these fragile slips have survived in the ground, but their ink has faded so badly that much of the writing is nearly invisible. This study presents a new imaging method that aligns ordinary photographs with infrared pictures of the same slips, then digitally restores the lost characters—making damaged texts readable again and opening a clearer window onto ancient history.

Why old bamboo records are so hard to read

Excavated bamboo and wooden slips are priceless historical documents, but centuries of burial leave them cracked, stained, and worn. In normal light, the wood grain, dirt, and discoloration often overwhelm the faint ink, so that characters blur into the background or disappear altogether. Infrared cameras can reveal traces of ink that no longer show up in visible light, because the ink and the wood reflect infrared differently. Yet infrared images usually lack the rich color and surface detail curators and historians need to study how the slips were made and to match fragments. Researchers today often flip back and forth between visible and infrared images of the same slip, trying to mentally combine what each view shows—a slow, fatiguing process that still leaves many characters uncertain.

Bringing two kinds of vision into focus

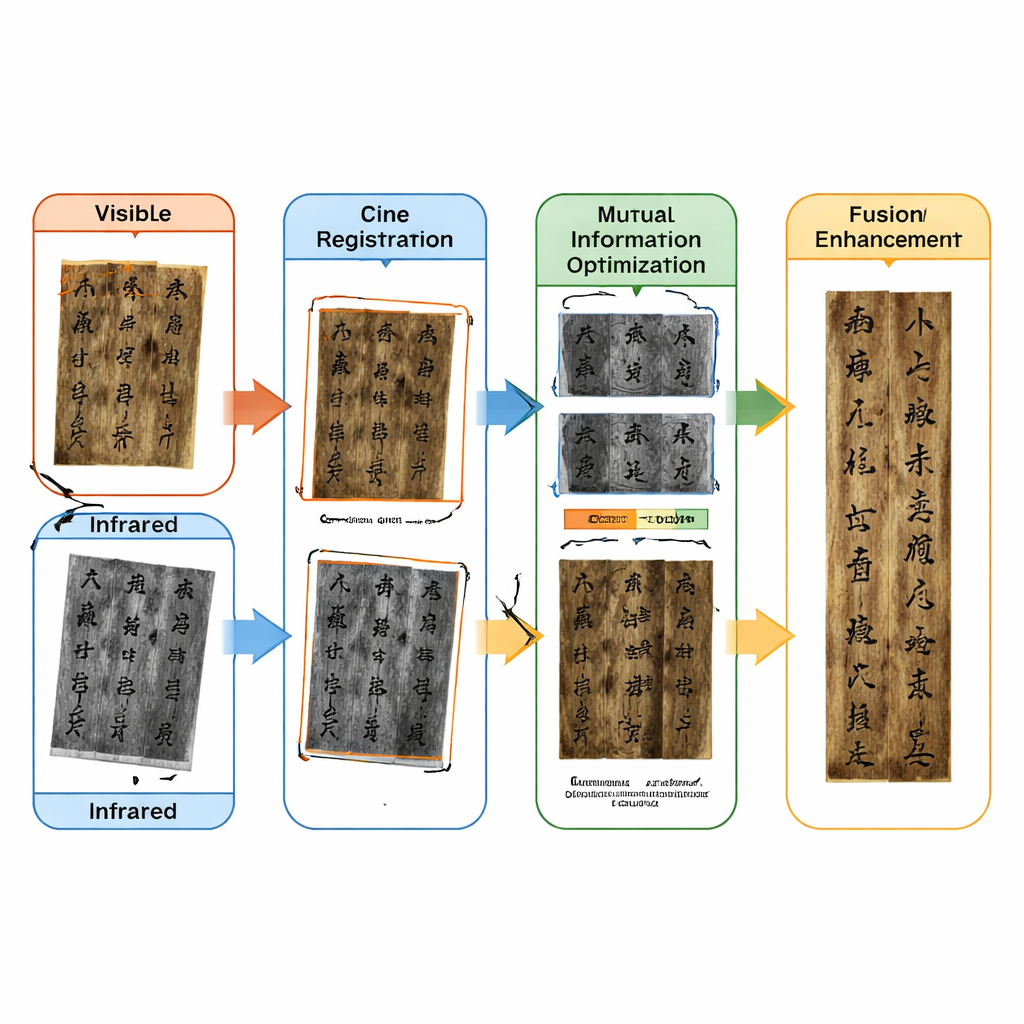

The team tackles this problem by precisely aligning, or “registering,” visible and infrared images of each bamboo slip so they can be fused into one enhanced picture. This is far from trivial: because the two cameras and lighting setups are different, the images can be shifted, rotated, slightly scaled, and even distorted relative to each other. On top of that, the slips have weak texture—few sharp corners or patterns—which makes it hard for standard computer-vision tools to find matching points between the two views. The authors design a multi-step registration pipeline that takes advantage of one thing that does stay stable across wavelengths: the outer shape of each slip.
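To make the geometry concrete, here is a minimal sketch of the shift, rotation, and scaling that can separate the two views. It is an illustration rather than the authors' code: the function name `similarity_transform`, the slip dimensions, and the parameter values are all assumptions, and the extra lens or perspective distortion mentioned above is not modeled.

```python
import math

def similarity_transform(points, scale, angle_deg, tx, ty):
    """Scale and rotate (x, y) points about the origin, then translate them."""
    c = math.cos(math.radians(angle_deg))
    s = math.sin(math.radians(angle_deg))
    return [
        (scale * (c * x - s * y) + tx, scale * (s * x + c * y) + ty)
        for x, y in points
    ]

# Corners of a hypothetical 10 x 200 pixel slip outline in the visible image.
outline = [(0, 0), (10, 0), (10, 200), (0, 200)]

# Where those corners would land in an infrared view that is slightly
# larger, slightly rotated, and offset by a few pixels (made-up values).
moved = similarity_transform(outline, scale=1.02, angle_deg=1.5, tx=4.0, ty=-3.0)
```

Registration is the inverse problem: given only the two images, recover the scale, angle, and offsets that map one onto the other.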

From rough alignment to pixel-perfect match

The method begins with a coarse alignment stage that shrinks both images to suppress distracting details and then detects the long outer edges of the slips along with a modest number of corner-like points. Because the contour of a bamboo strip changes little between visible and infrared images, these edges are given high importance when estimating how one image should be rotated, shifted, and scaled to line up with the other. Next comes a fine alignment stage that works at full resolution. Here the algorithm repeatedly refines the fit using both edge contours and character corners, but with a twist: early on it trusts the large-scale edges more; as the match improves, it gradually increases the weight of the precise corner points traced around individual strokes. This adaptive shift from “outline first” to “details first” helps avoid getting stuck in a bad solution while still achieving very tight alignment.
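The weighting idea can be sketched in a few lines. The summary does not give the paper's actual formulas, so everything here is an assumption for illustration: `fit_similarity` is a standard weighted least-squares fit of rotation, scale, and shift using complex numbers, and `edge_weight` is a hypothetical linear schedule that lowers the edge weight as a progress value goes from 0 to 1. Point correspondences are taken as known, which a real pipeline would have to establish first.

```python
import cmath

def fit_similarity(src, dst, weights):
    """Weighted least-squares fit of dst = a * src + b with complex a and b.

    Points are complex numbers x + 1j*y. abs(a) is the scale and
    cmath.phase(a) is the rotation angle in radians.
    """
    total = sum(weights)
    src_mean = sum(w * p for w, p in zip(weights, src)) / total
    dst_mean = sum(w * q for w, q in zip(weights, dst)) / total
    num = sum(
        w * (q - dst_mean) * (p - src_mean).conjugate()
        for w, p, q in zip(weights, src, dst)
    )
    den = sum(w * abs(p - src_mean) ** 2 for w, p in zip(weights, src))
    a = num / den
    return a, dst_mean - a * src_mean

def edge_weight(progress, start=0.8, end=0.3):
    """Hypothetical schedule: edge weight falls linearly as progress goes 0 -> 1."""
    progress = min(max(progress, 0.0), 1.0)
    return start + (end - start) * progress

# Toy data: four outline points and two stroke-corner points (made up).
edges = [0 + 0j, 10 + 0j, 10 + 200j, 0 + 200j]
corners = [3 + 50j, 7 + 120j]
true_a = cmath.rect(1.02, 0.03)  # scale 1.02, rotation 0.03 rad
true_b = 4 - 3j
src = edges + corners
dst = [true_a * p + true_b for p in src]

# Refit several times while shifting trust from edges toward corners.
for step in range(5):
    w_edge = edge_weight(step / 4)
    weights = [w_edge] * len(edges) + [1.0 - w_edge] * len(corners)
    a, b = fit_similarity(src, dst, weights)
```

With noise-free toy correspondences every weighting recovers the same transform; the schedule only matters when edge and corner measurements disagree, which is the situation the adaptive scheme is meant to handle.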

Letting information content guide the final tweak

Even with good geometric matching, the brightness patterns in visible and infrared images can differ greatly. To finish the job, the researchers add an optimization step based on “mutual information,” a statistical measure of how well one image predicts the gray levels in the other. The algorithm makes many small trial adjustments to the transformation between the two views and keeps whichever change increases this shared information the most. A hybrid search strategy—combining a global exploration method known as simulated annealing with more traditional gradient-based refinement—allows the system to home in on a transformation that is both geometrically reasonable and information-rich, even when the images are noisy or partly degraded.
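As a rough illustration of the measure, the sketch below computes mutual information from a joint histogram of gray levels and uses it to score trial alignments. The exhaustive search over integer shifts in `best_shift` is a deliberately simple stand-in, not the simulated-annealing and gradient hybrid described above, and the one-dimensional toy "images" and all names are assumptions.

```python
import math
from collections import Counter

def mutual_information(a, b):
    """Mutual information in bits between two equal-length gray-level sequences."""
    n = len(a)
    joint = Counter(zip(a, b))
    count_a = Counter(a)
    count_b = Counter(b)
    mi = 0.0
    for (x, y), count in joint.items():
        p_xy = count / n
        # p_xy / (p_x * p_y), written in terms of raw counts.
        mi += p_xy * math.log2(p_xy * n * n / (count_a[x] * count_b[y]))
    return mi

def best_shift(fixed, moving, max_shift):
    """Try every integer shift and keep the one with the highest mutual information."""
    best = None
    for s in range(-max_shift, max_shift + 1):
        pairs = [
            (fixed[i], moving[i + s])
            for i in range(len(fixed))
            if 0 <= i + s < len(moving)
        ]
        score = mutual_information([p[0] for p in pairs], [p[1] for p in pairs])
        if best is None or score > best[1]:
            best = (s, score)
    return best

# One toy scan line: 1 marks ink, 0 marks bare wood.
bits = [0, 1, 1, 0, 1, 0, 0, 0, 1, 1, 1, 0, 1, 0, 0, 1]
visible = [180 - 120 * b for b in bits]                  # ink is mildly darker
infrared = [230, 230] + [230 - 200 * b for b in bits[:-2]]  # shifted by 2, stronger contrast

shift, score = best_shift(visible, infrared, max_shift=3)
```

The two rows use different gray levels for ink and wood, yet the score still peaks at the true offset, because mutual information depends on how consistently the values co-occur rather than on the values themselves.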

Bringing vanished characters back to life

Once the visible and infrared images are locked together, the second part of the framework focuses on the characters themselves. The infrared image is processed to accentuate ink against background and then thresholded to isolate stroke regions. After cleaning up noise and gaps, the extracted writing is converted into a transparent “ink mask.” Instead of simply pasting this mask on top, the method uses a form of difference-based fusion: it essentially subtracts the ink pattern from the background, causing places where the visible image once had writing—but now only shows bare wood—to turn dark again. Finally, color corrections restore the natural bamboo tones in areas where the original visible characters were already clear. The result is a single image that preserves the realistic look, color, and texture of the slip while making both faint and invisible strokes stand out crisply.
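The core of this step, thresholding the infrared image and subtracting the resulting mask from the visible one, can be sketched as follows. The threshold, the darkening strength, the pixel values, and the function names are all assumptions chosen for illustration; the contrast enhancement, noise cleanup, and color correction described above are left out.

```python
def ink_mask(infrared, threshold=100):
    """Mark pixels darker than the threshold in the infrared image as ink (1), else 0."""
    return [[1 if px < threshold else 0 for px in row] for row in infrared]

def subtract_ink(visible, mask, ink_strength=120):
    """Darken visible RGB pixels under the mask, clamping each channel at 0."""
    return [
        [
            tuple(max(0, channel - ink_strength * m) for channel in px)
            for px, m in zip(vis_row, mask_row)
        ]
        for vis_row, mask_row in zip(visible, mask)
    ]

# Toy 2 x 3 example: the infrared view still shows two dark ink pixels,
# while the registered visible view shows only bare wood.
infrared = [[200, 40, 210],
            [35, 190, 205]]
wood = (170, 140, 90)
visible = [[wood, wood, wood],
           [wood, wood, wood]]

mask = ink_mask(infrared)
fused = subtract_ink(visible, mask)
```

Pixels outside the mask keep their original bamboo color, which is why the fused image still looks like a photograph of the slip rather than a black-and-white tracing.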

Sharper views for historians and heritage

Tests on more than 800 pairs of bamboo slip images, including many with severely damaged writing, show that the new method outperforms a range of existing registration techniques, from classic feature-matching to modern deep learning approaches. Quantitative scores confirm that the aligned images share more information and match structures better, while visual overlays show near-perfect overlap between visible and infrared content. For historians and conservators, this means they can read and interpret difficult texts from a single enhanced image, speeding up transcription and helping to rejoin scattered fragments. More broadly, the work demonstrates how combining multiple kinds of imaging with smart alignment and fusion can rescue fragile writings from the brink of illegibility, strengthening efforts to digitally preserve and study the world’s documentary heritage.

Citation: Wan, T., Qi, F., Yang, Y. et al. Adaptive multi-feature fusion for visible-infrared image registration and character enhancement of bamboo slips. npj Herit. Sci. 14, 96 (2026). https://doi.org/10.1038/s40494-026-02368-z

Keywords: bamboo slips, infrared imaging, image registration, text restoration, cultural heritage