Clear Sky Science

Multiscale voxel feature fusion network for large scale noisy point cloud completion in cultural heritage restoration

Bringing Ancient Structures Back into Digital Focus

When historians scan historic temples or monuments with lasers, the resulting 3D data often looks more like static-filled television than a clear picture. Parts of roofs or sculptures are missing, and random specks of “ghost” points clutter the view. This paper presents a new artificial intelligence (AI) method that cleans and completes these 3D point clouds, helping curators and researchers see complex cultural heritage sites—such as centuries‑old Japanese shrines—with far greater clarity.

Why 3D Scans of Heritage Sites Are So Messy

Modern tools like LiDAR and depth cameras can capture millions of 3D points from buildings and landscapes in minutes. But trees, shadows, awkward viewing angles, and the limits of the scanners themselves mean that some regions are never “seen” at all, while others are corrupted by noise. In practice, this leads to patchy, uneven point clouds where key features, such as interlocking roof beams or intricate eaves, are either missing or buried under spurious points. Earlier digital repair techniques filled gaps crudely, blurred fine details, or demanded heavy computation that did not scale to very large outdoor scenes.

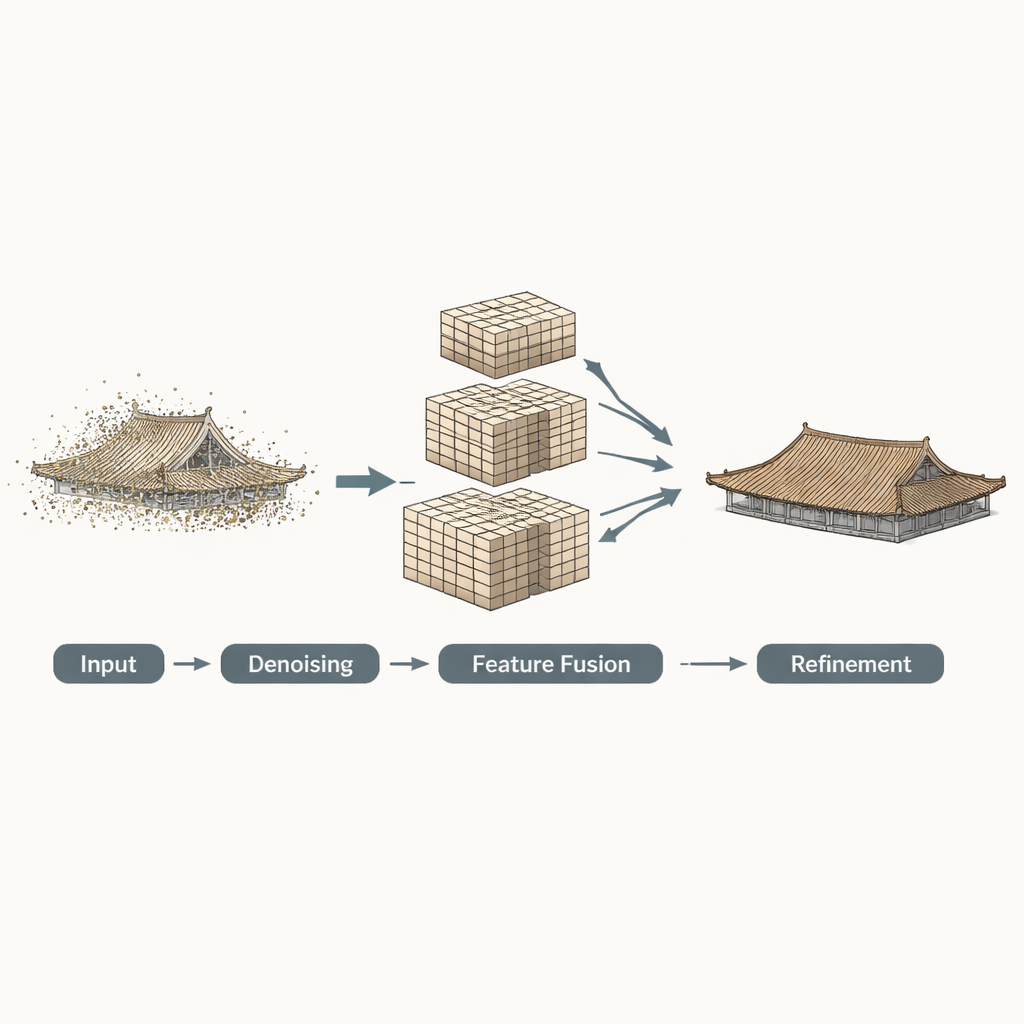

A Three-Step Digital Restoration Pipeline

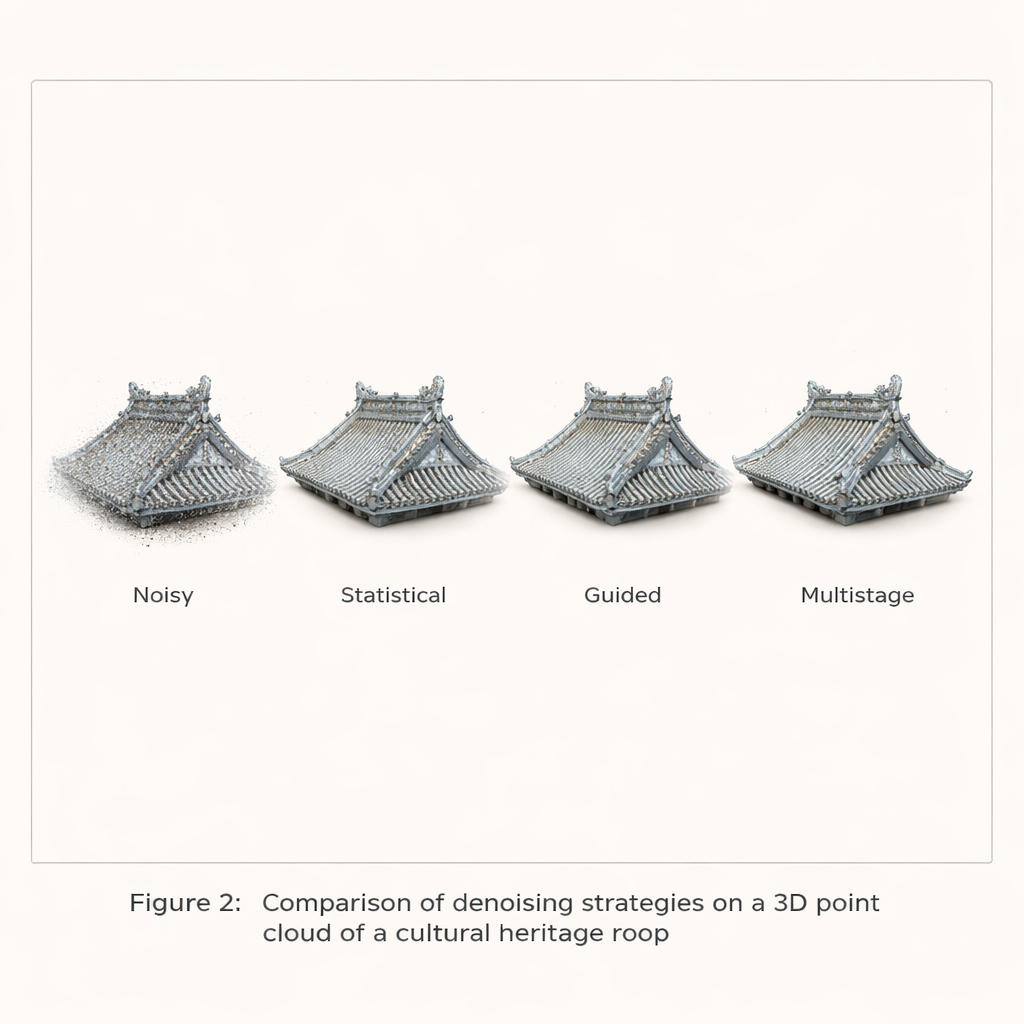

The authors build on their earlier work and propose a three‑stage AI framework tailored to large, noisy 3D scans of cultural heritage. First comes a multistage filtering step: the algorithm initially applies a statistical test to remove obvious outliers, then uses a guided filter that looks at local surface patches to smooth away remaining noise while preserving sharp features such as edges. Second, the cleaned points are converted into 3D “voxels” (small cubes) and analyzed at several resolutions at once. Coarse grids capture the overall structure of a roof; finer grids capture ridges, tiles, and edges. These multi‑scale voxel features are then fused with attention mechanisms that let the network decide how much to trust each scale in different regions of the object.
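To make the first two ideas concrete, here is a minimal NumPy sketch of statistical outlier removal followed by voxelization at several grid sizes. It is an illustration only, not the authors' implementation: the function names, the neighbour count `k`, the `std_ratio` threshold, and the voxel sizes are all assumptions chosen for the demo, and the brute-force distance matrix would not scale to millions of points.

```python
import numpy as np

def remove_statistical_outliers(points, k=8, std_ratio=2.0):
    """Drop points whose mean distance to their k nearest neighbours is unusually large.

    Hypothetical helper: k and std_ratio are illustrative defaults.
    """
    # Brute-force pairwise distances; acceptable for a small demo only.
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)
    # After sorting each row, column 0 is the zero distance to the point itself.
    knn = np.sort(dists, axis=1)[:, 1:k + 1]
    mean_knn = knn.mean(axis=1)
    # Keep points within std_ratio standard deviations of the global mean.
    threshold = mean_knn.mean() + std_ratio * mean_knn.std()
    keep = mean_knn <= threshold
    return points[keep], keep

def voxelize(points, voxel_size):
    """Return the occupied integer voxel indices and the point count in each voxel."""
    idx = np.floor(points / voxel_size).astype(np.int64)
    voxels, counts = np.unique(idx, axis=0, return_counts=True)
    return voxels, counts

# Toy scene: a flat patch of 200 points plus two far-away "ghost" points.
rng = np.random.default_rng(0)
surface = rng.uniform(0.0, 1.0, size=(200, 3))
surface[:, 2] = 0.0
ghosts = np.array([[0.5, 0.5, 5.0], [0.2, 0.8, -4.0]])
cloud = np.vstack([surface, ghosts])

clean, keep = remove_statistical_outliers(cloud)

# Coarse-to-fine grids over the cleaned points (sizes are arbitrary demo values).
multi_scale = {size: voxelize(clean, size) for size in (0.5, 0.25, 0.125)}
for size, (voxels, counts) in multi_scale.items():
    print(f"voxel size {size}: {len(voxels)} occupied voxels")
```

The coarse grid summarizes the patch in a handful of cells, while the finer grids split the same points into many more cells. In a learned pipeline, features computed on each grid would then be combined; the attention-based fusion described above is not reproduced here.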

Sharpening Edges and Filling in the Blanks

In the third stage, the fused features are passed through a Transformer-based module that predicts a sparse “skeleton” of key points representing the missing regions. A special curvature‑guided enhancement step then measures how sharply each region bends and uses this information to adjust the features, so that the predicted skeleton better follows true edges and corners instead of rounding them off. Finally, an upsampling module expands this skeleton into a dense, completed point cloud that aims to match the true surface while keeping the distribution of points even, avoiding clumps or holes that would distract viewers or mislead analysts.
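The summary does not say how the curvature‑guided step measures bending. One common proxy, used here purely as an assumption, is "surface variation": the smallest eigenvalue of a local neighbourhood's covariance matrix divided by the sum of all three. It is close to zero on flat areas and grows near edges and corners. The function name, the neighbour count `k`, and the toy geometry below are hypothetical.

```python
import numpy as np

def surface_variation(points, k=10):
    """Per-point curvature proxy from local PCA (an assumed stand-in, not the paper's measure)."""
    dists = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    # Indices of the k closest points; each neighbourhood includes the point itself.
    nn_idx = np.argsort(dists, axis=1)[:, :k]
    scores = np.empty(len(points))
    for i, idx in enumerate(nn_idx):
        cov = np.cov(points[idx].T)          # 3x3 covariance of the neighbourhood
        eigvals = np.linalg.eigvalsh(cov)    # eigenvalues in ascending order
        total = eigvals.sum()
        # Clamp tiny negative round-off so flat regions score exactly zero.
        scores[i] = max(eigvals[0], 0.0) / total if total > 0 else 0.0
    return scores

def nearest_index(points, target):
    """Index of the point closest to a target coordinate."""
    return int(np.argmin(np.linalg.norm(points - np.asarray(target), axis=1)))

# Toy geometry: two flat faces meeting at a right angle along the line x = 0, z = 0.
grid = np.linspace(0.0, 1.0, 11)
face_a = np.array([[x, y, 0.0] for x in grid for y in grid])
face_b = np.array([[0.0, y, z] for y in grid for z in grid[1:]])
cloud = np.vstack([face_a, face_b])

scores = surface_variation(cloud)
ridge_score = scores[nearest_index(cloud, (0.0, 0.5, 0.0))]
flat_score = scores[nearest_index(cloud, (0.5, 0.5, 0.0))]
print(f"ridge: {ridge_score:.3f}, flat: {flat_score:.3f}")
```

A score like this could serve as a per‑region weight that tells a network where sharp structure lives, which is the role the curvature signal plays in the description above.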

How Well Does It Work in Practice?

The team tested their approach on both synthetic shapes and real scans. On a standard benchmark of 3D models (ShapeNet‑55), their method recovered missing parts more accurately than several leading networks, improving a key distance measure by up to about 16 percent while maintaining high completeness. More importantly for heritage applications, they assembled a dataset of Japanese temple roofs derived from actual laser scans that include real‑world noise. Here, the method clearly outperformed alternatives, especially when the data were heavily contaminated. In visual comparisons, the proposed pipeline produced sharper tiles, more faithful eaves, and fewer artifacts. Applied to the large‑scale scan of the Tamaki‑jinja Shrine—over 25 million points—it was able to reconstruct missing roof sections and refine noisy surfaces within a practical time and memory budget.
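The summary does not name the "key distance measure". Point cloud completion benchmarks commonly report the Chamfer distance, so the sketch below assumes that family of metric and uses the squared‑distance variant. The function name and the two tiny point sets are illustrative only.

```python
import numpy as np

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between two point sets, using squared Euclidean distances.

    Assumed metric for illustration; the paper's exact definition may differ.
    """
    # d2[i, j] is the squared distance from a[i] to b[j].
    d2 = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=2)
    # Average nearest-neighbour distance in each direction, then add the two.
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()

predicted = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
reference = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.5]])
score = chamfer_distance(predicted, reference)
print(score)
```

Lower values mean the completed cloud lies closer to the reference surface, and a score of zero means every point in each set has an exact match in the other.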

Seeing Through Walls with Clearer Data

The researchers also integrated their completion method with a transparent visualization technique they had developed earlier, which lets viewers “see through” the outer surfaces of dense point clouds to internal structures. On the original noisy data, transparent views of Tamaki‑jinja’s roofs were confusing: gaps, stray points, and missing regions obscured the true structure. After applying the new completion framework, the same views showed much clearer outlines of roofs and eaves, making it easier to interpret how the building is put together. Although the method still struggles in areas where scans are extremely incomplete or overwhelmed by noise, it substantially improves both geometric accuracy and visual readability for most regions.

What This Means for Cultural Heritage

In plain terms, this work provides a smarter “digital restorer” for 3D scans of historic sites. By carefully cleaning the data, understanding shapes at multiple scales, and paying special attention to edges and curves, the method can plausibly reconstruct missing parts of buildings while avoiding over‑smoothed or distorted results. For curators, architects, and historians, this means more reliable virtual models for study, conservation planning, and public exhibitions, including immersive see‑through views of complex wooden frameworks. While the approach does not replace physical conservation, it offers a powerful tool for preserving and exploring the geometry of fragile cultural heritage in the digital realm.

Citation: Li, W., Pan, J., Hasegawa, K. et al. Multiscale voxel feature fusion network for large scale noisy point cloud completion in cultural heritage restoration. npj Herit. Sci. 14, 93 (2026). https://doi.org/10.1038/s40494-026-02331-y

Keywords: 3D point cloud, cultural heritage, LiDAR scanning, deep learning, digital restoration