DCADif: decoupled conditional adaptive time-dynamic fusion diffusion inpainting of traditional Chinese mural paintings

Bringing Ancient Wall Art Back to Life

Across China, temple walls and grotto ceilings are covered with centuries-old murals that are fading, flaking, and cracking away. These paintings are not only beautiful; they are visual records of beliefs, stories, and everyday life from long ago. Restoring them by hand is difficult, slow, and sometimes risky for the fragile surfaces. This study introduces a new artificial intelligence (AI) method, called DCADif, that helps experts digitally "inpaint" missing or damaged parts of murals while keeping both the drawing and the style faithful to the original artwork.

Why Old Murals Are So Hard to Repair

Traditional Chinese murals are far more than colored pictures on a wall. They weave together complex compositions, delicate line work, and subtle textures created by ancient pigments and tools. When time, moisture, and pollution leave gaps and stains, conservators must guess what once filled those spaces. Digital inpainting tools try to do the same, but most existing methods blur two crucial tasks: rebuilding the underlying shapes and preserving the unique artistic style. As a result, repaired areas may look structurally wrong, or they may match the shapes but lose the historical feel of the original brushwork and color. The challenge is to recover both the "bones" and the "soul" of the painting at once.

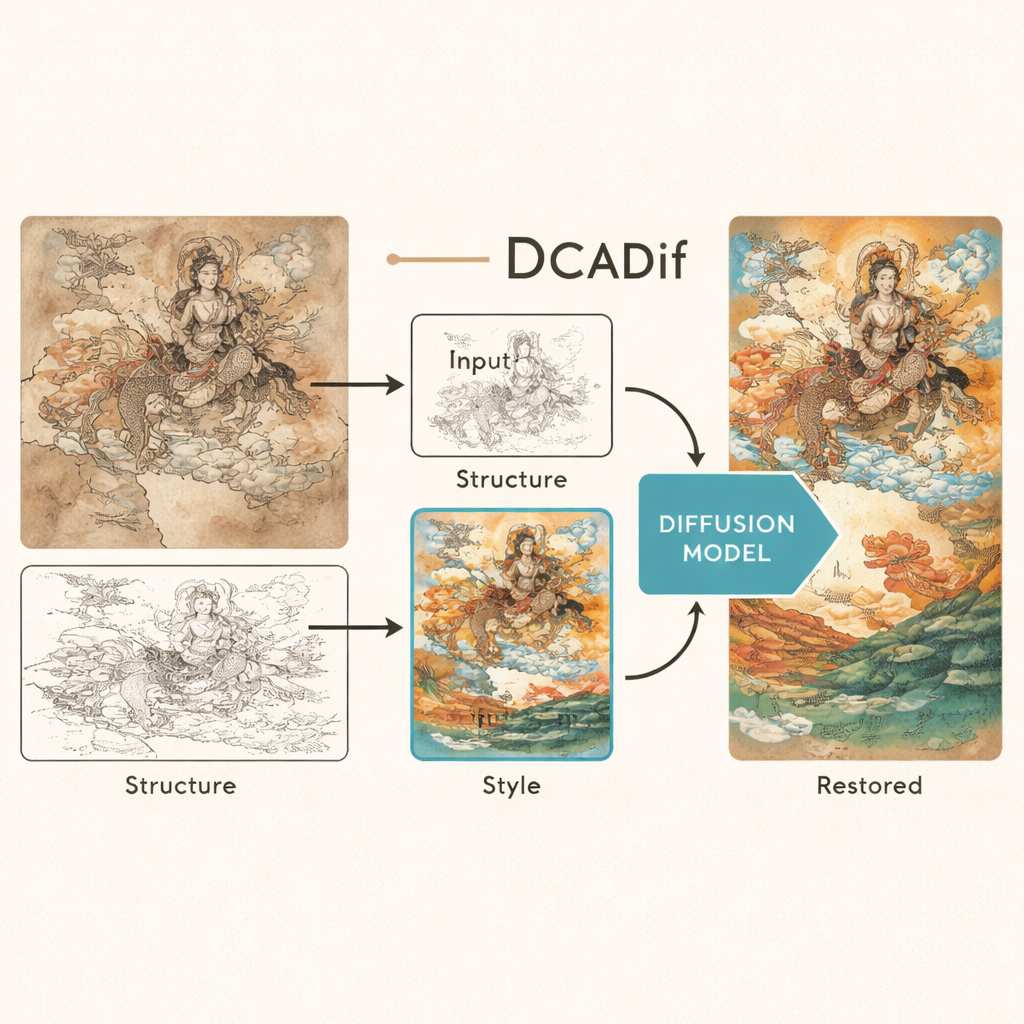

Teaching an AI to See Structure and Style Separately

The DCADif system tackles this challenge by splitting the problem into two streams. First, the researchers convert a mural into simple line art, much like an ink outline. This stripped-down version captures where figures, objects, and borders are, without being distracted by color or texture. A powerful vision model (adapted from a tool originally trained to understand millions of images) reads this line art and distills it into a compact description of the mural’s structure. In a separate path, a new "SwinStyle" encoder studies the original damaged painting itself to learn its stylistic fingerprint: the way colors blend, how brushstrokes curve, and how surfaces crack or fade. By keeping these two descriptions—structure and style—apart, DCADif can later control them independently during restoration.
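To make the "line art" idea concrete, here is a minimal sketch in Python. It is not the extraction method used by the authors, which this summary does not describe. As a stand-in, it marks pixels where brightness changes sharply, which is one simple way to keep outlines and discard color and texture. The function name `to_line_art` and the `threshold` value are illustrative assumptions.

```python
import numpy as np

def to_line_art(rgb, threshold=0.2):
    """Return a boolean map that is True where brightness changes sharply.

    Assumption: a plain gradient-magnitude threshold stands in for whatever
    line-extraction step the real system uses.
    """
    # Collapse color to a single brightness channel in the range [0, 1].
    gray = rgb.astype(np.float64).mean(axis=2) / 255.0
    # Finite-difference gradients along rows (gy) and columns (gx).
    gy, gx = np.gradient(gray)
    magnitude = np.hypot(gx, gy)
    return magnitude > threshold

# Toy "mural": left half black, right half white, so one vertical boundary.
image = np.zeros((8, 8, 3), dtype=np.uint8)
image[:, 4:] = 255
lines = to_line_art(image)
```

In this toy case only the two pixel columns on either side of the black-white boundary are marked, while the flat regions stay empty. That is the sense in which line art records where things are without recording how they are painted.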

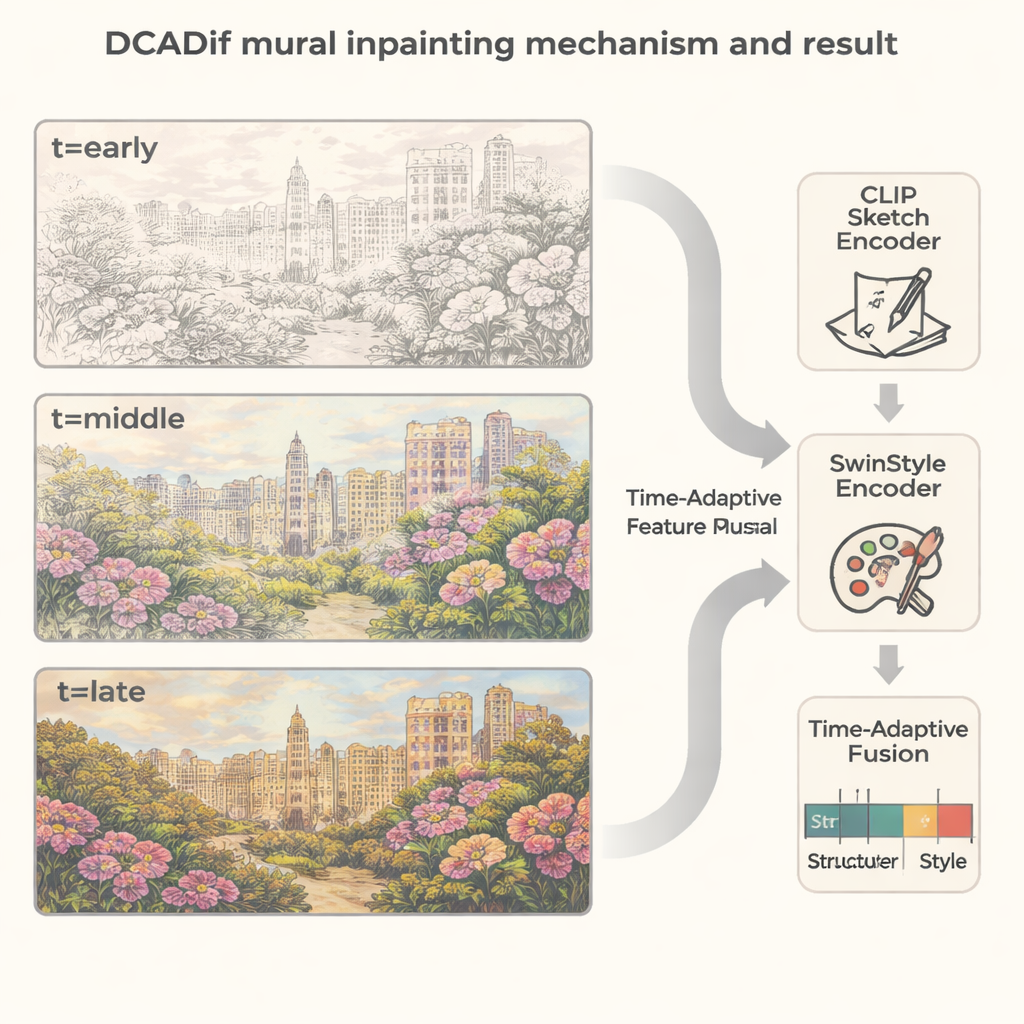

Letting the Image Emerge From Noise

At the heart of DCADif is a diffusion model, a type of AI that creates images by starting from random noise and gradually "denoising" it into a realistic picture. This process happens over many small steps, a bit like watching a fuzzy image slowly sharpen into focus. The authors designed a Time-Adaptive Feature Fusion module that acts like a smart dial between structure and style as the image emerges. In the early, very noisy stages, the model leans heavily on structure, using the line drawing to lay down correct shapes and contours. As the noise fades and the image becomes clearer, the dial slowly turns toward style, allowing rich colors, textures, and historical details to flow in without warping the underlying drawing.
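The "smart dial" can be pictured as a weight that depends on how noisy the image still is. The sketch below is an illustration under stated assumptions, not the authors' Time-Adaptive Feature Fusion module: the sigmoid schedule, the `sharpness` parameter, and the function names `structure_weight` and `fuse` are all hypothetical, and the actual module may learn its weighting rather than follow a fixed curve.

```python
import numpy as np

def structure_weight(t, num_steps, sharpness=8.0):
    """Weight on structure at timestep t (t = num_steps - 1 is the noisiest).

    Assumption: a fixed sigmoid schedule, chosen only for illustration.
    """
    noise_level = t / (num_steps - 1)  # 1.0 = pure noise, 0.0 = clean image
    return 1.0 / (1.0 + np.exp(-sharpness * (noise_level - 0.5)))

def fuse(structure_feat, style_feat, t, num_steps):
    """Blend the two feature vectors; the weights always sum to 1."""
    w = structure_weight(t, num_steps)
    return w * structure_feat + (1.0 - w) * style_feat

num_steps = 1000
structure_feat = np.ones(4)   # placeholder structure description
style_feat = np.zeros(4)      # placeholder style description

early = fuse(structure_feat, style_feat, t=999, num_steps=num_steps)
late = fuse(structure_feat, style_feat, t=0, num_steps=num_steps)
```

With these placeholder features, `early` sits close to the structure vector and `late` sits close to the style vector, mirroring the shift from shapes first to texture and color later.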

Testing on a New Mural and Painting Library

To judge whether DCADif truly improves digital restoration, the team assembled a large new dataset called MuralVerse-S, created from murals in regions such as Dunhuang, Gansu, Hebei, and Inner Mongolia, along with realistic masks that mimic real-world cracks and flaking paint. They compared DCADif with nine leading inpainting methods, spanning older convolutional networks, transformer-based models, and other diffusion approaches. Across multiple levels of simulated damage, DCADif produced images with sharper structures, more coherent global layouts, and textures that human observers judged closer to the originals. The method also performed well on a separate collection of Chinese landscape paintings, successfully reconstructing subtle ink strokes and mountain contours, which suggests that it can generalize beyond murals alone.
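Simulated damage of this kind is usually produced by overlaying a mask on an intact image, so that the hidden pixels can later be compared with the model's guess. The sketch below shows one simple way to do that, assuming random-walk "cracks". It is not the mask-generation procedure used for MuralVerse-S, and the names `crack_mask` and `apply_damage` and all parameter values are hypothetical.

```python
import numpy as np

def crack_mask(height, width, num_cracks=3, steps=200, seed=0):
    """Boolean mask that is True on simulated crack pixels.

    Assumption: each crack is a random walk, a crude stand-in for
    realistic damage patterns.
    """
    rng = np.random.default_rng(seed)
    mask = np.zeros((height, width), dtype=bool)
    for _ in range(num_cracks):
        y, x = rng.integers(0, height), rng.integers(0, width)
        for _ in range(steps):
            mask[y, x] = True
            dy, dx = rng.integers(-1, 2, size=2)  # each step is -1, 0, or +1
            y = int(np.clip(y + dy, 0, height - 1))
            x = int(np.clip(x + dx, 0, width - 1))
    return mask

def apply_damage(image, mask, fill=0):
    """Return a copy of the image with masked pixels overwritten."""
    damaged = image.copy()
    damaged[mask] = fill
    return damaged

mask = crack_mask(64, 64)
damage_ratio = mask.mean()  # fraction of the image that is hidden
```

Raising `num_cracks` or `steps` increases `damage_ratio`, which is one way to produce the different levels of simulated damage that a comparison like this needs.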

What This Means for Cultural Heritage

Beyond raw numbers and charts, the researchers asked 50 art specialists and graduate students to score different restoration results. Participants consistently rated DCADif highest for content accuracy, stylistic faithfulness, and overall quality. Real examples, including famous works like Court Ladies Wearing Flowered Headdresses, showed that the system can fill in missing faces, garments, and decorative motifs in a way that blends seamlessly with the surrounding painting. Still, the authors acknowledge limits: when huge regions are destroyed, any digital guess risks historical inaccuracy, and the method remains computationally heavy. Even so, DCADif offers conservators a new, non-invasive tool—one that can propose careful, high-fidelity reconstructions while leaving the original wall untouched, helping museums and researchers better study, visualize, and safeguard irreplaceable cultural treasures.

Citation: Peng, X., Li, C., Hu, Q. et al. DCADif: decoupled conditional adaptive time-dynamic fusion diffusion inpainting of traditional Chinese mural paintings. npj Herit. Sci. 14, 61 (2026). https://doi.org/10.1038/s40494-026-02327-8

Keywords: digital mural restoration, image inpainting, diffusion models, Chinese cultural heritage, art conservation technology