Clear Sky Science

Multi-view image fusion using knowledge distillation for classification of ancient glass beads excavated in Japan

Beads as Time Capsules

For more than a thousand years, tiny glass beads traveled along trade routes from the Mediterranean and India to the Japanese archipelago. Today, these colorful fragments are among the most common artifacts excavated in Japan—over 600,000 have been found—but figuring out exactly where they were made usually demands slow, expensive chemical tests and the trained eye of a specialist. This study asks a simple yet powerful question: can ordinary photographs and modern AI stand in for the lab, helping archaeologists trace the journeys of these beads quickly and gently?

Why Ancient Glass Matters

Glass beads are more than jewelry; they are clues to long-distance contact across Eurasia. Different regions used distinct mixtures of raw materials and colorants, producing chemical “signatures” that specialists use to group beads into families linked to places such as East Asia, India, Southeast Asia, Central Asia, and the Mediterranean. Traditional provenance work relies on instruments that measure chemical components and on experts who examine shapes, colors, and manufacturing marks under magnification. These approaches have revealed rich stories about ancient trade, but they are hard to scale up to hundreds of thousands of fragile objects housed in museums and storerooms across Japan.

From Lab Measurements to Simple Photographs

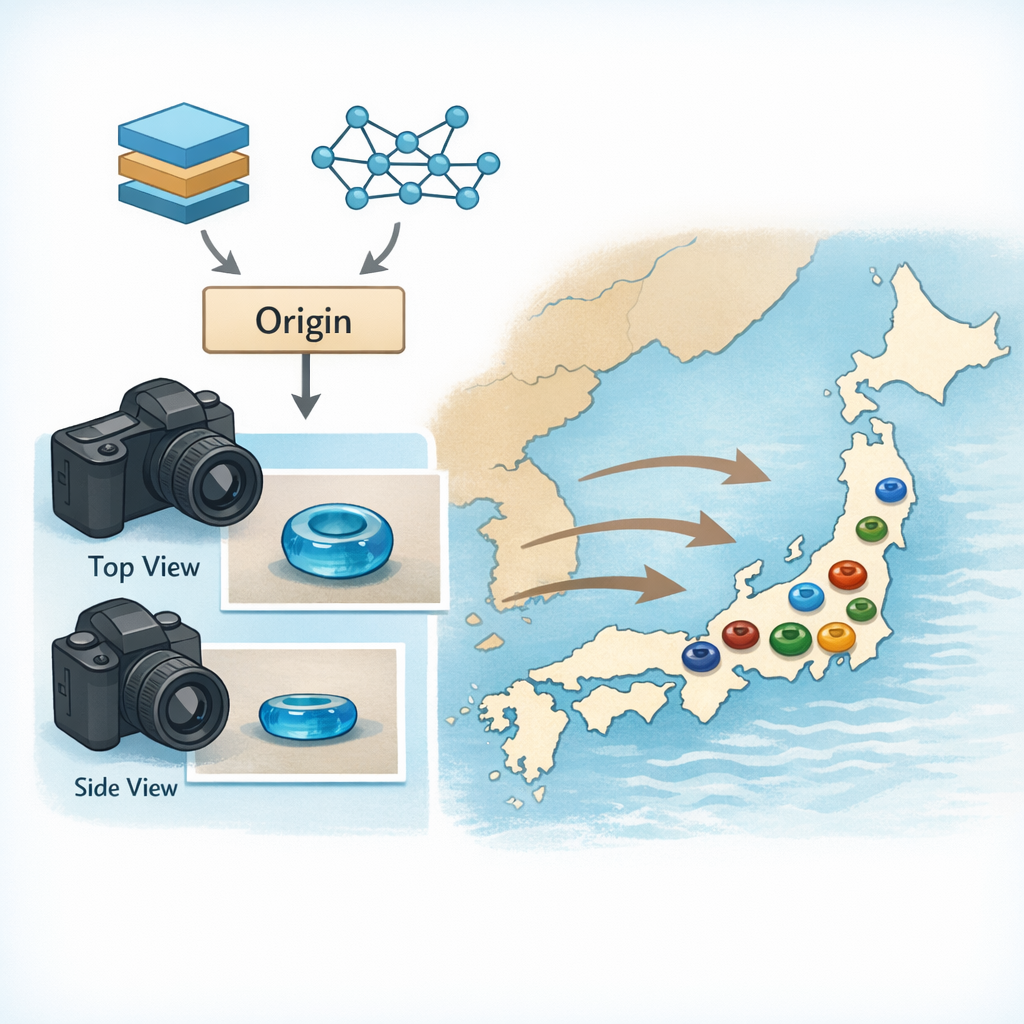

To break this bottleneck, the authors explore a method that uses only images of the beads. Instead of dissolving a sliver of glass for analysis, they photograph each bead from two angles: a top view that reveals the ring-shaped hole and overall color patterns, and a side view that shows thickness and profile. This dual viewpoint mimics how human experts handle artifacts, rotating them in their hands to catch subtle changes in surface texture and form. The goal is ambitious: given just these pictures, can a computer automatically assign each bead to one of 16 established chemical and regional groups that archaeologists already use?

Teaching Machines to See Like Experts

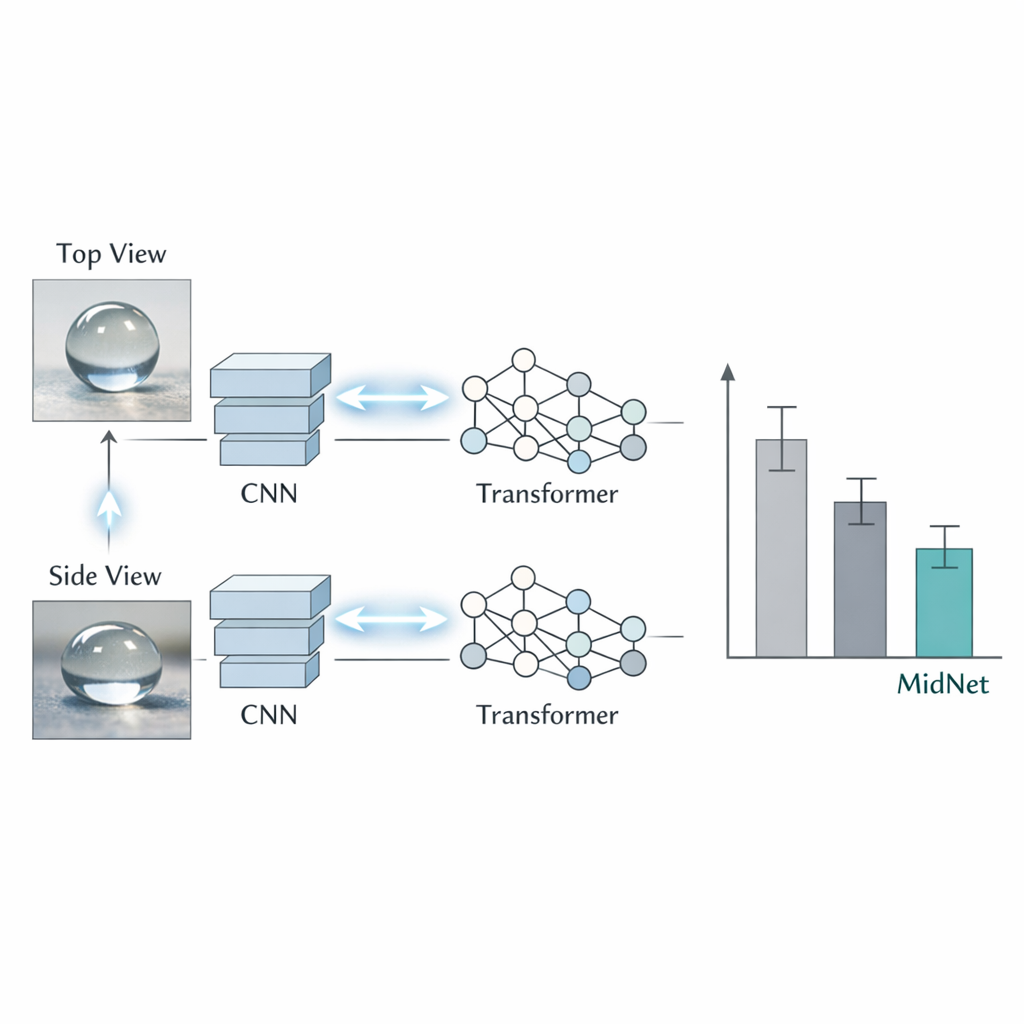

The team turns to a hybrid artificial intelligence system called MidNet. It combines two leading image-analysis strategies. One, known as a convolutional neural network, is especially good at picking up fine details such as tiny pits, streaks of color, or surface damage. The other, a vision transformer, is designed to see the bigger picture—how colors and shapes relate across the entire bead. MidNet processes both views (top and side) through both types of models and then encourages them to “agree” with each other. During training, each model learns not only from the correct label but also from the predictions of its partner and from the alternate viewpoint. This back-and-forth exchange reduces the risk that the system will latch onto quirks of a particular angle or model type rather than the enduring visual traits tied to origin.
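The idea of models learning from the label and from each other can be sketched in a few lines. The snippet below is a minimal illustration and not the authors' implementation: it assumes the "agreement" is measured with a KL divergence between softened predictions, and the names `mutual_loss`, `alpha`, and `temperature` are hypothetical choices made for this example.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities, optionally softened by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label):
    """Penalty for assigning low probability to the correct class."""
    return -math.log(probs[label])

def kl_divergence(p, q):
    """KL(p || q): how far distribution q is from the reference distribution p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def mutual_loss(own_logits, peer_logits_list, label, alpha=0.5, temperature=2.0):
    """Loss for one branch: fit the true label and stay close to each peer's prediction.

    alpha and temperature are assumed hyperparameters, not values from the study.
    """
    hard = cross_entropy(softmax(own_logits), label)
    own_soft = softmax(own_logits, temperature)
    soft = sum(
        kl_divergence(softmax(peer, temperature), own_soft)
        for peer in peer_logits_list
    ) / len(peer_logits_list)
    return (1 - alpha) * hard + alpha * soft

# Toy scores over three classes (illustrative values only).
cnn_top = [2.0, 0.5, -1.0]    # hypothetical CNN output on the top view
vit_top = [1.5, 1.0, -0.5]    # hypothetical transformer output, roughly agreeing
cnn_side = [0.2, 1.8, -0.3]   # hypothetical side-view output, disagreeing

loss_with_agreeing_peer = mutual_loss(cnn_top, [vit_top], label=0)
loss_with_disagreeing_peer = mutual_loss(cnn_top, [cnn_side], label=0)
```

In this toy setup the branch pays a larger penalty when its partner disagrees, which is the pressure that nudges the models and viewpoints toward a shared answer.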

Working with Uneven and Imperfect Data

The dataset behind MidNet consists of 3,434 bead images whose classes were previously established through careful expert study and chemical analysis. Some bead types are abundant, while others are represented by only a handful of examples, a common problem in archaeology. To keep the AI from simply favoring the most common classes, the researchers used two techniques. First, they generated additional training images for very rare types with a modern image-synthesis method, producing plausible variations without touching the artifacts themselves. Second, they deliberately distorted training photos (slightly changing color, cropping, or hiding small patches) to make the system less sensitive to minor damage or lighting differences. They then evaluated performance with a rigorous cross-validation procedure to see how well the method would generalize to unseen beads.
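To see why the way data is split matters when some classes are rare, here is a small sketch of a stratified split, which deals every class out evenly across the test folds. This is an assumption made for illustration: the summary does not say which cross-validation scheme or how many folds the study used, and `stratified_folds` is a hypothetical helper.

```python
import random
from collections import defaultdict

def stratified_folds(labels, k=5, seed=0):
    """Assign each sample index to one of k folds, spreading every class evenly."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    offset = 0  # rotate the starting fold so leftovers do not pile up in fold 0
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Deal indices round-robin so each fold gets a near-equal share of this class.
        for position, idx in enumerate(indices):
            folds[(offset + position) % k].append(idx)
        offset += len(indices)
    return folds

# Toy labels: one abundant bead type and one rare type (illustrative only).
labels = ["common"] * 20 + ["rare"] * 5
folds = stratified_folds(labels, k=5)
rare_per_fold = [sum(1 for i in fold if labels[i] == "rare") for fold in folds]
```

With a purely random split, a class with five examples could easily end up missing from some test folds; dealing each class out separately avoids that.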

How Well Does the System Work?

When the researchers compared their hybrid MidNet to more standard image models, they found that using both top and side views always helped, confirming that the two angles capture complementary clues. In terms of raw accuracy, MidNet matched the best competing method within a margin of only a few beads out of thousands, but it showed the most stable behavior across different test splits. In other words, its performance varied less from one experiment to the next, indicating that it is less sensitive to which specific beads happen to be in the training set—a crucial quality when dealing with rare artifact types. The method still struggles with certain lookalike categories that even specialists find hard to distinguish, hinting at an “ultra-fine-grained” problem where differences are almost imperceptible in photographs alone.
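The difference between accuracy and stability is easy to show with a little arithmetic. The sketch below uses made-up placeholder numbers, not results from the study, to compare two hypothetical models that have the same average accuracy but differ in how much they vary across test splits.

```python
from statistics import mean, stdev

def summarize(fold_accuracies):
    """Return (average accuracy, spread across folds) for one model."""
    return mean(fold_accuracies), stdev(fold_accuracies)

# Placeholder per-fold accuracies, invented for illustration only.
steady_model = [0.80, 0.81, 0.79, 0.80, 0.80]
erratic_model = [0.86, 0.74, 0.83, 0.76, 0.81]

steady_mean, steady_spread = summarize(steady_model)
erratic_mean, erratic_spread = summarize(erratic_model)
```

Both lists average out to the same accuracy, but the second swings much more from fold to fold. A smaller spread is the kind of behavior described above as stable.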

What This Means for Future Digs

This study demonstrates that careful photography plus advanced image analysis can estimate where many ancient glass beads were made without any chemical testing of the glass itself. For archaeologists, that opens the door to quick, low-cost, non-destructive sorting of large collections, even in the field or in small museums that lack laboratories. While challenging cases will still require expert judgment and chemical tests, a system like MidNet could handle the bulk of routine classification, highlight unusual pieces, and support large digital archives that track the movement of glass across continents and centuries. In short, the work shows how artificial intelligence can help reconstruct human history, one tiny bead at a time.

Citation: Fukuchi, T., Tamura, T. & Fukunaga, K. Multi-view image fusion using knowledge distillation for classification of ancient glass beads excavated in Japan. npj Herit. Sci. 14, 41 (2026). https://doi.org/10.1038/s40494-026-02305-0

Keywords: archaeology, glass beads, machine learning, image-based classification, cultural heritage